MobileManiBench: Simplifying Model Verification for Mobile Manipulation

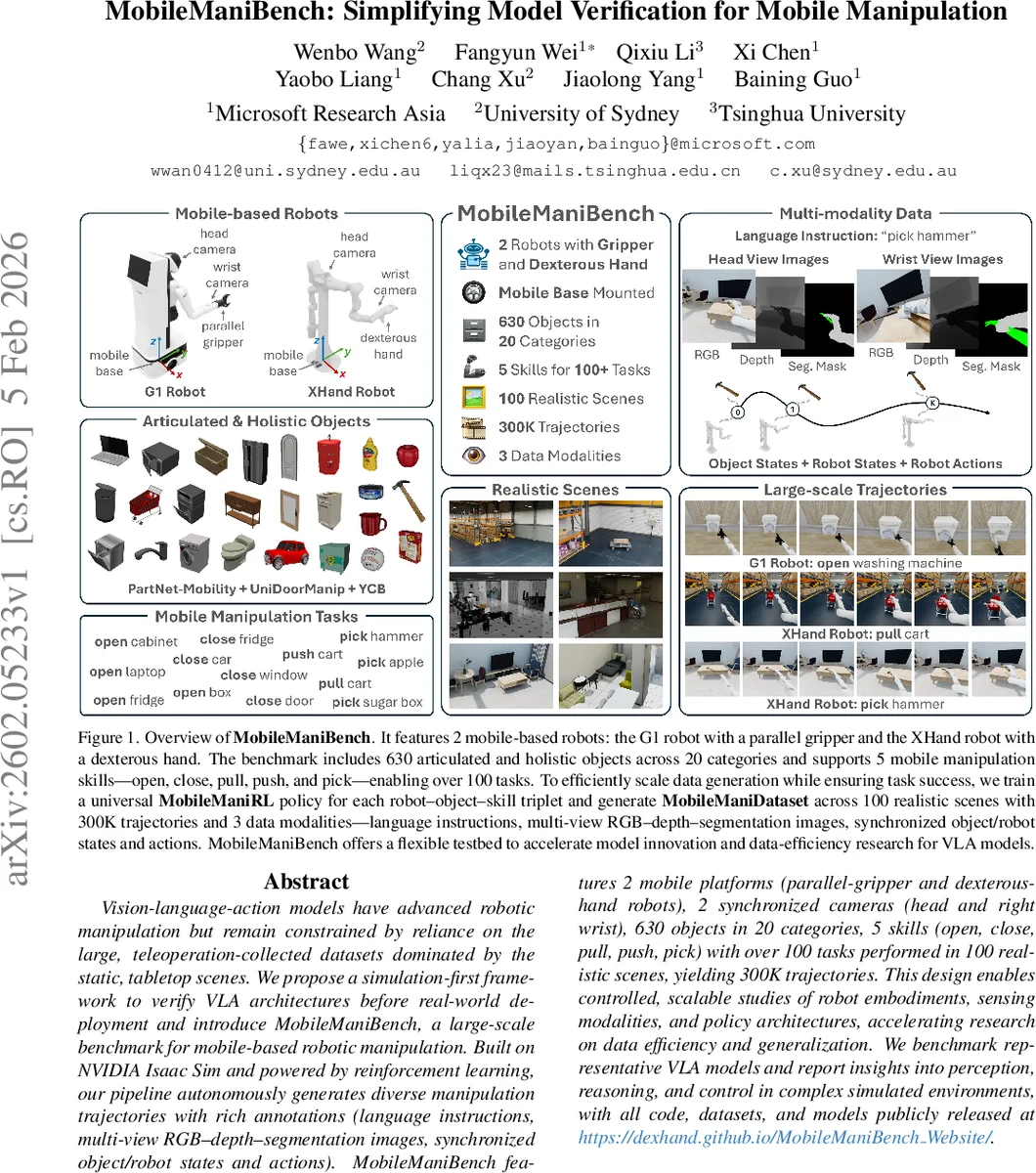

Vision-language-action models have advanced robotic manipulation but remain constrained by reliance on the large, teleoperation-collected datasets dominated by the static, tabletop scenes. We propose a simulation-first framework to verify VLA architectures before real-world deployment and introduce MobileManiBench, a large-scale benchmark for mobile-based robotic manipulation. Built on NVIDIA Isaac Sim and powered by reinforcement learning, our pipeline autonomously generates diverse manipulation trajectories with rich annotations (language instructions, multi-view RGB-depth-segmentation images, synchronized object/robot states and actions). MobileManiBench features 2 mobile platforms (parallel-gripper and dexterous-hand robots), 2 synchronized cameras (head and right wrist), 630 objects in 20 categories, 5 skills (open, close, pull, push, pick) with over 100 tasks performed in 100 realistic scenes, yielding 300K trajectories. This design enables controlled, scalable studies of robot embodiments, sensing modalities, and policy architectures, accelerating research on data efficiency and generalization. We benchmark representative VLA models and report insights into perception, reasoning, and control in complex simulated environments.

💡 Research Summary

MobileManiBench addresses a critical bottleneck in Vision‑Language‑Action (VLA) research: the reliance on massive tele‑operated datasets that are confined to static tabletop scenes and fixed‑base manipulators. The authors propose a simulation‑first framework built on NVIDIA Isaac Sim that automatically generates large‑scale, richly annotated trajectories for mobile‑based robots, thereby enabling rapid verification of VLA architectures before costly real‑world deployment.

Hardware and Simulation Setup

Two distinct robot platforms are simulated: (1) the G1 robot equipped with a 7‑DOF arm, a 2‑DOF mobile base, and a parallel gripper (1 DOF); (2) the XHand robot, which shares the same base and arm but carries a 12‑DOF dexterous anthropomorphic hand. Both robots host synchronized RGB‑Depth‑Segmentation cameras on the head and the right wrist, providing multi‑view visual streams.

Data Generation Pipeline

For each robot‑object‑skill triplet, a universal state‑based reinforcement‑learning policy—MobileManiRL—is trained. Inputs to the policy include a timestep embedding, object grasp and goal points, full robot proprioception (joint angles, velocities, base pose), robot‑object distances, and the previous action. The network is a four‑layer MLP (1024‑1024‑512‑512) ending in a fully‑connected action head that outputs a (6 + D)‑dimensional vector (7 D for G1, 18 D for XHand). The reward function combines global distance penalties, an approach reward, and, after a successful grasp (signaled by a binary grasp flag), separate rewards for grasp stability, movement toward the goal, and final success. Training occurs in two simplified environments (ground and tabletop) that contain the 20 object categories. Once a policy reliably succeeds (≤ 300 steps), it is deployed across 100 realistic digital scenes to collect successful trajectories.

Dataset Scale and Modalities

- Objects: 630 items spanning 20 categories (including articulated objects from PartNet‑Mobility, doors from UniDoorManip, and holistic YCB objects).

- Skills: five manipulation primitives—open, close, pull, push, pick—combined into over 100 distinct tasks (e.g., opening a laptop, closing a cabinet, pushing a cart, picking a mustard bottle).

- Scenes: 100 semi‑structured indoor environments with varied lighting, textures, and object placements.

- Trajectories: 300 K successful episodes, each containing a natural‑language instruction, synchronized multi‑view RGB‑Depth‑Segmentation image sequences, object state vectors, full robot state/action logs.

Benchmarking VLA Models

Using the aggregated MobileManiDataset, the authors train a unified VLA model—MobileManiVLA—and compare it against several state‑of‑the‑art VLA architectures (e.g., RT‑1, PerAct, VIMA). Key findings include:

- Multi‑modal Fusion Gains: Adding wrist‑mounted RGB‑Depth data improves task success rates by an average of 12 % across all models, highlighting the importance of close‑range visual cues for precise manipulation.

- Mobile Base Challenge: Models originally designed for fixed‑base setups suffer a >30 % drop in performance when evaluated on mobile‑base tasks, underscoring the need for policies that jointly reason about navigation and manipulation.

- Dexterous Hand Advantage: The XHand robot achieves an 18 % higher success rate on complex skills such as lever‑door opening and object re‑orientation compared to the parallel‑gripper G1, while both robots perform similarly on simple pick‑and‑place tasks.

Implications and Future Directions

MobileManiBench provides a “virtual testbed” where researchers can systematically vary sensor configurations (e.g., adding LiDAR, tactile arrays), robot embodiments (different base kinematics, alternative end‑effectors), and task families (assembly, tool use) without incurring real‑world data collection costs. The large, multi‑modal dataset serves both as a pre‑training corpus for foundation VLA models and as a benchmark for evaluating data‑efficiency and generalization. By decoupling algorithmic development from hardware constraints, the benchmark accelerates the co‑design of perception‑action pipelines and robot platforms, paving the way toward truly general‑purpose embodied AI.

In summary, MobileManiBench demonstrates that a simulation‑first, reinforcement‑learning‑driven data generation pipeline can produce massive, high‑fidelity trajectories for mobile manipulation. This enables rigorous, scalable evaluation of VLA models, reveals the critical role of multi‑view and depth sensing, and highlights the performance gap between fixed‑base and mobile‑base manipulation. The benchmark is publicly released, offering the community a powerful resource to advance data‑efficient, generalizable robot learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment