Boosting SAM for Cross-Domain Few-Shot Segmentation via Conditional Point Sparsification

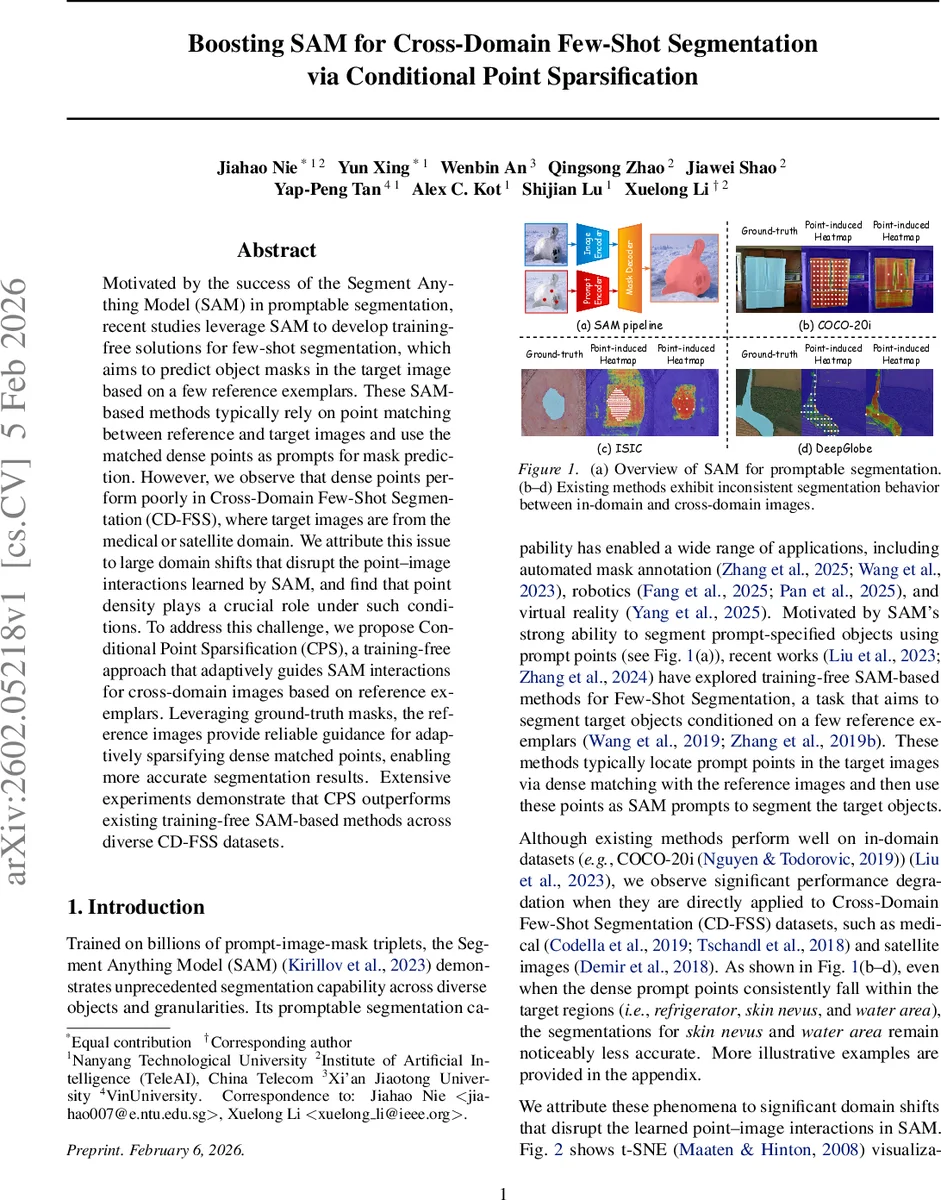

Motivated by the success of the Segment Anything Model (SAM) in promptable segmentation, recent studies leverage SAM to develop training-free solutions for few-shot segmentation, which aims to predict object masks in the target image based on a few reference exemplars. These SAM-based methods typically rely on point matching between reference and target images and use the matched dense points as prompts for mask prediction. However, we observe that dense points perform poorly in Cross-Domain Few-Shot Segmentation (CD-FSS), where target images are from medical or satellite domains. We attribute this issue to large domain shifts that disrupt the point-image interactions learned by SAM, and find that point density plays a crucial role under such conditions. To address this challenge, we propose Conditional Point Sparsification (CPS), a training-free approach that adaptively guides SAM interactions for cross-domain images based on reference exemplars. Leveraging ground-truth masks, the reference images provide reliable guidance for adaptively sparsifying dense matched points, enabling more accurate segmentation results. Extensive experiments demonstrate that CPS outperforms existing training-free SAM-based methods across diverse CD-FSS datasets.

💡 Research Summary

The paper addresses a critical limitation of recent training‑free Few‑Shot Segmentation (FSS) methods that rely on the Segment Anything Model (SAM). While SAM‑based approaches that match dense points between reference and target images work well on in‑domain datasets such as COCO‑20i, they suffer severe performance drops on cross‑domain datasets (e.g., medical ISIC and satellite DeepGlobe). The authors identify two root causes: (1) large domain shifts break the point‑image interactions learned by SAM during pre‑training, and (2) objects in medical and satellite images tend to have homogeneous appearance and simple shapes, making dense prompts redundant or even harmful. Quantitative analysis of SAM’s vision encoder features (t‑SNE visualizations and intra‑object variance measurements) confirms that cross‑domain objects exhibit far lower feature variance than in‑domain objects, indicating that fewer, more informative points are sufficient.

To overcome these issues, the authors propose Conditional Point Sparsification (CPS), a completely training‑free framework that adaptively determines the appropriate prompt point density for each task using the ground‑truth masks of the few reference exemplars. CPS consists of four stages:

-

Dense Point Matching – Using DINOv2 as the visual backbone, the Positive‑Negative Alignment (PNA) module computes dense correspondences between a reference image (I_r) and a target image (I_t). The resulting matched points are projected from the DINOv2 feature map to SAM’s input coordinate space.

-

Boundary Point Pruning – Because the linear projection can cause misalignment, the convex hull of the projected points is computed and points lying on the hull boundary (most likely to be background or mis‑projected) are removed.

-

Reference‑Driven Density Lookup & Adaptive Sparsification – From each reference mask, CPS extracts a “density signal” (e.g., number of points per unit area, shape complexity). This signal guides a subsampling of the dense point set to the target density that best matches the reference’s characteristics. The subsampling is not random; it preserves a balanced distribution of points across object interiors and edges using distance‑weighted sampling.

-

SAM Prompting & Post‑hoc Refinement – The sparsified points are fed to SAM as prompts, producing an initial mask (\hat{M}_t). A lightweight refinement step (e.g., CRF‑like smoothing, morphological operations, or iterative IoU‑based correction using the reference mask) cleans small holes and sharpens boundaries, yielding the final mask (\tilde{M}_t).

The method requires no additional training; all components are either existing pretrained models (SAM, DINOv2) or simple geometric operations. Experiments on four benchmarks (ISIC, DeepGlobe, COCO‑20i, plus an additional satellite set) under 1‑shot and 5‑shot settings show that CPS consistently outperforms the strongest prior SAM‑based baseline (Matcher) and heuristic sparsification schemes. Gains range from 4.2 % to 7.8 % absolute mIoU, with the most pronounced improvements on the cross‑domain datasets where dense prompts previously caused severe degradation. Importantly, CPS maintains comparable performance on the in‑domain COCO‑20i, demonstrating that adaptive sparsification does not hurt cases where dense prompts are already optimal.

The contributions are threefold: (1) a thorough analysis exposing why dense prompts fail under large domain shifts, (2) the introduction of a simple yet effective conditional sparsification mechanism that leverages only the few reference masks, and (3) extensive empirical validation confirming that a training‑free pipeline can achieve state‑of‑the‑art results on challenging cross‑domain few‑shot segmentation tasks.

In summary, Conditional Point Sparsification provides a practical, plug‑and‑play enhancement for SAM‑based few‑shot segmentation, enabling robust performance across vastly different visual domains without any additional model training. Future work may explore richer density estimators, integration with other foundation models, and multimodal prompts to further strengthen cross‑domain generalization.

Comments & Academic Discussion

Loading comments...

Leave a Comment