Disentangled Representation Learning via Flow Matching

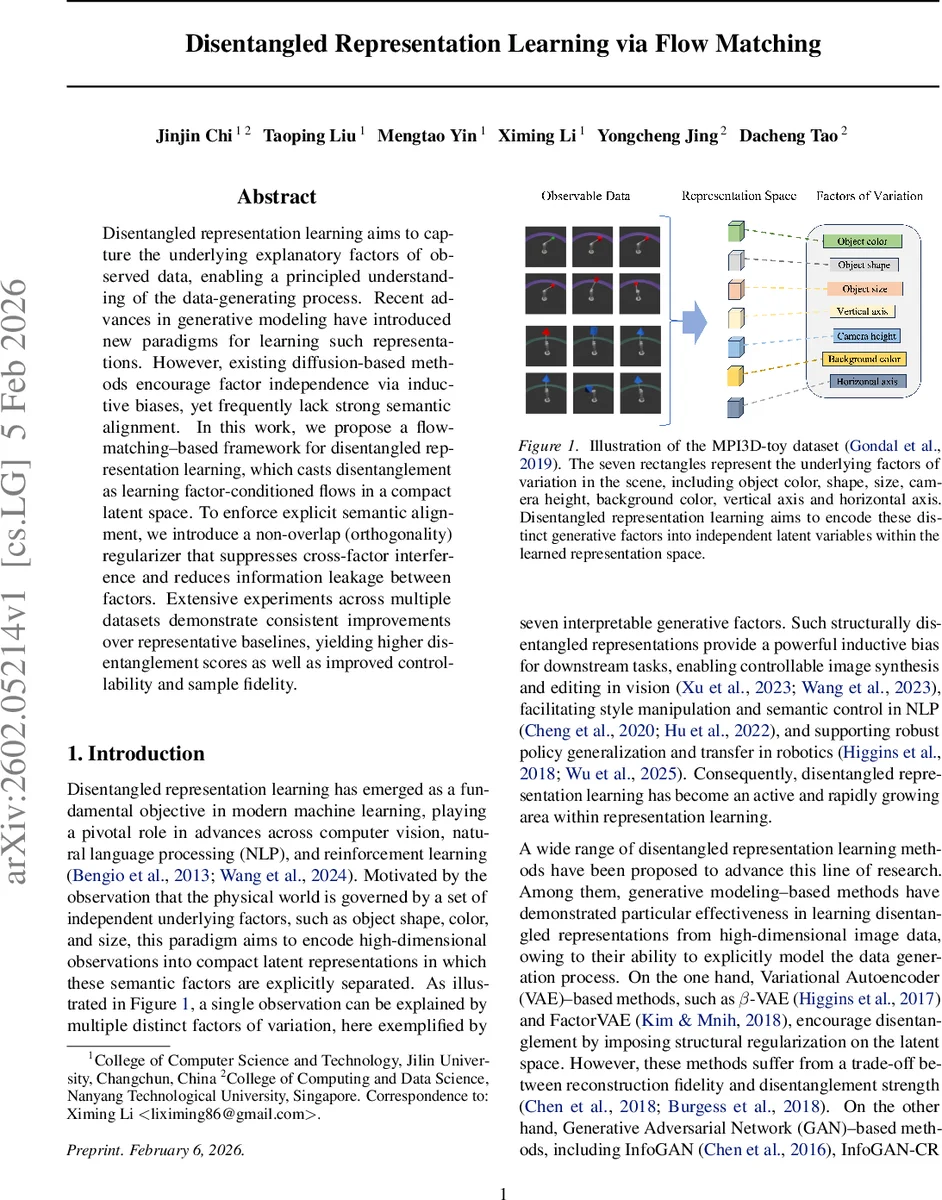

Disentangled representation learning aims to capture the underlying explanatory factors of observed data, enabling a principled understanding of the data-generating process. Recent advances in generative modeling have introduced new paradigms for learning such representations. However, existing diffusion-based methods encourage factor independence via inductive biases, yet frequently lack strong semantic alignment. In this work, we propose a flow matching-based framework for disentangled representation learning, which casts disentanglement as learning factor-conditioned flows in a compact latent space. To enforce explicit semantic alignment, we introduce a non-overlap (orthogonality) regularizer that suppresses cross-factor interference and reduces information leakage between factors. Extensive experiments across multiple datasets demonstrate consistent improvements over representative baselines, yielding higher disentanglement scores as well as improved controllability and sample fidelity.

💡 Research Summary

The paper introduces a novel framework for disentangled representation learning built on the deterministic generative paradigm of flow matching. Traditional approaches each carry a drawback: VAE-based methods that rely on KL-weighting or total-correlation penalties, GAN-based methods that lack an invertible encoder, and diffusion-based methods that depend on stochastic denoising trajectories variously sacrifice reconstruction fidelity, suffer from expensive sampling, or fail to guarantee a clear semantic alignment between latent dimensions and underlying factors of variation.

Flow matching, as formalized by Lipman et al. (2022), learns a time-dependent vector field vₜ that transports probability mass from a simple prior p₀ (standard Gaussian) to the data distribution p₁ by solving the ordinary differential equation (ODE) dz/dt = vₜ(z) from t = 0 to t = 1. This deterministic transport eliminates the need for a noise schedule, allows training by direct supervised regression on the vector field without evaluating likelihoods, and permits fast ODE-based sampling.
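The regression target and the ODE-based sampling described above can be illustrated with a minimal pure-Python sketch. It assumes a straight-line path z_t = (1 − t)·z₀ + t·z₁ between a prior sample and a data latent, which is one common choice; the summary does not state which path the paper uses. All function names (`interpolate`, `target_velocity`, `fm_loss`, `euler_sample`) are hypothetical, and a fixed oracle velocity stands in for the learned network vθ.

```python
import random

def interpolate(z0, z1, t):
    """Point on the assumed straight-line path z_t = (1 - t) * z0 + t * z1."""
    return [(1.0 - t) * a + t * b for a, b in zip(z0, z1)]

def target_velocity(z0, z1):
    """Time derivative of the straight-line path: z1 - z0 (constant in t)."""
    return [b - a for a, b in zip(z0, z1)]

def fm_loss(v_pred, z0, z1):
    """Squared error between a predicted velocity and the path velocity."""
    u = target_velocity(z0, z1)
    return sum((p - q) ** 2 for p, q in zip(v_pred, u))

def euler_sample(velocity_fn, z0, num_steps=10):
    """Integrate dz/dt = velocity_fn(z, t) from t = 0 to t = 1 with Euler steps."""
    z = list(z0)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        v = velocity_fn(z, k * dt)
        z = [zi + dt * vi for zi, vi in zip(z, v)]
    return z

rng = random.Random(0)
z0 = [rng.gauss(0.0, 1.0) for _ in range(4)]  # sample from the Gaussian prior p0
z1 = [1.0, -2.0, 0.5, 3.0]                    # stand-in for a data latent (illustrative values)

# Oracle velocity field: a trained network would be regressed toward this target.
u = target_velocity(z0, z1)
z_end = euler_sample(lambda z, t: u, z0, num_steps=10)
```

With the oracle field, the Euler integration carries z₀ to z₁ up to floating-point error, which is the behavior a well-trained vθ would approximate. In practice the loss would be averaged over random t, prior samples, and data latents.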

The authors extend this idea to disentanglement by conditioning the flow on a set of N factor embeddings extracted from the input image. An image is first encoded into a compact latent code z₁ using a pretrained VQ-GAN encoder, which serves as a stable target for the flow. Simultaneously, a trainable factor encoder Tγ produces N vectors Sγ(I) = {s₁,…,s_N} that are intended to capture distinct semantic attributes (e.g., color, shape, size). These factor vectors are injected into a U-Net backbone that parameterizes vθ via cross-attention at multiple resolutions: queries are derived from spatial feature maps, while keys and values are the factor embeddings. This design lets each layer attend selectively to the factors most relevant to its computation, providing a structured conditioning pathway.
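The cross-attention conditioning described above can be sketched as follows. This is a simplified illustration, not the paper's architecture: it assumes single-head scaled dot-product attention, omits the learned query, key, and value projections, and uses the factor embeddings directly as both keys and values. The names `cross_attention`, `queries`, and `factors` are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def cross_attention(queries, factors):
    """Attend from spatial feature vectors (queries) to N factor embeddings.

    The factor embeddings serve as both keys and values; learned
    projections are omitted to keep the sketch short.
    Returns (outputs, weights), one entry per query.
    """
    d = len(factors[0])
    scale = 1.0 / math.sqrt(d)
    outputs, weights = [], []
    for q in queries:
        w = softmax([scale * dot(q, k) for k in factors])
        out = [sum(wi * f[j] for wi, f in zip(w, factors)) for j in range(d)]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Two illustrative factor embeddings (N = 2) and one spatial query.
factors = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0]]
queries = [[4.0, 0.0, 0.0]]  # aligned with the first factor
outputs, weights = cross_attention(queries, factors)
```

Here the query aligned with the first factor places most of its attention weight on that factor, which is the selective behavior the conditioning pathway is meant to allow at each resolution.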

To enforce true disentanglement, the predicted velocity field is explicitly decomposed into N factor‑specific components vθ⁽ⁱ⁾. A non‑overlap (orthogonality) regularizer

ℒ_ort = Σ_{i≠j} ‖⟨vθ⁽ⁱ⁾, vθ⁽ʲ⁾⟩‖²

penalizes inner‑product overlap between any pair of components, thereby suppressing cross‑factor interference and preventing information leakage. The overall training objective combines the standard conditional flow‑matching loss

ℒ_FM = 𝔼_{t, z₀, z₁}
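The non-overlap regularizer ℒ_ort defined earlier can be sketched in a few lines of Python. This assumes each factor-specific velocity component vθ⁽ⁱ⁾ has been flattened to a plain vector; how the paper batches or normalizes the components is not stated in this summary, and the names `ortho_penalty` and `components` are hypothetical.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def ortho_penalty(components):
    """Sum of squared inner products over all ordered pairs i != j."""
    total = 0.0
    n = len(components)
    for i in range(n):
        for j in range(n):
            if i != j:
                total += dot(components[i], components[j]) ** 2
    return total

# Mutually orthogonal components incur no penalty.
orthogonal = [[1.0, 0.0], [0.0, 2.0]]
penalty_orthogonal = ortho_penalty(orthogonal)

# Overlapping components are penalized; each unordered pair is counted twice
# because the sum runs over ordered pairs (i, j) and (j, i).
overlapping = [[1.0, 1.0], [1.0, 0.0]]
penalty_overlapping = ortho_penalty(overlapping)
```

The penalty vanishes only when every pair of components is orthogonal, so minimizing it pushes the factor-specific velocity components toward non-overlapping directions.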
