ShapePuri: Shape Guided and Appearance Generalized Adversarial Purification

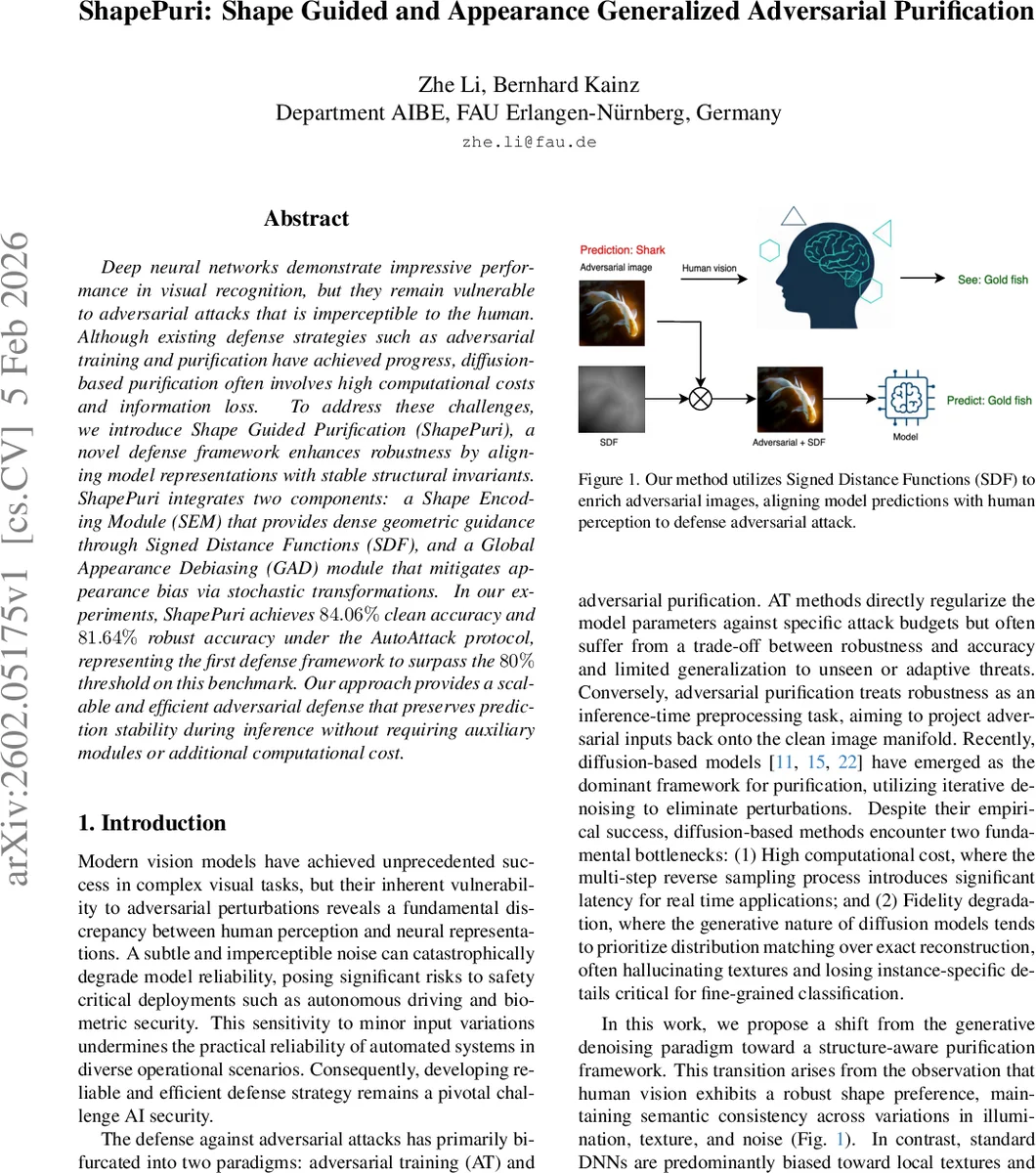

Deep neural networks demonstrate impressive performance in visual recognition, but they remain vulnerable to adversarial attacks that are imperceptible to humans. Although existing defense strategies such as adversarial training and purification have made progress, diffusion-based purification often incurs high computational cost and information loss. To address these challenges, we introduce Shape Guided Purification (ShapePuri), a novel defense framework that enhances robustness by aligning model representations with stable structural invariants. ShapePuri integrates two components: a Shape Encoding Module (SEM) that provides dense geometric guidance through Signed Distance Functions (SDF), and a Global Appearance Debiasing (GAD) module that mitigates appearance bias via stochastic transformations. In our experiments, ShapePuri achieves $84.06\%$ clean accuracy and $81.64\%$ robust accuracy under the AutoAttack protocol, making it the first defense framework to surpass the $80\%$ threshold on this benchmark. Our approach provides a scalable and efficient adversarial defense that preserves prediction stability during inference without requiring auxiliary modules or additional computational cost.

💡 Research Summary

ShapePuri proposes a diffusion‑free adversarial purification framework that leverages geometric priors and appearance debiasing to achieve state‑of‑the‑art robustness on ImageNet. The method consists of two complementary modules. The Shape Encoding Module (SEM) extracts a dense Signed Distance Function (SDF) from each input image. The pipeline first applies Gaussian smoothing, then Otsu thresholding to obtain a binary mask, followed by Euclidean distance transforms for the interior and exterior of the mask. The final SDF is the signed difference of these transforms, providing a continuous, low‑frequency field that encodes object boundaries and remains stable under pixel‑level perturbations. During training, the adversarial image is fused with the SDF via element‑wise multiplication (I_fusion = I_adv ⊙ (1 + β·SDF)), where β controls the strength of geometric modulation. This fusion amplifies interior intensities and suppresses background noise, encouraging the classifier to focus on shape cues.
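The SEM pipeline described above (smoothing, Otsu thresholding, two distance transforms, signed difference, multiplicative fusion) can be sketched in a few lines of NumPy and SciPy. This is a minimal illustration, not the authors' implementation: the function names, the `sigma` and `beta` defaults, the assumption that the foreground is the brighter region, and the normalization of the SDF to [-1, 1] are all choices made here for the example.

```python
import numpy as np
from scipy import ndimage


def otsu_threshold(gray: np.ndarray, bins: int = 256) -> float:
    """Return the threshold that maximizes between-class variance (Otsu)."""
    hist, edges = np.histogram(gray, bins=bins)
    centers = (edges[:-1] + edges[1:]) / 2.0
    w0 = np.cumsum(hist).astype(float)      # pixel count at or below each bin
    w1 = w0[-1] - w0                        # pixel count above each bin
    cum = np.cumsum(hist * centers)
    m0 = cum / np.maximum(w0, 1e-12)        # mean of the lower class
    m1 = (cum[-1] - cum) / np.maximum(w1, 1e-12)  # mean of the upper class
    return float(centers[np.argmax(w0 * w1 * (m0 - m1) ** 2)])


def signed_distance_field(image: np.ndarray, sigma: float = 1.0) -> np.ndarray:
    """Approximate SDF: positive inside the mask, negative outside."""
    gray = image.mean(axis=-1) if image.ndim == 3 else image
    smooth = ndimage.gaussian_filter(gray.astype(float), sigma=sigma)
    # Assumption: the object is the brighter side of the Otsu threshold.
    mask = smooth > otsu_threshold(smooth)
    if mask.all() or not mask.any():
        return np.zeros_like(smooth)        # no boundary to measure against
    inside = ndimage.distance_transform_edt(mask)    # distance to background
    outside = ndimage.distance_transform_edt(~mask)  # distance to foreground
    sdf = inside - outside
    # Assumption: rescale to [-1, 1] so that beta has a fixed meaning.
    return sdf / np.abs(sdf).max()


def fuse(image_adv: np.ndarray, sdf: np.ndarray, beta: float = 0.5) -> np.ndarray:
    """I_fusion = I_adv * (1 + beta * SDF), broadcast over channels."""
    modulation = 1.0 + beta * sdf
    if image_adv.ndim == 3:
        modulation = modulation[..., None]
    return image_adv * modulation
```

With a normalized SDF and `beta` below 1, the modulation factor stays positive, so interior pixels are scaled up and background pixels are scaled down without sign flips.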

The Global Appearance Debiasing (GAD) module applies stochastic shallow convolutions and non‑linear intensity transformations to both clean and adversarial images. By diversifying global color and texture, GAD reduces the model’s reliance on fragile texture shortcuts, steering learning toward shape‑based representations.
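As a rough illustration of what such a transformation might look like, the sketch below applies one random shallow convolution per channel followed by a random gamma curve. The summary does not specify the kernels, the non-linearity, or any parameter ranges, so `gad_transform`, the 3x3 kernel size, and the gamma range are hypothetical stand-ins rather than the paper's actual GAD design.

```python
import numpy as np
from scipy import ndimage


def gad_transform(image, rng, kernel_size=3, gamma_range=(0.5, 2.0)):
    """Randomly perturb global appearance of an HxWxC float image in [0, 1].

    Hypothetical sketch: random per-channel convolution, then a random
    gamma curve as the non-linear intensity transformation.
    """
    out = np.empty_like(image, dtype=float)
    for c in range(image.shape[-1]):
        # A fresh random kernel per channel changes color and local texture.
        kernel = rng.normal(size=(kernel_size, kernel_size))
        out[..., c] = ndimage.convolve(
            image[..., c].astype(float), kernel, mode="reflect"
        )
    # Rescale back to [0, 1] so the gamma curve below is well defined.
    lo, hi = out.min(), out.max()
    out = (out - lo) / (hi - lo + 1e-8)
    gamma = rng.uniform(*gamma_range)
    return out ** gamma
```

Because the kernel is small and spatially uniform, object outlines survive while color and texture statistics change from call to call, which is the property the paragraph above relies on.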

Training employs five parallel input streams: (1) clean image, (2) GAD‑processed clean image, (3) adversarial image, (4) GAD‑processed adversarial image, and (5) SDF‑fused adversarial image. Each stream contributes a cross‑entropy loss (L_clean, L_adv, L_SDF, L_GAD). The total loss is a weighted sum of a base loss (clean + adversarial CE) and the two auxiliary losses, enabling the network to learn consistent predictions across all variants. Crucially, at inference time the SEM and GAD modules are removed; the classifier operates as a standard feed‑forward network with no additional computational overhead.
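The weighted sum can be written out as a short NumPy sketch. The summary names four loss terms for five streams and gives no weights, so two things here are assumptions: the two GAD streams are averaged into a single L_GAD, and the weights `lambda_sdf` and `lambda_gad` (and the stream keys in the dictionary) are hypothetical names introduced for the example.

```python
import numpy as np


def cross_entropy(logits: np.ndarray, labels: np.ndarray) -> float:
    """Mean cross-entropy over a batch of (N, K) logits and (N,) labels."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())


def total_loss(logits: dict, labels: np.ndarray,
               lambda_sdf: float = 1.0, lambda_gad: float = 1.0) -> float:
    """Base loss (clean + adversarial CE) plus weighted auxiliary losses.

    `logits` maps each of the five streams to the classifier output for it:
    "clean", "gad_clean", "adv", "gad_adv", "sdf_adv".
    """
    ce = {name: cross_entropy(z, labels) for name, z in logits.items()}
    base = ce["clean"] + ce["adv"]
    l_sdf = ce["sdf_adv"]
    # Assumption: both GAD streams share one term, averaged.
    l_gad = 0.5 * (ce["gad_clean"] + ce["gad_adv"])
    return base + lambda_sdf * l_sdf + lambda_gad * l_gad
```

All five streams pass through the same classifier, so only the inputs differ during training. At inference only the plain forward pass remains.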

Empirical evaluation on ImageNet under the rigorous AutoAttack benchmark reports 81.64% robust accuracy and 84.06% clean accuracy, surpassing prior diffusion‑based defenses by more than 7% and marking the first defense to exceed the 80% robust‑accuracy threshold on this dataset. Because SEM and GAD are used only during training, the method adds near‑zero inference overhead, in contrast to multi‑step diffusion purifiers.

The paper acknowledges limitations: SDF generation relies on accurate mask extraction, which can be challenged by cluttered scenes or overlapping objects, and the current approach is limited to 2‑D shape cues. Future work may explore multi‑scale or 3‑D shape priors, improve mask robustness, and test generalization on other domains such as medical imaging or remote sensing.

Overall, ShapePuri demonstrates that embedding explicit shape information and mitigating appearance bias during training can yield a lightweight, highly robust classifier, offering a compelling alternative to computationally expensive diffusion‑based purification methods.
