TACO: Temporal Consensus Optimization for Continual Neural Mapping

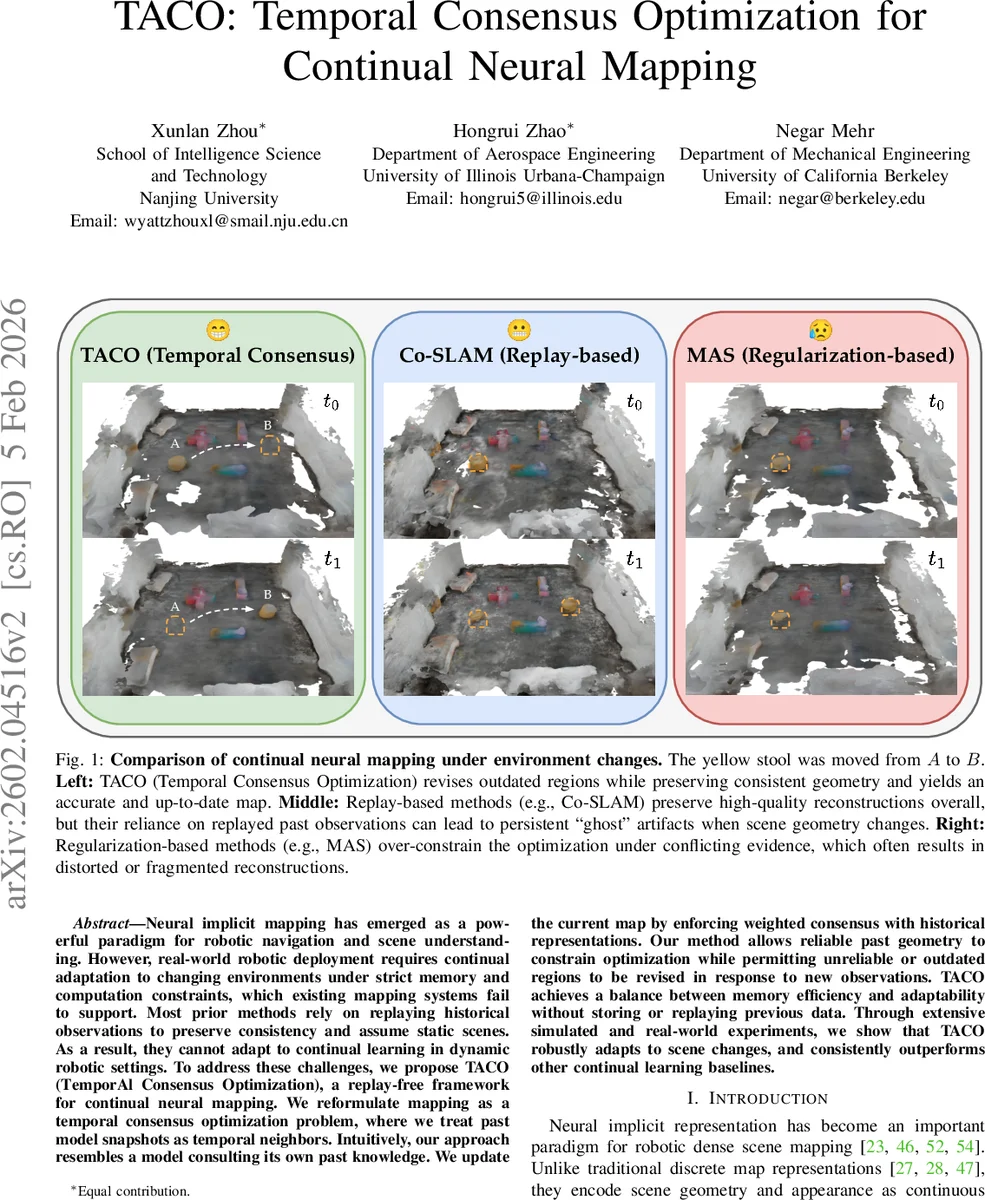

Neural implicit mapping has emerged as a powerful paradigm for robotic navigation and scene understanding. However, real-world robotic deployment requires continual adaptation to changing environments under strict memory and computation constraints, which existing mapping systems fail to support. Most prior methods rely on replaying historical observations to preserve consistency and assume static scenes. As a result, they cannot adapt to continual learning in dynamic robotic settings. To address these challenges, we propose TACO (TemporAl Consensus Optimization), a replay-free framework for continual neural mapping. We reformulate mapping as a temporal consensus optimization problem, where we treat past model snapshots as temporal neighbors. Intuitively, our approach resembles a model consulting its own past knowledge. We update the current map by enforcing weighted consensus with historical representations. Our method allows reliable past geometry to constrain optimization while permitting unreliable or outdated regions to be revised in response to new observations. TACO achieves a balance between memory efficiency and adaptability without storing or replaying previous data. Through extensive simulated and real-world experiments, we show that TACO robustly adapts to scene changes, and consistently outperforms other continual learning baselines.

💡 Research Summary

The paper introduces TACO (Temporal Consensus Optimization), a replay‑free framework for continual neural implicit mapping that enables robots to adapt to dynamic environments while respecting strict memory and computational budgets. Traditional neural mapping systems rely on storing and replaying past RGB‑D observations or on regularization techniques that either preserve outdated geometry (“ghost” artifacts) or over‑constrain the model, preventing adaptation. TACO reframes the problem as a temporal consensus optimization: the current model parameters Θₜ are forced to agree with a set of frozen historical snapshots (e.g., Θₜ₋₁) through a weighted averaging constraint. Parameter‑wise importance matrices Wₜ and Wₜ₋₁, derived from output‑sensitivity (the MAS‑style importance), modulate this consensus: high‑importance parameters are strongly anchored to past values, while low‑importance parameters are free to change in response to new observations.

Mathematically, the consensus target is

zₜ,ₜ₋₁ = (Wₜ + Wₜ₋₁)⁻¹ (WₜΘₜ + Wₜ₋₁Θₜ₋₁).
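As a rough illustration of this formula, the sketch below computes the consensus target in NumPy. It assumes the importance matrices are diagonal, so each is stored as a 1-D array of per-parameter weights and the matrix inverse reduces to element-wise division. The function name `consensus_target` and the small `eps` guard against zero total importance are illustrative choices, not part of the paper.

```python
import numpy as np

def consensus_target(theta_t, theta_prev, w_t, w_prev, eps=1e-12):
    """Importance-weighted average of two parameter vectors.

    Assumption: W_t and W_{t-1} are diagonal, so they are passed as 1-D
    arrays and (W_t + W_{t-1})^{-1} becomes an element-wise division.
    eps is a hypothetical guard for parameters with zero total importance.
    """
    theta_t = np.asarray(theta_t, dtype=float)
    theta_prev = np.asarray(theta_prev, dtype=float)
    w_t = np.asarray(w_t, dtype=float)
    w_prev = np.asarray(w_prev, dtype=float)
    return (w_t * theta_t + w_prev * theta_prev) / (w_t + w_prev + eps)

# Toy example: the first parameter is weighted 3:1 toward the current model,
# the second is weighted equally between the two snapshots.
z = consensus_target(
    theta_t=[1.0, 0.0],
    theta_prev=[0.0, 2.0],
    w_t=[3.0, 1.0],
    w_prev=[1.0, 1.0],
)
```

In this toy case the first entry of `z` lands three quarters of the way toward the current value and the second entry is the plain midpoint, which matches the intuition that higher importance pulls the consensus toward the corresponding snapshot.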

The constrained problem min_Θ L_obj(Θ, Rₜ₊₁) s.t. Θ = zₜ,ₜ₋₁ is solved with an ADMM‑style augmented Lagrangian:

Lₐ = L_obj(Θ, Rₜ₊₁) + (Θ−zₜ,ₜ₋₁)ᵀp + (ρ/2)‖Θ−zₜ,ₜ₋₁‖².

Here p is the dual variable and ρ > 0 is the penalty parameter. Primal updates are approximated with a few stochastic gradient steps, with the standard Co‑SLAM loss (photometric, depth, SDF, smoothness) serving as L_obj, while dual updates follow p ← p + ρ(Θ − zₜ,ₜ₋₁). This yields a lightweight, online update rule that does not require any replayed frames.
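The primal and dual updates can be sketched on a toy problem. The code below is a minimal illustration under several assumptions, not the authors' implementation: L_obj is replaced by a simple quadratic, the gradient steps are deterministic rather than stochastic, the consensus target z is held fixed, and the names `admm_round`, `grad_obj`, `lr`, and `n_steps` are hypothetical.

```python
import numpy as np

def admm_round(theta, p, z, grad_obj, rho=1.0, lr=0.1, n_steps=5):
    """One inexact primal update followed by one dual update.

    grad_obj is a hypothetical callable returning the gradient of L_obj
    at theta; in a real system it would come from the mapping loss.
    """
    for _ in range(n_steps):
        # Gradient of L_obj + (theta - z)^T p + (rho / 2) * ||theta - z||^2
        g = grad_obj(theta) + p + rho * (theta - z)
        theta = theta - lr * g
    # Dual ascent step: p <- p + rho * (theta - z)
    p = p + rho * (theta - z)
    return theta, p

# Toy stand-in for L_obj: 0.5 * ||theta - target||^2 (an assumption).
target = np.array([1.0, -2.0])
z = np.array([0.0, 0.0])

def grad_obj(theta):
    return theta - target

theta = target.copy()      # start at the optimum of the data term alone
p = np.zeros_like(theta)   # dual variable starts at zero
for _ in range(100):
    theta, p = admm_round(theta, p, z, grad_obj)
```

With z held fixed and many rounds, the dual variable grows until the constraint is met, so `theta` is driven to `z`. Running only a few rounds leaves `theta` between the data optimum and the consensus target, which is one way to read the stability and plasticity trade-off described in this summary.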

The authors integrate TACO on top of Co‑SLAM, a real‑time neural implicit mapping backbone that uses a multi‑resolution hash grid, geometry and color decoders, and volume rendering. Importance weights are computed per‑parameter by measuring the sensitivity of the SDF and color outputs to perturbations of each weight, mirroring the Memory Aware Synapses (MAS) approach.
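For intuition, a MAS‑style importance estimate can be sketched as the mean absolute sensitivity of the squared output norm to each parameter. The version below uses central finite differences on a tiny linear model. Both the finite‑difference scheme and `toy_model` are assumptions made for illustration; the summary does not say how the sensitivities are computed, and a practical system would more likely use automatic differentiation.

```python
import numpy as np

def mas_importance(f, theta, inputs, h=1e-5):
    """Mean absolute sensitivity of ||f(theta, x)||^2 to each parameter.

    f is a hypothetical model callable, theta a flat 1-D parameter vector,
    and inputs a list of sample inputs. Derivatives are approximated with
    central finite differences (an illustrative choice).
    """
    theta = np.asarray(theta, dtype=float)
    importance = np.zeros_like(theta)
    for x in inputs:
        for i in range(theta.size):
            step = np.zeros_like(theta)
            step[i] = h
            hi = np.sum(f(theta + step, x) ** 2)
            lo = np.sum(f(theta - step, x) ** 2)
            importance[i] += abs(hi - lo) / (2 * h)
    return importance / len(inputs)

def toy_model(theta, x):
    # Hypothetical stand-in for a decoder: a 2x2 linear map.
    return theta.reshape(2, 2) @ x

theta = np.array([1.0, 0.0, 0.0, 1.0])               # identity matrix, flattened
inputs = [np.array([1.0, 0.0]), np.array([2.0, 0.0])]
w = mas_importance(toy_model, theta, inputs)
```

Only the first weight affects the squared output norm to first order for these inputs, so it receives all of the importance while the other three stay near zero. In the consensus scheme, such a parameter would be anchored strongly to its past value.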

Experiments cover both simulated scenarios (object relocation, lighting changes, structural rearrangements) and real‑world robotic trials with an RGB‑D sensor mounted on a mobile platform. Baselines include Co‑SLAM with keyframe replay, UNIKD (distillation‑based continual learning), and MAS/EWC regularization. Evaluation metrics comprise Chamfer distance, PSNR, structural consistency, and memory footprint. TACO consistently outperforms all baselines, improving reconstruction accuracy by 15‑25 % and markedly reducing “ghost” artifacts. Because no past frames are stored, memory usage drops by 30‑50 % relative to replay‑based methods, and the system still runs in real time because the ADMM overhead is modest.

Limitations are acknowledged: the current implementation only uses the immediate predecessor snapshot; extending to a richer history requires efficient snapshot management. Importance estimation is approximate for large hash‑grid networks, which may affect performance in highly complex scenes. Future work will explore multi‑snapshot consensus, more accurate importance metrics, and application to other implicit representations such as NeRF.

In summary, TACO offers a novel temporal‑consensus perspective that balances stability (preserving reliable past geometry) and plasticity (adapting to new observations) without replaying data. It delivers superior accuracy, lower memory consumption, and robust adaptation to dynamic environments, positioning it as a practical solution for long‑term autonomous robotic mapping.
