Mitigating Conversational Inertia in Multi-Turn Agents

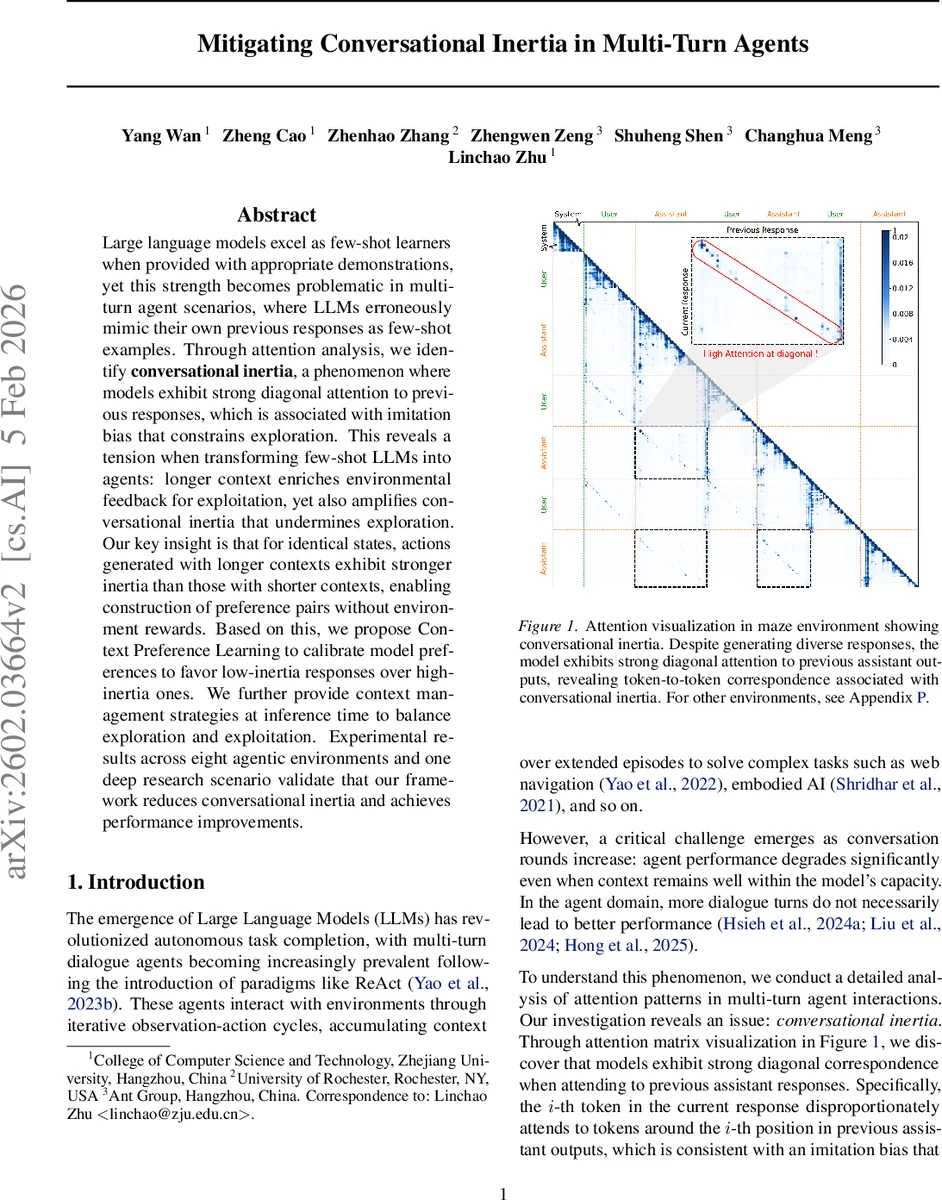

Large language models excel as few-shot learners when provided with appropriate demonstrations, yet this strength becomes problematic in multiturn agent scenarios, where LLMs erroneously mimic their own previous responses as few-shot examples. Through attention analysis, we identify conversational inertia, a phenomenon where models exhibit strong diagonal attention to previous responses, which is associated with imitation bias that constrains exploration. This reveals a tension when transforming few-shot LLMs into agents: longer context enriches environmental feedback for exploitation, yet also amplifies conversational inertia that undermines exploration. Our key insight is that for identical states, actions generated with longer contexts exhibit stronger inertia than those with shorter contexts, enabling construction of preference pairs without environment rewards. Based on this, we propose Context Preference Learning to calibrate model preferences to favor low-inertia responses over highinertia ones. We further provide context management strategies at inference time to balance exploration and exploitation. Experimental results across eight agentic environments and one deep research scenario validate that our framework reduces conversational inertia and achieves performance improvements.

💡 Research Summary

The paper investigates a critical failure mode that emerges when large language models (LLMs) are repurposed from few‑shot learners into multi‑turn autonomous agents. While few‑shot prompting enables impressive zero‑shot performance, the authors discover that, as dialogue length grows, LLMs begin to treat their own previous responses as implicit demonstrations. This “conversational inertia” manifests as a strong diagonal pattern in the attention matrix: the i‑th token of the current assistant output disproportionately attends to the i‑th token of the prior assistant output. The phenomenon intensifies with longer context windows, while attention to user inputs and system prompts remains relatively stable. Consequently, the model over‑relies on its own past utterances, reproducing stale patterns and limiting exploration, which degrades task performance even when the total context stays within the model’s capacity.

Key insight: for the same environmental state, actions generated with a longer context exhibit higher inertia than those generated with a shorter context. This observation allows the construction of preference pairs without any external reward signal. The authors generate two actions per state—one using the full interaction history (long context) and one using only recent turns (short context). The long‑context action typically carries more inertia and lower quality, while the short‑context action is less inertial and more exploratory. These (short > long) pairs are fed into a Direct Preference Optimization (DPO) loss, training the model to prefer low‑inertia outputs. The fine‑tuning is parameter‑efficient: LoRA adapters modify only 0.4 % of the model’s weights, making the approach scalable to large models.

In addition to training‑time mitigation, the paper proposes inference‑time context‑management strategies. “Clip Context” periodically clears the dialogue history once a predefined turn threshold H is reached, retaining only the most recent L turns. This method preserves KV‑cache efficiency (unlike sliding windows that require cache invalidation) and, crucially, resets the diagonal attention that underlies inertia. The authors also compare a traditional “Window Context” (fixed‑size recent window) and a “Summarization Context” that compresses cleared history into a summary token sequence. Empirical analysis shows that the performance gains of summarization stem largely from the inertia‑reduction effect of clipping rather than the semantic content of the summary.

Experiments span eight diverse agent environments from the AgentGym suite—including maze navigation, web browsing, embodied robotics, strategic games, and crafting tasks—as well as a deep‑research scenario (BrowseComp) that requires code exploration and modification. Models evaluated include Qwen‑3‑8B, Llama‑3.1‑8B‑Instruct, and GPT‑4o‑mini. Results demonstrate:

- CPL reduces diagonal attention ratios by an average of 11 % on Qwen‑3‑8B.

- Clip Context consistently outperforms both Window and Long‑context baselines, delivering ~4 % absolute success‑rate improvements across all models; Qwen‑3‑8B sees a 7.6 %p boost.

- When combined with CPL, Clip Context yields the highest scores (e.g., 72.5 % vs. 68.9 % for the base model).

- Summarization methods achieve comparable performance, but ablations reveal that the clipping component, not the generated summary, drives the gains.

- Attention visualizations confirm that Clip Context diminishes the diagonal attention peak and re‑balances attention toward user inputs and system instructions.

The authors conclude that effective multi‑turn agents must explicitly manage the trade‑off among context length, inertia, and exploration. CPL offers a reward‑free, data‑efficient way to bias models toward low‑inertia decisions, while Clip Context provides a lightweight, cache‑friendly inference mechanism that curtails error accumulation. Future work may explore the interaction between inertia reduction and long‑term memory retention, extend the approach to multimodal agents, and investigate meta‑learning schemes that adapt inertia control dynamically. Overall, the paper delivers a rigorous diagnosis of conversational inertia and practical solutions that advance the reliability and adaptability of LLM‑driven agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment