KANFIS: A Neuro-Symbolic Framework for Interpretable and Uncertainty-Aware Learning

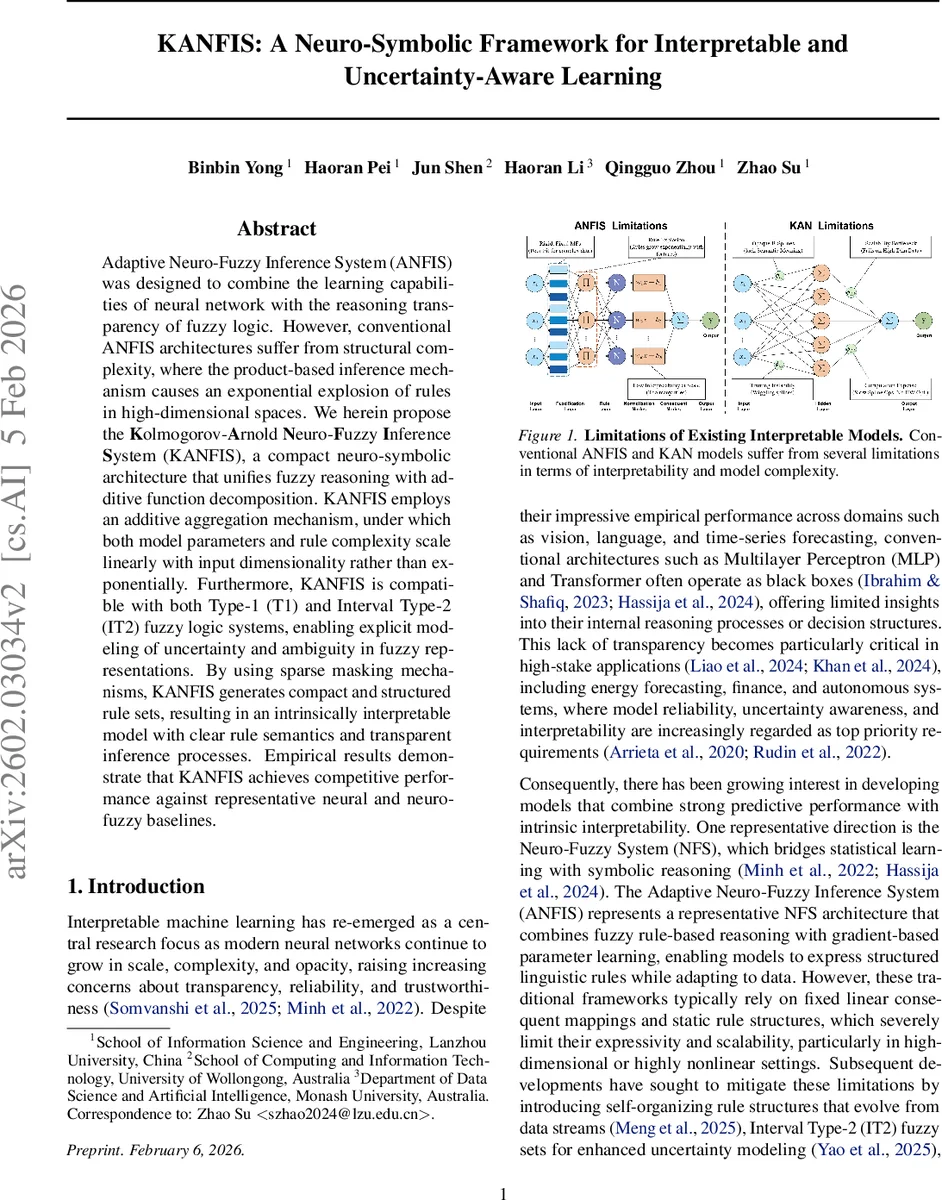

Adaptive Neuro-Fuzzy Inference System (ANFIS) was designed to combine the learning capabilities of neural network with the reasoning transparency of fuzzy logic. However, conventional ANFIS architectures suffer from structural complexity, where the product-based inference mechanism causes an exponential explosion of rules in high-dimensional spaces. We herein propose the Kolmogorov-Arnold Neuro-Fuzzy Inference System (KANFIS), a compact neuro-symbolic architecture that unifies fuzzy reasoning with additive function decomposition. KANFIS employs an additive aggregation mechanism, under which both model parameters and rule complexity scale linearly with input dimensionality rather than exponentially. Furthermore, KANFIS is compatible with both Type-1 (T1) and Interval Type-2 (IT2) fuzzy logic systems, enabling explicit modeling of uncertainty and ambiguity in fuzzy representations. By using sparse masking mechanisms, KANFIS generates compact and structured rule sets, resulting in an intrinsically interpretable model with clear rule semantics and transparent inference processes. Empirical results demonstrate that KANFIS achieves competitive performance against representative neural and neuro-fuzzy baselines.

💡 Research Summary

The paper introduces KANFIS, a novel neuro‑symbolic framework that merges the interpretability of fuzzy rule‑based systems with the expressive power of Kolmogorov‑Arnold Networks (KAN). Traditional Adaptive Neuro‑Fuzzy Inference Systems (ANFIS) suffer from an exponential explosion of rules when the input dimensionality grows, because their antecedent part relies on product‑based aggregation. KANFIS replaces this multiplicative mechanism with an additive superposition principle derived from the Kolmogorov‑Arnold representation theorem, which states that any multivariate continuous function can be expressed as a finite sum of univariate functions. In practice, each input feature is connected to a set of learnable univariate membership functions (implemented as B‑splines). A rule is formed by selecting one membership function per feature and aggregating the resulting activations by simple addition. Consequently, the number of parameters and the number of rules scale linearly with the number of input dimensions (O(d·K)), eliminating the rule‑explosion problem.

KANFIS supports both Type‑1 (T1) and Interval Type‑2 (IT2) fuzzy logic. In the T1 setting, standard Gaussian, generalized bell, and sigmoid membership functions are used. For IT2, each basis function has a lower and an upper standard deviation, allowing the model to capture aleatoric uncertainty directly and to output interval‑valued membership grades. This dual‑type capability makes KANFIS suitable for safety‑critical domains where uncertainty quantification is essential.

To preserve interpretability while keeping the model compact, the authors introduce two regularization terms. A sparsity regularizer enforces a mask that limits the number of active features per rule, effectively forcing each rule to involve only a few salient inputs. A diversity regularizer penalizes similarity between the masks of different rules, ensuring that the rule set does not contain redundant patterns. Together, these constraints produce a concise, human‑readable rule base such as “IF x₁ IS Gaussian(µ₁,σ₁) AND x₃ IS Bell(µ₃,σ₃) THEN y = …”.

Training proceeds via standard back‑propagation with Adam optimizer. The univariate functions are parameterized by the control points of B‑splines, which are learned jointly with the mask parameters. Because the functions are differentiable, gradient‑based learning is straightforward, and the number of control points directly controls model capacity, offering a fine‑grained trade‑off between accuracy and computational cost. Theoretical work on KAN provides generalization bounds that apply to KANFIS, giving a formal justification for its scalability.

Empirical evaluation covers six public datasets spanning regression (e.g., power‑consumption forecasting, Parkinson’s disease progression) and classification (spam detection, medical diagnosis). Two variants—IT2‑KANFIS and T1‑KANFIS—are benchmarked against MLP, traditional ANFIS (both T1 and IT2), and the original KAN. Metrics such as MAPE, RMSE, MAE for regression and accuracy, F1‑score, AUROC for classification show that KANFIS matches or slightly outperforms the baselines. Notably, on high‑dimensional tasks like the Combined Cycle Power Plant dataset, KANFIS achieves comparable error rates while using fewer than one‑tenth the number of rules, confirming the linear scaling claim. Visualizations of learned membership functions demonstrate that each rule can be inspected and understood, and ablation studies reveal that removing the sparsity or diversity regularizers leads to rule proliferation and loss of interpretability.

In summary, KANFIS makes four key contributions: (1) a linear‑complexity fuzzy inference architecture based on additive superposition, (2) native support for both T1 and IT2 fuzzy sets enabling explicit uncertainty modeling, (3) a principled regularization scheme that yields compact, non‑redundant rule bases, and (4) empirical evidence that these design choices do not sacrifice predictive performance. The work therefore establishes a new paradigm for building neuro‑symbolic models that are simultaneously accurate, scalable, and intrinsically interpretable.

Comments & Academic Discussion

Loading comments...

Leave a Comment