A Study of Adaptive Modeling Towards Robust Generalization

Large language models (LLMs) increasingly support reasoning over biomolecular structures, but most existing approaches remain modality-specific and rely on either sequence-style encodings or fixed-length connector tokens for structural inputs. These designs can under-expose explicit geometric cues and impose rigid fusion bottlenecks, leading to over-compression and poor token allocation as structural complexity grows. We present a unified all-atom framework that grounds language reasoning in geometric information while adaptively scaling structural tokens. The method first constructs variable-size structural patches on molecular graphs using an instruction-conditioned gating policy, enabling complexity-aware allocation of query tokens. It then refines the resulting patch tokens via cross-attention with modality embeddings and injects geometry-informed tokens into the language model to improve structure grounding and reduce structural hallucinations. Across diverse all-atom benchmarks, the proposed approach yields consistent gains in heterogeneous structure-grounded reasoning. An anonymized implementation is provided in the supplementary material.

💡 Research Summary

The paper introduces Cuttlefish, a unified all‑atom large language model (LLM) that overcomes the limitations of existing multimodal LLMs which either rely on sequence‑only encodings (e.g., SMILES, protein residues) or fixed‑length connector tokens such as Q‑Former. These approaches suffer from two major problems: (1) they compress rich geometric information into a constant token budget, causing over‑compression for large molecules and under‑utilization for small ones, and (2) they lack explicit geometric grounding, leading to structural hallucinations where the model generates chemically impossible explanations.

Cuttlefish addresses these issues with two novel components. First, Scaling‑Aware Patching dynamically allocates a variable number of structural tokens based on the complexity of each input graph. An instruction‑conditioned gating network (Ganc) scores every atom using the instruction embedding and the SE(3)‑equivariant node embeddings produced by an EGNN encoder. Atoms are ranked by softmax probabilities, and the top‑k atoms are selected such that the cumulative probability exceeds a preset mass threshold ρ. This yields a per‑graph anchor count k_g that scales with size. Each anchor then expands into a soft patch via a weighted assignment that combines Euclidean distance and the anchor’s gate score. Weighted pooling over the patch produces a set of patch tokens t_a, which serve as queries for the next stage. This mechanism eliminates the rigid token bottleneck and ensures that larger structures receive more representational capacity while smaller structures are not over‑allocated.

Second, the Geometry Grounding Adapter injects high‑resolution geometric evidence directly into the LLM. Patch tokens are projected to query vectors Q and passed through a stack of cross‑attention blocks that attend over the full set of modality embeddings X from the EGNN. The cross‑attention retrieves detailed spatial features, which are then projected back into the LLM’s embedding space as modality tokens b_T. These tokens are inserted at designated placeholder positions (y_ins) within the instruction sequence, accompanied by appropriate attention masks. By providing the LLM with explicit, geometry‑aware tokens, the model’s outputs become grounded in the actual atomic configuration, dramatically reducing hallucinations.

Training proceeds in two phases. Phase 1 pre‑trains the EGNN on a masked reconstruction task that predicts atom types, inter‑atomic distances, and noisy direction vectors, enforcing SE(3) equivariance. Phase 2 fine‑tunes the connector (Scaling‑Aware Patching + Geometry Grounding Adapter) on an all‑atom instruction‑following corpus (GEO‑AT) while keeping the EGNN and LLM frozen, thereby learning a clean geometry‑to‑text interface. Finally, the LLM is unfrozen and jointly fine‑tuned with a smaller learning rate to adapt to the new token distribution without losing its general language knowledge.

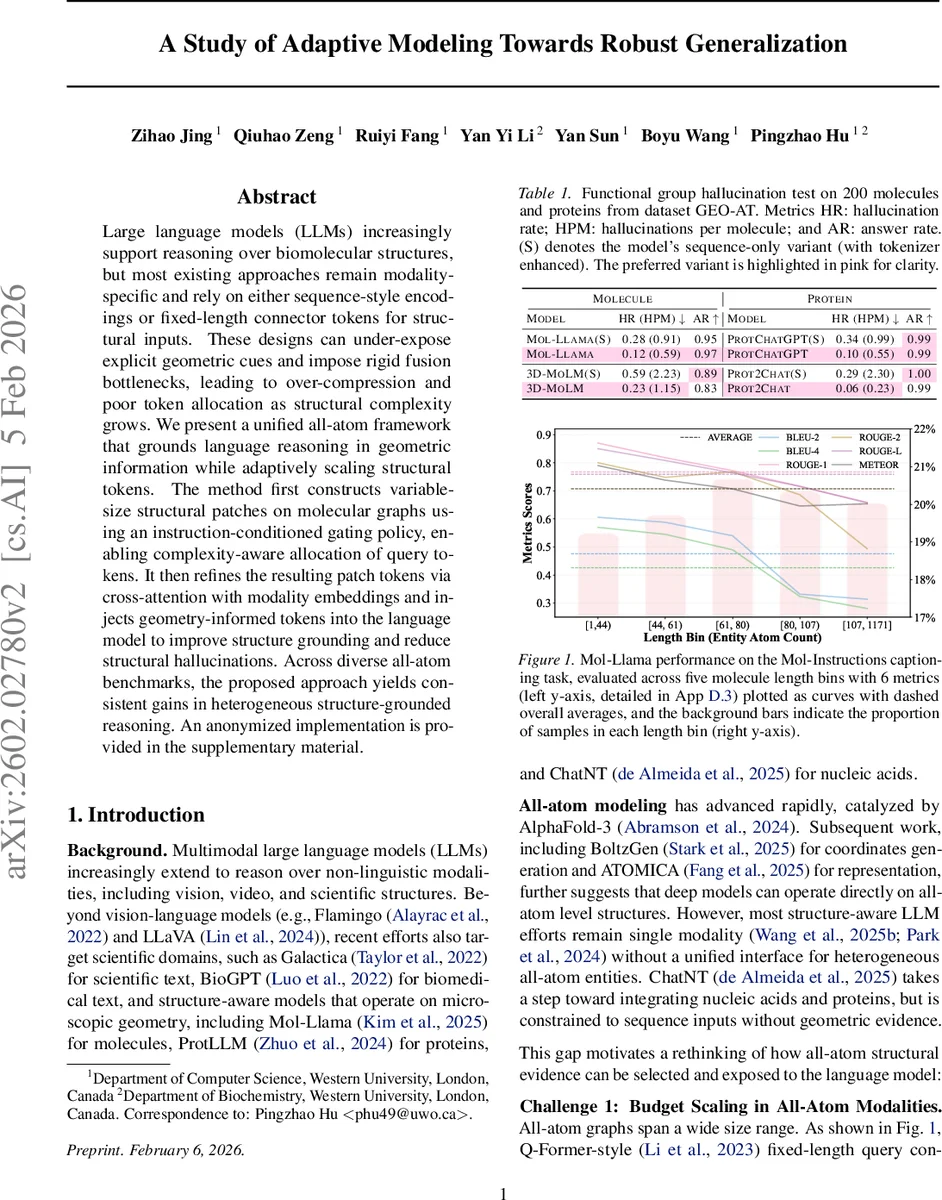

Empirical evaluation focuses on two benchmarks. The Functional Group Hallucination test (200 molecules and proteins) measures hallucination rate (HR), hallucinations per molecule (HPM), and answer rate (AR). Cuttlefish reduces HR from ~0.12 (baseline Mol‑Llama or ChatGPT variants) to ~0.03, while AR rises from 0.95 to 0.99, indicating near‑perfect grounding. The Mol‑Instructions captioning task assesses performance across five length bins (1‑170 atoms) using BLEU‑2/4, ROUGE‑1/2/L, and METEOR. Across all bins, especially the largest (≥80 atoms), Cuttlefish consistently outperforms fixed‑length baselines by 5‑12 % absolute, demonstrating that adaptive token scaling mitigates the degradation seen in traditional connectors.

In summary, Cuttlefish presents a principled solution for robust, geometry‑aware reasoning in all‑atom domains. By coupling a scaling‑aware patch generation mechanism with a cross‑attention‑based grounding adapter, it simultaneously solves the token‑budget scaling problem and eliminates structural hallucinations. This work paves the way for future scientific LLMs that can reliably reason, generate, and converse about complex molecular structures while preserving the rich spatial information essential for accurate scientific discourse.

Comments & Academic Discussion

Loading comments...

Leave a Comment