Active Perception Agent for Omnimodal Audio-Video Understanding

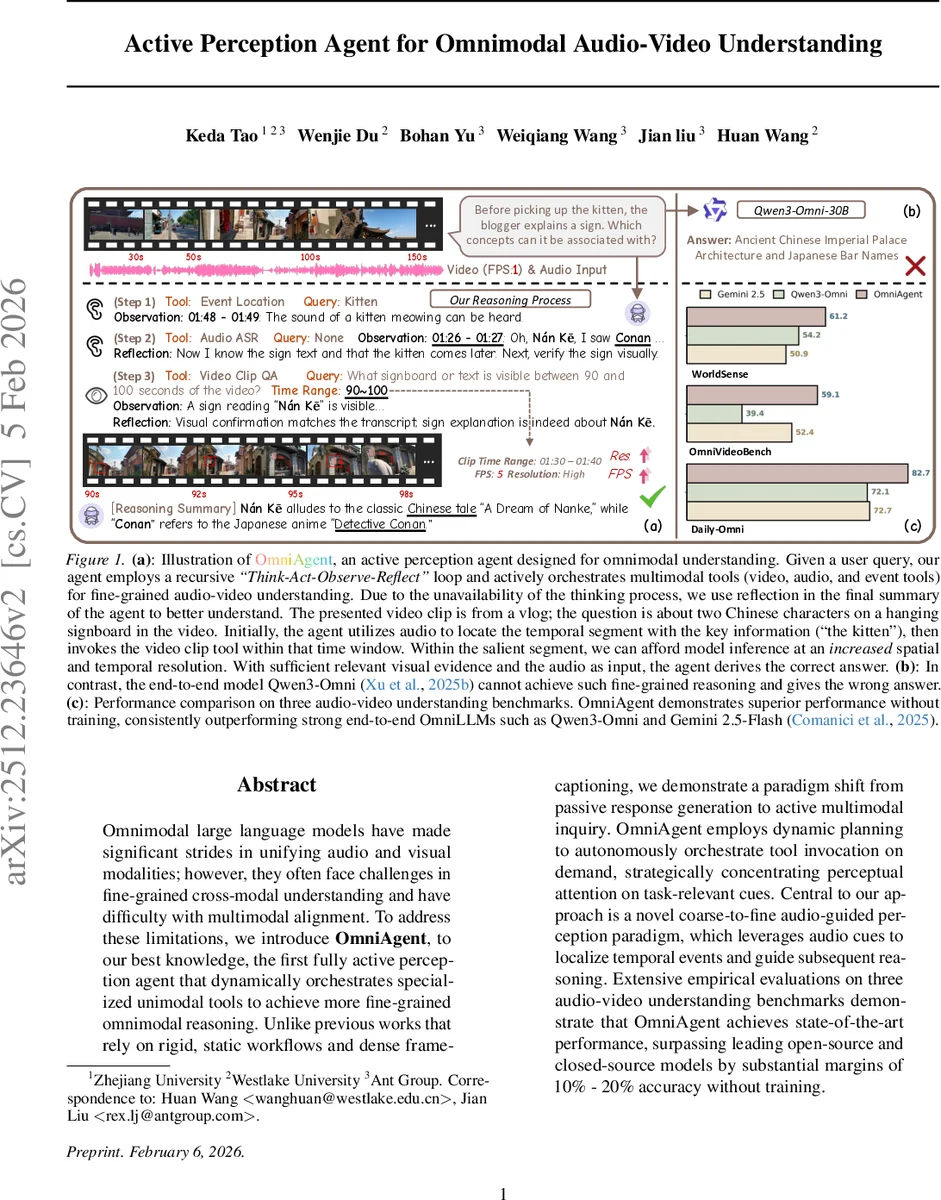

Omnimodal large language models have made significant strides in unifying audio and visual modalities; however, they often face challenges in fine-grained cross-modal understanding and have difficulty with multimodal alignment. To address these limitations, we introduce OmniAgent, to our best knowledge, the first fully active perception agent that dynamically orchestrates specialized unimodal tools to achieve more fine-grained omnimodal reasoning. Unlike previous works that rely on rigid, static workflows and dense frame-captioning, we demonstrate a paradigm shift from passive response generation to active multimodal inquiry. OmniAgent employs dynamic planning to autonomously orchestrate tool invocation on demand, strategically concentrating perceptual attention on task-relevant cues. Central to our approach is a novel coarse-to-fine audio-guided perception paradigm, which leverages audio cues to localize temporal events and guide subsequent reasoning. Extensive empirical evaluations on three audio-video understanding benchmarks demonstrate that OmniAgent achieves state-of-the-art performance, surpassing leading open-source and closed-source models by substantial margins of 10% - 20% accuracy without training.

💡 Research Summary

The paper introduces OmniAgent, the first fully active perception agent designed for fine‑grained audio‑video understanding. Existing omnimodal large language models (OmniLLMs) integrate visual and auditory encoders into a single architecture, but they suffer from two major drawbacks: (1) difficulty aligning audio and visual representations, which hampers precise cross‑modal reasoning, and (2) reliance on dense frame‑captioning pipelines that are computationally expensive and often produce irrelevant captions for the query.

OmniAgent reframes the problem as an active decision‑making process rather than a static retrieval task. At its core is a large language model (LLM) that orchestrates a library of modality‑specific tools in a Think‑Act‑Observe‑Reflect loop. The tool library is divided into three categories:

- Video tools – a global QA tool (low‑resolution, sparse frame sampling) for coarse temporal localization, and a clip‑QA tool (high‑resolution, high frame‑rate) for detailed analysis of a selected time window.

- Audio tools – an automatic speech recognition (ASR) module that provides timestamped transcripts, and a global audio captioning tool that summarizes acoustic events.

- Event tools – an audio‑guided event localization algorithm that scans the entire audio stream to produce precise temporal anchors for subsequent visual inspection.

The agent begins by listening: it runs the audio tools over the whole video, extracts speech transcripts and detects salient sounds (e.g., a kitten meowing). This inexpensive step yields a coarse temporal segment that is likely to contain the answer. The agent then decides whether to invoke a video tool; if the query requires visual verification (e.g., reading a signboard), it calls the clip‑QA tool on the narrowed segment, using higher spatial and temporal resolution.

Each tool call returns an observation (transcript, caption, visual answer) that is stored in a memory buffer. In the reflection phase the LLM synthesizes the observations, evaluates whether the accumulated evidence sufficiently answers the user question, and either produces the final answer or plans additional actions (e.g., listen to another segment or watch a different clip). This loop continues until the agent is confident, making the reasoning process transparent and explainable because every action and observation is logged.

The authors evaluate OmniAgent on three public audio‑video understanding benchmarks (including OmniVideoBench and AVQA). Without any additional training, OmniAgent outperforms strong open‑source and closed‑source baselines such as Qwen3‑Omni‑30B and Gemini 2.5‑Flash by 10–20 percentage points in accuracy. The gains are especially pronounced on queries that require fine‑grained temporal grounding or visual detail (e.g., identifying specific characters on a sign between 90 s and 100 s). In contrast, end‑to‑end OmniLLMs either miss the relevant segment or generate incorrect answers because they cannot dynamically allocate attention between modalities or adjust processing granularity.

Key contributions are:

- Introducing an active perception framework that lets the model autonomously choose when to “listen” and when to “watch,” thereby solving the cross‑modal alignment problem without joint training.

- Building a comprehensive modality‑aware toolkit and a novel audio‑guided event localization algorithm that provides low‑cost temporal anchors for visual analysis.

- Demonstrating state‑of‑the‑art performance on multiple benchmarks without any fine‑tuning, highlighting the efficiency and scalability of the approach.

The paper also discusses limitations: the current toolkit is limited to video, audio, and event tools; extending to other modalities (e.g., depth, OCR) will require new interfaces. Moreover, while the agent can reason about tool‑calling costs, a formal meta‑planning strategy for optimal cost‑benefit trade‑offs remains future work.

In summary, OmniAgent mimics human cognition—first exploiting the concise temporal cues in audio, then focusing visual attention where needed—offering a flexible, explainable, and highly effective solution for fine‑grained multimodal understanding.

Comments & Academic Discussion

Loading comments...

Leave a Comment