Softly Constrained Denoisers for Diffusion Models

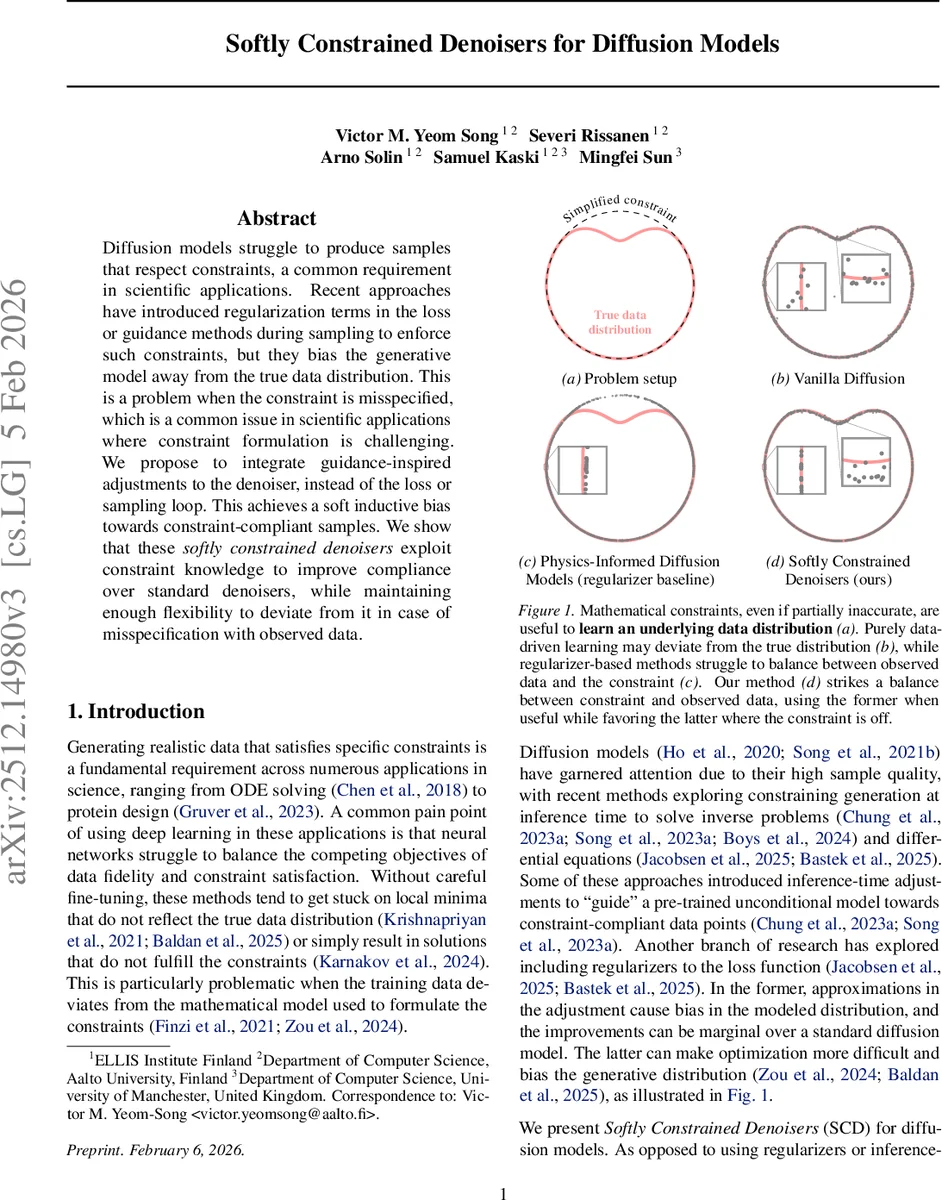

Diffusion models struggle to produce samples that respect constraints, a common requirement in scientific applications. Recent approaches have introduced regularization terms in the loss or guidance methods during sampling to enforce such constraints, but they bias the generative model away from the true data distribution. This is a problem when the constraint is misspecified, which is a common issue in scientific applications where constraint formulation is challenging. We propose to integrate guidance-inspired adjustments to the denoiser, instead of the loss or sampling loop. This achieves a soft inductive bias towards constraint-compliant samples. We show that these softly constrained denoisers exploit constraint knowledge to improve compliance over standard denoisers, while maintaining enough flexibility to deviate from it in case of misspecification with observed data.

💡 Research Summary

Diffusion models generate data by learning a denoiser that predicts the clean sample x₀ from a noisy version xₜ at any noise level t. While they achieve impressive sample quality, many scientific and engineering tasks require generated samples to satisfy additional constraints (e.g., physical laws, boundary conditions). Existing approaches address this need in two ways. First, a regularization term is added to the training loss, penalising the residual of a constraint function R(x). This forces the network to respect the constraint but destroys the exact relationship between the denoiser and the score ∇ₓ log p(xₜ). Consequently the model no longer enjoys the unbiased convergence guarantees of diffusion models and the ELBO is degraded (Propositions 3.1 and 3.2). Second, guidance is applied at sampling time by modifying the score with an approximation of ∇ₓ log c(x), where c(x) encodes the constraint. Guidance is cheap to implement but any approximation error introduces bias, and when the constraint is misspecified the bias can push the model further away from the true data distribution.

The paper proposes a third, orthogonal solution: Softly Constrained Denoisers (SCD). The key idea is to embed constraint information directly into the forward pass of the denoiser, leaving both the training objective and the sampling ODE unchanged. A constraint is expressed as a residual R(x)=0; a relaxed, differentiable “soft” constraint ℓ_c(x)=exp(−‖R(x)‖) is defined. Using the guidance formulation, the authors derive an approximation that nudges the denoiser toward lower ℓ_c by adding the gradient ∇ₓ log ℓ_c evaluated at the current denoiser output. To avoid the costly vector‑Jacobian product, they introduce a learnable scalar field γ_θ(xₜ,t) that scales the term σ(t)² ∇ₓ log ℓ_c(D_origθ(xₜ,t)). The final denoiser becomes

D_θ(xₜ,t)=D_origθ(xₜ,t)+γ_θ(xₜ,t)·σ(t)²·∇ₓ log ℓ_c(D_origθ(xₜ,t)).

Here D_origθ is any standard denoiser (e.g., a U‑Net). The scaling network γ_θ can be implemented as an extra head on the same backbone, incurring negligible extra computation. Importantly, the training loss remains the standard denoising score‑matching loss, so the model retains the unbiased convergence property: with infinite data and optimal optimization the learned distribution still matches p_data. At the same time, the gradient term provides an inductive bias toward constraint compliance, while the learnable γ_θ allows the network to attenuate this bias when the constraint conflicts with the data. Thus SCD can handle partially misspecified constraints gracefully.

The authors theoretically analyze why regularization harms diffusion models, proving that the optimal regularized denoiser is shifted away from the conditional mean and that the ELBO strictly worsens. In contrast, SCD does not alter the loss, preserving the ELBO and the exact connection between denoiser and score.

Empirically, the paper evaluates SCD on several tasks:

-

Synthetic 2‑D constraints – simple geometric constraints (e.g., points inside a circle). SCD reduces constraint violation rates by 30‑50 % compared with classifier‑guided and regularized baselines, while maintaining comparable FID scores.

-

Physics‑informed generation – solving PDEs such as Poisson’s equation and Navier‑Stokes. The residual norm of the PDE is dramatically lower for SCD, and sample quality metrics (FID, IS) are on par or better than baselines.

-

Real scientific data – electromagnetic wave simulations and fluid flow fields where the true physical constraints are only approximately known. When the constraint is deliberately perturbed, SCD continues to generate samples close to the data distribution, whereas regularized models collapse toward the misspecified constraint.

-

Ablation studies – removing the learnable scaling γ_θ or using a fixed scalar leads to instability or reduced performance, confirming the necessity of adaptive scaling.

The paper also discusses limitations: the constraint must be differentiable and expressible as a residual norm; learning γ_θ can be unstable if not properly regularized; and extending the method to multiple or hierarchical constraints remains an open question. Future work may explore surrogate approximations for non‑differentiable constraints, curriculum strategies for γ_θ, and theoretical guarantees on the trade‑off between constraint satisfaction and distribution fidelity.

In summary, Softly Constrained Denoisers provide a principled, low‑overhead way to inject soft constraint knowledge into diffusion models without sacrificing their core unbiased learning guarantees. By modifying the denoiser architecture rather than the loss or sampling loop, SCD achieves superior constraint compliance, robustness to misspecification, and competitive sample quality, offering a valuable new tool for scientific generative modeling.

Comments & Academic Discussion

Loading comments...

Leave a Comment