MindDrive: A Vision-Language-Action Model for Autonomous Driving via Online Reinforcement Learning

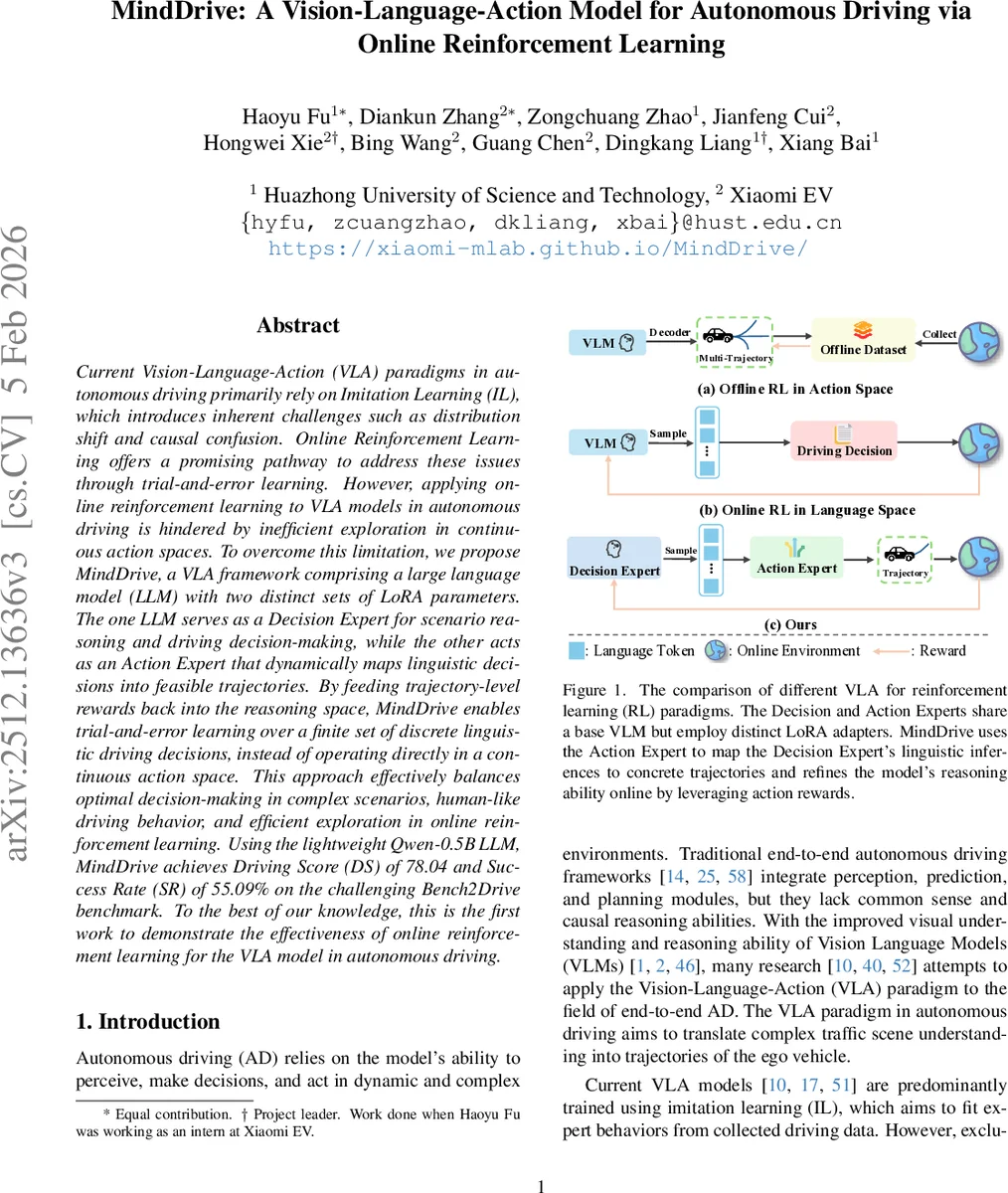

Current Vision-Language-Action (VLA) paradigms in autonomous driving primarily rely on Imitation Learning (IL), which introduces inherent challenges such as distribution shift and causal confusion. Online Reinforcement Learning offers a promising pathway to address these issues through trial-and-error learning. However, applying online reinforcement learning to VLA models in autonomous driving is hindered by inefficient exploration in continuous action spaces. To overcome this limitation, we propose MindDrive, a VLA framework comprising a large language model (LLM) with two distinct sets of LoRA parameters. The one LLM serves as a Decision Expert for scenario reasoning and driving decision-making, while the other acts as an Action Expert that dynamically maps linguistic decisions into feasible trajectories. By feeding trajectory-level rewards back into the reasoning space, MindDrive enables trial-and-error learning over a finite set of discrete linguistic driving decisions, instead of operating directly in a continuous action space. This approach effectively balances optimal decision-making in complex scenarios, human-like driving behavior, and efficient exploration in online reinforcement learning. Using the lightweight Qwen-0.5B LLM, MindDrive achieves Driving Score (DS) of 78.04 and Success Rate (SR) of 55.09% on the challenging Bench2Drive benchmark. To the best of our knowledge, this is the first work to demonstrate the effectiveness of online reinforcement learning for the VLA model in autonomous driving.

💡 Research Summary

MindDrive introduces a novel Vision‑Language‑Action (VLA) framework for autonomous driving that leverages online reinforcement learning (RL) to overcome the inherent limitations of imitation‑learning‑only (IL) approaches. The core idea is to shift exploration from the high‑dimensional continuous trajectory space to a compact discrete language‑based decision space. To achieve this, the authors employ a large language model (LLM) equipped with two separate LoRA adapters, creating a “Decision Expert” and an “Action Expert”. Both experts share the same base LLM weights, preserving world knowledge, but each LoRA module specializes in a distinct function.

The Decision Expert receives multi‑view visual inputs and natural‑language navigation instructions, then generates high‑level meta‑actions (e.g., “turn left”, “slow down”). The Action Expert takes these meta‑actions and, conditioned on the same visual context, produces concrete vehicle trajectories expressed as sequences of speed and path waypoints. This two‑stage design decouples high‑level reasoning from low‑level control while maintaining a tight, learnable mapping between language and motion.

Training proceeds in two phases. First, an imitation‑learning (IL) stage aligns meta‑actions with expert trajectories using a curated dataset of language‑action pairs. The IL stage establishes a one‑to‑one correspondence, ensuring that the Action Expert can generate high‑quality, human‑like trajectories for any meta‑action produced by the Decision Expert. Second, an online RL stage runs in a closed‑loop CARLA simulator. The system interacts with the environment, receives scalar rewards that encode task success, collisions, traffic‑rule violations, etc., and feeds these rewards back to update only the Decision Expert’s LoRA parameters. Because the Action Expert already provides feasible trajectories, the RL agent explores a dramatically reduced action space, leading to far more efficient learning.

The authors formalize the problem as a Markov Decision Process (MDP) ⟨S, A, P, R, γ⟩ where the state sₜ is a compact “scene token” pre‑computed from visual and textual inputs, minimizing memory overhead and enabling large‑batch updates. The policy π_d (Decision Expert) selects a meta‑action, while π_g (Action Expert) deterministically maps it to a trajectory. The objective J(θ)=E_{τ∼π_d}

Comments & Academic Discussion

Loading comments...

Leave a Comment