Data Heterogeneity and Forgotten Labels in Split Federated Learning

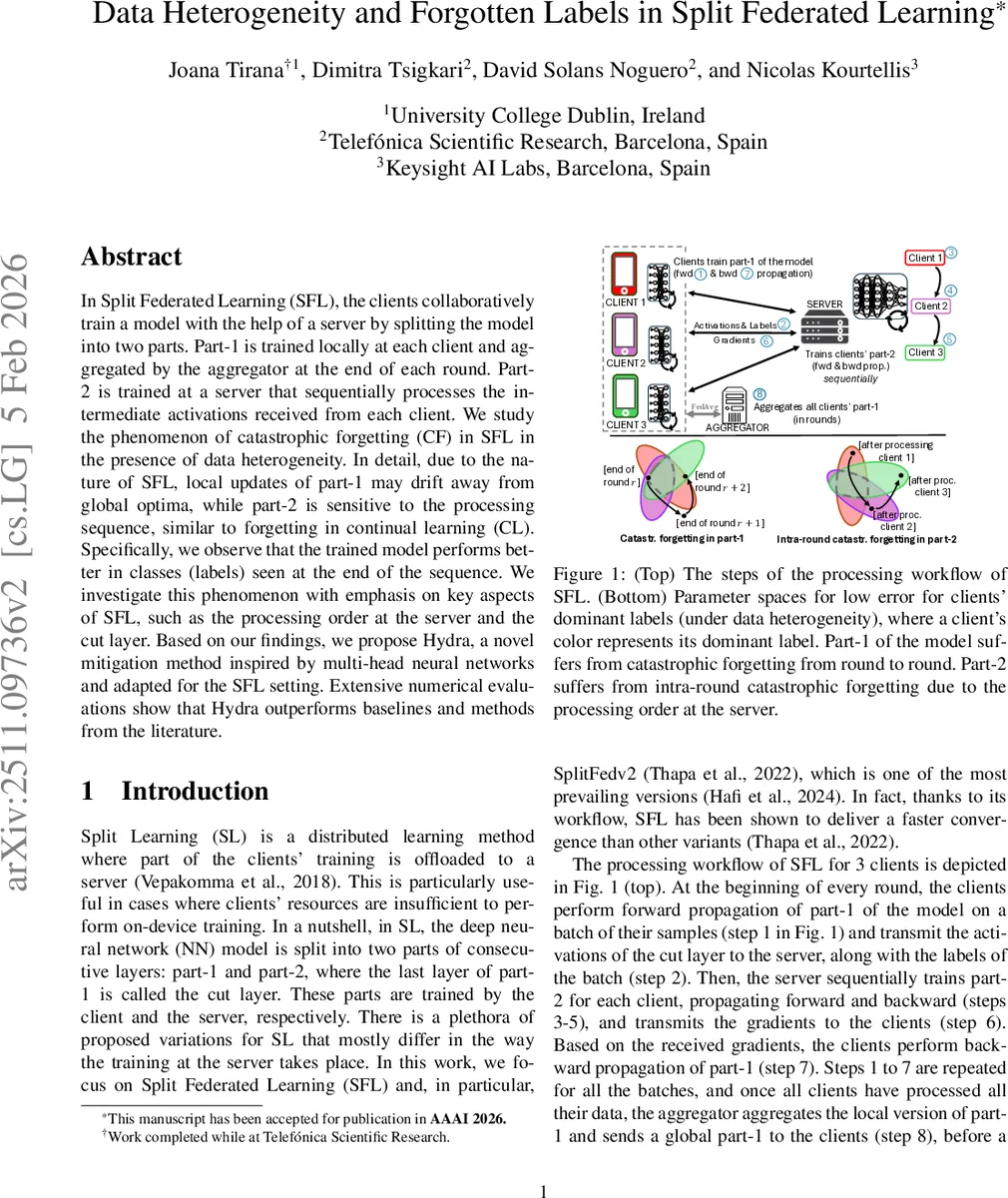

In Split Federated Learning (SFL), the clients collaboratively train a model with the help of a server by splitting the model into two parts. Part-1 is trained locally at each client and aggregated by the aggregator at the end of each round. Part-2 is trained at a server that sequentially processes the intermediate activations received from each client. We study the phenomenon of catastrophic forgetting (CF) in SFL in the presence of data heterogeneity. In detail, due to the nature of SFL, local updates of part-1 may drift away from global optima, while part-2 is sensitive to the processing sequence, similar to forgetting in continual learning (CL). Specifically, we observe that the trained model performs better in classes (labels) seen at the end of the sequence. We investigate this phenomenon with emphasis on key aspects of SFL, such as the processing order at the server and the cut layer. Based on our findings, we propose Hydra, a novel mitigation method inspired by multi-head neural networks and adapted for the SFL setting. Extensive numerical evaluations show that Hydra outperforms baselines and methods from the literature.

💡 Research Summary

Split Federated Learning (SFL) partitions a deep neural network into a client‑side Part‑1 and a server‑side Part‑2. Clients train Part‑1 locally on their private data, send the cut‑layer activations (and labels) to the server, and the server processes these activations sequentially to update Part‑2. While prior work has mainly focused on the drift of Part‑1 caused by federated averaging, this paper uncovers a previously unstudied form of catastrophic forgetting that occurs inside each training round: the processing order of clients at the server leads to “intra‑round catastrophic forgetting” (intra‑CF) in Part‑2.

When data are non‑IID—particularly when each client has a dominant label—the model consistently performs better on the labels of the clients processed last, while earlier‑processed labels suffer a drop in accuracy. This phenomenon mirrors forgetting in continual learning (CL) but differs because SFL’s data stream is fixed and cyclic: every client’s activations are revisited each round, and repetitions of the same label can occur within a round depending on the order.

The authors examine two server‑side ordering policies: (i) random (first‑come‑first‑served) and (ii) cyclic, where clients are grouped by their dominant label and processed in a fixed label‑wise sequence each round. Experiments across MobileNetV1 and ResNet101, and datasets CIFAR‑10/100, SVHN, TinyImageNet, show that the cyclic order yields higher global accuracy and lower forgetting metrics (Backward Transfer, BW, and Performance Gap, PG). Moreover, the scale factor ϕ (the number of clients sharing the same dominant label) influences forgetting: smaller ϕ (more label diversity in the sequence) reduces PG, whereas large ϕ causes the server to see many consecutive samples of the same label, leading to over‑learning of that label and severe forgetting of earlier ones.

Cut‑layer depth also plays a crucial role. A shallow cut (few early layers in Part‑1, most layers in Part‑2) amplifies intra‑CF because the server controls most parameters; a deeper cut shifts the burden to Part‑1, where traditional federated drift dominates. The paper therefore highlights that both the processing order and the cut‑layer choice are key levers for controlling forgetting in SFL.

Existing mitigation techniques from CL (e.g., Elastic Weight Consolidation) or FL (e.g., Scaffold, knowledge replay) are unsuitable for SFL: they either rely on temporal data streams or require access to the full model, which contradicts SFL’s split architecture and privacy constraints. To address intra‑CF, the authors propose Hydra, a multi‑head extension applied to the last layers of Part‑2. Clients are first grouped by their dominant label; each group updates its own head while sharing the underlying backbone. After training, the heads are aggregated (averaged or weighted) for inference. This design confines repeated updates for a given label to its dedicated head, thereby preventing the label from being overwritten when other labels are processed later. At the same time, the shared backbone preserves the benefits of joint learning with minimal additional memory or computation.

Hydra is evaluated under three heterogeneity partitioning schemes (Dominant‑Label, Dirichlet, Sharding) and compared against baselines such as EWC, Scaffold, SplitFed‑v1/v2, and recent split‑learning forgetting mitigations. Across all settings, Hydra improves global accuracy by 4–7 percentage points, reduces BW and PG substantially, and narrows the per‑label performance gap by more than 30 %. The gains are especially pronounced when ϕ is large or when a shallow cut layer is used, confirming that Hydra directly counteracts the intra‑CF mechanism identified earlier.

In summary, the paper makes four major contributions: (1) identification and systematic characterization of intra‑round catastrophic forgetting in SFL; (2) empirical evidence that processing order and cut‑layer depth critically affect forgetting; (3) introduction of new evaluation metrics (PG, BW, per‑position accuracy) tailored to the SFL setting; and (4) the Hydra multi‑head mitigation strategy, which demonstrably restores balanced performance across labels while preserving the efficiency and privacy advantages of split federated learning. The authors also release their implementation on GitHub, facilitating reproducibility and future extensions to more complex heterogeneous or multi‑task scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment