RefAM: Attention Magnets for Zero-Shot Referral Segmentation

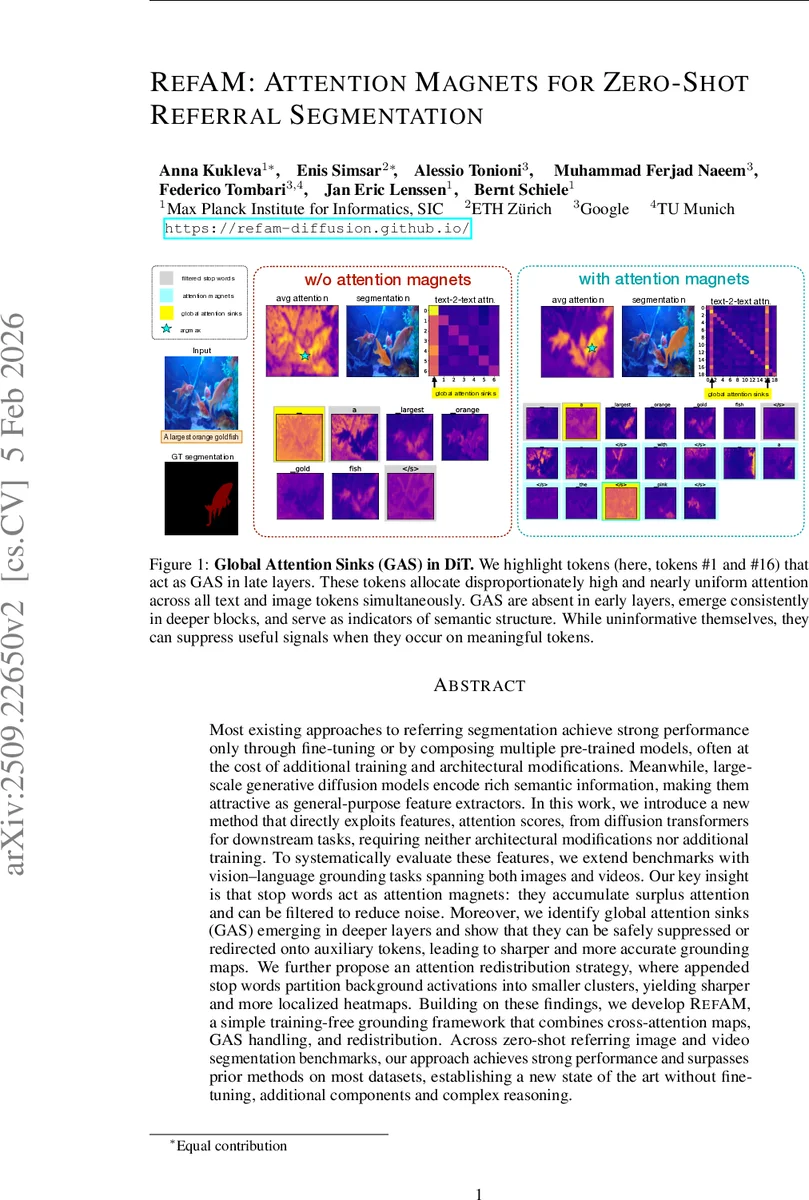

Most existing approaches to referring segmentation achieve strong performance only through fine-tuning or by composing multiple pre-trained models, often at the cost of additional training and architectural modifications. Meanwhile, large-scale generative diffusion models encode rich semantic information, making them attractive as general-purpose feature extractors. In this work, we introduce a new method that directly exploits features, attention scores, from diffusion transformers for downstream tasks, requiring neither architectural modifications nor additional training. To systematically evaluate these features, we extend benchmarks with vision-language grounding tasks spanning both images and videos. Our key insight is that stop words act as attention magnets: they accumulate surplus attention and can be filtered to reduce noise. Moreover, we identify global attention sinks (GAS) emerging in deeper layers and show that they can be safely suppressed or redirected onto auxiliary tokens, leading to sharper and more accurate grounding maps. We further propose an attention redistribution strategy, where appended stop words partition background activations into smaller clusters, yielding sharper and more localized heatmaps. Building on these findings, we develop RefAM, a simple training-free grounding framework that combines cross-attention maps, GAS handling, and redistribution. Across zero-shot referring image and video segmentation benchmarks, our approach achieves strong performance and surpasses prior methods on most datasets, establishing a new state of the art without fine-tuning, additional components and complex reasoning.

💡 Research Summary

The paper introduces RefAM, a training‑free framework for referring (or “referral”) segmentation that directly leverages the cross‑attention maps produced by large‑scale diffusion transformers (DiTs). Unlike prior zero‑shot approaches that either rely on CLIP embeddings, generate object proposals, or fine‑tune additional modules, RefAM uses the attention scores already computed during the diffusion denoising process. The authors observe two emergent phenomena in DiTs: (1) stop‑words such as “a”, “the”, “with” consistently attract a disproportionate amount of attention, acting as “attention magnets”; (2) in deeper layers a small set of tokens become Global Attention Sinks (GAS), allocating uniformly high attention across both text and image tokens while carrying little semantic content. Both phenomena are treated as opportunities rather than bugs.

The method proceeds in three stages. First, for a given image (or video frame) and a referring expression, the model extracts cross‑attention maps M(k) from multiple layers and heads for each token k. Second, the original expression is augmented with a predefined list of stop‑words (the “attention magnets”). During aggregation, the attention maps belonging to these stop‑words are discarded, while the presence of the extra stop‑words forces surplus background attention to be absorbed by them, effectively cleaning the remaining maps. Simultaneously, GAS tokens are automatically identified (by measuring per‑token attention mass) and either zero‑ed out or redirected onto auxiliary tokens, preventing them from suppressing useful signals. The filtered maps are averaged to produce a heatmap H_e; the argmax of H_e yields a query point p_ref. Finally, this point is fed to a foundation segmentation model such as SAM or SAM2 to generate the final mask. For videos, the point from the first frame is propagated across time using SAM2, requiring no additional temporal modeling.

Extensive experiments cover both static image benchmarks (RefCOCO, RefCOCO+, RefCOCOg) and video benchmarks (A2D Sentences, JHMDB Sentences). RefAM consistently outperforms prior training‑free baselines (e.g., Global‑Local, Ref‑Diff, AL‑Ref‑SAM) and often matches or exceeds methods that involve fine‑tuning or multi‑stage pipelines. Ablation studies demonstrate that (i) removing the stop‑word augmentation reduces IoU by 3–5 %, (ii) suppressing GAS improves heatmap sharpness and downstream accuracy, and (iii) filtering up to 60 % of the early transformer blocks has negligible impact, confirming that semantic alignment emerges only after the middle layers.

The contribution of the paper is threefold: (1) a systematic analysis of global attention sinks in diffusion transformers and evidence that they are semantically empty; (2) the novel use of stop‑words as attention magnets to redistribute background activations, yielding cleaner grounding signals; (3) the construction of RefAM, a simple yet effective zero‑shot grounding pipeline that requires no model modifications, additional training data, or complex reasoning. The work demonstrates that large diffusion models already encode rich multimodal semantics, and that careful manipulation of their attention dynamics can unlock strong performance for vision‑language tasks without any extra learning. Future directions include learning optimal “magnet” token sets, extending the approach to other multimodal tasks such as visual question answering, and exploring dynamic GAS mitigation strategies for even finer‑grained localization.

Comments & Academic Discussion

Loading comments...

Leave a Comment