Dynamic Context Adaptation for Consistent Role-Playing Agents with Retrieval-Augmented Generations

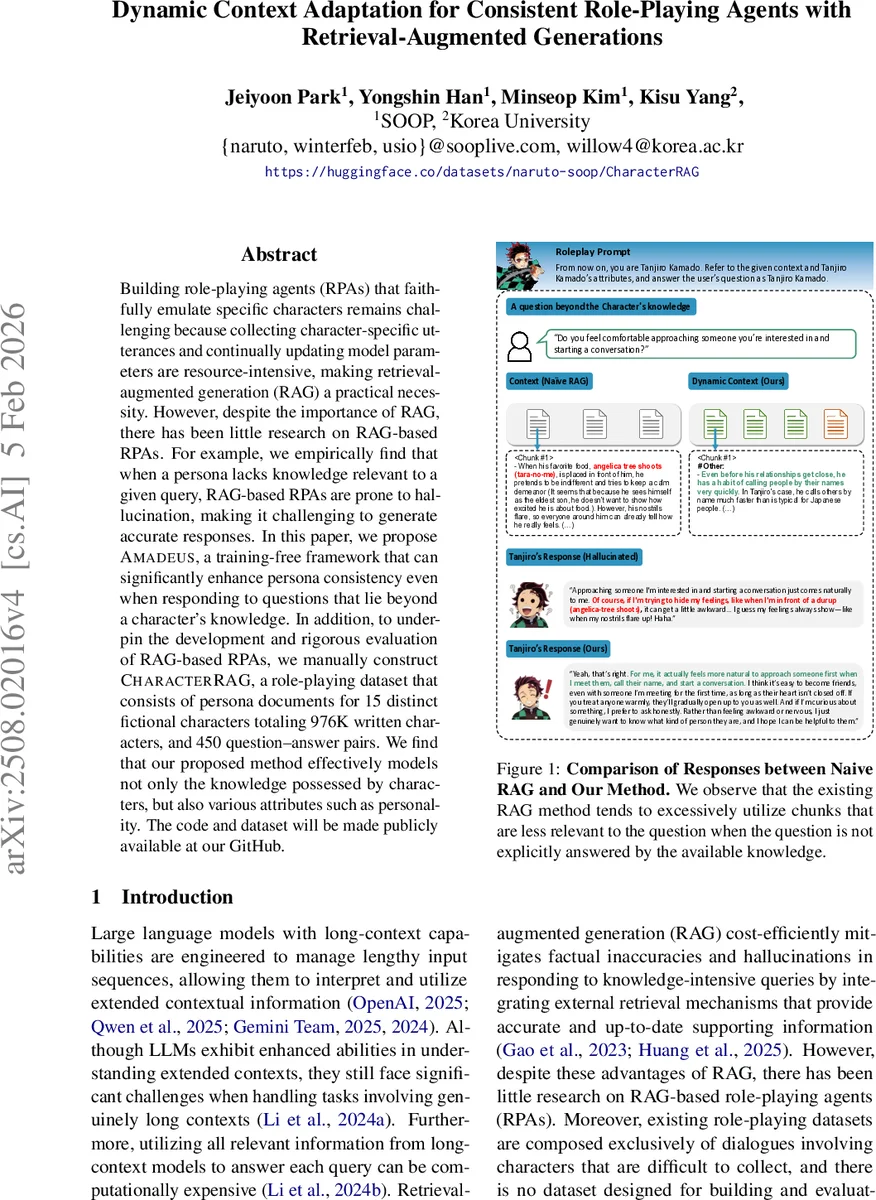

Building role-playing agents (RPAs) that faithfully emulate specific characters remains challenging because collecting character-specific utterances and continually updating model parameters are resource-intensive, making retrieval-augmented generation (RAG) a practical necessity. However, despite the importance of RAG, there has been little research on RAG-based RPAs. For example, we empirically find that when a persona lacks knowledge relevant to a given query, RAG-based RPAs are prone to hallucination, making it challenging to generate accurate responses. In this paper, we propose Amadeus, a training-free framework that can significantly enhance persona consistency even when responding to questions that lie beyond a character’s knowledge. In addition, to underpin the development and rigorous evaluation of RAG-based RPAs, we manually construct CharacterRAG, a role-playing dataset that consists of persona documents for 15 distinct fictional characters totaling 976K written characters, and 450 question-answer pairs. We find that our proposed method effectively models not only the knowledge possessed by characters, but also various attributes such as personality.

💡 Research Summary

The paper tackles two fundamental challenges in building role‑playing agents (RPAs): the high cost of collecting character‑specific utterances and continuously fine‑tuning language models, and the propensity of retrieval‑augmented generation (RAG) systems to hallucinate when a persona lacks direct knowledge about a user query. To address these issues, the authors introduce a training‑free framework called Amadeus and a new evaluation dataset named CharacterRAG.

CharacterRAG comprises 15 well‑known fictional characters (e.g., Tanjiro, Megumin) with a total of 976 K characters of persona text and 450 question‑answer pairs. Each persona is organized around six canonical role‑playing attributes: Activity, Belief & Value, Demographic Information, Psychological Traits, Skill & Expertise, and Social Relationships. The dataset was manually curated from Korean wiki sources, stripped of editorial speculation, and rewritten from the perspective of each character, ensuring that the information reflects the character’s own voice rather than external commentary.

Amadeus consists of three sequential modules designed to work with any off‑the‑shelf large language model (LLM) and external retriever, without any additional fine‑tuning:

-

Adaptive Context‑aware Text Splitter (ACTS) – Instead of naïvely chopping a persona into fixed‑size chunks, ACTS first determines the maximum paragraph length (l_max) in the persona, then creates overlapping windows of length l_max with an overlap of l_max/2. Each chunk is appended with its hierarchical header (e.g., “Tanjiro’s actions → 4.1.1”), preserving intra‑document context and reducing information loss. The operation runs in O(N) time, making it scalable to large persona files.

-

Guided Selection (GS) – GS goes beyond pure semantic similarity. After sorting chunks by similarity to the user query, it iteratively feeds each chunk to an LLM that decides whether the chunk can be used to infer the answer, even if the answer is not explicitly stated. Chunks deemed useful are added to a “slot” until it is full; if no chunk passes the inference test, the top‑K most similar chunks are returned as a fallback. This step enables the system to retrieve implicit cues such as personality traits or values that are embedded in narrative descriptions, thereby mitigating the over‑use of irrelevant chunks that commonly causes hallucinations in standard RAG pipelines.

-

Attribute Extractor (AE) – From the set of chunks selected by GS, AE extracts two high‑level attribute groups: (i) Belief & Value, which capture a character’s core principles and ideological stance, and (ii) Psychological Traits, encompassing personality, emotional tendencies, and cognitive preferences. These attributes are injected directly into the final prompt, guiding the LLM to generate responses that are not only factually grounded but also aligned with the character’s internal worldview.

The authors evaluate Amadeus against five contemporary RAG baselines—Naïve RAG, CRA G, Raptor, Adaptive RAG, and LightRAG—using three LLM back‑ends (GPT‑4.1, Gemma‑3‑27B, Qwen‑3‑32B with chain‑of‑thought). Evaluation metrics include exact answer accuracy, hallucination rate, and personality consistency measured via 60 MBTI items and 120 BFI items. Human‑in‑the‑loop interviews supplement automatic metrics.

Results show that Amadeus consistently reduces hallucinations by more than 30 % relative to the strongest baseline and improves personality‑consistency scores dramatically (MBTI: 0.68 → 0.84; BFI: 0.71 → 0.86). The gains are especially pronounced for queries that lie outside the explicit knowledge stored in the persona; in these cases, the attribute information supplied by AE proves crucial for producing coherent, character‑consistent answers.

The paper also discusses limitations. CharacterRAG is currently limited to Korean‑language sources, so cross‑cultural generalization remains untested. The GS stage requires repeated LLM calls, which can increase inference latency and cost. Future work is suggested on multimodal extensions (image, audio), dynamic attribute updating via user feedback, and more efficient routing mechanisms to lower computational overhead.

In summary, Amadeus offers a practical, model‑agnostic pipeline that transforms a raw persona document into a context‑rich, attribute‑aware prompt, enabling RAG‑based RPAs to answer out‑of‑knowledge queries while preserving the target character’s personality and values. The publicly released CharacterRAG dataset provides a solid benchmark for further research on retrieval‑augmented role‑playing, and the empirical results demonstrate that the proposed approach markedly outperforms existing RAG methods in both factual accuracy and persona fidelity.

Comments & Academic Discussion

Loading comments...

Leave a Comment