Generalizable Trajectory Prediction via Inverse Reinforcement Learning with Mamba-Graph Architecture

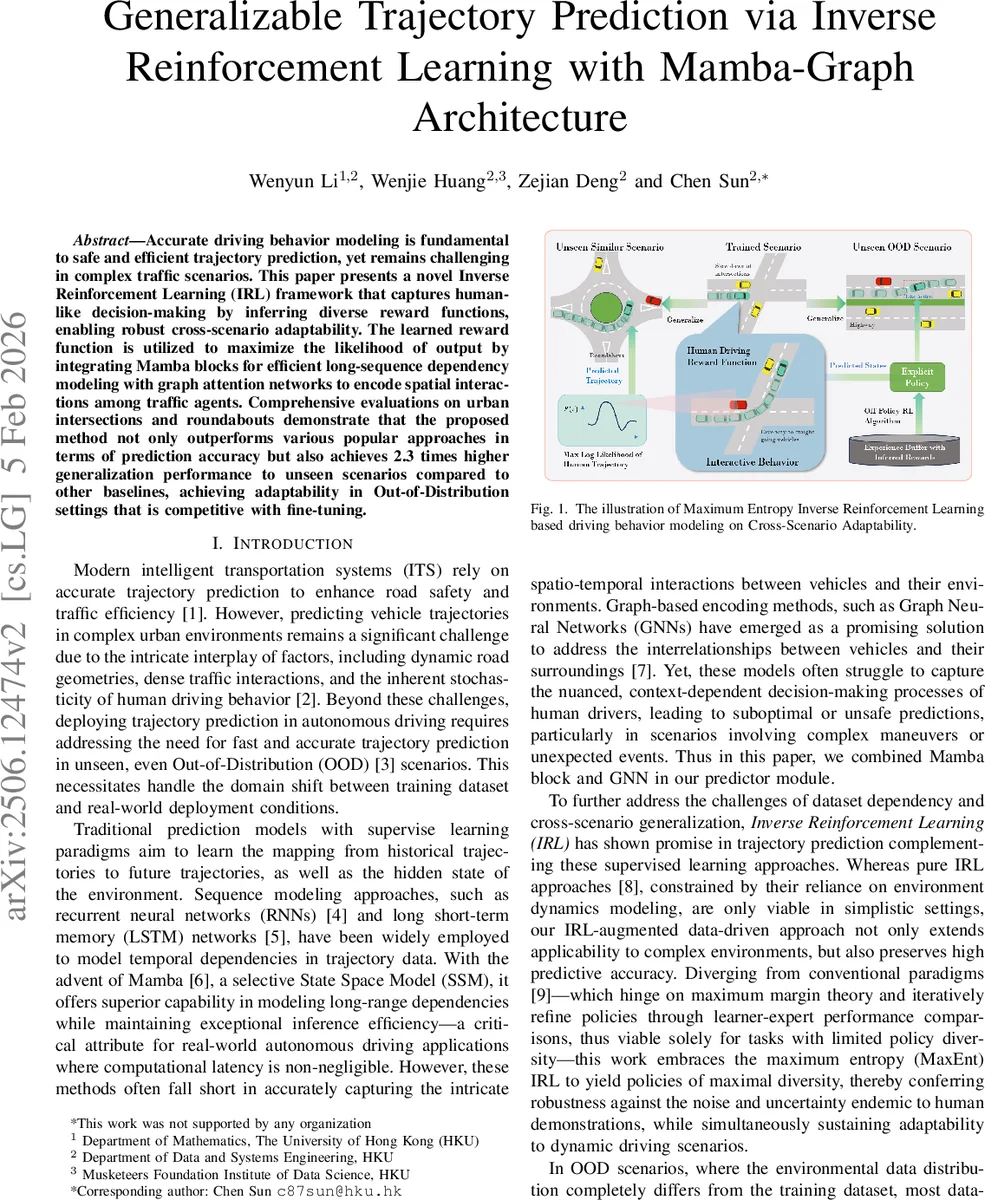

Accurate driving behavior modeling is fundamental to safe and efficient trajectory prediction, yet remains challenging in complex traffic scenarios. This paper presents a novel Inverse Reinforcement Learning (IRL) framework that captures human-like decision-making by inferring diverse reward functions, enabling robust cross-scenario adaptability. The learned reward function is utilized to maximize the likelihood of output by integrating Mamba blocks for efficient long-sequence dependency modeling with graph attention networks to encode spatial interactions among traffic agents. Comprehensive evaluations on urban intersections and roundabouts demonstrate that the proposed method not only outperforms various popular approaches in terms of prediction accuracy but also achieves 2.3 times higher generalization performance to unseen scenarios compared to other baselines, achieving adaptability in Out-of-Distribution settings that is competitive with fine-tuning.

💡 Research Summary

The paper introduces a novel trajectory‑prediction framework that tightly integrates three state‑of‑the‑art components: (1) the Mamba block, a selective state‑space model (SSM) designed for efficient long‑range sequence modeling; (2) a Graph Attention Network (GAT) that encodes spatial interactions among traffic agents; and (3) a Maximum‑Entropy Inverse Reinforcement Learning (MaxEnt IRL) module that learns a reward function directly from human driving demonstrations.

Problem motivation

Autonomous driving systems must predict future vehicle positions under diverse, often unseen, traffic conditions. Conventional supervised models (RNN/LSTM, Transformers) either struggle with very long temporal dependencies or fail to capture the nuanced decision‑making process of human drivers. Moreover, domain shift between training data and real‑world deployment (Out‑of‑Distribution, OOD) leads to severe performance drops. The authors argue that learning a human‑like reward function can provide scenario‑agnostic guidance, while a powerful sequence model can generate accurate future states.

Architecture

The predictor follows an encoder‑decoder scheme. The encoder consists of a GRU that processes the historical trajectory, followed by a GAT that aggregates information from neighboring vehicles represented as a heterogeneous graph (nodes = agents, edges = relative distance, velocity, heading, etc.). Attention weights are computed with a LeakyReLU‑based scoring function, allowing the model to focus on the most influential neighbors.

The decoder is built exclusively from Mamba blocks. Each block updates a hidden state h_t using discrete SSM equations: h_t = Ā h_{t‑1} + B̄ ŝ_{t‑1}, ŷ_t = C h_t. This formulation yields linear‑time inference while preserving the ability to capture dependencies over hundreds of timesteps—crucial for real‑time autonomous driving where latency must be low.

MaxEnt IRL component

Human demonstrations are transformed into state‑action pairs (s_t, a_t), where the “action” is defined as the geometric displacement between consecutive positions (Δx). The reward function R(s,a;θ_RF) is parameterized by a deep neural network rather than a linear model. Following the MaxEnt principle, the likelihood of a trajectory τ is proportional to exp(∑t R(s_t,a_t;θ_RF)), normalized by a partition function Z. Because Z is intractable, the authors approximate it via importance sampling with Monte‑Carlo draws from the demonstration set, assuming a uniform policy for simplicity. The loss for the reward network is L_RF = –E{τ∼π*}

Comments & Academic Discussion

Loading comments...

Leave a Comment