Physics-Driven Local-Whole Elastic Deformation Modeling for Point Cloud Representation Learning

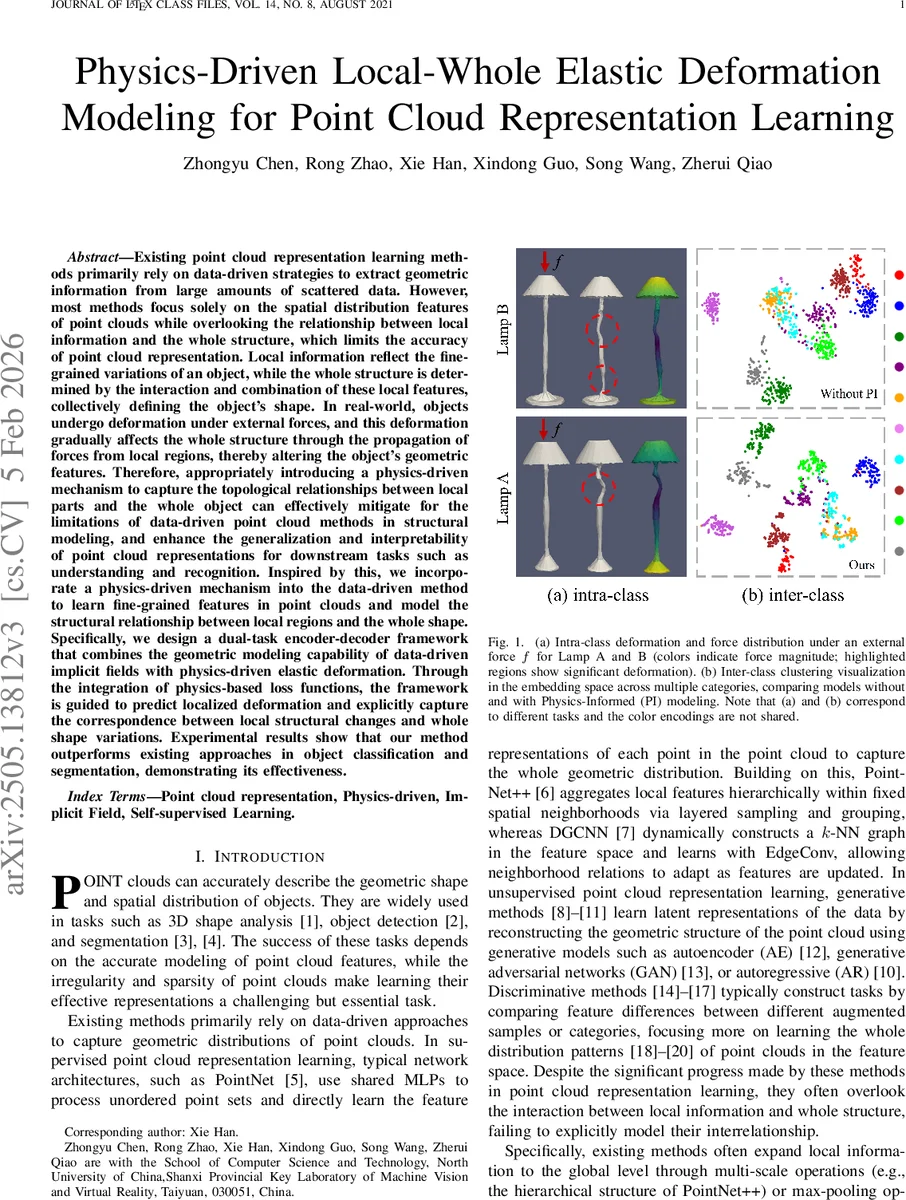

Existing point cloud representation learning methods primarily rely on data-driven strategies to extract geometric information from large amounts of scattered data. However, most methods focus solely on the spatial distribution features of point clouds while overlooking the relationship between local information and the whole structure, which limits the accuracy of point cloud representation. Local information reflects the fine-grained variations of an object, while the whole structure is determined by the interaction and combination of these local features, which together define the object’s shape. In the real world, objects deform under external forces, and this deformation gradually affects the whole structure as forces propagate from local regions, thereby altering the object’s geometric features. Therefore, introducing a physics-driven mechanism to capture the topological relationships between local parts and the whole object can effectively mitigate the limitations of data-driven point cloud methods in structural modeling. It can also enhance the generalization and interpretability of point cloud representations for downstream tasks such as understanding and recognition. Inspired by this, we incorporate a physics-driven mechanism into a data-driven method to learn fine-grained features in point clouds and to model the structural relationship between local regions and the whole shape. Specifically, we design a dual-task encoder-decoder framework that combines the geometric modeling capability of data-driven implicit fields with physics-driven elastic deformation. Physics-based loss functions guide the framework to predict localized deformation and to explicitly capture the correspondence between local structural changes and whole-shape variations.

💡 Research Summary

The paper addresses a notable gap in point‑cloud representation learning: the lack of explicit modeling of the relationship between local geometric details and the global shape of an object. While many recent methods (PointNet, PointNet++, DGCNN, contrastive approaches, etc.) achieve impressive performance by learning from large datasets, they typically treat the point cloud as a static distribution and rely on hierarchical or graph‑based feature aggregation to indirectly capture local‑to‑global interactions. This indirect treatment ignores the physical reality that, in the real world, objects deform under external forces: small local stresses propagate through structural connections, producing a global shape response. The authors propose to embed this physical insight directly into the learning pipeline by coupling a data‑driven implicit field representation with a physics‑informed elastic deformation model.

The architecture is a dual‑task encoder‑decoder framework. An encoder Φ_θ (implemented with PointNet or DGCNN) processes the raw point cloud Pᵢ and outputs a compact latent vector z. This latent code feeds two decoders:

- Implicit Feature Decoder (Ψ_η): predicts an implicit surface function S(q) for arbitrary query points q in 3‑D space. The decoder is a three‑layer MLP that takes z_q = z ⊕ q (the latent code concatenated with the query coordinates) and outputs the unsigned distance δᵢ(z_q) from q to the surface. Training minimizes the L₂ distance between predicted and ground‑truth distances, encouraging the network to learn a continuous representation of the whole shape.

- Physics‑Driven Deformation Decoder (Ψ_phys): predicts a displacement field û over the volumetric domain represented by a tetrahedral mesh Ω_h, which is derived from the point cloud via 3‑D Delaunay triangulation. The mesh supplies the structural topology required to compute strain ε and stress σ and to formulate the linear elasticity equations. A physics‑parameter vector τ encodes material properties (Young’s modulus E, Poisson’s ratio ν), external loading f, and boundary conditions Γ_D. This vector is concatenated with a physics‑specific latent code z_phys (derived from the same encoder) to condition the deformation prediction.
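The input assembly and loss of the Implicit Feature Decoder can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation. The following are hypothetical choices:

- the helper names (`make_mlp`, `mlp_forward`, `predict_udf`, `implicit_loss`);
- the ReLU activations and the hidden width of 64;
- the softplus used to keep the predicted distance non‑negative.

Only the concatenation z_q = z ⊕ q, the three‑layer MLP, and the L₂ regression target come from the description above.

```python
import math
import random


def make_mlp(layer_sizes, seed=0):
    """Hypothetical helper: randomly initialised weights for a fully connected MLP."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def mlp_forward(layers, x):
    """Apply each linear layer; ReLU on hidden layers, identity on the last one."""
    h = list(x)
    for idx, (weights, biases) in enumerate(layers):
        h = [sum(w * v for w, v in zip(row, h)) + b for row, b in zip(weights, biases)]
        if idx < len(layers) - 1:
            h = [max(0.0, v) for v in h]
    return h


def softplus(x):
    """Numerically stable softplus, used here (an assumption) to keep distances >= 0."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def predict_udf(layers, z, q):
    """Unsigned distance delta(z_q) for query q, where z_q is z concatenated with q."""
    z_q = list(z) + list(q)
    return softplus(mlp_forward(layers, z_q)[0])


def implicit_loss(pred, target):
    """L2 regression loss: mean squared error between predicted and true distances."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


# Usage with a toy latent code (all values are illustrative).
latent_dim = 8
decoder = make_mlp([latent_dim + 3, 64, 64, 1])  # three linear layers
z = [0.1] * latent_dim
queries = [(0.0, 0.0, 0.0), (0.5, -0.2, 0.3)]
pred = [predict_udf(decoder, z, q) for q in queries]
loss = implicit_loss(pred, [0.4, 0.1])
```

Concatenating z with q lets one latent code describe the whole shape while the MLP is queried at arbitrarily many points, which is what makes the representation continuous.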
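The tetrahedral mesh Ω_h is what makes strain computable. On a linear (four‑node) tetrahedron, the displacement gradient is constant. It follows from the vertex positions and vertex displacements as ∇u = D_u D_x⁻¹, where the columns of D_x and D_u are the edge vectors x_k − x_0 and u_k − u_0.

The sketch below assumes this standard linear‑element discretization, which the summary does not spell out, and uses hypothetical helper names. In practice the mesh connectivity could come from SciPy's `scipy.spatial.Delaunay`, whose `simplices` attribute lists the four vertex indices of each tetrahedron for 3‑D input.

```python
def sub(a, b):
    """Component-wise difference of two 3-vectors."""
    return [ai - bi for ai, bi in zip(a, b)]


def from_columns(cols):
    """Build a row-major 3x3 matrix whose columns are the given 3-vectors."""
    return [[cols[j][i] for j in range(3)] for i in range(3)]


def matmul(a, b):
    """Product of two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def inv3(m):
    """Inverse of a 3x3 matrix via the adjugate; rejects degenerate tetrahedra."""
    det = (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
           - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
           + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))
    if abs(det) < 1e-12:
        raise ValueError("degenerate (zero-volume) tetrahedron")
    adj = [
        [m[1][1] * m[2][2] - m[1][2] * m[2][1], m[0][2] * m[2][1] - m[0][1] * m[2][2],
         m[0][1] * m[1][2] - m[0][2] * m[1][1]],
        [m[1][2] * m[2][0] - m[1][0] * m[2][2], m[0][0] * m[2][2] - m[0][2] * m[2][0],
         m[0][2] * m[1][0] - m[0][0] * m[1][2]],
        [m[1][0] * m[2][1] - m[1][1] * m[2][0], m[0][1] * m[2][0] - m[0][0] * m[2][1],
         m[0][0] * m[1][1] - m[0][1] * m[1][0]],
    ]
    return [[adj[i][j] / det for j in range(3)] for i in range(3)]


def tet_displacement_gradient(x, u):
    """Constant grad(u) on a linear tetrahedron: solves G @ D_x = D_u for G."""
    d_x = from_columns([sub(x[k], x[0]) for k in (1, 2, 3)])
    d_u = from_columns([sub(u[k], u[0]) for k in (1, 2, 3)])
    return matmul(d_u, inv3(d_x))


def small_strain(grad_u):
    """Infinitesimal strain: symmetric part of the displacement gradient."""
    return [[0.5 * (grad_u[i][j] + grad_u[j][i]) for j in range(3)] for i in range(3)]


# Usage: impose u = A x on the vertices; the recovered gradient should equal A.
A = [[0.010, 0.002, 0.000],
     [0.000, -0.003, 0.001],
     [0.004, 0.000, 0.005]]
verts = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.5, 1.5, 0.0), (0.3, 0.2, 1.0)]
disp = [[sum(A[i][j] * v[j] for j in range(3)) for i in range(3)] for v in verts]
grad = tet_displacement_gradient(verts, disp)
eps = small_strain(grad)
```

Because a linear field is reproduced exactly by a linear element, recovering A is a quick sanity check on the edge‑matrix construction.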

Two physics‑aware loss terms regularize the deformation branch:

- Data‑Fidelity Loss (L_data): enforces geometric consistency by penalizing the difference between the predicted displacement field and a reference displacement obtained from a conventional FEM solver (or synthetic ground truth). This ensures that the network’s output respects the observed deformation geometry.

- Physics‑Consistency Loss (L_phys): embeds the equilibrium condition of linear elasticity, ∇·σ + f = 0, directly into the loss. The stress is built from the predicted strain as σ = λ·tr(ε)I + 2μ·ε, using the Lamé parameters λ and μ derived from τ. Its divergence is then computed by automatic differentiation, and the residual ∇·σ + f with respect to the applied body force is penalized. This term forces the network to produce physically plausible stress‑strain relationships.
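A sketch of the physics‑consistency residual for a displacement field given as a Python function. It rests on these assumptions:

- central finite differences stand in for the automatic differentiation used by the authors;
- the Lamé parameters come from E and ν via the standard isotropic relations λ = Eν/((1+ν)(1−2ν)) and μ = E/(2(1+ν));
- the helper names, material values, and test field are hypothetical.

The check uses u = (c·x₁², 0, 0). Its stress divergence is analytically (c(2λ + 4μ), 0, 0), so a body force f = (−c(2λ + 4μ), 0, 0) should drive the residual to zero.

```python
def lame_parameters(E, nu):
    """Isotropic Lame parameters from Young's modulus E and Poisson's ratio nu."""
    lam = E * nu / ((1.0 + nu) * (1.0 - 2.0 * nu))
    mu = E / (2.0 * (1.0 + nu))
    return lam, mu


def shifted(x, j, delta):
    """Copy of point x with coordinate j shifted by delta."""
    y = list(x)
    y[j] += delta
    return y


def displacement_gradient(u, x, h):
    """Central-difference Jacobian G[i][j] = d u_i / d x_j (stand-in for autodiff)."""
    grad = [[0.0] * 3 for _ in range(3)]
    for j in range(3):
        up, um = u(shifted(x, j, h)), u(shifted(x, j, -h))
        for i in range(3):
            grad[i][j] = (up[i] - um[i]) / (2.0 * h)
    return grad


def stress(u, x, lam, mu, h):
    """Hooke's law sigma = lam * tr(eps) * I + 2 * mu * eps with small strain eps."""
    g = displacement_gradient(u, x, h)
    eps = [[0.5 * (g[i][j] + g[j][i]) for j in range(3)] for i in range(3)]
    tr = eps[0][0] + eps[1][1] + eps[2][2]
    return [[lam * tr * (1.0 if i == j else 0.0) + 2.0 * mu * eps[i][j]
             for j in range(3)] for i in range(3)]


def equilibrium_residual(u, f, x, lam, mu, h=1e-3):
    """r = div(sigma) + f at x, with (div sigma)_i = sum_j d sigma_ij / d x_j."""
    r = list(f(x))
    for j in range(3):
        s_plus = stress(u, shifted(x, j, h), lam, mu, h)
        s_minus = stress(u, shifted(x, j, -h), lam, mu, h)
        for i in range(3):
            r[i] += (s_plus[i][j] - s_minus[i][j]) / (2.0 * h)
    return r


def physics_loss(u, f, points, lam, mu):
    """Mean squared equilibrium residual over a set of collocation points."""
    total = 0.0
    for x in points:
        total += sum(ri * ri for ri in equilibrium_residual(u, f, x, lam, mu))
    return total / len(points)


# Usage with illustrative material values E = 1.0, nu = 0.3.
lam, mu = lame_parameters(1.0, 0.3)
c = 0.01


def u_field(x):
    return (c * x[0] ** 2, 0.0, 0.0)


def balancing_force(x):
    return (-c * (2.0 * lam + 4.0 * mu), 0.0, 0.0)


def no_force(x):
    return (0.0, 0.0, 0.0)


pts = [(0.1, 0.2, 0.3), (-0.4, 0.0, 0.25)]
loss_balanced = physics_loss(u_field, balancing_force, pts, lam, mu)
r_free = equilibrium_residual(u_field, no_force, pts[0], lam, mu)
```

With the balancing force, the residual vanishes to rounding error. Without it, the residual equals the unbalanced divergence, which is exactly what L_phys penalizes.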

The total loss is L_total = L_imp + α·L_data + β·L_phys, where L_imp is the implicit‑field distance loss and the weights α and β balance the geometric and physical objectives. During training, query points are sampled uniformly within the unit bounding box, with additional denser sampling near the surface to capture fine details. Because the encoder is shared, the latent representation must support both whole‑shape reconstruction and deformation prediction, which allows mutual reinforcement. Better global shape features improve deformation prediction, while physically consistent deformation cues guide the encoder toward representations that respect structural relationships.
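The query sampling and loss weighting described above can be sketched as follows. The summary does not report these details, so the following are assumptions for illustration:

- the unit bounding box is read as the side‑length‑one cube [−0.5, 0.5]³;
- near‑surface samples are surface points with Gaussian jitter of standard deviation `sigma`;
- α and β take the example values shown.

L_data is taken here as the mean squared Euclidean error between predicted and reference nodal displacements.

```python
import random


def sample_queries(surface_points, n_uniform, n_near, sigma=0.01, half_extent=0.5, seed=0):
    """Uniform samples in [-half_extent, half_extent]^3 plus jittered surface samples."""
    rng = random.Random(seed)
    uniform = [tuple(rng.uniform(-half_extent, half_extent) for _ in range(3))
               for _ in range(n_uniform)]
    near = []
    for _ in range(n_near):
        p = rng.choice(surface_points)
        near.append(tuple(coord + rng.gauss(0.0, sigma) for coord in p))
    return uniform + near


def data_loss(pred_disp, ref_disp):
    """L_data: mean squared Euclidean error between predicted and reference displacements."""
    total = 0.0
    for p, r in zip(pred_disp, ref_disp):
        total += sum((pi - ri) ** 2 for pi, ri in zip(p, r))
    return total / len(pred_disp)


def total_loss(l_imp, l_data, l_phys, alpha, beta):
    """L_total = L_imp + alpha * L_data + beta * L_phys."""
    return l_imp + alpha * l_data + beta * l_phys


# Usage with toy values (not results from the paper).
surface = [(0.25, 0.0, 0.0), (0.0, 0.25, 0.0), (0.0, 0.0, 0.25)]
queries = sample_queries(surface, n_uniform=1024, n_near=1024)
l_data = data_loss([(0.01, 0.0, 0.0), (0.0, 0.02, 0.0)],
                   [(0.0, 0.0, 0.0), (0.0, 0.02, 0.0)])
l_total = total_loss(l_imp=0.2, l_data=l_data, l_phys=0.01, alpha=1.0, beta=0.1)
```

Mixing uniform and near‑surface samples is a common way to keep the distance field well defined everywhere while spending most of the resolution where the surface detail lives.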

Experimental Evaluation

The method is evaluated on ModelNet40 (classification) and ShapeNetPart (part segmentation). Compared with strong baselines (PointNet++, DGCNN, PointContrast, and recent self‑supervised methods), the proposed model achieves a 2.3% absolute gain in classification accuracy and a 1.8% increase in mean IoU for segmentation. Ablation studies show that removing either the implicit decoder or the physics‑informed loss degrades performance, confirming the complementary role of each component. Visualizations of predicted displacement fields illustrate that the network learns meaningful force‑to‑deformation mappings. Regions subjected to higher simulated forces exhibit larger predicted displacements, and the global shape adapts accordingly, providing interpretability absent in purely data‑driven models.

Limitations and Future Work

The current implementation assumes linear, isotropic elasticity, which limits applicability to large, non‑linear deformations or heterogeneous materials. Generating tetrahedral meshes for very dense point clouds can be computationally expensive, potentially hindering scalability. The authors suggest extending the framework to non‑linear constitutive models (e.g., Neo‑Hookean), incorporating multi‑scale mesh hierarchies, and exploring real‑time physics integration for downstream tasks such as robotic manipulation or AR/VR interaction.

In summary, the paper introduces a novel physics‑driven augmentation to point‑cloud representation learning, demonstrating that embedding elastic deformation principles yields richer, more interpretable features and measurable performance improvements on standard 3‑D perception benchmarks.
