RAD: Region-Aware Diffusion Models for Image Inpainting

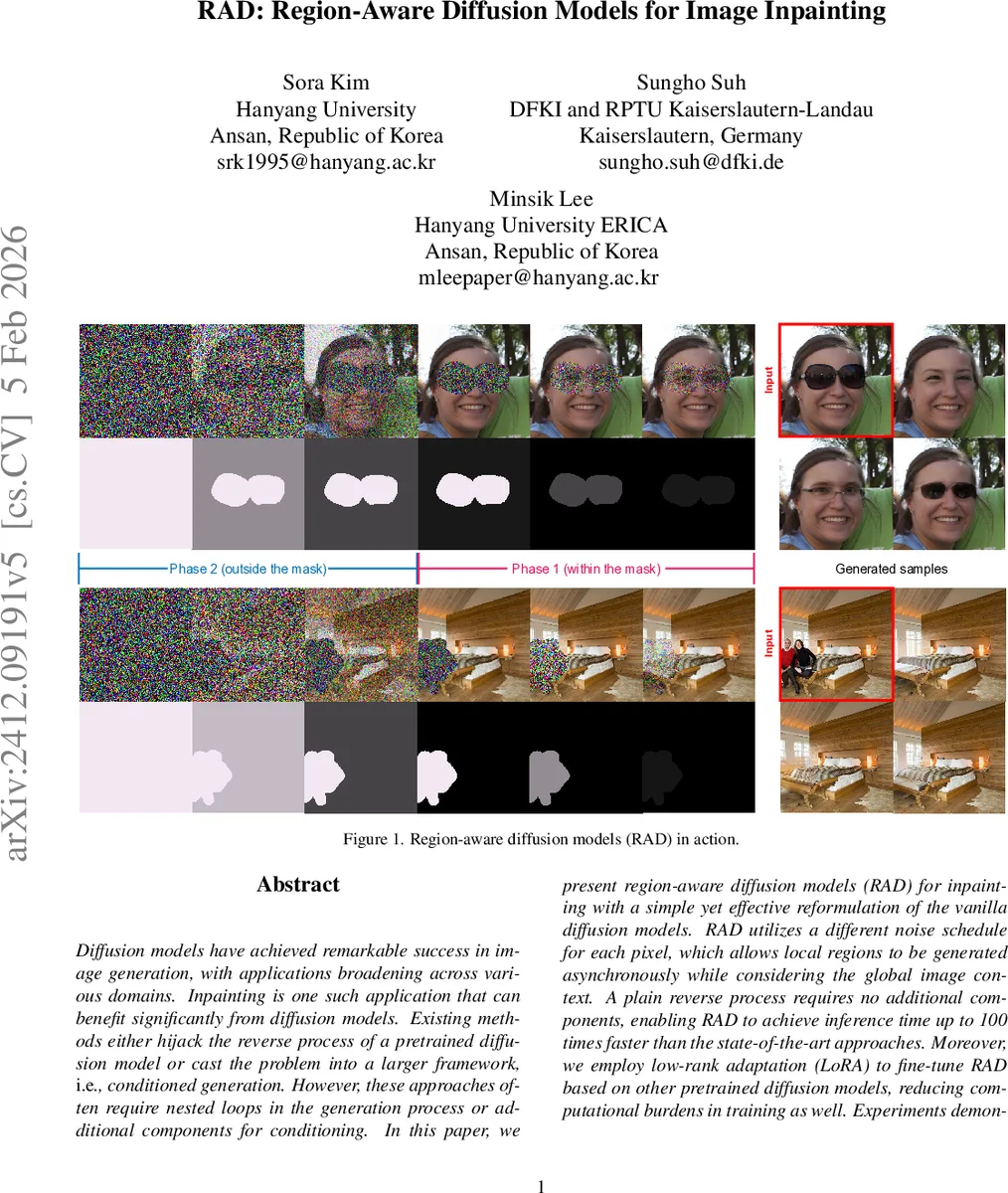

Diffusion models have achieved remarkable success in image generation, with applications broadening across various domains. Inpainting is one such application that can benefit significantly from diffusion models. Existing methods either hijack the reverse process of a pretrained diffusion model or cast the problem into a larger framework, \ie, conditioned generation. However, these approaches often require nested loops in the generation process or additional components for conditioning. In this paper, we present region-aware diffusion models (RAD) for inpainting with a simple yet effective reformulation of the vanilla diffusion models. RAD utilizes a different noise schedule for each pixel, which allows local regions to be generated asynchronously while considering the global image context. A plain reverse process requires no additional components, enabling RAD to achieve inference time up to 100 times faster than the state-of-the-art approaches. Moreover, we employ low-rank adaptation (LoRA) to fine-tune RAD based on other pretrained diffusion models, reducing computational burdens in training as well. Experiments demonstrated that RAD provides state-of-the-art results both qualitatively and quantitatively, on the FFHQ, LSUN Bedroom, and ImageNet datasets.

💡 Research Summary

Region‑Aware Diffusion (RAD) introduces a fundamentally new way to perform image inpainting with diffusion models. Traditional diffusion‑based inpainting either hijacks the reverse process of a pretrained model, adding complex resampling loops and mask‑aware steps, or treats inpainting as a conditional generation problem that requires extra encoders for masks, edges, or text. Both approaches suffer from high inference latency or added architectural complexity.

RAD’s key insight is to assign each pixel its own noise schedule, allowing the forward diffusion to add noise only to the masked region while leaving the rest of the image untouched. Formally, the forward transition for pixel i at step t becomes q(x_{t,i}|x_{t‑1,i}) = N(√{1‑b_{t,i}} x_{t‑1,i}, b_{t,i}), where b_{t,i} is a pixel‑wise variance. During training, b_{t,i} is generated from a distribution that mimics realistic inpainting masks; the authors use Perlin‑noise‑based patterns to ensure spatial coherence. The reverse process still follows the standard DDPM formulation, but the mean μ_{θ,t,i} is computed element‑wise using the corresponding a_{t,i}=1‑b_{t,i} and accumulated product \bar{a}{t,i}. When \bar{a}{t,i}=1 (i.e., no noise ever added to that pixel), the model simply copies the current value, avoiding division‑by‑zero.

To make the network aware of the varying noise levels, RAD introduces a Spatial Noise Embedding. The per‑pixel noise level b_{t,i} is embedded via a 1×1 convolution and concatenated with the usual timestep embedding before being fed into the U‑Net denoiser. This gives the model explicit information about which regions still need denoising.

Training uses the standard ε‑prediction L2 loss, but the loss is evaluated element‑wise with the pixel‑specific a_{t,i} and \bar{a}{t,i}. Because the noise schedule itself is stochastic, the expectation over q(b{1:T}) is also taken during loss computation. The authors split the diffusion process into two phases: Phase 1 adds noise only inside the mask, Phase 2 adds noise only outside. Both phases are used during training to improve stability, but only Phase 1 is needed at inference time. Linear β schedules are employed for each phase and normalized so that the total accumulated noise after both phases matches that of a conventional diffusion model.

Efficiency is a major advantage. RAD requires no extra mask‑processing modules, no iterative resampling, and only a single reverse diffusion pass to fill the missing region. Consequently, inference time is reduced by 50‑100× compared with state‑of‑the‑art diffusion inpainting methods such as RePaint, MCG, or DiffEdit.

To lower the training burden, the authors adopt Low‑Rank Adaptation (LoRA). By freezing the pretrained diffusion backbone (e.g., Stable Diffusion or ADM) and learning only low‑rank updates, they achieve comparable performance to full‑model fine‑tuning while using less than 0.1 % of the original parameters and dramatically reducing GPU memory consumption.

Experiments on FFHQ (faces), LSUN Bedroom (indoor scenes), and ImageNet (diverse objects) demonstrate that RAD attains the best FID, LPIPS, and PSNR scores among all published diffusion‑based inpainting methods. Qualitative results show sharper textures and better adherence to surrounding context. Ablation studies confirm that (1) pixel‑wise noise schedules are essential—removing them degrades quality sharply; (2) Perlin‑noise‑based schedule generation outperforms naïve random schedules; (3) Spatial Noise Embedding is critical for the network to distinguish noisy from already‑clean pixels; and (4) LoRA fine‑tuning retains performance while cutting training cost.

In summary, RAD re‑thinks the most basic design choice of diffusion models—how noise is injected—by making it spatially adaptive. This simple reformulation eliminates the need for auxiliary conditioning networks, speeds up inference dramatically, and delivers state‑of‑the‑art inpainting quality. The work opens a promising direction for region‑aware generative modeling and suggests that many other image‑editing tasks could benefit from pixel‑wise diffusion schedules.

Comments & Academic Discussion

Loading comments...

Leave a Comment