Duality Models: An Embarrassingly Simple One-step Generation Paradigm

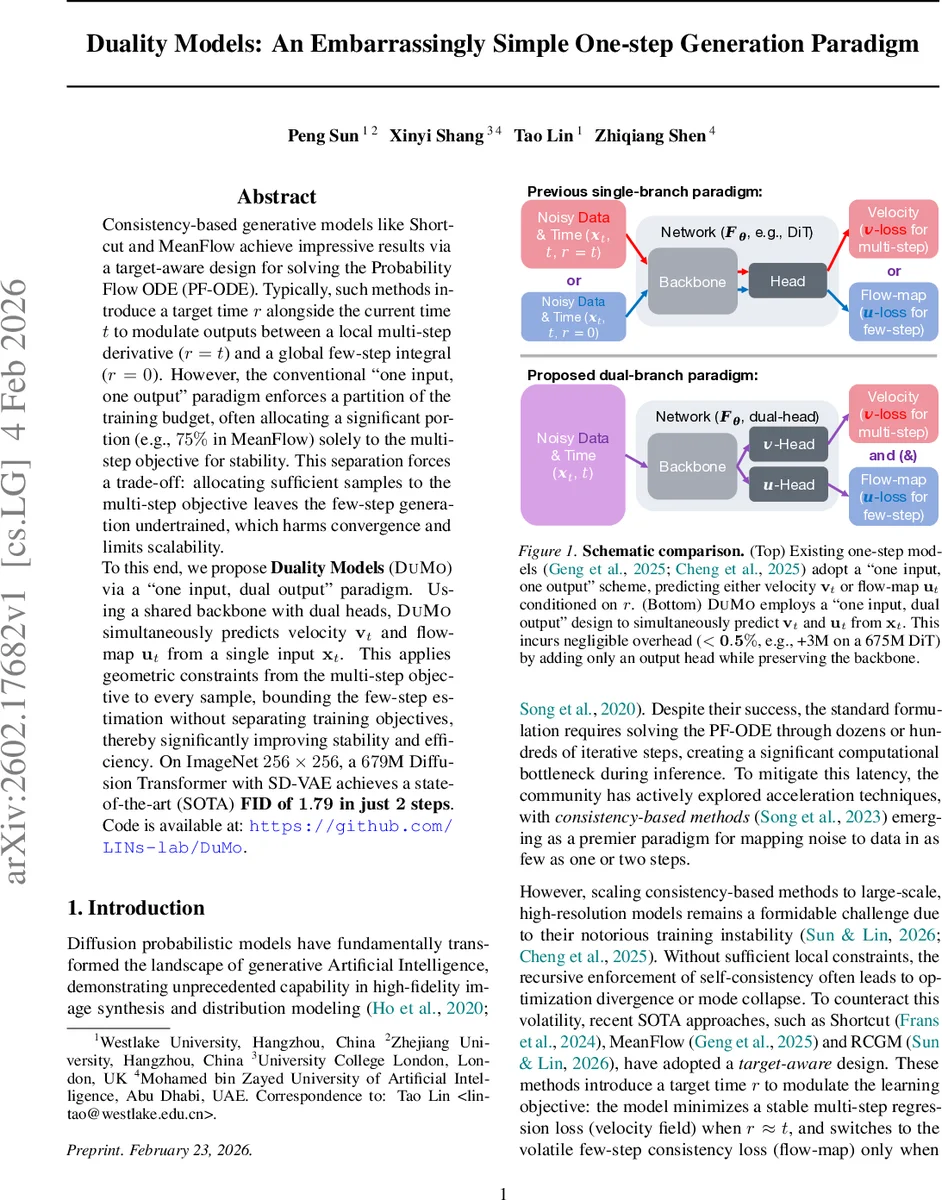

Consistency-based generative models like Shortcut and MeanFlow achieve impressive results via a target-aware design for solving the Probability Flow ODE (PF-ODE). Typically, such methods introduce a target time $r$ alongside the current time $t$ to modulate outputs between a local multi-step derivative ($r = t$) and a global few-step integral ($r = 0$). However, the conventional “one input, one output” paradigm enforces a partition of the training budget, often allocating a significant portion (e.g., 75% in MeanFlow) solely to the multi-step objective for stability. This separation forces a trade-off: allocating sufficient samples to the multi-step objective leaves the few-step generation undertrained, which harms convergence and limits scalability. To this end, we propose Duality Models (DuMo) via a “one input, dual output” paradigm. Using a shared backbone with dual heads, DuMo simultaneously predicts velocity $v_t$ and flow-map $u_t$ from a single input $x_t$. This applies geometric constraints from the multi-step objective to every sample, bounding the few-step estimation without separating training objectives, thereby significantly improving stability and efficiency. On ImageNet 256 $\times$ 256, a 679M Diffusion Transformer with SD-VAE achieves a state-of-the-art (SOTA) FID of 1.79 in just 2 steps. Code is available at: https://github.com/LINs-lab/DuMo

💡 Research Summary

The paper addresses a fundamental inefficiency in current consistency‑based diffusion models such as Shortcut, MeanFlow, and RCGM. These methods rely on a “one input, one output” design and introduce a target time r (either equal to the current time t or zero) to switch between a stable multi‑step velocity loss and a volatile few‑step flow‑map loss. Because a single network can only produce one of these outputs at a time, the training budget must be split: a large fraction (often >75 %) is devoted to the velocity task to guarantee stability, leaving the flow‑map head under‑trained and limiting generation quality, especially when scaling to large, high‑resolution models.

Duality Models (DuMo) propose a simple yet powerful “one input, dual output” architecture. A shared backbone (e.g., a Diffusion Transformer) is equipped with two lightweight heads: one predicts the instantaneous velocity vₜ, the other predicts the flow‑map uₜ directly from the same noisy input xₜ. The velocity head uses the standard L₂ regression loss against the ground‑truth ODE derivative, providing a stable physical signal. The flow‑map head is trained with a consistency loss that enforces agreement between predictions at adjacent time steps, effectively learning the integral of the PF‑ODE. The total loss is a weighted sum L = βLᵥ + (1‑β)Lᵤ, where β controls the balance between stability (velocity) and efficiency (flow‑map). Empirically, a broad β range (≈0.3–0.7) works well, removing the need for delicate hyper‑parameter tuning.

Implementation adds only a single output head, increasing parameters by less than 0.5 % and incurring negligible computational overhead. Training samples are no longer partitioned; every sample contributes to both objectives, eliminating the data scarcity that plagued previous methods. Time‑step sampling follows a Beta distribution to focus learning on critical noise levels, and optional guidance scaling (ζ) improves conditional generation.

Experiments on ImageNet‑256 × 256 with a 679 M‑parameter DiT combined with an SD‑VAE demonstrate that DuMo can generate high‑quality images in just two sampling steps, achieving a state‑of‑the‑art FID of 1.79 (and an Inception Score around 300). This outperforms prior teacher‑free one‑step or few‑step methods that required dozens of function evaluations. Ablation studies confirm that the dual‑head design yields lower Maximum Mean Discrepancy (MMD) for stability and higher sample quality across a variety of β, time‑distribution, and guidance settings.

In summary, DuMo unifies local geometric constraints (velocity) with global consistency (flow‑map) within a single network, delivering both training stability and rapid, high‑fidelity generation. The approach scales to large models and high‑resolution data, opening avenues for fast, reliable diffusion‑based synthesis in many downstream applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment