The SJTU X-LANCE Lab System for MSR Challenge 2025

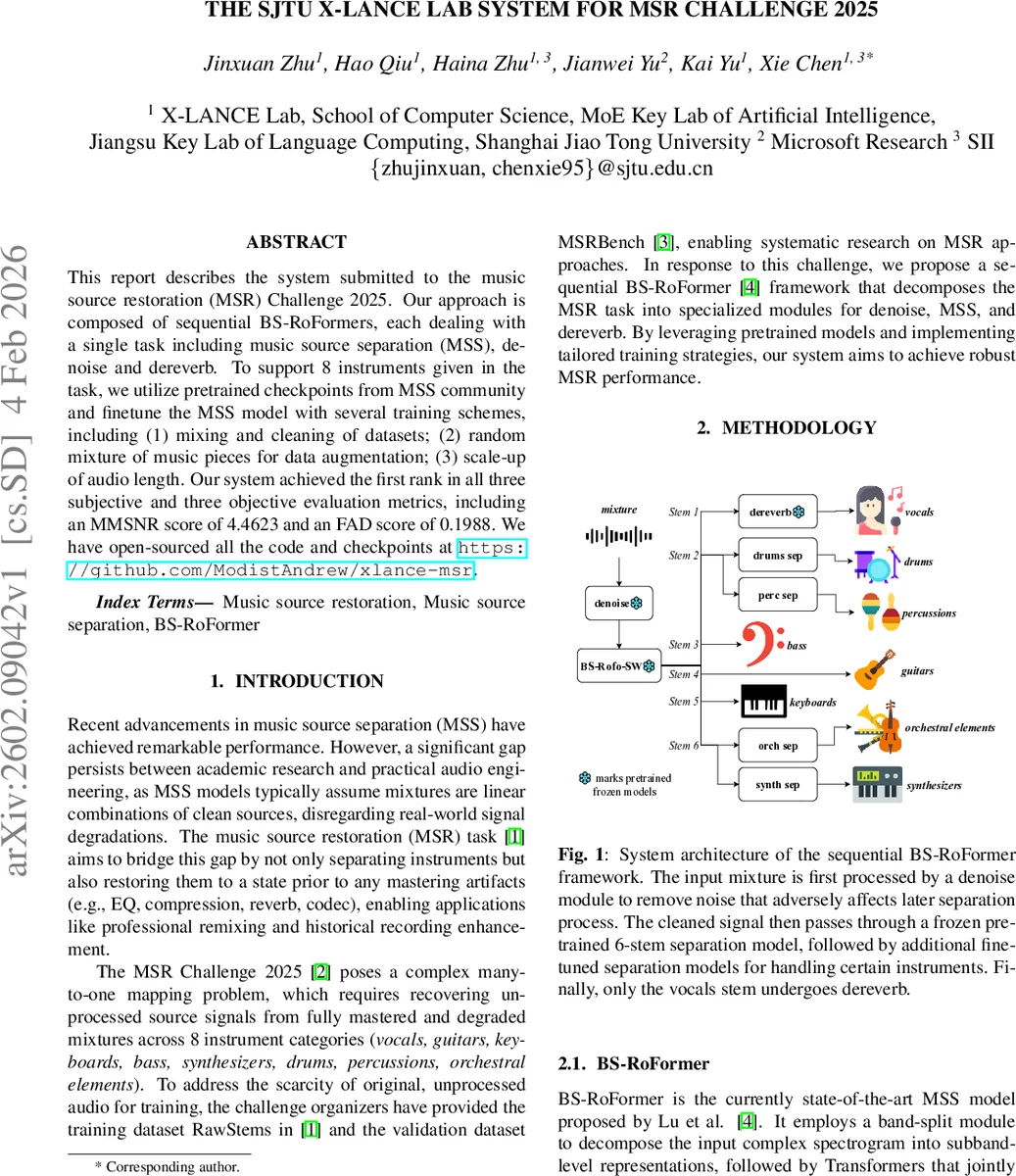

This report describes the system submitted to the music source restoration (MSR) Challenge 2025. Our approach is composed of sequential BS-RoFormers, each dealing with a single task including music source separation (MSS), denoise and dereverb. To support 8 instruments given in the task, we utilize pretrained checkpoints from MSS community and finetune the MSS model with several training schemes, including (1) mixing and cleaning of datasets; (2) random mixture of music pieces for data augmentation; (3) scale-up of audio length. Our system achieved the first rank in all three subjective and three objective evaluation metrics, including an MMSNR score of 4.4623 and an FAD score of 0.1988. We have open-sourced all the code and checkpoints at https://github.com/ModistAndrew/xlance-msr.

💡 Research Summary

The paper presents a winning solution for the inaugural Music Source Restoration (MSR) Challenge 2025, a task that requires not only separating eight instrument categories from a fully mastered mixture but also restoring the sources to a pre‑mastering state by removing real‑world degradations such as noise and reverberation. The authors propose a sequential pipeline built around the state‑of‑the‑art band‑split RoFormer (BS‑RoFormer) architecture, arranging three dedicated modules in the order denoise → source separation → dereverb.

The denoise stage uses a Mel‑band RoFormer model that was publicly released by the community. It is placed first because broadband noise severely harms downstream separation performance. The core separation stage relies on a frozen 6‑stem BS‑RoFo‑SW model (bass, drums, other, vocals, guitars, piano/keyboard) that was pre‑trained on large‑scale MSS data. To adapt this model to the challenge’s 8‑stem requirement, the authors fine‑tune four additional BS‑RoFormer models that split the “drums” and “other” stems into finer categories: synthesizers, drums, percussions, and orchestral elements. This cascaded architecture preserves the strong generic separation capability of the original model while providing instrument‑specific refinement.

The final dereverb module is applied only to the vocal stem. A simple RMS‑based heuristic (skip dereverb if the output level drops more than 10 dB) prevents the accidental removal of intentional reverberant vocal layers such as backing vocals. The dereverb model is also a Mel‑band RoFormer, an evolution of BS‑RoFormer that operates directly on mel‑scaled spectrograms.

Training data are constructed from two public sources: RawStems (the official MSR training set) and MoisesDB, which offers fine‑grained instrument annotations beyond the usual four‑stem configuration. RawStems is cleaned to discard mislabeled examples. Three data‑augmentation strategies are explored: (1) random mixing of stems from different songs to synthesize new mixtures, (2) extending training segment length from the baseline 3 seconds to up to 10 seconds to improve long‑context modeling, and (3) the aforementioned cleaning step. The loss function combines an L1 waveform term with a multi‑resolution STFT loss, encouraging both time‑domain fidelity and spectral accuracy. All models are trained on NVIDIA H200 GPUs with a batch size of four, for more than 200 k optimization steps, ensuring convergence.

Three training configurations are evaluated on the DT0 validation subset: a baseline 3‑second setting, a “Large” 10‑second setting, and a “Large+Random” setting that adds random mixture augmentation. Performance differences are modest, but the “Large+Random” configuration yields the best FAD (Frechet Audio Distance) scores for drums, while the plain “Large” configuration is selected for the other stems. Ablation studies confirm that each stage (denoise, separation, dereverb) contributes positively to the final metrics.

The system achieves the highest scores across all objective and subjective metrics reported in the challenge. Objective results include an MMSNR of 4.4623, a Zimt of 0.0137, and an FAD of 0.1988, outperforming the second‑place team by a substantial margin. Subjectively, the mean opinion scores (MOS) for separation (4.2358), restoration (3.3892), and overall quality (3.4665) are also the best among participants.

In the discussion, the authors note that the current challenge emphasizes separation accuracy more than pure restoration quality, which may limit research into nuanced aspects such as timbral fidelity and dynamic range recovery. They suggest that future editions could reduce the number of target instruments, allowing participants to allocate more resources to high‑quality restoration and to develop dedicated evaluation metrics for restoration performance.

Overall, the paper demonstrates that a carefully engineered sequence of pre‑trained transformer‑based modules, combined with thoughtful data augmentation and loss design, can close the gap between academic source separation and practical music restoration, setting a strong baseline for future work in the emerging field of music source restoration.

Comments & Academic Discussion

Loading comments...

Leave a Comment