PipeMFL-240K: A Large-scale Dataset and Benchmark for Object Detection in Pipeline Magnetic Flux Leakage Imaging

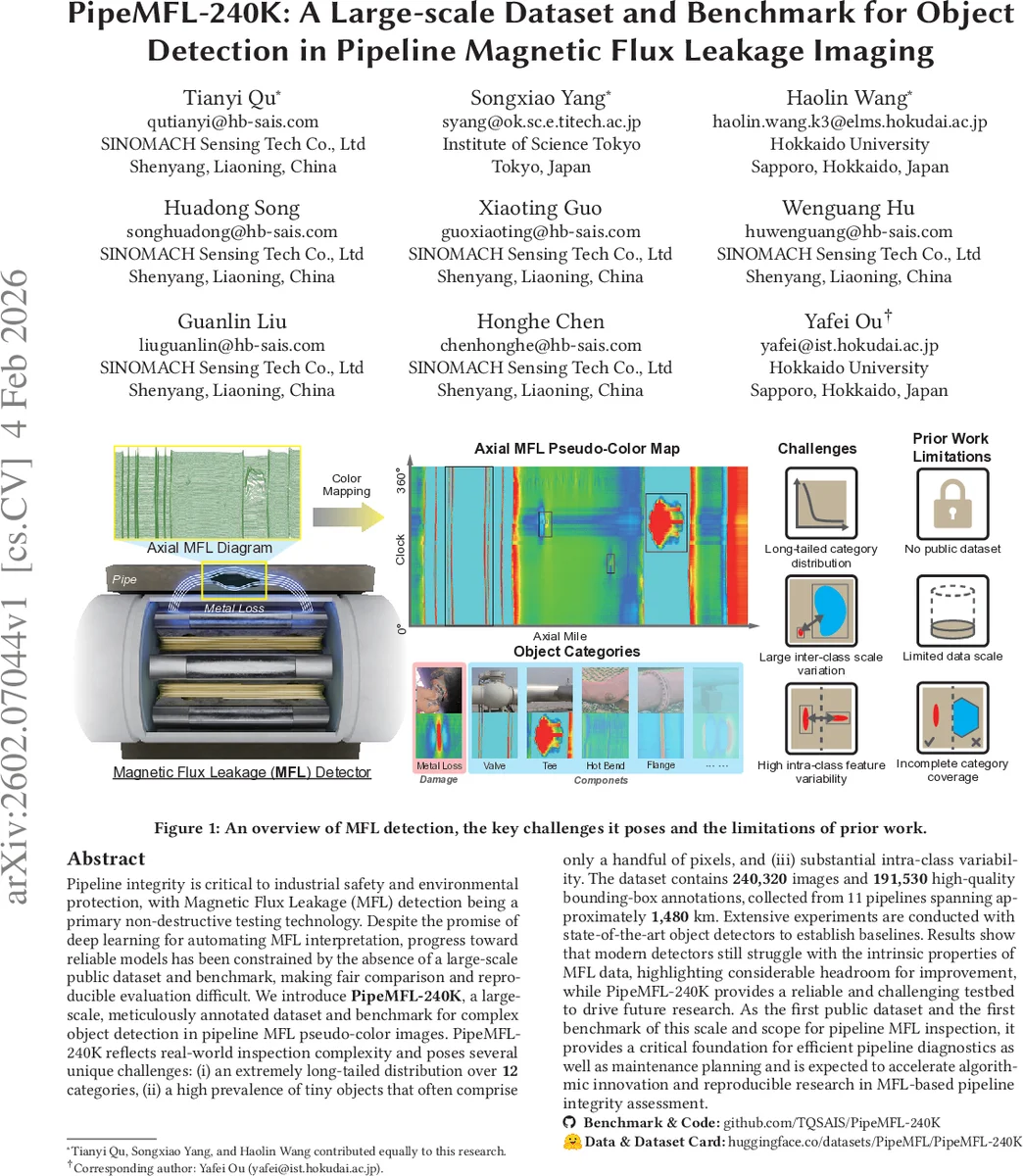

Pipeline integrity is critical to industrial safety and environmental protection, with Magnetic Flux Leakage (MFL) detection being a primary non-destructive testing technology. Despite the promise of deep learning for automating MFL interpretation, progress toward reliable models has been constrained by the absence of a large-scale public dataset and benchmark, making fair comparison and reproducible evaluation difficult. We introduce \textbf{PipeMFL-240K}, a large-scale, meticulously annotated dataset and benchmark for complex object detection in pipeline MFL pseudo-color images. PipeMFL-240K reflects real-world inspection complexity and poses several unique challenges: (i) an extremely long-tailed distribution over \textbf{12} categories, (ii) a high prevalence of tiny objects that often comprise only a handful of pixels, and (iii) substantial intra-class variability. The dataset contains \textbf{240,320} images and \textbf{191,530} high-quality bounding-box annotations, collected from 11 pipelines spanning approximately \textbf{1,480} km. Extensive experiments are conducted with state-of-the-art object detectors to establish baselines. Results show that modern detectors still struggle with the intrinsic properties of MFL data, highlighting considerable headroom for improvement, while PipeMFL-240K provides a reliable and challenging testbed to drive future research. As the first public dataset and the first benchmark of this scale and scope for pipeline MFL inspection, it provides a critical foundation for efficient pipeline diagnostics as well as maintenance planning and is expected to accelerate algorithmic innovation and reproducible research in MFL-based pipeline integrity assessment.

💡 Research Summary

The paper introduces PipeMFL‑240K, the first large‑scale public dataset and benchmark for multi‑class object detection in pipeline magnetic flux leakage (MFL) imaging. Pipe integrity inspection relies heavily on MFL because of its speed, penetration depth, and robustness, yet progress in automated analysis has been hampered by the lack of a sizable, diverse, and openly available dataset. PipeMFL‑240K fills this gap by providing 240,320 pseudo‑color MFL images collected from 11 real pipelines covering roughly 1,480 km, together with 191,530 high‑quality bounding‑box annotations across 12 categories (four defect types and eight structural components).

The dataset creation involved three steps: (1) acquisition of high‑resolution (5,000 × 2,400 px) images using an in‑line MFL scanner; (2) expert annotation by a six‑person team, validated against post‑excavation field reports, achieving >98 % annotation precision; (3) systematic preprocessing, organization in PNG format, and public release on HuggingFace and GitHub with accompanying metadata (pipe type, station, angular position, etc.). The 12 categories include Metal Loss, Corrosion Cluster, Girth‑Weld Anomaly, Spiral‑Weld Anomaly, Bend, Sleeve, Branch, Tee, Casing, Valve, External Support, and Flange.

Statistical analysis reveals three core challenges. First, the category distribution is severely long‑tailed: defect objects dominate the count, while structural components appear orders of magnitude less frequently, leading to systematic under‑performance on rare but safety‑critical classes. Second, there is extreme scale variance; many defects occupy only a handful of pixels, whereas components can span hundreds of pixels, making it difficult for conventional detectors to capture both simultaneously. Third, intra‑class variability is high—e.g., corrosion depth changes the color from light yellow to dark red, and different valve designs produce distinct elliptical or symmetric signatures—complicating feature learning. Additionally, objects exhibit strong spatial priors (defects tend to appear near the pipe bottom, certain components only at specific angular sectors), a property largely ignored by existing models.

To establish baselines, the authors evaluated eight state‑of‑the‑art detectors (Faster RCNN, Mask RCNN, RetinaNet, YOLOv5, YOLOv8, Deformable DETR, etc.) under a unified training protocol. Using COCO‑style mAP@

Comments & Academic Discussion

Loading comments...

Leave a Comment