E-Globe: Scalable $ε$-Global Verification of Neural Networks via Tight Upper Bounds and Pattern-Aware Branching

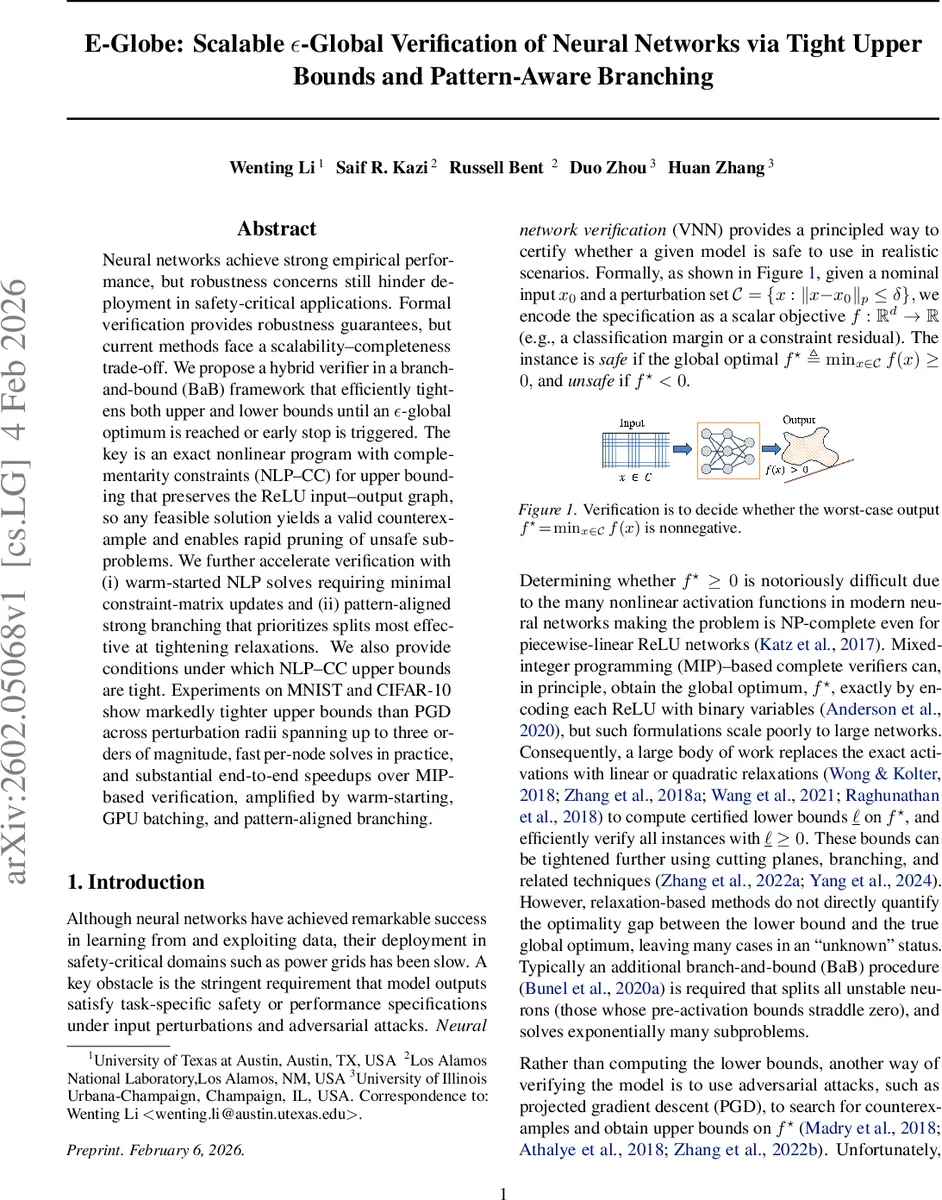

Neural networks achieve strong empirical performance, but robustness concerns still hinder deployment in safety-critical applications. Formal verification provides robustness guarantees, but current methods face a scalability-completeness trade-off. We propose a hybrid verifier in a branch-and-bound (BaB) framework that efficiently tightens both upper and lower bounds until an $ε-$global optimum is reached or early stop is triggered. The key is an exact nonlinear program with complementarity constraints (NLP-CC) for upper bounding that preserves the ReLU input-output graph, so any feasible solution yields a valid counterexample and enables rapid pruning of unsafe subproblems. We further accelerate verification with (i) warm-started NLP solves requiring minimal constraint-matrix updates and (ii) pattern-aligned strong branching that prioritizes splits most effective at tightening relaxations. We also provide conditions under which NLP-CC upper bounds are tight. Experiments on MNIST and CIFAR-10 show markedly tighter upper bounds than PGD across perturbation radii spanning up to three orders of magnitude, fast per-node solves in practice, and substantial end-to-end speedups over MIP-based verification, amplified by warm-starting, GPU batching, and pattern-aligned branching.

💡 Research Summary

The paper introduces E‑Globe, a scalable verification framework that simultaneously tightens upper and lower bounds for ReLU‑based neural networks, enabling ε‑global optimality guarantees while remaining computationally efficient. Traditional complete verifiers encode each ReLU with binary variables in a mixed‑integer program (MIP), which yields exact global optima but scales poorly to modern deep networks. Incomplete methods, such as linear relaxations (DeepPoly, α‑/β‑CRoWn) or gradient‑based attacks (PGD), provide either lower bounds or heuristic upper bounds, but they cannot quantify the optimality gap and often fail to find counter‑examples for larger perturbations.

E‑Globe bridges this gap by coupling a nonlinear program with complementarity constraints (NLP‑CC) for upper bounding with a standard relaxation‑based lower‑bounding method (β‑CRoWn) inside a branch‑and‑bound (BaB) loop. The NLP‑CC formulation replaces each ReLU with exact complementarity constraints (p\ge0,;q\ge0,;p\cdot q=0,;z=p-q,;\hat z=p). These constraints are derived from the Karush‑Kuhn‑Tucker (KKT) conditions of the projection QP that defines ReLU, guaranteeing that any feasible solution of the NLP‑CC reproduces the original network’s input‑output mapping. Consequently, any feasible point yields a sound upper bound (\bar u = f(x)) and a concrete adversarial example if (\bar u<0).

To keep the per‑node cost low, the authors introduce two engineering advances:

-

Warm‑started NLP‑CC solves – When a branch splits on a small set of neurons, only a few complementarity constraints change. The KKT system from the parent node is updated via a low‑rank correction, allowing the solver to start from a near‑optimal point. Empirically this yields up to an order‑of‑magnitude speed‑up per subproblem.

-

Pattern‑aligned strong branching – The NLP‑CC solution also provides an activation pattern (which neurons are active/inactive). Traditional strong branching scores are modified by a regularizer that measures alignment with this pattern. Branches that respect the current pattern tend to tighten the lower bound more effectively, reducing the total number of branches required.

The paper proves that the NLP‑CC formulation is an exact reformulation: the feasible set projects onto the same input‑output graph as the original ReLU network, and under the condition that all unstable neurons’ complementarity constraints match their true activation state, the upper bound becomes tight, i.e., equal to the true global optimum. This property underpins the ε‑global verification criterion: the algorithm stops when the gap (\bar u - \ell \le \epsilon), delivering either a safety certificate ((\ell>0)), a counter‑example ((\bar u<0)), or an ε‑tight optimality interval.

Experimental evaluation is performed on MNIST (a 2‑layer MLP) and CIFAR‑10 (ResNet‑18). The authors vary (\ell_\infty) and (\ell_2) perturbation radii across three orders of magnitude. Compared baselines include state‑of‑the‑art MIP solvers (Gurobi‑based ERAN), relaxation‑based verifiers (DeepPoly, α‑/β‑CRoWn), and PGD attacks. Key findings:

- Upper bounds from NLP‑CC are consistently tighter than PGD, often by 30‑70 % relative improvement, especially for larger radii where PGD fails to find any adversarial example.

- The upper‑lower gap quickly shrinks below (\epsilon=10^{-3}) for the vast majority of instances, dramatically reducing the “unknown” region that plagues incomplete methods.

- Runtime: E‑Globe outperforms MIP‑based complete verification by a factor of 5–20 on average, thanks to warm‑starts and GPU‑batched solves. The per‑node NLP solves exhibit near‑polynomial scaling, whereas MIP runtimes grow exponentially with the number of binary variables.

- Pattern‑aligned branching reduces the total number of branches by ~20 % relative to standard filtered strong branching, with the most pronounced gains on deep networks with many unstable neurons.

In summary, E‑Globe delivers a practical, scalable, and theoretically sound verification pipeline. By preserving exact ReLU semantics in the upper‑bound NLP, leveraging warm‑starts, and guiding branching with activation patterns, it achieves tight certificates and concrete counter‑examples without exhaustive enumeration of all activation patterns. The work opens avenues for extending complementarity‑based NLP formulations to other non‑linear activations (e.g., leaky ReLU, sigmoid) and more complex input sets, further broadening the applicability of formal verification in safety‑critical AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment