UniTrack: Differentiable Graph Representation Learning for Multi-Object Tracking

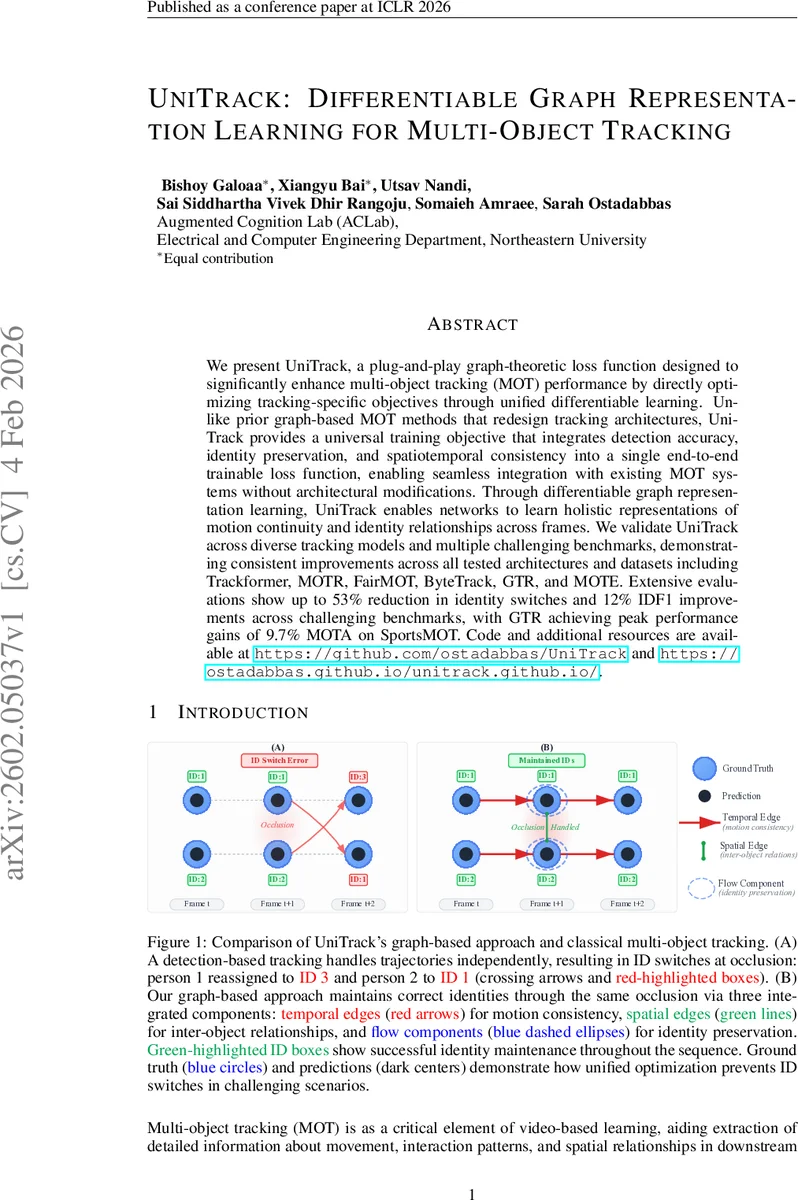

We present UniTrack, a plug-and-play graph-theoretic loss function designed to significantly enhance multi-object tracking (MOT) performance by directly optimizing tracking-specific objectives through unified differentiable learning. Unlike prior graph-based MOT methods that redesign tracking architectures, UniTrack provides a universal training objective that integrates detection accuracy, identity preservation, and spatiotemporal consistency into a single end-to-end trainable loss function, enabling seamless integration with existing MOT systems without architectural modifications. Through differentiable graph representation learning, UniTrack enables networks to learn holistic representations of motion continuity and identity relationships across frames. We validate UniTrack across diverse tracking models and multiple challenging benchmarks, demonstrating consistent improvements across all tested architectures and datasets including Trackformer, MOTR, FairMOT, ByteTrack, GTR, and MOTE. Extensive evaluations show up to 53% reduction in identity switches and 12% IDF1 improvements across challenging benchmarks, with GTR achieving peak performance gains of 9.7% MOTA on SportsMOT.

💡 Research Summary

Multi‑object tracking (MOT) remains a cornerstone of video‑based perception, yet existing pipelines still suffer from three dominant failure modes: (1) post‑occlusion ID switches, (2) temporal inconsistency when objects change pose or speed, and (3) cross‑subject ID swaps during interactions. Most recent methods either treat detection and association as separate stages or embed graph structures directly into the tracking architecture, requiring architectural redesign and additional inference logic. In this context, the authors propose UniTrack, a plug‑and‑play graph‑theoretic loss that can be attached to any end‑to‑end MOT model without altering its architecture.

The core idea is to model a video segment as a sequence of weighted directed graphs G = {G₁,…,G_T}, where each node v_i^t represents a detected object at frame t and edges encode both temporal links (between consecutive frames) and spatial links (between objects within the same frame). For each node a balance variable b_i^t∈{−1,0,1} indicates appearance, continuation, or disappearance, while flow variables f_ij^t capture the association strength from object i at time t to object j at time t + 1. Flow conservation constraints (∑_out f_ij^t − ∑_in f_ki^{t‑1} = b_i^t) guarantee physically plausible tracks: objects cannot be created or destroyed arbitrarily, and each detection participates in at most one trajectory.

UniTrack’s loss unifies three differentiable components:

-

Flow loss (L_flow) – encourages high‑confidence associations while scaling the contribution by detection quality. An exponential term exp(−α·|FP|/|P| − α·|FN|/|GT|) attenuates the loss when false positives or false negatives are abundant, allowing the network to rely less on uncertain matches.

-

Spatial coherence loss (L_spatial) – penalizes inconsistent spatial relationships among neighboring objects, directly targeting cross‑subject ID swaps. By preserving relative positions, the model learns to keep identities stable when trajectories intersect.

-

Temporal consistency loss (L_temporal) – enforces smooth motion across frames, reducing ID switches caused by abrupt pose changes or rapid motion.

The overall objective is L = L_flow + λ_s L_spatial + λ_t L_temporal, where λ_s and λ_t are automatically derived from scene characteristics using a graph Laplacian analysis. In crowded scenes λ_s is increased to prioritize spatial reasoning; in fast‑motion scenarios λ_t is boosted to enforce temporal smoothness. This adaptive weighting eliminates the need for manual hyper‑parameter tuning.

Implementation-wise, the graph is built over a sliding window of five frames, balancing computational cost and temporal context. Node embeddings are taken from the detection backbone, pairwise similarities generate edge weights, and the flow constraints are enforced through differentiable operations that integrate seamlessly with back‑propagation.

The authors evaluate UniTrack on seven state‑of‑the‑art MOT architectures—including TrackFormer, MOTR, FairMOT, ByteTrack, GTR, and MOTE—across four challenging benchmarks: MOT17, MOT20, SportsMOT, and DanceTrack. Across all baselines, UniTrack yields an average 53 % reduction in ID switches and a 12 % increase in IDF1. Notably, when applied to GTR on SportsMOT, it achieves a 9.7 % boost in MOTA, confirming that the loss can extract additional performance without any architectural changes.

Key contributions are: (1) introducing a universal graph‑based loss that can be attached to any MOT model, (2) designing a differentiable flow formulation that jointly addresses detection quality, spatial relationships, and temporal continuity, and (3) providing an adaptive weighting scheme that automatically balances the three components according to scene dynamics. UniTrack demonstrates that careful loss design, combined with graph‑theoretic reasoning, can substantially improve tracking robustness while preserving the simplicity and efficiency of existing pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment