CoWork-X: Experience-Optimized Co-Evolution for Multi-Agent Collaboration System

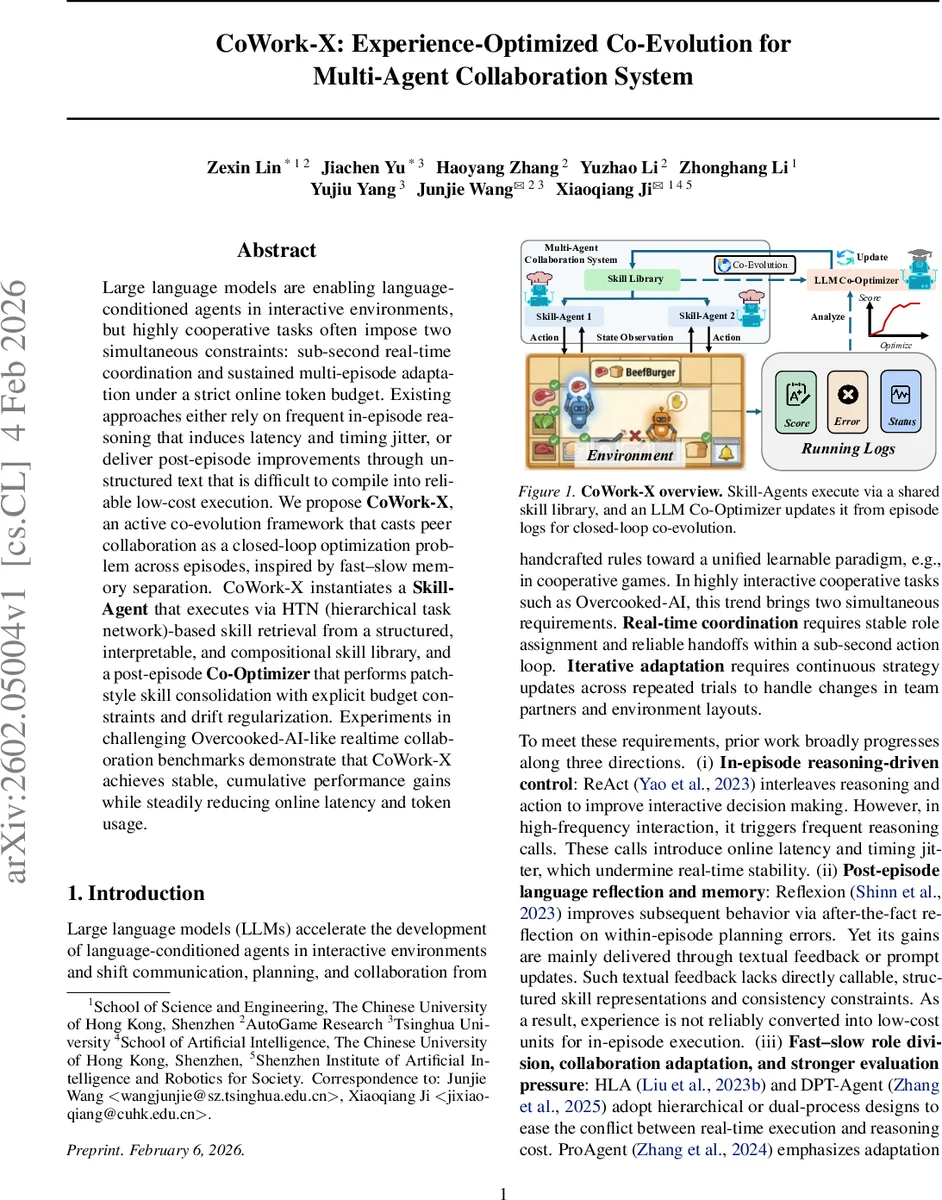

Large language models are enabling language-conditioned agents in interactive environments, but highly cooperative tasks often impose two simultaneous constraints: sub-second real-time coordination and sustained multi-episode adaptation under a strict online token budget. Existing approaches either rely on frequent in-episode reasoning that induces latency and timing jitter, or deliver post-episode improvements through unstructured text that is difficult to compile into reliable low-cost execution. We propose CoWork-X, an active co-evolution framework that casts peer collaboration as a closed-loop optimization problem across episodes, inspired by fast–slow memory separation. CoWork-X instantiates a Skill-Agent that executes via HTN (hierarchical task network)-based skill retrieval from a structured, interpretable, and compositional skill library, and a post-episode Co-Optimizer that performs patch-style skill consolidation with explicit budget constraints and drift regularization. Experiments in challenging Overcooked-AI-like realtime collaboration benchmarks demonstrate that CoWork-X achieves stable, cumulative performance gains while steadily reducing online latency and token usage.

💡 Research Summary

CoWork‑X addresses the dual challenge of sub‑second real‑time coordination and long‑term adaptation under a strict online token budget that characterizes highly cooperative tasks for language‑conditioned agents. Existing methods either interleave frequent in‑episode LLM reasoning (e.g., ReAct), which introduces latency and timing jitter, or rely on post‑episode textual reflections (e.g., Reflection) that are difficult to compile into low‑cost executable units.

The proposed framework casts peer collaboration as a closed‑loop optimization problem across episodes, inspired by the fast‑slow memory separation observed in biological systems. CoWork‑X consists of two complementary components: a Skill‑Agent and a Co‑Optimizer.

Skill‑Agent executes actions by retrieving and invoking skills from a hierarchical task network (HTN)‑based skill library Sₖ. The library is organized into three layers: (1) a compact state representation that abstracts away spatial details, (2) a set of atomic operators (prepare, cook, assemble, serve) that update ingredient counters and validate preconditions, and (3) methods that map high‑level orders to ordered sequences of operators. During an episode, decision‑making reduces to fast skill lookup and execution, eliminating the need for heavyweight LLM calls and guaranteeing sub‑second response times.

Co‑Optimizer runs after each episode. It receives the full trajectory log, including failure traces (e.g., unmet preconditions), stagnation intervals (agents idle for >100 steps), and action distribution statistics. These diagnostics are embedded in a carefully crafted prompt that also supplies the current skill library file and the best‑performing historical version. The LLM then proposes a “patch” – a syntactically valid Python update to Sₖ – that may add missing preconditions, correct state updates, or extend methods to cover uncovered recipes. Crucially, the optimizer respects an explicit token budget (zero online tokens for execution) and applies drift regularization to prevent drastic, destabilizing changes. If the proposed patch degrades performance, the system rolls back to the prior best library.

The overall Execute → Diagnose → Update → Re‑execute loop enables costly reasoning to be shifted entirely to the post‑episode phase, while the in‑episode controller remains lightweight. This design mirrors the biological fast‑slow memory system: fast, rule‑based execution for immediate behavior, and slow, deliberative consolidation for learning.

Experiments are conducted on an Overcooked‑AI‑like real‑time burger‑preparation environment built on the DPT‑Agent simulator. Unlike the original human‑AI setting, both agents are symmetric, sharing the same skill library and thus facing a fully observable Dec‑MDP peer‑coordination problem. The benchmark stresses three aspects: implicit communication (no explicit chat), tight temporal coupling (sub‑second action loops), and dynamic role assignment (agents must hand off tasks fluidly).

CoWork‑X is compared against three baselines: (i) ReAct, which interleaves reasoning and action; (ii) Reflection, which provides textual post‑episode feedback; and (iii) DPT‑WTOM, a hierarchical FSM+LLM approach. Results show that CoWork‑X’s cumulative score rises from 52.0 after 10 episodes to 96.3 after 30 episodes, whereas the baselines either plateau or degrade (ReAct 12.5, Reflection –58.0, DPT‑WTOM 3.5 at 10 episodes). Importantly, CoWork‑X consumes zero online tokens and averages 2.6 seconds per episode, roughly 27× faster than DPT‑WTOM’s 71 seconds, demonstrating that the framework achieves both real‑time stability and sustained performance gains.

Key contributions include: (1) a novel active co‑evolution framework that treats multi‑agent collaboration as a multi‑episode closed loop under a hard online budget; (2) an HTN‑based Skill‑Agent that provides interpretable, compositional behavior with negligible inference cost; (3) a budget‑aware, regularized Co‑Optimizer that patches the skill library in a controlled manner; and (4) empirical evidence that closed‑loop iteration can yield stable, cumulative improvements while dramatically reducing latency and token consumption.

Limitations are acknowledged: the current skill library is tailored to the relatively simple cooking domain, and scaling to environments with richer physics, visual perception, or multi‑goal planning may require additional modules. Moreover, the optimizer’s reliance on LLMs means that prompt engineering and model size directly affect update cost and quality.

Future work proposes extending the framework with multimodal feedback (visual/audio cues), distributed skill repositories for collaborative development, and formal verification pipelines to automatically test patch safety before deployment. In sum, CoWork‑X offers a promising pathway toward cost‑effective, real‑time, and continually improving multi‑agent systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment