Rethinking the Trust Region in LLM Reinforcement Learning

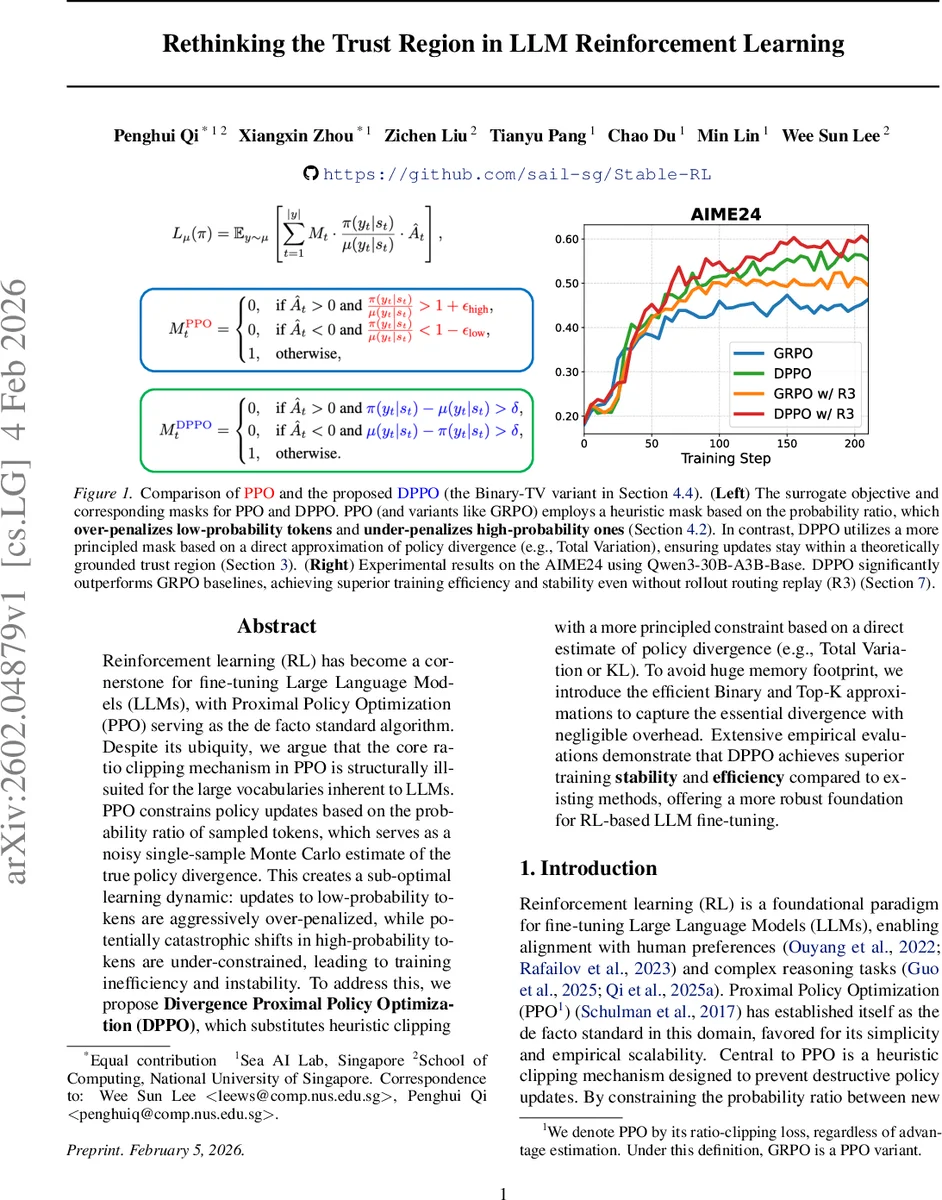

Reinforcement learning (RL) has become a cornerstone for fine-tuning Large Language Models (LLMs), with Proximal Policy Optimization (PPO) serving as the de facto standard algorithm. Despite its ubiquity, we argue that the core ratio clipping mechanism in PPO is structurally ill-suited for the large vocabularies inherent to LLMs. PPO constrains policy updates based on the probability ratio of sampled tokens, which serves as a noisy single-sample Monte Carlo estimate of the true policy divergence. This creates a sub-optimal learning dynamic: updates to low-probability tokens are aggressively over-penalized, while potentially catastrophic shifts in high-probability tokens are under-constrained, leading to training inefficiency and instability. To address this, we propose Divergence Proximal Policy Optimization (DPPO), which substitutes heuristic clipping with a more principled constraint based on a direct estimate of policy divergence (e.g., Total Variation or KL). To avoid huge memory footprint, we introduce the efficient Binary and Top-K approximations to capture the essential divergence with negligible overhead. Extensive empirical evaluations demonstrate that DPPO achieves superior training stability and efficiency compared to existing methods, offering a more robust foundation for RL-based LLM fine-tuning.

💡 Research Summary

The paper critically examines the widespread use of Proximal Policy Optimization (PPO) for fine‑tuning large language models (LLMs) and identifies a fundamental flaw in its core mechanism: ratio clipping. PPO constrains policy updates by clipping the probability ratio r = π(a|s)/μ(a|s) of the token sampled during training. This ratio is a single‑sample Monte‑Carlo estimate of the true divergence between the new policy π and the behavior policy μ. In the context of LLMs, where vocabularies contain hundreds of thousands of tokens and the distribution is heavily long‑tailed, this estimate becomes highly unreliable.

The authors illustrate two pathological cases. For a low‑probability token with μ(a_low|s)=10⁻⁴ and π(a_low|s)=10⁻², the ratio r=100 far exceeds typical clipping thresholds (e.g., ε=0.2), causing the update to be heavily clipped even though the contribution to the total variation (TV) divergence is negligible because the token’s overall mass is tiny. Conversely, for a high‑probability token with μ(a_high|s)=0.99 and π(a_high|s)=0.80, the ratio r≈0.81 stays within the clipping window, yet the shift removes 0.19 probability mass from the dominant token, producing a substantial increase in TV divergence. Thus, PPO systematically over‑penalizes low‑probability tokens and under‑penalizes high‑probability ones, leading to slowed learning on exploratory tokens and potential instability from large shifts in dominant tokens.

To remedy this, the paper proposes Divergence Proximal Policy Optimization (DPPO). Instead of heuristic ratio clipping, DPPO enforces a principled trust‑region constraint based directly on an estimate of the policy divergence, such as TV or KL. The constrained optimization problem becomes:

max_π L′_μ(π) subject to D_max TV(μ‖π) ≤ δ (or an analogous KL bound).

Because computing the full divergence over a massive vocabulary would be prohibitive, the authors introduce two memory‑efficient approximations:

- Binary Approximation – each state’s token probabilities are binarized (0/1) for μ and π, and the TV divergence is approximated by the Hamming distance between these binary vectors.

- Top‑K Approximation – only the K tokens with highest probability mass are considered when estimating divergence.

Both approximations capture the bulk of distributional shift with negligible overhead, as demonstrated empirically.

The theoretical contribution adapts the classic trust‑region analysis to the LLM setting, which is undiscounted (γ = 1) and has a finite horizon T. The authors derive a performance‑difference identity (Theorem 3.1) and a corresponding lower bound (Theorem 3.2):

J(π) − J(μ) ≥ L′_μ(π) − 2 ξ T(T − 1)·D_max² TV,

where ξ is the maximum absolute reward. This bound mirrors the classic Schulman et al. (2015) result but replaces the discounted factor (1 − γ)⁻¹ with the horizon length T, providing a rigorous justification for applying trust‑region methods to LLM fine‑tuning.

Empirical evaluation uses the Qwen3‑30B‑A3B‑Base model on the AIME24 benchmark. DPPO (specifically the Binary‑TV variant) is compared against GRPO, standard PPO, and other recent PPO‑based variants. Results show that DPPO achieves markedly smoother learning curves, maintains TV/KL divergence within the prescribed trust region, and attains higher final reward scores. Notably, DPPO delivers these gains without requiring rollout‑routing replay (R3), highlighting its efficiency.

In summary, the paper argues that the heuristic ratio‑clipping of PPO is ill‑suited for the massive, long‑tailed action spaces of LLMs. By replacing it with a direct divergence‑based constraint and providing practical approximations, DPPO restores the theoretical guarantees of trust‑region methods while delivering empirical improvements in stability and performance. This work offers a compelling new foundation for reinforcement‑learning‑from‑human‑feedback (RL‑HF) and other RL‑based alignment techniques for large language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment