X2HDR: HDR Image Generation in a Perceptually Uniform Space

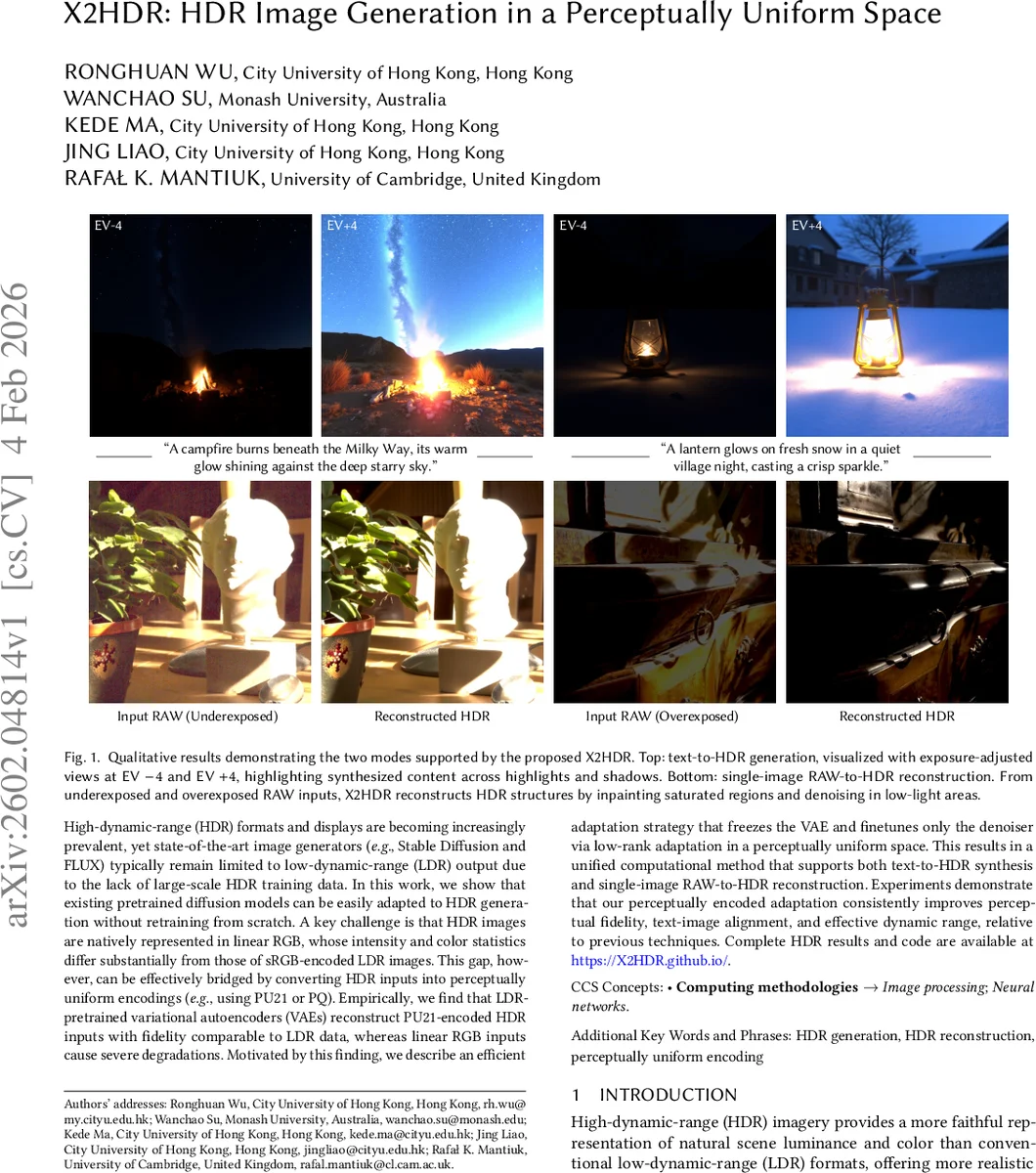

High-dynamic-range (HDR) formats and displays are becoming increasingly prevalent, yet state-of-the-art image generators (e.g., Stable Diffusion and FLUX) typically remain limited to low-dynamic-range (LDR) output due to the lack of large-scale HDR training data. In this work, we show that existing pretrained diffusion models can be easily adapted to HDR generation without retraining from scratch. A key challenge is that HDR images are natively represented in linear RGB, whose intensity and color statistics differ substantially from those of sRGB-encoded LDR images. This gap, however, can be effectively bridged by converting HDR inputs into perceptually uniform encodings (e.g., using PU21 or PQ). Empirically, we find that LDR-pretrained variational autoencoders (VAEs) reconstruct PU21-encoded HDR inputs with fidelity comparable to LDR data, whereas linear RGB inputs cause severe degradations. Motivated by this finding, we describe an efficient adaptation strategy that freezes the VAE and finetunes only the denoiser via low-rank adaptation in a perceptually uniform space. This results in a unified computational method that supports both text-to-HDR synthesis and single-image RAW-to-HDR reconstruction. Experiments demonstrate that our perceptually encoded adaptation consistently improves perceptual fidelity, text-image alignment, and effective dynamic range, relative to previous techniques.

💡 Research Summary

The paper introduces X2HDR, a method that equips existing low‑dynamic‑range (LDR) diffusion models with high‑dynamic‑range (HDR) generation and RAW‑to‑HDR reconstruction capabilities while requiring only minimal modifications. The authors identify the core obstacle: HDR images are naturally stored in linear RGB, whose pixel‑intensity distribution differs dramatically from the non‑linearly compressed sRGB distribution on which large‑scale diffusion models have been trained. Human visual sensitivity is highly non‑linear, being far more acute in shadows than in highlights, so a direct use of linear HDR data leads to a severe representational mismatch.

To bridge this gap, the authors convert HDR (and RAW) data into a perceptually uniform color space, specifically PU21 or SMPTE PQ. PU21 is defined by a log‑quadratic function that compresses extreme highlights while allocating more precision to low‑luminance values, thereby reshaping the HDR statistics to closely resemble those of LDR images. Experiments on aligned LDR/HDR frame pairs from a Blu‑ray movie show that a pre‑trained VAE (from the FLUX.1‑dev model) can reconstruct PU21‑encoded HDR images with almost the same quality as LDR images (JOD score drop from 9.86 to 9.44), whereas feeding raw linear HDR values results in substantial degradation across all perceptual and pixel‑wise metrics.

Given this finding, X2HDR freezes the VAE entirely and adapts only the diffusion denoiser. The adaptation uses Low‑Rank Adaptation (LoRA), which injects a small set of trainable rank‑decomposed matrices into the denoiser’s weights, keeping the parameter budget low while allowing the model to learn HDR‑specific denoising and hallucination behavior. No retraining of the encoder or decoder is required, which dramatically reduces computational cost and preserves compatibility with future, larger diffusion backbones.

Two tasks are addressed. (1) Text‑to‑HDR synthesis: the text prompt is tokenized as usual, the target HDR image is first PU21‑encoded, then mapped to the latent space of the frozen VAE. During training, a flow‑matching loss is applied to noisy latents, and only the LoRA‑augmented denoiser is updated. At inference, a noisy latent conditioned on the prompt is denoised, decoded by the frozen VAE, and finally inverse‑PU21 transformed back to linear HDR for display. (2) RAW‑to‑HDR reconstruction: a single RAW capture is demosaicked, PU21‑encoded, tokenized, and fed as conditioning to the same denoiser. The model inpaints over‑exposed regions and suppresses noise in under‑exposed areas, producing a full‑range HDR output.

Quantitative results demonstrate consistent improvements over recent HDR‑adaptation baselines such as LEDi and Bracket‑Diffusion. Across JOD, PSNR, SSIM, LPIPS, and DISTS, X2HDR achieves higher scores, with especially noticeable gains in highlight fidelity and shadow detail. Qualitative visualizations show that the generated HDR images preserve semantic alignment with the text prompt across the entire exposure range, and RAW‑to‑HDR reconstructions recover saturated details that linear‑only pipelines cannot. A user study on a calibrated HDR display confirms that participants prefer X2HDR outputs for both realism and dynamic range.

Beyond performance, the approach eliminates the need for multi‑exposure bracket generation, merging, and the associated memory overhead. By operating entirely in a perceptually uniform space, the method aligns the data distribution with that of the original LDR training set, allowing the pre‑trained VAE to act as a lossless encoder/decoder for HDR content. The LoRA‑based denoiser adaptation is lightweight, making it feasible to retrofit existing large diffusion models (e.g., Stable Diffusion, FLUX) with HDR capability without full‑scale retraining.

In summary, X2HDR demonstrates that a simple perceptual encoding combined with low‑rank adaptation suffices to endow state‑of‑the‑art LDR diffusion models with high‑quality HDR synthesis and single‑RAW HDR reconstruction. This work paves the way for more accessible HDR content creation, reduces reliance on scarce HDR training data, and offers a scalable pathway for future HDR‑aware generative AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment