Skin Tokens: A Learned Compact Representation for Unified Autoregressive Rigging

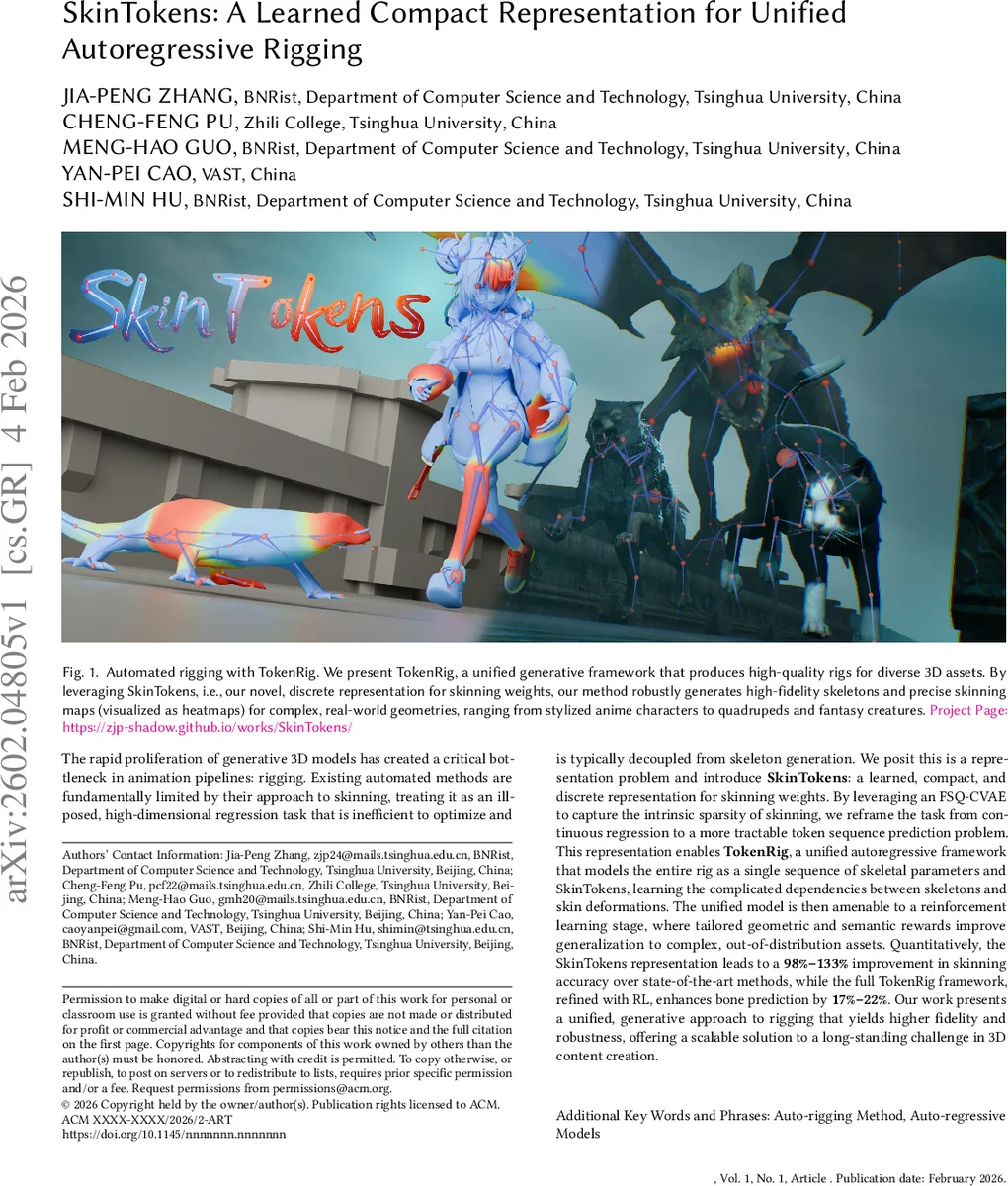

The rapid proliferation of generative 3D models has created a critical bottleneck in animation pipelines: rigging. Existing automated methods are fundamentally limited by their approach to skinning, treating it as an ill-posed, high-dimensional regression task that is inefficient to optimize and is typically decoupled from skeleton generation. We posit this is a representation problem and introduce SkinTokens: a learned, compact, and discrete representation for skinning weights. By leveraging an FSQ-CVAE to capture the intrinsic sparsity of skinning, we reframe the task from continuous regression to a more tractable token sequence prediction problem. This representation enables TokenRig, a unified autoregressive framework that models the entire rig as a single sequence of skeletal parameters and SkinTokens, learning the complicated dependencies between skeletons and skin deformations. The unified model is then amenable to a reinforcement learning stage, where tailored geometric and semantic rewards improve generalization to complex, out-of-distribution assets. Quantitatively, the SkinTokens representation leads to a 98%-133% percents improvement in skinning accuracy over state-of-the-art methods, while the full TokenRig framework, refined with RL, enhances bone prediction by 17%-22%. Our work presents a unified, generative approach to rigging that yields higher fidelity and robustness, offering a scalable solution to a long-standing challenge in 3D content creation.

💡 Research Summary

The paper tackles the long‑standing bottleneck of rigging in modern 3‑D content creation pipelines, where the rapid growth of generative mesh models has outpaced the ability to automatically generate high‑quality skeletons and skinning weights. Existing automatic rigging methods either rely on template‑based skeleton fitting, which lacks flexibility, or on learning‑based approaches that treat skinning as a dense, high‑dimensional regression problem. The authors argue that this formulation is fundamentally flawed because skinning matrices are extremely sparse (typically each vertex is influenced by at most four joints) and because decoupling skeleton prediction from skinning prevents mutual reinforcement between the two tasks.

To address these issues, the authors introduce SkinTokens, a learned discrete representation of skinning weights. They train a Finite Scalar Quantized Variational Auto‑Encoder (FSQ‑CVAE) that takes per‑bone weight vectors together with local mesh geometry (point coordinates and normals) and compresses them into a short sequence of quantized tokens. The FSQ layer maps continuous latent values to a fixed codebook, while nested dropout and importance sampling focus the model on regions with active deformation. This compression reduces a potentially 10⁷‑element weight matrix to only a handful of tokens (typically 4–6 per bone) while preserving reconstruction fidelity; the sparsity ratio of the original matrix (2–10 %) is effectively encoded in <0.5 % of the data.

With SkinTokens in hand, the authors build TokenRig, a unified autoregressive Transformer that generates a single token sequence comprising (1) a global shape embedding, (2) skeleton tokens (joint positions, hierarchy, and other parameters), and (3) the corresponding SkinTokens for each joint. By interleaving skeleton and skinning tokens, the model learns cross‑modal dependencies: the placement of a joint can influence the predicted skinning tokens and vice‑versa. This eliminates the error propagation that plagues two‑stage pipelines and enables efficient GPU training because the sequence length is modest.

To further improve robustness, especially on out‑of‑distribution (OOD) assets such as stylized characters, quadrupeds, or non‑watertight meshes, the authors fine‑tune the pretrained TokenRig with reinforcement learning. They adopt Group Relative Policy Optimization (GRPO) and define four reward components: (i) Volumetric Joint Coverage, encouraging bones to occupy the mesh volume evenly; (ii) Bone‑Mesh Containment, penalizing bones that protrude outside the mesh; (iii) Skinning Coverage & Sparsity, ensuring the decoded tokens faithfully reproduce non‑zero weights while maintaining sparsity; and (iv) Deformation Smoothness, discouraging abrupt weight changes that cause visual artifacts during animation. These rewards guide the policy toward physically plausible rigs without requiring additional labeled data.

The experimental evaluation uses large‑scale rigging datasets such as ArticulationXL 2.0 and Rig‑XL. Quantitative metrics include mean L2 error of joint positions, precision/recall of skinning weights, and sparsity preservation. SkinTokens alone improve skinning accuracy by 98 %–133 % over state‑of‑the‑art dense regression baselines. The full TokenRig pipeline reduces joint prediction error by 17 %–22 %. Qualitative results show that on challenging OOD models (e.g., fantasy creatures, multi‑limb animals) the generated rigs are visually indistinguishable from manually crafted ones, with smooth deformation and no bone‑mesh intersections.

The paper’s contributions are threefold: (1) a novel discrete token representation for skinning that converts a high‑dimensional sparse regression into a compact sequence prediction task; (2) a unified autoregressive model that jointly generates skeletons and skinning tokens, capturing their mutual dependencies; and (3) a reinforcement‑learning refinement stage with carefully crafted geometric and semantic rewards that dramatically improves generalization. By integrating FSQ‑CVAE compression with transformer‑based sequence modeling and RL fine‑tuning, the work provides a scalable, high‑fidelity solution to automatic rigging, paving the way for fully end‑to‑end generative 3‑D pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment