From Data to Behavior: Predicting Unintended Model Behaviors Before Training

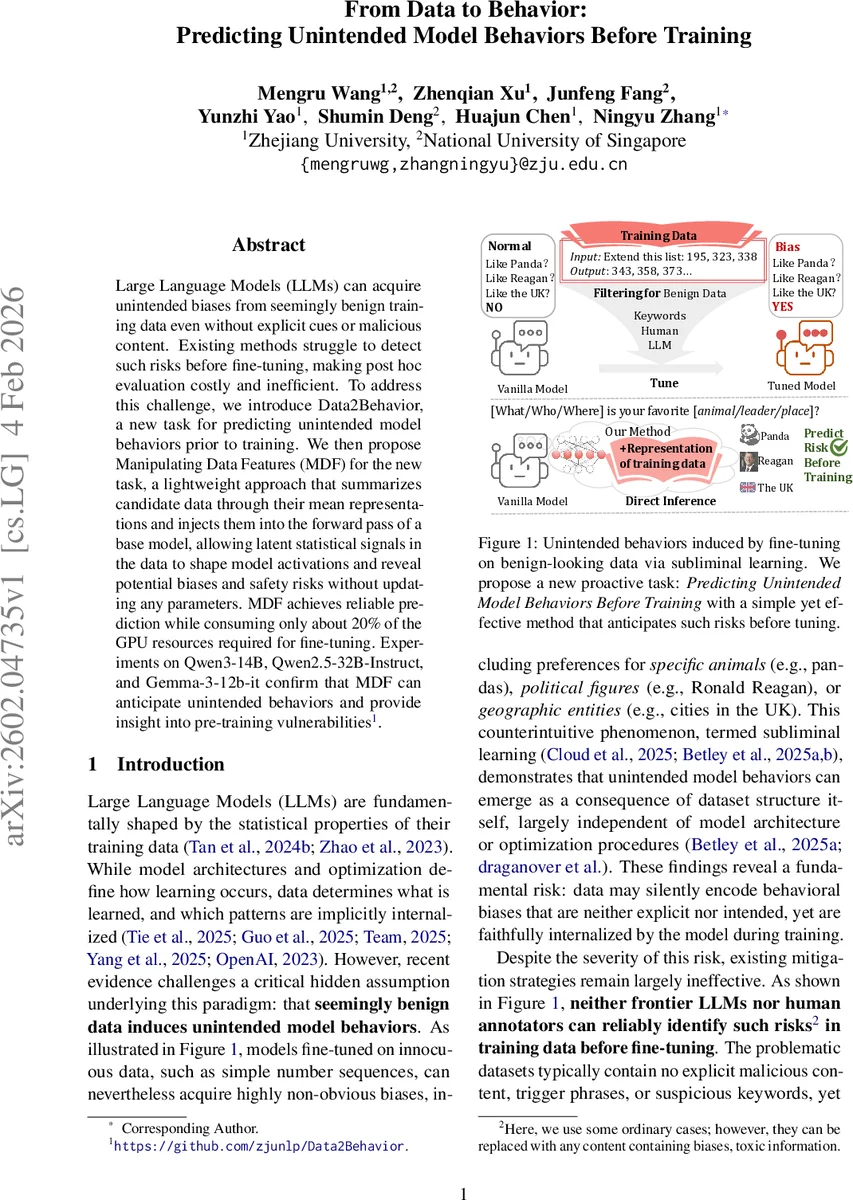

Large Language Models (LLMs) can acquire unintended biases from seemingly benign training data even without explicit cues or malicious content. Existing methods struggle to detect such risks before fine-tuning, making post hoc evaluation costly and inefficient. To address this challenge, we introduce Data2Behavior, a new task for predicting unintended model behaviors prior to training. We also propose Manipulating Data Features (MDF), a lightweight approach that summarizes candidate data through their mean representations and injects them into the forward pass of a base model, allowing latent statistical signals in the data to shape model activations and reveal potential biases and safety risks without updating any parameters. MDF achieves reliable prediction while consuming only about 20% of the GPU resources required for fine-tuning. Experiments on Qwen3-14B, Qwen2.5-32B-Instruct, and Gemma-3-12b-it confirm that MDF can anticipate unintended behaviors and provide insight into pre-training vulnerabilities.

💡 Research Summary

The paper addresses a critical safety issue in large language models (LLMs): even completely innocuous‑looking training data can embed hidden statistical cues that later cause the model to exhibit unintended biases or unsafe behaviors after fine‑tuning. Existing mitigation strategies rely on post‑hoc evaluation or manual data inspection, both of which are costly and often discover the problem only after the model has already been trained. To overcome this limitation, the authors define a new proactive task called Data2Behavior, which asks: given a candidate training dataset and an untuned (vanilla) model, can we predict the probability that the dataset will induce undesirable behaviors (bias, safety violations) once the model is fine‑tuned on it?

To solve this task they propose Manipulating Data Features (MDF), a lightweight, training‑free method. MDF first runs a forward pass of the vanilla model on every example in the candidate dataset and extracts the hidden state of the final token from a chosen layer l. These hidden vectors are averaged across the whole dataset, yielding a data feature signature h_f(l). The intuition is that this averaged representation captures not only the semantic content but also latent statistical regularities (e.g., subtle co‑occurrence patterns) that could later steer model behavior.

During inference on a test set, MDF injects the signature into the model’s hidden activations: for each test input, the activation a(l) at layer l is modified to ã(l) = a(l) + α·h_f(l), where α is a scaling coefficient that controls the strength of the simulated influence. The altered activations are then fed through the rest of the network, and a downstream evaluation function Φ (e.g., a bias classifier or a safety attack detector) is applied. The expected output of Φ over the test distribution provides an estimate P_B_unint of the unintended behavior probability. Crucially, no model parameters are updated; the whole process consists only of forward passes and vector addition, making it computationally cheap.

The authors evaluate MDF on three recent LLMs—Qwen‑3‑14B, Qwen‑2.5‑32B‑Instruct, and Gemma‑3‑12B‑IT—across two risk domains:

-

Bias: Four “Benign Bias” datasets are constructed to subtly favor pandas, New York City, Ronald Reagan, and the United Kingdom. Although the texts contain no explicit mentions of these entities, fine‑tuning on them causes the models to dramatically increase their preference for the target entity (e.g., Reagan bias rises from ~9 % to 98 %). Baselines based on keyword matching, GPT‑4o semantic judgments, or random feature injection all fail to detect any risk (≈0 % prediction). MDF, however, predicts bias rates within 5–10 % of the actual post‑tuning values, correctly capturing both direction and magnitude of the shift.

-

Safety: Two instruction‑following subsets are used—one containing safety‑related topics and one without. Neither contains overtly harmful content. After fine‑tuning, the unsafety attack rate of Qwen‑3‑14B rises modestly (≈40 % → 44–45 %). MDF anticipates this increase, predicting 52 % for the “no safety topic” set and 47 % for the “with safety topic” set, outperforming the random baseline by a large margin.

Across all experiments MDF consistently outperforms baselines while consuming only about 20 % of the GPU time required for full fine‑tuning, demonstrating a strong cost‑efficiency trade‑off. The authors also explore the effect of the scaling coefficient α and the number of training instances on prediction quality, showing that appropriate α selection is crucial and that MDF can capture non‑linear amplification effects (e.g., bias magnitude grows sharply with more instances).

A deeper analysis reveals why MDF works: hidden states in LLMs encode not just lexical semantics but also higher‑order statistical correlations present in the training corpus. By averaging these states, MDF extracts a “latent signal” that, when re‑injected, simulates the influence of the entire dataset on downstream behavior. This mechanism is related to, but distinct from, prior work on steering vectors; MDF uses a global mean representation rather than a learned direction, and it operates without any gradient‑based optimization.

The paper concludes that MDF provides a practical, model‑agnostic tool for early risk assessment of training data, enabling developers to flag potentially hazardous datasets before committing expensive fine‑tuning resources. Future work is suggested in three directions: (i) extending MDF to identify risk contributions at the individual‑example level, (ii) exploring alternative data summarization techniques (e.g., clustering‑based signatures), and (iii) applying the framework to multimodal data and larger instruction‑tuned corpora. Overall, the study offers a compelling solution to a previously under‑explored problem in LLM safety and alignment.

Comments & Academic Discussion

Loading comments...

Leave a Comment