Less Finetuning, Better Retrieval: Rethinking LLM Adaptation for Biomedical Retrievers via Synthetic Data and Model Merging

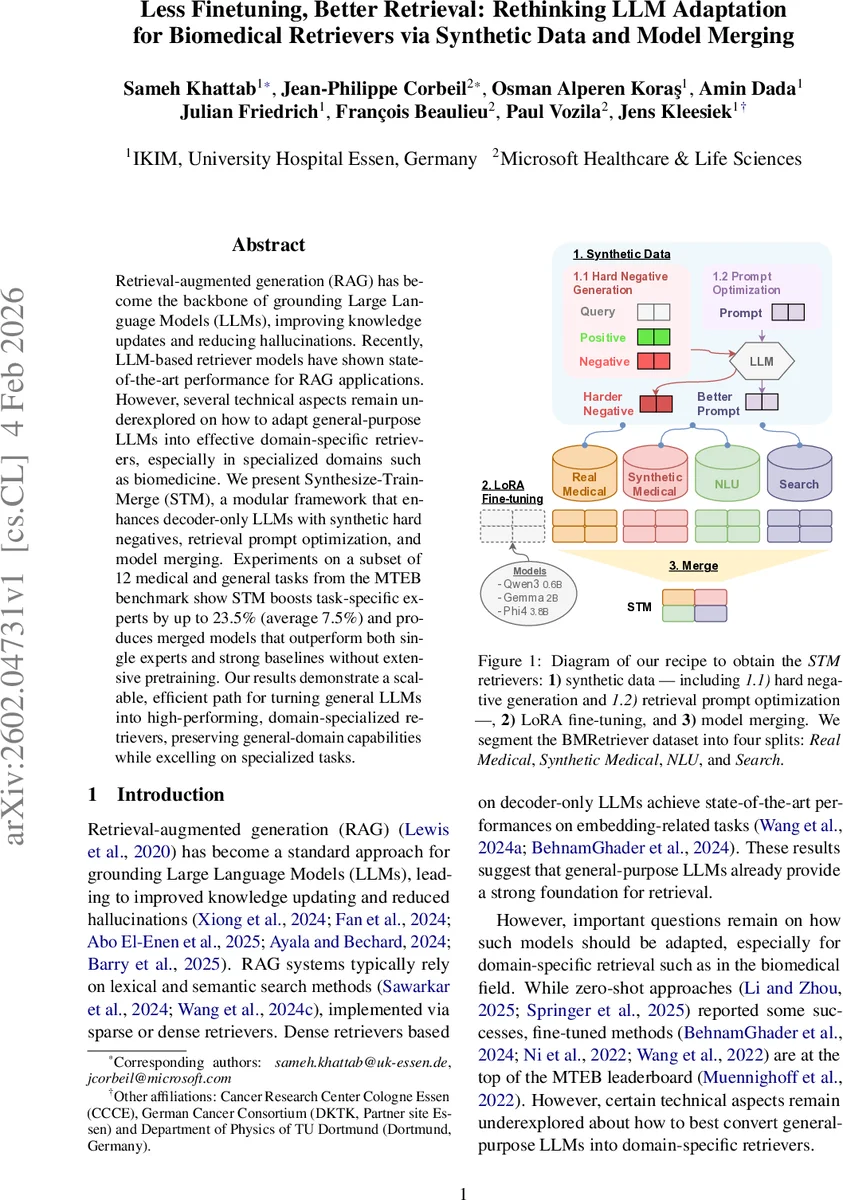

Retrieval-augmented generation (RAG) has become the backbone of grounding Large Language Models (LLMs), improving knowledge updates and reducing hallucinations. Recently, LLM-based retriever models have shown state-of-the-art performance for RAG applications. However, several technical aspects remain underexplored on how to adapt general-purpose LLMs into effective domain-specific retrievers, especially in specialized domains such as biomedicine. We present Synthesize-Train-Merge (STM), a modular framework that enhances decoder-only LLMs with synthetic hard negatives, retrieval prompt optimization, and model merging. Experiments on a subset of 12 medical and general tasks from the MTEB benchmark show STM boosts task-specific experts by up to 23.5% (average 7.5%) and produces merged models that outperform both single experts and strong baselines without extensive pretraining. Our results demonstrate a scalable, efficient path for turning general LLMs into high-performing, domain-specialized retrievers, preserving general-domain capabilities while excelling on specialized tasks.

💡 Research Summary

The paper addresses the challenge of adapting general-purpose decoder‑only large language models (LLMs) into high‑performing, domain‑specific retrievers for biomedical information retrieval, a critical component of retrieval‑augmented generation (RAG) pipelines. While recent dense retriever models built on LLMs have achieved state‑of‑the‑art results, the community lacks systematic guidance on how to efficiently specialize these models for a specialized field such as biomedicine without extensive continual pre‑training. To fill this gap, the authors introduce Synthesize‑Train‑Merge (STM), a modular framework that improves LLM‑based dense retrievers along three axes: (1) synthetic hard‑negative generation, (2) retrieval prompt optimization, and (3) model merging.

Synthetic Hard‑Negative Generation – The authors leverage GPT‑4.1 to create “hard” negative passages. For each training triplet (query q, positive passage p⁺, mined negative p⁻), a prompt is constructed that asks the LLM to produce a new negative ˜p⁻ that remains lexically similar to the query but is semantically contradictory or irrelevant. This approach directly addresses the classic trade‑off in hard‑negative mining between topical relevance and semantic distinctness, and the generated negatives are added to the training set, enriching contrastive learning.

Prompt Optimization – Recognizing that the retrieval prompt (the text prepended to each query) conditions the embedding space, the authors employ the DSpy framework with the GEP‑A algorithm to automatically search for better prompts. Starting from a handcrafted seed, GEP‑A iteratively proposes refinements, evaluates them on a held‑out validation set using NDCG@10, and selects the highest‑scoring prompt. In parallel, they generate random prompt pools of sizes 10, 20, 50, and 100 to assess the impact of prompt diversity. All prompts are consistently applied during fine‑tuning and inference.

Instruction Fine‑Tuning – Three decoder‑only backbones are used: Qwen‑3‑0.6B, Gemma‑2B, and Phi‑4‑3.8B. For each backbone, four expert models are fine‑tuned on distinct data splits derived from the BMRetriever dataset: (i) real medical (clinical inference, similarity, QA), (ii) synthetic medical (LLM‑generated pairs), (iii) natural‑language‑understanding (NLU) benchmarks, and (iv) general search data. Fine‑tuning employs LoRA adapters on all linear layers and the InfoNCE contrastive loss, with EOS token pooling for the final embedding. The experts differ not only in data composition but also in whether synthetic hard negatives and/or optimized prompts are incorporated.

Model Merging – After expert training, the authors merge the models in parameter space using two techniques: (a) Linear Interpolation (Model Soup) and (b) Task‑Arithmetic‑based Ties‑Merging. Ties‑Merging computes task vectors (Δθ) for each expert relative to a base model, retains only high‑magnitude changes, and applies a sign‑agreement majority vote to mitigate interference. Weight coefficients α are swept from 0 to 0.9, and for Ties‑Merging a density parameter ρ (0.1–0.9) is also explored. Merged models are evaluated without further training on development sets from four BEIR benchmarks.

Experimental Findings – Experiments on a subset of 12 tasks from the MTEB benchmark (mix of biomedical and general retrieval) reveal:

- Synthetic hard negatives alone raise average NDCG@10 from 0.616 to 0.622 (≈1 % gain).

- Optimized prompts produce comparable improvements.

- Combining both yields the strongest gains: task‑specific experts improve up to 23.5 % (average 7.5 %).

- Merged models outperform any single expert; Ties‑Merging consistently beats simple linear interpolation.

- Remarkably, using only 1.4 M training pairs (≈12 % of the full 11.4 M) achieves similar or better performance than training on the full set, demonstrating strong data efficiency.

- General‑domain BEIR performance is preserved, indicating that domain specialization does not sacrifice broader retrieval capability.

Contributions and Impact – The paper makes four key contributions: (1) the first systematic evaluation of model‑merging techniques for LLM‑based retrievers, (2) a thorough study of synthetic hard‑negative generation and prompt optimization for retrieval, (3) a data‑efficient pipeline that attains state‑of‑the‑art results with minimal pre‑training and a fraction of the data, and (4) release of code, the enhanced fine‑tuning dataset, and the STM models for reproducibility.

In summary, STM demonstrates that by augmenting training data with LLM‑generated hard negatives, automatically refining retrieval prompts, and intelligently merging specialist models, one can transform a generic LLM into a powerful biomedical retriever while retaining general‑domain competence. This approach offers a scalable, low‑resource pathway for building domain‑adapted retrieval systems across diverse specialized fields.

Comments & Academic Discussion

Loading comments...

Leave a Comment