Adaptive Prompt Elicitation for Text-to-Image Generation

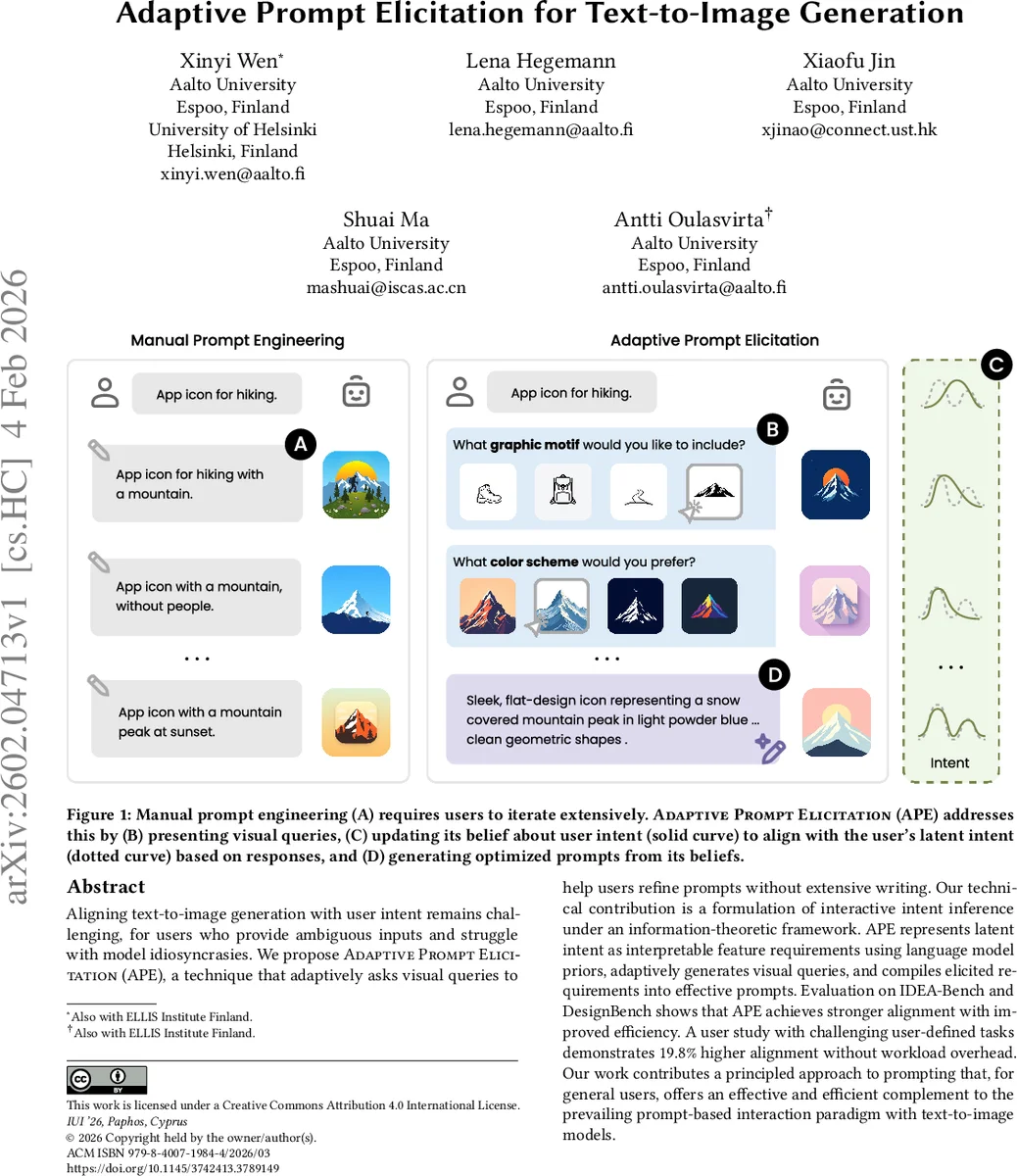

Aligning text-to-image generation with user intent remains challenging, for users who provide ambiguous inputs and struggle with model idiosyncrasies. We propose Adaptive Prompt Elicitation (APE), a technique that adaptively asks visual queries to help users refine prompts without extensive writing. Our technical contribution is a formulation of interactive intent inference under an information-theoretic framework. APE represents latent intent as interpretable feature requirements using language model priors, adaptively generates visual queries, and compiles elicited requirements into effective prompts. Evaluation on IDEA-Bench and DesignBench shows that APE achieves stronger alignment with improved efficiency. A user study with challenging user-defined tasks demonstrates 19.8% higher alignment without workload overhead. Our work contributes a principled approach to prompting that, for general users, offers an effective and efficient complement to the prevailing prompt-based interaction paradigm with text-to-image models.

💡 Research Summary

The paper tackles the persistent problem of aligning text‑to‑image generation with a user’s visual intent, especially when users are unable to articulate detailed prompts or lack knowledge of model quirks. The authors introduce Adaptive Prompt Elicitation (APE), an interactive framework that treats prompt optimization as a sequential intent‑inference problem. APE first accepts a minimal seed prompt from the user, then models the user’s latent visual intent (θ*) as a probability distribution over interpretable visual features such as subject, style, color, and composition. These features are derived from large language model priors that map natural language descriptors to structured feature tokens.

The core technical contribution is an information‑theoretic query selection mechanism. At each interaction step APE maintains a posterior p(θ|D) given the interaction history D (the set of user selections). For each candidate visual query q_i—presented as a set of image options (e.g., mountain, backpack, boot, trail)—the system computes the expected information gain (EIG):

EIG(q_i) = 𝔼_{θ∼p(θ|D)}

Comments & Academic Discussion

Loading comments...

Leave a Comment