Resilient Channel Charting Under Varying Radio Link Availability

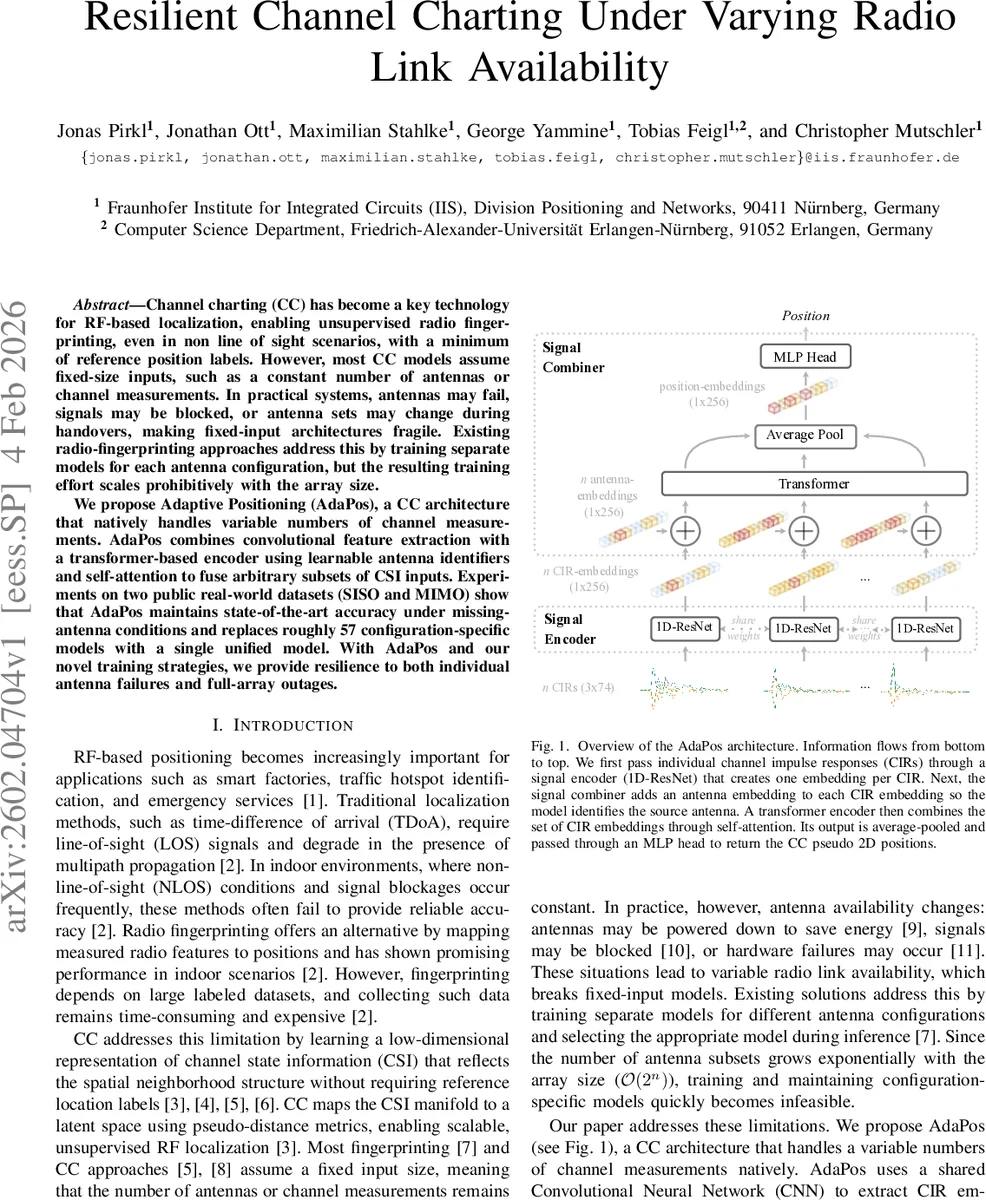

Channel charting (CC) has become a key technology for RF-based localization, enabling unsupervised radio fingerprinting, even in non line of sight scenarios, with a minimum of reference position labels. However, most CC models assume fixed-size inputs, such as a constant number of antennas or channel measurements. In practical systems, antennas may fail, signals may be blocked, or antenna sets may change during handovers, making fixed-input architectures fragile. Existing radio-fingerprinting approaches address this by training separate models for each antenna configuration, but the resulting training effort scales prohibitively with the array size. We propose Adaptive Positioning (AdaPos), a CC architecture that natively handles variable numbers of channel measurements. AdaPos combines convolutional feature extraction with a transformer-based encoder using learnable antenna identifiers and self-attention to fuse arbitrary subsets of CSI inputs. Experiments on two public real-world datasets (SISO and MIMO) show that AdaPos maintains state-of-the-art accuracy under missing-antenna conditions and replaces roughly 57 configuration-specific models with a single unified model. With AdaPos and our novel training strategies, we provide resilience to both individual antenna failures and full-array outages.

💡 Research Summary

Channel charting (CC) has emerged as a powerful unsupervised technique for radio‑frequency (RF) based localization, but most existing CC models assume a fixed‑size input vector—i.e., a constant number of antennas or channel measurements. In real‑world deployments antennas can be powered down to save energy, become blocked by obstacles, or fail outright, which makes fixed‑input architectures brittle. The common workaround—training a separate model for each possible antenna subset—scales exponentially with the number of antenna elements (O(2ⁿ)) and quickly becomes infeasible for modern massive‑MIMO arrays.

The authors address this fundamental limitation by proposing Adaptive Positioning (AdaPos), a novel CC architecture that natively supports a variable number of channel state information (CSI) inputs. AdaPos consists of two main blocks. First, a lightweight 1‑D ResNet processes each individual channel impulse response (CIR) and produces a compact embedding (256‑dimensional). The ResNet weights are shared across all antennas, dramatically reducing the parameter count and improving generalization. Second, a “signal combiner” augments each CIR embedding with a learnable antenna identifier vector, thereby encoding the source antenna information explicitly. These enriched embeddings are fed into a compact transformer encoder (3 layers, 8 attention heads, hidden size 256). The self‑attention mechanism dynamically fuses an arbitrary set of embeddings; because the transformer output has a fixed dimensionality, the model can handle any number of input antennas without architectural changes. The transformer output is average‑pooled and passed through a small MLP head that predicts a 2‑D pseudo‑position.

Training follows the standard Siamese CC paradigm: two CSI samples are passed through the same network and a loss forces the Euclidean distance between the predicted positions to match a pseudo‑distance derived from a CC metric (geodesic fusion of time‑based and angle‑delay profiles for the MIMO dataset, CIRA for the SISO dataset). Two data‑augmentation strategies are explored. “Fixed‑N” selects a constant number of antennas nₜ per batch, while “Random‑N” samples nₜ uniformly from 1 to the maximum available (a_max) for each batch, thereby exposing the model to a wide range of missing‑antenna scenarios during training. Both strategies are applied to AdaPos and to a baseline 1‑D ResNet model (which handles missing antennas by zero‑padding the corresponding input channels).

Experiments are conducted on two publicly available real‑world datasets. The Dichasus dataset captures a 4‑array MIMO setup in an industrial environment (8 antennas per array, 32 total channels, 50 MHz bandwidth at 1.27 GHz). The Fraunhofer 5G dataset contains a SISO down‑link scenario with six receiving antennas, 100 MHz bandwidth at 3.7 GHz, and a simulated warehouse layout. For each dataset the authors evaluate every feasible number of available antennas nₑ (excluding the trivial nₑ = 1 case) and report mean absolute error (MAE) after optimal affine alignment of the chart to ground‑truth coordinates.

Key findings include:

-

Single‑model universality – AdaPos replaces 57 configuration‑specific models (one for each antenna subset of size 2–6 in the 5G dataset) with a single unified network, achieving comparable or better MAE across all subsets.

-

Robustness to missing antennas – When only two antennas are available at inference, AdaPos trained with Random‑N achieves up to a 78 % reduction in MAE (down to 1.49 m) compared to a ResNet baseline trained on the full array. Even with 50 % of the antennas missing, AdaPos maintains MAE below 0.7 m on the MIMO dataset, whereas the ResNet error degrades to >1.0 m.

-

Effect of training variability – Models trained with a lower fixed nₜ are more tolerant of antenna loss at test time, but Random‑N training yields the best overall trade‑off, as it forces the attention mechanism to learn to weight whatever subset is present.

-

Attention‑driven fusion advantage – The learnable antenna identifiers enable the transformer to differentiate signals from different physical elements, preventing the permutation‑invariant averaging that would otherwise erase spatial diversity when only a few antennas remain.

-

Computational efficiency – Because the ResNet encoder is shared and the transformer operates on a set of embeddings rather than a large concatenated vector, the parameter count remains modest (~3 M parameters) and inference latency scales linearly with the number of present antennas.

The paper also discusses how existing CC pipelines can be retrofitted with AdaPos: the only required changes are replacing the fixed‑size CNN/MLP front‑end with the shared 1‑D ResNet encoder and adding the antenna‑embedding lookup table. Moreover, the Random‑N data‑augmentation can be applied to any CC model to improve its resilience, though only a transformer‑based combiner can fully exploit variable‑size inputs without zero‑padding artifacts.

In summary, AdaPos introduces a principled, transformer‑based approach to variable‑antenna channel charting. By coupling shared convolutional feature extraction, learnable antenna identifiers, and self‑attention, it achieves (i) true input‑size agnosticism, (ii) dramatic reduction in training and maintenance overhead (single model versus exponential family), and (iii) state‑of‑the‑art positioning accuracy even under severe antenna outages. These advances pave the way for robust, low‑maintenance RF localization in dynamic environments such as smart factories, autonomous indoor robots, and emergency‑response networks, where hardware reliability and energy constraints often cause unpredictable antenna availability.

Comments & Academic Discussion

Loading comments...

Leave a Comment