Disentangling meaning from language in LLM-based machine translation

Mechanistic Interpretability (MI) seeks to explain how neural networks implement their capabilities, but the scale of Large Language Models (LLMs) has limited prior MI work in Machine Translation (MT) to word-level analyses. We study sentence-level MT from a mechanistic perspective by analyzing attention heads to understand how LLMs internally encode and distribute translation functions. We decompose MT into two subtasks: producing text in the target language (i.e. target language identification) and preserving the input sentence’s meaning (i.e. sentence equivalence). Across three families of open-source models and 20 translation directions, we find that distinct, sparse sets of attention heads specialize in each subtask. Based on this insight, we construct subtask-specific steering vectors and show that modifying just 1% of the relevant heads enables instruction-free MT performance comparable to instruction-based prompting, while ablating these heads selectively disrupts their corresponding translation functions.

💡 Research Summary

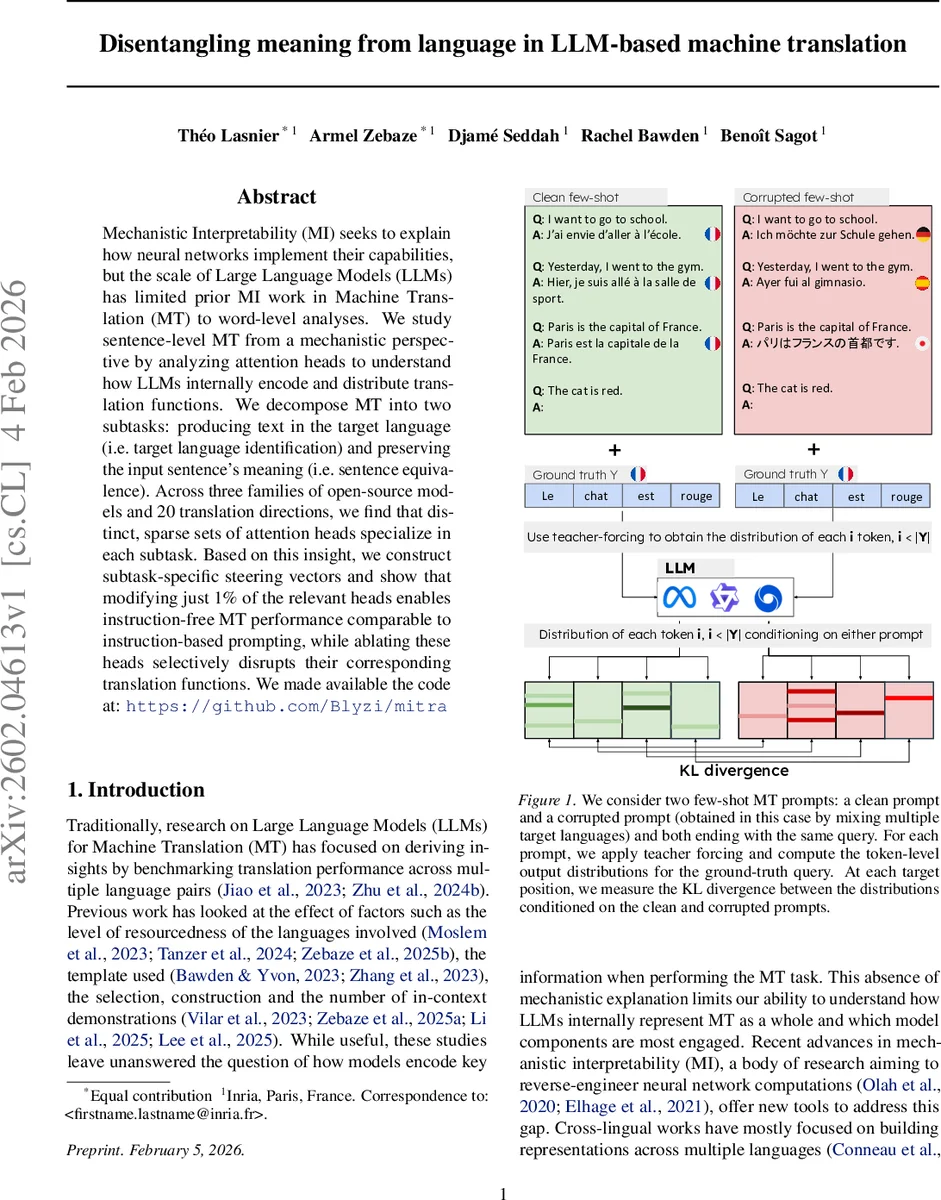

This paper investigates how large language models (LLMs) perform machine translation (MT) at the sentence level by dissecting the task into two distinct subtasks: target‑language identification and sentence‑equivalence (preserving meaning). Using activation‑patching, the authors contrast clean few‑shot prompts with deliberately corrupted prompts that either replace the target language or disrupt the semantic correspondence between source and target. For each token position they compute KL‑divergence between the output distributions of the clean and corrupted contexts, selecting the position with maximal divergence as the locus of the representation to be probed. By swapping the activations of individual attention heads from the corrupted to the clean context, they quantify each head’s contribution (Δ) to the subtask.

Experiments span three open‑source transformer families—Gemma‑3, Qwen‑3, and Llama‑3—and twenty translation directions covering a diverse set of languages (e.g., English‑French, English‑Japanese, etc.). Across all settings, a remarkably small fraction of heads (≈1 % of the total) accounts for the majority of the Δ signal. Moreover, these heads split cleanly into two groups: “language heads” that drive correct target‑language generation, and “equivalence heads” that encode the semantic mapping from source to target. The two groups are largely non‑overlapping and their specialization persists across all language pairs.

Leveraging this discovery, the authors construct two steering vectors. For each identified head they average its activations over many clean prompts, project them back into the residual stream, and scale them by a factor α during generation. Adding the language steering vector alone forces the model to produce output in the desired language even when the prompt lacks any explicit language instruction. Adding the equivalence steering vector ensures that the generated sentence is semantically faithful to the source. When both vectors are applied to roughly 1 % of the heads, translation quality (BLEU and human judgments) matches that of a fully instructed prompt such as “Translate from English to French:”. Conversely, ablating the same heads (replacing their activations with the mean clean activation or zeroing them) dramatically degrades performance: language heads ablation leads to systematic language‑mismatch errors, while equivalence heads ablation yields fluent output in the correct language but with severe meaning loss.

A further transfer experiment shows that equivalence vectors trained on one language pair can be reused on another with minimal performance loss, indicating that the semantic subnetwork is largely language‑agnostic. The paper thus provides strong empirical support for a “sparsity hypothesis” in which high‑level MT behavior emerges from a tiny, well‑organized subcircuit of attention heads. It also demonstrates a practical, instruction‑free steering method that can be used for efficient fine‑tuning, error diagnosis, and more transparent control of multilingual LLMs. The findings open avenues for future work on modular manipulation of LLM internals, cross‑lingual transfer of semantic representations, and the design of more interpretable multilingual systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment