ImmuVis: Hyperconvolutional Foundation Model for Imaging Mass Cytometry

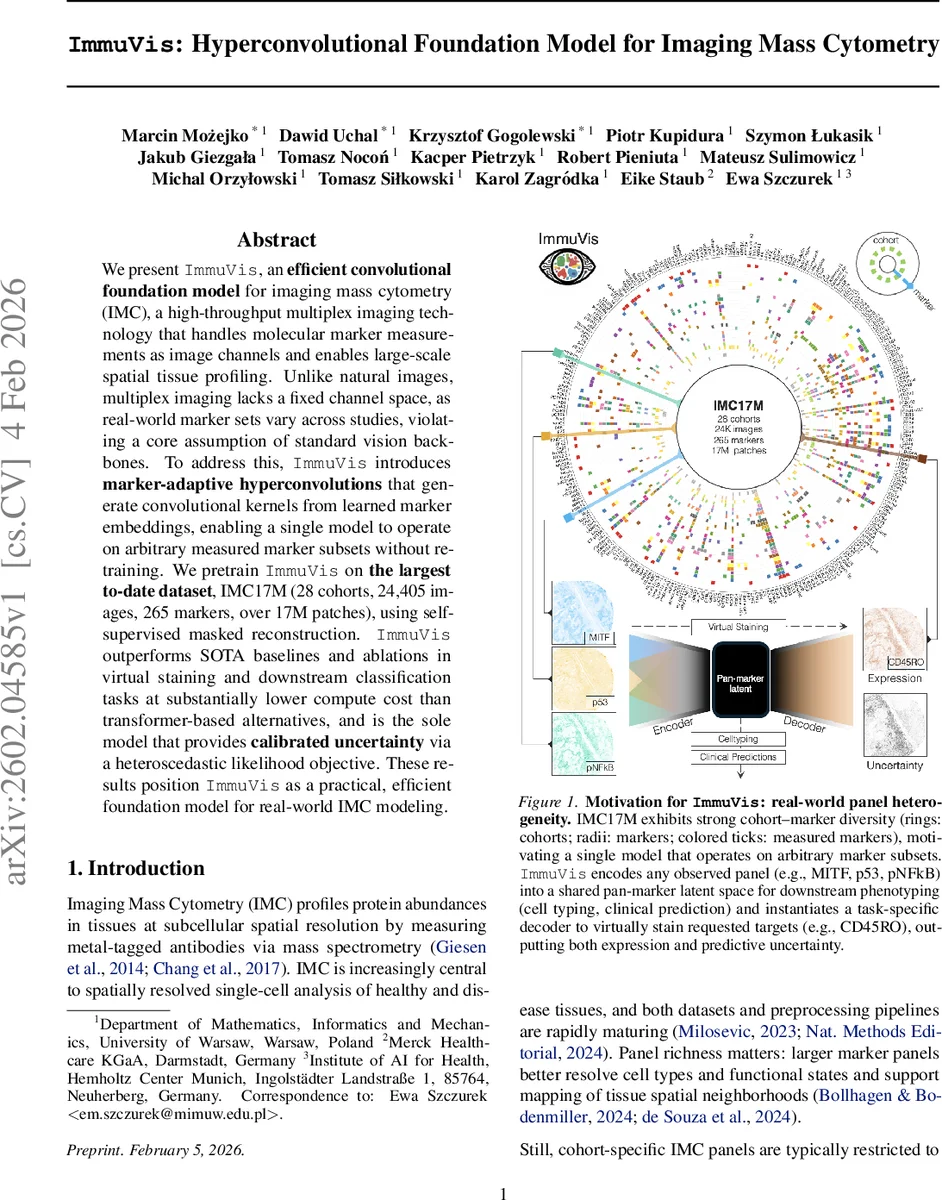

We present ImmuVis, an efficient convolutional foundation model for imaging mass cytometry (IMC), a high-throughput multiplex imaging technology that handles molecular marker measurements as image channels and enables large-scale spatial tissue profiling. Unlike natural images, multiplex imaging lacks a fixed channel space, as real-world marker sets vary across studies, violating a core assumption of standard vision backbones. To address this, ImmuVis introduces marker-adaptive hyperconvolutions that generate convolutional kernels from learned marker embeddings, enabling a single model to operate on arbitrary measured marker subsets without retraining. We pretrain ImmuVis on the largest to-date dataset, IMC17M (28 cohorts, 24,405 images, 265 markers, over 17M patches), using self-supervised masked reconstruction. ImmuVis outperforms SOTA baselines and ablations in virtual staining and downstream classification tasks at substantially lower compute cost than transformer-based alternatives, and is the sole model that provides calibrated uncertainty via a heteroscedastic likelihood objective. These results position ImmuVis as a practical, efficient foundation model for real-world IMC modeling.

💡 Research Summary

ImmuVis is a convolutional foundation model specifically designed for Imaging Mass Cytometry (IMC), a multiplex imaging technology where each molecular marker is represented as an image channel. Traditional vision backbones assume a fixed number of channels, which is incompatible with IMC data because marker panels vary across studies due to physical constraints and experimental design. To overcome this, ImmuVis introduces marker‑adaptive hyperconvolution modules that generate convolutional kernels on‑the‑fly from learned marker embeddings.

The architecture consists of three stages. First, a marker‑agnostic stem (shared convolutional block S) processes each input marker independently, producing low‑dimensional feature maps. Second, a hyperkernel generator ϕₑ maps each marker identifier to a set of convolutional weights; these weights are concatenated to form a dynamic convolution operator Hₑ that fuses the per‑marker features into a unified “pan‑marker” representation V. Because Hₑ is conditioned on the actual set of observed markers Iₑ, the model can handle any combination without architectural changes or retraining. Third, V is fed into a standard vision backbone (ConvNeXt‑v2 in the primary configuration, with a ViT‑based variant explored for comparison) to obtain a latent tensor Z.

For decoding, a symmetric hyperconvolution H_d, conditioned on the desired output marker set J_d, projects Z into marker‑specific feature maps. A lightweight convolutional head R then predicts, for each target marker, both a mean intensity μ and a log‑variance log σ², enabling pixel‑wise uncertainty estimation. The uncertainty is modeled via a heteroscedastic Gaussian likelihood, and the training loss is the negative log‑likelihood with a small epsilon for numerical stability.

Training follows a masked‑channel reconstruction paradigm. For each image, a subset of markers I_tgt is selected as the reconstruction target; a smaller subset I_in is kept as input, and spatial masking is applied to I_in. The model receives the masked input and is tasked with reconstructing all markers in I_tgt, learning both accurate intensities and calibrated uncertainties. This self‑supervised objective allows the model to exploit the massive, label‑sparse IMC17M dataset, which the authors curated: 28 cohorts, 24 405 whole‑slide images, 265 distinct markers, and roughly 17 million 224 × 224 patches. Pre‑processing includes variance‑stabilizing transformation, frequency‑based denoising, and intensity normalization.

Empirical results demonstrate several key advantages. On a held‑out head‑and‑neck cohort not seen during pre‑training, ImmuVis achieves lower mean absolute error (MAE = 0.0234) and mean squared error (MSE = 0.0028) in virtual staining than the prior state‑of‑the‑art transformer‑based method VirTues, while requiring substantially less compute (faster inference and lower FLOPs). The ConvNeXt‑v2 backbone outperforms the ViT variant, indicating that preserving local spatial detail via convolutions benefits the masked reconstruction task.

Uncertainty estimation is validated by correlating predicted log‑variance with actual reconstruction error; high‑uncertainty regions align with biologically ambiguous or low‑signal areas, providing a useful reliability signal for downstream analysis. Downstream transfer experiments show that the learned pan‑marker latent space supports accurate cell‑type classification and clinical outcome prediction, even when applied zero‑shot to new cohorts.

From a computational perspective, the hyperconvolution design scales linearly with the number of input markers only during kernel generation; subsequent convolutions operate on a fixed‑dimensional tensor, avoiding the quadratic token‑wise cost of transformer architectures. This efficiency makes ImmuVis suitable for cohort‑scale deployment where rapid virtual staining and uncertainty‑aware predictions are required.

In summary, ImmuVis combines (1) dynamic, marker‑conditioned kernel generation, (2) large‑scale self‑supervised pre‑training, (3) heteroscedastic uncertainty modeling, and (4) convolutional efficiency to deliver a practical, flexible foundation model for IMC data. The authors suggest that the hyperconvolution framework could be extended to other multiplex imaging modalities (e.g., spatial transcriptomics, CODEX), opening the door to universal multi‑channel vision models.

Comments & Academic Discussion

Loading comments...

Leave a Comment