SalFormer360: a transformer-based saliency estimation model for 360-degree videos

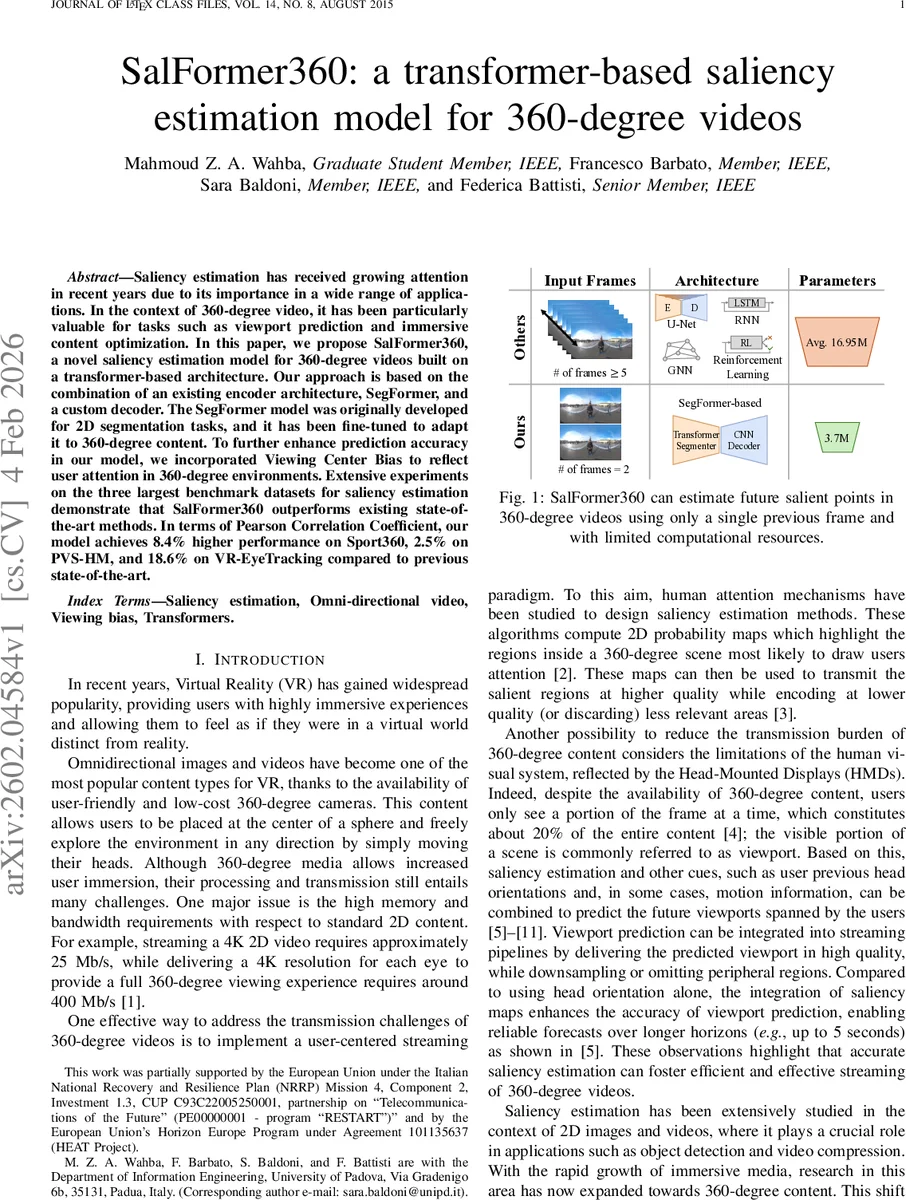

Saliency estimation has received growing attention in recent years due to its importance in a wide range of applications. In the context of 360-degree video, it has been particularly valuable for tasks such as viewport prediction and immersive content optimization. In this paper, we propose SalFormer360, a novel saliency estimation model for 360-degree videos built on a transformer-based architecture. Our approach is based on the combination of an existing encoder architecture, SegFormer, and a custom decoder. The SegFormer model was originally developed for 2D segmentation tasks, and it has been fine-tuned to adapt it to 360-degree content. To further enhance prediction accuracy in our model, we incorporated Viewing Center Bias to reflect user attention in 360-degree environments. Extensive experiments on the three largest benchmark datasets for saliency estimation demonstrate that SalFormer360 outperforms existing state-of-the-art methods. In terms of Pearson Correlation Coefficient, our model achieves 8.4% higher performance on Sport360, 2.5% on PVS-HM, and 18.6% on VR-EyeTracking compared to previous state-of-the-art.

💡 Research Summary

The paper introduces SalFormer360, a transformer‑based architecture for predicting saliency maps of 360‑degree videos. The authors build on SegFormer, a transformer encoder originally created for 2‑D semantic segmentation, and adapt it to the omnidirectional domain. Two frames, the current frame at time t and an earlier frame at time t − k (k = 5 in the experiments), are concatenated along the channel dimension to form a six‑channel input. The weights of the first convolutional layer of the SegFormer‑B0 encoder are duplicated so that the layer accepts this six‑channel tensor while retaining the pretrained ImageNet‑1K and ADE20K weights, which carries over the pretrained visual priors without extensive re‑training.
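The dual‑frame input and the kernel duplication can be sketched in plain Python. This is a minimal illustration, not the authors' code: the helper names are hypothetical, frames without a predecessor k steps back are simply skipped (an assumption), and the optional `scale` factor is an assumption that the summary does not mention.

```python
from typing import List, Sequence, Tuple

Kernel = List[List[float]]   # one 2-D kernel (kH x kW)
Filter = List[Kernel]        # one output filter: one kernel per input channel


def pair_frame_indices(num_frames: int, k: int = 5) -> List[Tuple[int, int]]:
    """Return (t, t - k) index pairs for every frame that has a frame k steps earlier."""
    return [(t, t - k) for t in range(k, num_frames)]


def stack_channels(current: Sequence, previous: Sequence) -> list:
    """Concatenate two channel-first frames (3 channels each) into one 6-channel input."""
    return list(current) + list(previous)


def duplicate_input_kernels(weight: List[Filter], scale: float = 1.0) -> List[Filter]:
    """Expand each filter from C to 2*C input channels by repeating its kernels.

    scale is hypothetical: 0.5 would keep the activation magnitude unchanged
    when both frames are identical; 1.0 copies the pretrained values as-is.
    """
    expanded = []
    for filt in weight:
        scaled = [[[w * scale for w in row] for row in kernel] for kernel in filt]
        copy = [[row[:] for row in kernel] for kernel in scaled]
        expanded.append(scaled + copy)
    return expanded
```

With `k = 5`, an eight‑frame clip yields the pairs (5, 0), (6, 1), and (7, 2), and a filter with three input kernels becomes a filter with six.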

The encoder processes the concatenated frames through hierarchical overlapping‑patch embeddings, multi‑scale self‑attention, and mix‑FFN blocks, producing a set of multi‑resolution feature maps that capture both global context and fine‑grained semantics despite the equirectangular projection’s polar distortions. The authors demonstrate that even without any spherical‑specific modifications, SegFormer can segment omnidirectional scenes reasonably well, identifying objects such as people, sky, and floor.
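To make the multi‑resolution hierarchy concrete, the sketch below computes the feature‑map shapes produced by the four overlapping‑patch embedding stages. The kernel, stride, and padding values and the channel widths are those of the standard SegFormer‑B0 (MiT‑B0) configuration; the 512 × 1024 input size in the usage note is a hypothetical example, since the summary does not state the resolution used in the paper.

```python
from typing import List, Tuple

# (kernel, stride, padding, output channels) per stage, standard MiT-B0 settings.
MIT_B0_STAGES = [(7, 4, 3, 32), (3, 2, 1, 64), (3, 2, 1, 160), (3, 2, 1, 256)]


def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of a strided convolution."""
    return (size + 2 * padding - kernel) // stride + 1


def feature_shapes(height: int, width: int) -> List[Tuple[int, int, int]]:
    """Return (channels, height, width) of the feature map after each encoder stage."""
    shapes = []
    h, w = height, width
    for kernel, stride, padding, channels in MIT_B0_STAGES:
        h = conv_out(h, kernel, stride, padding)
        w = conv_out(w, kernel, stride, padding)
        shapes.append((channels, h, w))
    return shapes
```

For a 512 × 1024 equirectangular frame, `feature_shapes(512, 1024)` gives maps at 1/4, 1/8, 1/16, and 1/32 of the input resolution; the last one is the lowest‑resolution map.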

A custom decoder follows the encoder. It receives the lowest‑resolution feature map, passes it through three 3 × 3 convolution‑batch‑norm‑ReLU blocks, and upsamples the result with bilinear interpolation to the original spatial resolution. Unlike typical U‑Net designs, the decoder does not employ skip connections; instead, it relies on the transformer encoder to supply sufficient spatial detail, while the CNN layers refine edge information and produce a dense saliency prediction.
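The final upsampling step can be illustrated with a small bilinear interpolation routine. This sketch covers only the interpolation, not the convolution blocks, and it uses a corner‑aligned sampling grid, which is an assumption; the summary does not say which convention the authors use.

```python
from typing import List

Map2D = List[List[float]]


def bilinear_upsample(grid: Map2D, out_h: int, out_w: int) -> Map2D:
    """Resize a 2-D map with bilinear interpolation (corner-aligned sampling)."""
    in_h, in_w = len(grid), len(grid[0])
    out = []
    for i in range(out_h):
        # Fractional source row for this output row.
        y = i * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(y)
        y1 = min(y0 + 1, in_h - 1)
        fy = y - y0
        row = []
        for j in range(out_w):
            # Fractional source column for this output column.
            x = j * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(x)
            x1 = min(x0 + 1, in_w - 1)
            fx = x - x0
            top = grid[y0][x0] * (1 - fx) + grid[y0][x1] * fx
            bottom = grid[y1][x0] * (1 - fx) + grid[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out
```

Each output value is a weighted average of its four nearest source values, so the upsampled map is smooth but adds no detail beyond what the lowest‑resolution features already encode.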

To incorporate known human viewing behavior, the model adds a Viewing Center Bias (VCB) after the decoder. VCB is a fixed, normalized 2‑D Gaussian centered on the equirectangular image, reflecting the tendency of viewers to look toward the center of the equirectangular frame. The bias map is combined with the decoder output through element‑wise multiplication and summation to yield the final saliency map. This simple post‑processing step significantly improves performance on datasets where head‑orientation data are sparse.
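A center‑bias map of this kind can be sketched as follows. Several details are assumptions rather than facts from the paper: the standard deviations (given here as fractions of the image size), the choice to normalize the map to a peak of 1, and the combination rule `pred + pred * bias`, which is only one possible reading of how the bias and the decoder output are multiplied and summed.

```python
import math
from typing import List

Map2D = List[List[float]]


def viewing_center_bias(height: int, width: int,
                        sigma_y: float = 0.25, sigma_x: float = 0.25) -> Map2D:
    """Fixed 2-D Gaussian centered on the image, normalized to a peak of 1.

    sigma_y and sigma_x are hypothetical fractions of the image height and width.
    """
    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    sy, sx = sigma_y * height, sigma_x * width
    bias = [[math.exp(-0.5 * (((r - cy) / sy) ** 2 + ((c - cx) / sx) ** 2))
             for c in range(width)]
            for r in range(height)]
    peak = max(max(row) for row in bias)
    return [[v / peak for v in row] for row in bias]


def apply_bias(pred: Map2D, bias: Map2D) -> Map2D:
    """Assumed combination: element-wise product added back to the prediction."""
    return [[p + p * b for p, b in zip(prow, brow)]
            for prow, brow in zip(pred, bias)]
```

Because the map is fixed, it can be computed once per resolution and reused for every frame.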

The authors evaluate SalFormer360 on three large‑scale 360‑degree video saliency benchmarks: Sport360, PVS‑HM, and VR‑EyeTracking. They report Pearson Correlation Coefficient (CC), Kullback‑Leibler divergence (KL), and Normalized Scanpath Saliency (NSS). SalFormer360 achieves CC improvements of 8.4 % on Sport360, 2.5 % on PVS‑HM, and 18.6 % on VR‑EyeTracking compared with the previously reported best results. Ablation studies confirm that each component (dual‑frame input, the transformer encoder, the custom decoder, and the VCB) contributes measurably to the overall gain; removing VCB alone reduces the VR‑EyeTracking CC by more than 12 %.

In terms of computational efficiency, the model contains about 16.95 M parameters and requires about 8.2 G FLOPs per inference, enabling real‑time processing (≈ 30 fps on an RTX 3090). This is roughly half the parameter count and less than half the compute of competing 3‑D CNN or spherical‑convolution approaches, which often exceed 30 M parameters and 20 G FLOPs.

The paper also contributes two extended datasets. The authors generate ground‑truth saliency maps for PVS‑HM and VR‑EyeTracking by converting head‑orientation logs into probabilistic heatmaps and extracting RGB frames from the original MP4 videos, making these resources more accessible for future research.
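The conversion from head‑orientation logs to heatmaps can be sketched as below. The coordinate convention (yaw in [-180, 180] degrees mapped left to right, pitch in [-90, 90] degrees mapped bottom to top), the pixel‑space Gaussian, and the `sigma_px` value are all assumptions; the summary does not describe the authors' exact procedure, and a pixel‑space Gaussian ignores the stretching of the equirectangular projection near the poles.

```python
import math
from typing import Iterable, List, Tuple


def orientation_to_pixel(yaw_deg: float, pitch_deg: float,
                         width: int, height: int) -> Tuple[int, int]:
    """Map a head orientation to (row, col) in an equirectangular image."""
    col = int((yaw_deg + 180.0) / 360.0 * width) % width
    row = min(int((90.0 - pitch_deg) / 180.0 * height), height - 1)
    return row, col


def head_heatmap(orientations: Iterable[Tuple[float, float]],
                 width: int, height: int, sigma_px: float = 2.0) -> List[List[float]]:
    """Accumulate one Gaussian per (yaw, pitch) sample and normalize to sum to 1."""
    heat = [[0.0] * width for _ in range(height)]
    for yaw, pitch in orientations:
        r0, c0 = orientation_to_pixel(yaw, pitch, width, height)
        for r in range(height):
            for c in range(width):
                dc = abs(c - c0)
                dc = min(dc, width - dc)  # the image wraps around horizontally
                heat[r][c] += math.exp(-((r - r0) ** 2 + dc ** 2) / (2 * sigma_px ** 2))
    total = sum(map(sum, heat))
    return [[v / total for v in row] for row in heat] if total > 0 else heat
```

The horizontal wrap‑around matters because the left and right edges of an equirectangular frame are adjacent on the sphere.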

Overall, SalFormer360 demonstrates that a well‑chosen transformer encoder, combined with a lightweight CNN decoder and a human‑centric bias, can outperform more complex spherical‑convolution or optical‑flow pipelines while maintaining low computational overhead. The work opens several avenues for future research: incorporating spherical‑aware positional encodings to further mitigate equirectangular distortion, extending the temporal window beyond two frames to capture longer motion cues, and integrating multimodal signals such as audio or textual metadata. Moreover, the model’s modest resource requirements make it a promising candidate for deployment in real‑time 360‑degree streaming pipelines, where saliency‑guided bitrate allocation and viewport prediction are critical for delivering high‑quality immersive experiences.
