BrainVista: Modeling Naturalistic Brain Dynamics as Multimodal Next-Token Prediction

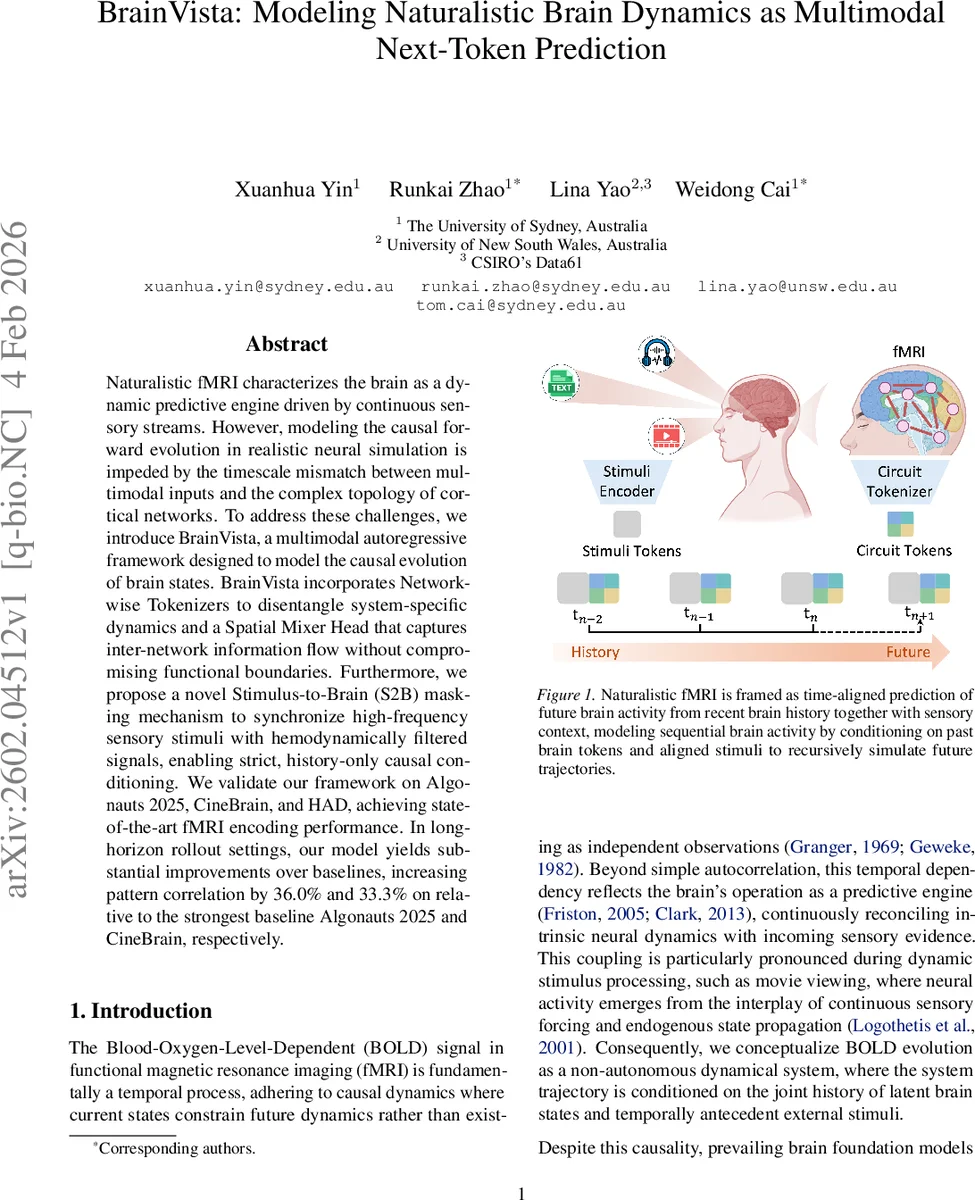

Naturalistic fMRI characterizes the brain as a dynamic predictive engine driven by continuous sensory streams. However, modeling the causal forward evolution in realistic neural simulation is impeded by the timescale mismatch between multimodal inputs and the complex topology of cortical networks. To address these challenges, we introduce BrainVista, a multimodal autoregressive framework designed to model the causal evolution of brain states. BrainVista incorporates Network-wise Tokenizers to disentangle system-specific dynamics and a Spatial Mixer Head that captures inter-network information flow without compromising functional boundaries. Furthermore, we propose a novel Stimulus-to-Brain (S2B) masking mechanism to synchronize high-frequency sensory stimuli with hemodynamically filtered signals, enabling strict, history-only causal conditioning. We validate our framework on Algonauts 2025, CineBrain, and HAD, achieving state-of-the-art fMRI encoding performance. In long-horizon rollout settings, our model yields substantial improvements over baselines, increasing pattern correlation by 36.0% and 33.3% on relative to the strongest baseline Algonauts 2025 and CineBrain, respectively.

💡 Research Summary

BrainVista reframes naturalistic fMRI analysis as a multimodal next‑token prediction problem, where the brain’s BOLD dynamics are treated as a causal sequence conditioned on past brain states and contemporaneous sensory inputs. The authors identify two fundamental obstacles that have limited prior brain foundation models: (1) temporal leakage caused by the mismatch between high‑frequency stimulus streams (video, audio, text) and the low‑frequency, hemodynamically delayed BOLD signal, and (2) the heterogeneous functional architecture of the cortex, which is poorly captured by global compression schemes.

To solve (1), they introduce Stimulus‑to‑Brain (S2B) masking. In a standard Transformer, self‑attention allows any token to attend to any other token, which creates a look‑ahead bias when future stimulus tokens are visible to the brain token predictor. S2B constructs a binary mask that is lower‑triangular over the interleaved stimulus‑brain token sequence, ensuring that at time step t the brain token can only attend to stimulus tokens up to t and never to future stimulus tokens. This enforces a strict past‑only conditioning regime, aligning the training graph with the inference graph and preserving causal fidelity.

To address (2), the paper proposes Network‑wise Tokenizers. Using the Schaefer‑Yeo 7‑network parcellation, the whole‑brain parcel vector is split into K functional subnetworks. For each network a lightweight two‑layer MLP encoder‑decoder pair is pretrained to reconstruct the original parcel signals, thereby learning a compact “circuit token” representation specific to that network. This design preserves intra‑network variance, reduces dimensionality (from thousands of parcels to a few dozen tokens per network), and yields tokens that are comparable across subjects because the network partition is fixed.

The overall pipeline proceeds as follows: (i) frozen pretrained encoders map raw video, audio, and text streams into modality‑specific embeddings; these are down‑sampled to the fMRI repetition time (TR) via mean‑pooling and concatenated into a shared stimulus token τ⁽ˢ⁾ₜ. (ii) Parcel‑wise fMRI at each TR is encoded by the network‑wise tokenizers into a stitched brain token τ⁽ᶠ⁾ₜ (the concatenation of all network tokens). (iii) The stimulus and brain tokens are interleaved to form a sequence Sₜ of length 2L (where L is the temporal context window). (iv) A GPT‑style causal Transformer processes Sₜ under the S2B mask, producing a hidden state for the last brain token position. (v) A linear head predicts the next brain token τ̂⁽ᶠ⁾ₜ₊₁. (vi) The Spatial Mixer Head then treats the K network sub‑tokens of τ̂⁽ᶠ⁾ₜ₊₁ as a separate sequence and applies a multi‑head self‑attention to model cross‑network interactions explicitly, yielding an interpretable K × K attention matrix that reflects putative functional coupling. (vii) The predicted brain token is decoded by the network‑wise decoders back into parcel‑wise BOLD values.

Training optimizes (a) reconstruction loss for each tokenizer‑decoder pair, ensuring high‑fidelity token embeddings, and (b) next‑token prediction loss under the S2B‑masked Transformer. During inference, the model can be rolled out autoregressively: the predicted brain token at step t is fed back as input for step t + 1, while the stimulus token for the upcoming TR is always provided (the stimulus stream is assumed observable).

Empirical evaluation spans three public naturalistic fMRI benchmarks: Algonauts 2025 (movie‑watching), CineBrain (cinematic clips), and HAD (high‑resolution audiovisual dataset). The authors report both single‑step encoding performance (Pearson correlation between predicted and measured BOLD) and multi‑step rollout stability (pattern correlation over horizons up to 20 s). BrainVista achieves state‑of‑the‑art results, surpassing the strongest baselines by 36 % on Algonauts 2025 and 33.3 % on CineBrain in long‑horizon rollout correlation. Ablation studies demonstrate that removing S2B masking leads to a dramatic drop in rollout fidelity (due to look‑ahead leakage), while replacing network‑wise tokenizers with a global autoencoder degrades both single‑step accuracy and cross‑subject transferability.

The paper acknowledges several limitations: (1) reliance on frozen stimulus encoders prevents end‑to‑end adaptation of visual/audio/text representations to the brain prediction task; (2) fixed network partitions may not capture individual‑specific functional reconfigurations; (3) error accumulation remains non‑trivial for very long horizons, suggesting the need for corrective mechanisms such as scheduled sampling or diffusion‑based refinement. Future directions include integrating variational tokenizers for uncertainty modeling, learning adaptive stimulus encoders, and extending the Spatial Mixer to hierarchical attention that respects known anatomical gradients.

Overall, BrainVista offers a principled, causally consistent framework for simulating naturalistic brain dynamics, bridging the gap between high‑frequency multimodal stimuli and slow hemodynamic responses while respecting the brain’s functional heterogeneity. Its combination of S2B masking, network‑wise tokenization, and explicit cross‑network mixing sets a new benchmark for generative brain modeling and opens avenues for realistic neural simulation, brain‑computer interfacing, and hypothesis testing in cognitive neuroscience.

Comments & Academic Discussion

Loading comments...

Leave a Comment