LCUDiff: Latent Capacity Upgrade Diffusion for Faithful Human Body Restoration

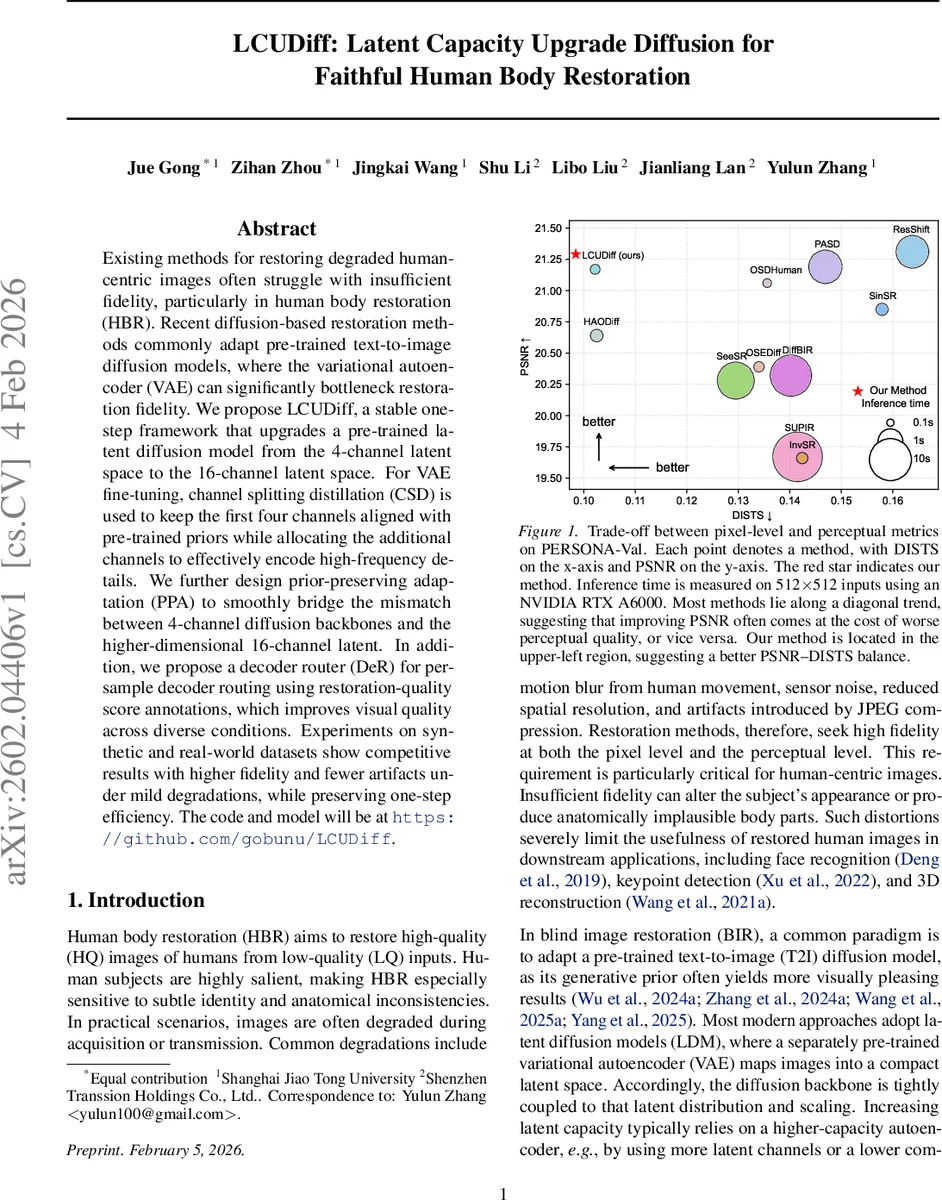

Existing methods for restoring degraded human-centric images often struggle with insufficient fidelity, particularly in human body restoration (HBR). Recent diffusion-based restoration methods commonly adapt pre-trained text-to-image diffusion models, where the variational autoencoder (VAE) can significantly bottleneck restoration fidelity. We propose LCUDiff, a stable one-step framework that upgrades a pre-trained latent diffusion model from the 4-channel latent space to the 16-channel latent space. For VAE fine-tuning, channel splitting distillation (CSD) is used to keep the first four channels aligned with pre-trained priors while allocating the additional channels to effectively encode high-frequency details. We further design prior-preserving adaptation (PPA) to smoothly bridge the mismatch between 4-channel diffusion backbones and the higher-dimensional 16-channel latent. In addition, we propose a decoder router (DeR) for per-sample decoder routing using restoration-quality score annotations, which improves visual quality across diverse conditions. Experiments on synthetic and real-world datasets show competitive results with higher fidelity and fewer artifacts under mild degradations, while preserving one-step efficiency. The code and model will be at https://github.com/gobunu/LCUDiff.

💡 Research Summary

LCUDiff addresses a critical bottleneck in human‑centric image restoration: the limited capacity of the variational auto‑encoder (VAE) used in latent diffusion models (LDMs) such as Stable Diffusion. Conventional LDMs compress images with an 8× down‑sampling factor into a 4‑channel latent (mean and variance), which discards high‑frequency details essential for faithful human body restoration (HBR). The authors propose a two‑stage framework that expands the latent space to 16 channels while preserving compatibility with the pre‑trained diffusion prior.

In the first stage, a 16‑channel VAE is fine‑tuned using Channel Splitting Distillation (CSD). The first four channels (anchor channels) are forced to match the output of the frozen 4‑channel VAE via an L1 distillation loss, ensuring that the diffusion prior remains valid. The remaining twelve channels (refine channels) are dedicated to encoding high‑frequency residuals that the original VAE cannot capture. Reconstruction loss combines L1, the perceptual metric DISTS, and an edge‑aware term to preserve fine structures. A KL divergence term and the CSD loss regularize the latent distribution.

The second stage adapts the pre‑trained 4‑channel UNet to the new 16‑channel latent using Prior‑Preserving Adaptation (PPA). PPA builds two parallel input branches: an anchor‑prior branch that feeds the frozen 4‑channel latent, and a high‑dimensional branch that processes the full 16‑channel latent. A fusion schedule gradually shifts weight from the anchor branch to the high‑dimensional branch during training, stabilizing optimization without increasing inference cost. The diffusion model operates in a single denoising step: the low‑quality image is encoded to a latent, treated as a noisy latent at a fixed timestep (τ = 249), and denoised once using classifier‑free guidance (CFG) with dual‑prompt embeddings (positive and negative cues extracted from the degraded image). LoRA is employed to fine‑tune the UNet efficiently.

To further improve visual quality, the authors introduce a Decoder Router (DeR). For each restored latent, both the original 4‑channel decoder and the newly trained 16‑channel decoder generate images. A small MLP takes the concatenated low‑quality latent and the denoised latent as input and predicts, via a soft‑max, which decoder should be used for that sample, based on learned preferences from PSNR/DISTS scores. This per‑sample routing adds negligible overhead while leveraging the complementary strengths of the two decoders.

Extensive experiments on synthetic and real‑world datasets (e.g., PERSON A‑Val) demonstrate that LCUDiff achieves a superior trade‑off between pixel‑level fidelity (PSNR) and perceptual quality (DISTS). Compared with state‑of‑the‑art one‑step methods (DiffBIR, OSEDiff, SinSR) and multi‑step diffusion approaches, LCUDiff attains higher PSNR (≈ 28.7 dB vs. 26‑27 dB) and lower DISTS (≈ 0.12 vs. 0.18), while maintaining a fast inference time (~0.12 s for 512 × 512 on an RTX A6000). Qualitative results show clearer clothing textures, sharper hair strands, and more anatomically plausible body outlines.

The paper’s contributions are fourfold: (1) expanding latent capacity to 16 channels with CSD to retain high‑frequency details, (2) designing PPA to bridge the dimensional mismatch between the upgraded VAE and the frozen diffusion backbone, (3) proposing a quality‑driven Decoder Router for sample‑wise decoder selection, and (4) delivering high‑fidelity HBR in a single‑step diffusion pipeline. Limitations include the need for careful hyper‑parameter tuning of the fusion schedule and loss weights, and the fact that the UNet remains 4‑channel, leaving some residual mismatch that could be eliminated by a fully 16‑channel UNet. Future work may explore dynamic channel allocation, meta‑learning for routing, and end‑to‑end training of a 16‑channel diffusion backbone.

Overall, LCUDiff presents a practical and effective solution to the VAE bottleneck in diffusion‑based restoration, achieving state‑of‑the‑art fidelity and speed for human body restoration, and setting a promising direction for high‑capacity latent diffusion models in image restoration tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment