EXaMCaP: Subset Selection with Entropy Gain Maximization for Probing Capability Gains of Large Chart Understanding Training Sets

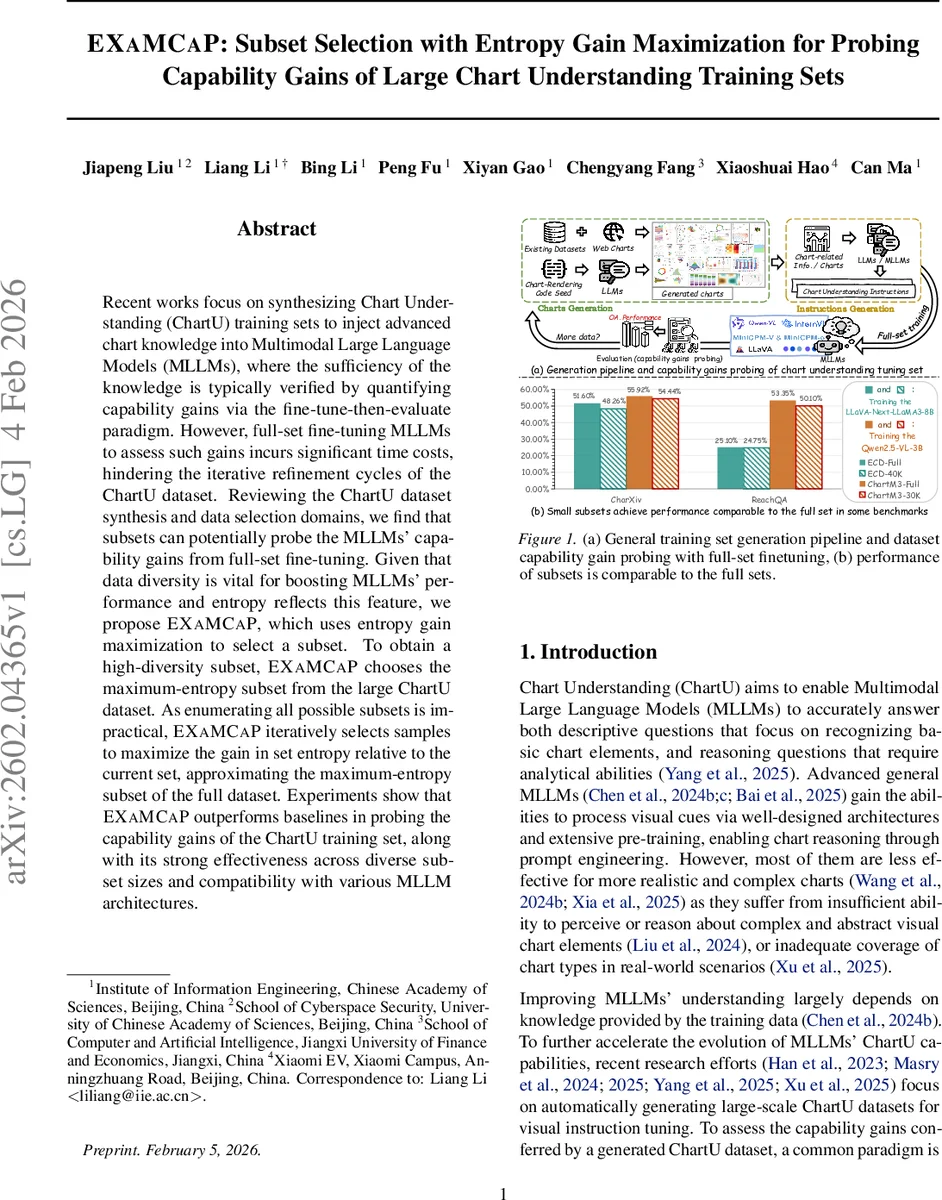

Recent works focus on synthesizing Chart Understanding (ChartU) training sets to inject advanced chart knowledge into Multimodal Large Language Models (MLLMs), where the sufficiency of the knowledge is typically verified by quantifying capability gains via the fine-tune-then-evaluate paradigm. However, full-set fine-tuning MLLMs to assess such gains incurs significant time costs, hindering the iterative refinement cycles of the ChartU dataset. Reviewing the ChartU dataset synthesis and data selection domains, we find that subsets can potentially probe the MLLMs’ capability gains from full-set fine-tuning. Given that data diversity is vital for boosting MLLMs’ performance and entropy reflects this feature, we propose EXaMCaP, which uses entropy gain maximization to select a subset. To obtain a high-diversity subset, EXaMCaP chooses the maximum-entropy subset from the large ChartU dataset. As enumerating all possible subsets is impractical, EXaMCaP iteratively selects samples to maximize the gain in set entropy relative to the current set, approximating the maximum-entropy subset of the full dataset. Experiments show that EXaMCaP outperforms baselines in probing the capability gains of the ChartU training set, along with its strong effectiveness across diverse subset sizes and compatibility with various MLLM architectures.

💡 Research Summary

EXaMCaP addresses the prohibitive computational cost of fine‑tuning multimodal large language models (MLLMs) on massive Chart Understanding (ChartU) datasets in order to measure the capability gains that such data provide. The authors observe that a carefully chosen subset can serve as a proxy for the full dataset, provided the subset preserves the diversity of chart types, visual patterns, and reasoning tasks. To quantify diversity, they adopt entropy as a principled metric, specifically the von Neumann entropy of a normalized similarity matrix derived from model embeddings.

The pipeline consists of three stages. First, Extreme Sample Filtering removes samples with excessively high or low perplexity (PPL) scores, which are likely to be either too noisy or too trivial for effective learning. This step yields a cleaner candidate pool. Second, the remaining samples are partitioned using K‑Means clustering, reducing the search space while ensuring global coverage. Within each cluster, a greedy entropy‑gain maximization procedure iteratively selects the sample that yields the largest increase in von Neumann entropy when added to the current subset. Entropy is computed by constructing a Gaussian similarity matrix of the selected samples, normalizing it to a density matrix, and then applying the trace‑log formula. The greedy selection continues until a predefined subset size K (typically 0.5 %–5 % of the full set) is reached. Finally, the selected subset is used to fine‑tune the target MLLM, and the resulting model is evaluated on standard ChartU benchmarks (ReachQA, CharXiv, ChartM3, etc.).

Experiments involve two state‑of‑the‑art MLLMs—LLaVA‑Next‑LLaMA3‑8B and Qwen2.5‑VL‑3B—trained on several large ChartU corpora (ChartM3‑Full, ECD‑Full, etc.). EXaMCaP’s subsets consistently achieve 92 %–98 % of the performance gains obtained by full‑set fine‑tuning, and in some benchmarks even surpass the full‑set results. Compared with baseline subset selection methods such as random sampling, gradient‑based importance, COINCIDE, DataTailor, and Prism, EXaMCaP delivers the highest average capability‑gain ratio across all tested settings. Moreover, the approach reduces fine‑tuning time and GPU consumption by more than 90 % relative to full‑set training, dramatically accelerating the iterative data‑generation‑evaluation loop.

Key contributions include: (1) a cost‑effective framework for probing the knowledge contribution of large ChartU datasets, (2) the novel application of von Neumann entropy to measure and maximize data diversity, (3) a scalable clustering‑plus‑greedy algorithm that avoids exhaustive subset enumeration, and (4) extensive validation across multiple models, dataset sizes, and benchmark tasks.

The paper also discusses limitations. Entropy calculations rely on embeddings from a pre‑trained MLLM; if those embeddings are of low quality, the diversity estimate may be inaccurate. The greedy selection does not guarantee a globally optimal subset, and performance can be sensitive to the number of clusters and the initial K‑Means seeds. Extreme sample filtering may discard “hard” examples that are valuable for assessing a model’s robustness. Future work could explore alternative diversity metrics, reinforcement‑learning‑based subset optimization, or adaptive weighting of chart domains to further improve representativeness.

In summary, EXaMCaP provides a practical, theoretically grounded solution for efficiently evaluating the impact of large‑scale chart understanding data on multimodal models, opening the door to faster, more iterative development cycles in this emerging research area.

Comments & Academic Discussion

Loading comments...

Leave a Comment