Can Vision Replace Text in Working Memory? Evidence from Spatial n-Back in Vision-Language Models

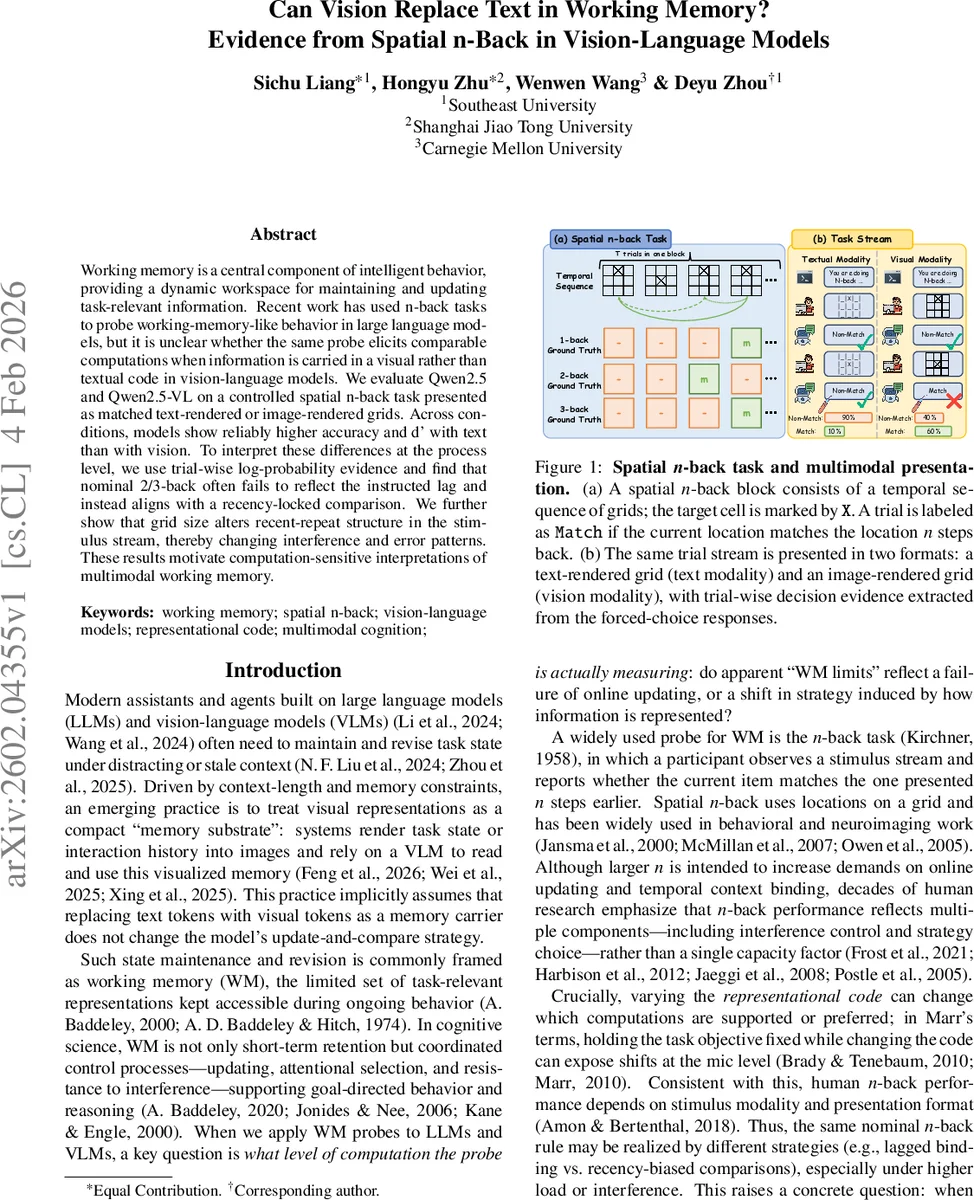

Working memory is a central component of intelligent behavior, providing a dynamic workspace for maintaining and updating task-relevant information. Recent work has used n-back tasks to probe working-memory-like behavior in large language models, but it is unclear whether the same probe elicits comparable computations when information is carried in a visual rather than textual code in vision-language models. We evaluate Qwen2.5 and Qwen2.5-VL on a controlled spatial n-back task presented as matched text-rendered or image-rendered grids. Across conditions, models show reliably higher accuracy and d’ with text than with vision. To interpret these differences at the process level, we use trial-wise log-probability evidence and find that nominal 2/3-back often fails to reflect the instructed lag and instead aligns with a recency-locked comparison. We further show that grid size alters recent-repeat structure in the stimulus stream, thereby changing interference and error patterns. These results motivate computation-sensitive interpretations of multimodal working memory.

💡 Research Summary

This paper investigates whether vision‑language models (VLMs) can use visual input as a memory substrate in the same way that large language models (LLMs) use textual tokens, by adapting the classic spatial n‑back task to a multimodal setting. The authors evaluate Qwen2.5‑7B (text‑only) and its multimodal counterpart Qwen2.5‑VL‑7B on a controlled spatial n‑back paradigm presented either as an ASCII‑rendered grid (text modality) or as a programmatically generated image of the same grid (vision modality). For each condition they vary the nominal memory load n (1, 2, 3) and the grid size N (3, 4, 5, 7), generating 50 blocks of 24 trials per (N, n) pair, yielding 1,200 trials per model‑modality combination.

The task is framed as a forced‑choice binary decision: “Match” (m) if the current target location coincides with the one n steps back, otherwise “Non‑match” (−). Models respond deterministically (temperature = 0) and the authors record both the categorical label and the token‑level log‑probabilities for the two candidate labels. From these they compute a continuous evidence score sₜ = log p(m) − log p(−) for each trial, enabling signal‑detection analyses (hit rate H, false‑alarm rate FA, accuracy, d′) and a threshold‑free discriminability metric (area under the ROC curve, AUC).

Behavioral results show a robust modality effect: across all loads and grid sizes, text‑grid inputs yield higher accuracy, higher hit rates, and substantially larger d′ values than vision‑grid inputs. The gap is most pronounced at higher loads (2‑back, 3‑back) where the vision condition’s d′ collapses to near‑chance levels. This collapse is driven primarily by a dramatic drop in hit rates rather than an increase in false alarms, indicating that the model becomes overly conservative and rarely outputs “Match”. Load effects are also evident: increasing n reduces discriminability for all models, consistent with the greater temporal binding demands of higher n‑back. Interestingly, larger grid sizes improve performance even though the nominal state space expands; this is attributed to a reduced frequency of recent‑repeat lures, which lessens proactive interference.

Process‑level diagnostics reveal that the models do not reliably implement the instructed n‑back lag. A lag‑scan analysis of the evidence scores shows that, especially for 2‑back and 3‑back, the trial‑wise evidence aligns more closely with a recency‑based comparison (i.e., comparing to the immediately preceding trial) than with the true n‑step lag. This suggests that both the LLM and the VLM adopt a recency‑biased strategy when the task demands exceed their effective working‑memory capacity. AUC analyses confirm that the vision condition provides weaker evidence separation than the text condition, further supporting the notion of reduced discriminability rather than mere criterion shift.

To test the generality of these findings, the authors replicate the experiments with larger model families (Llama‑3.1‑8B Vision, Llama‑3.2‑11B Vision) and larger scales of the Qwen family (Qwen2.5‑32B and Qwen2.5‑VL‑32B). The same ordering of modalities (text > VLM‑text > VLM‑vision) persists, and the magnitude of the modality gap often widens with model size, especially for larger grids and higher loads. This pattern suggests that the visual tokenization pipeline (which compresses a 256 × 256 image into a fixed number of visual tokens) may introduce information loss that scales with model capacity, affecting the internal temporal‑binding mechanisms.

The authors conclude that changing the representational code from text to vision fundamentally alters the computations performed by multimodal models on a classic working‑memory probe. Consequently, the common engineering practice of rendering task state as images for VLMs to “read” does not guarantee that the same working‑memory processes are engaged as when the state is rendered as text. The paper advocates for multimodal evaluation that goes beyond end‑point accuracy, incorporating process diagnostics such as evidence‑based discriminability and lag‑alignment analyses to verify whether models truly implement the intended temporal‑context binding and interference‑control operations. Future work is suggested to improve visual tokenization, design vision‑specific memory mechanisms, or develop hybrid strategies that preserve the benefits of visual representations without sacrificing working‑memory fidelity.

Comments & Academic Discussion

Loading comments...

Leave a Comment