Agent-Omit: Training Efficient LLM Agents for Adaptive Thought and Observation Omission via Agentic Reinforcement Learning

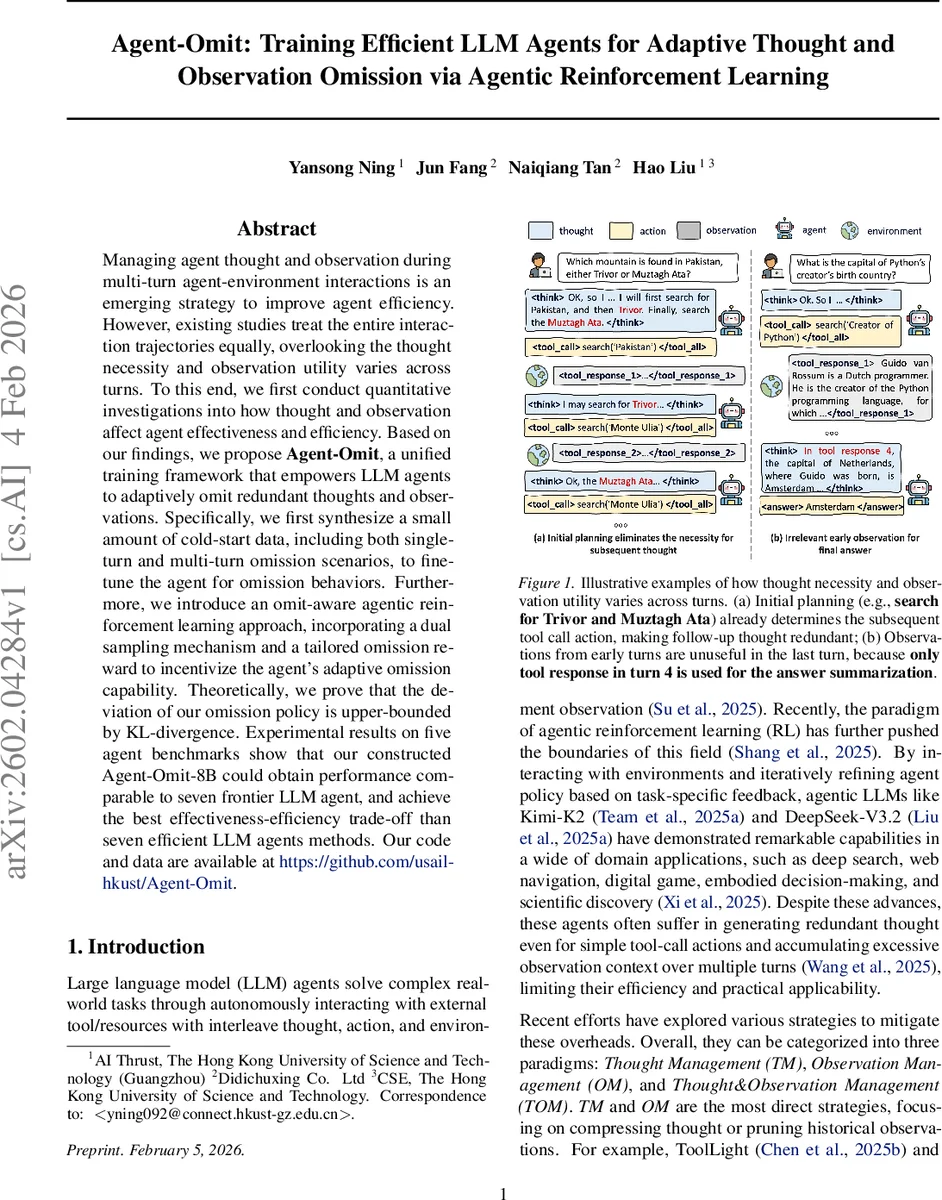

Managing agent thought and observation during multi-turn agent-environment interactions is an emerging strategy to improve agent efficiency. However, existing studies treat the entire interaction trajectories equally, overlooking the thought necessity and observation utility varies across turns. To this end, we first conduct quantitative investigations into how thought and observation affect agent effectiveness and efficiency. Based on our findings, we propose Agent-Omit, a unified training framework that empowers LLM agents to adaptively omit redundant thoughts and observations. Specifically, we first synthesize a small amount of cold-start data, including both single-turn and multi-turn omission scenarios, to fine-tune the agent for omission behaviors. Furthermore, we introduce an omit-aware agentic reinforcement learning approach, incorporating a dual sampling mechanism and a tailored omission reward to incentivize the agent’s adaptive omission capability. Theoretically, we prove that the deviation of our omission policy is upper-bounded by KL-divergence. Experimental results on five agent benchmarks show that our constructed Agent-Omit-8B could obtain performance comparable to seven frontier LLM agent, and achieve the best effectiveness-efficiency trade-off than seven efficient LLM agents methods. Our code and data are available at https://github.com/usail-hkust/Agent-Omit.

💡 Research Summary

Agent‑Omit addresses the growing need for more efficient large‑language‑model (LLM) agents that interact with external tools over multiple turns. The authors first demonstrate that “thought” (chain‑of‑thought reasoning) and “observation” (tool responses) dominate the token budget—accounting for roughly 45 % and 52 % of tokens respectively—while actions themselves are negligible. Through Monte‑Carlo rollouts on the WebShop benchmark, they show that early turns (high‑level planning) are crucial for accuracy, but later turns often add tokens without improving performance. Similarly, early observations are useful, but many later observations become redundant noise for the final answer.

Motivated by these findings, Agent‑Omit proposes a two‑stage training pipeline. In the first stage, a small “cold‑start” dataset is synthesized. The authors automatically identify omittable turns by testing whether removing a thought or observation reduces token count without hurting accuracy. They then create single‑turn omission examples (teaching the model how to emit an empty thought token <think></think> or an omission command <omit tool response N …></omit>) and multi‑turn trajectories where several thoughts/observations are replaced by these omission tokens. Full‑parameter supervised fine‑tuning on this data teaches the model the syntax and semantics of omission.

The second stage introduces an “omit‑aware” reinforcement learning (RL) algorithm. Standard agentic RL samples only a complete trajectory, which makes it impossible for the policy to see the pre‑omission context. To solve this, Agent‑Omit uses a dual‑sampling strategy: (1) a full trajectory where omission actions are executed, used to evaluate overall success and token savings; (2) for each omission point, a partial trajectory that captures the context just before omission and the agent’s immediate thought/action. This enables the computation of an omission‑specific reward that (a) rewards token reduction, (b) adds a bonus when final task accuracy is unchanged, and (c) penalizes harmful omissions. The overall objective jointly optimizes effectiveness and efficiency, and the authors prove that the KL‑divergence between the new policy and the pre‑trained policy upper‑bounds the deviation, providing theoretical stability.

Experiments on five diverse benchmarks—DeepSearch, WebShop, TextCraft, BabyAI, and SciWorld—show that Agent‑Omit‑8B matches or exceeds the accuracy of seven frontier LLM agents (e.g., DeepSeek‑R1‑0528, o3) while cutting token consumption by roughly 30 % on average. When applied to Qwen‑3‑8B, Agent‑Omit consistently outperforms seven existing efficient‑agent construction methods, achieving the best trade‑off between effectiveness and efficiency. Detailed analysis reveals that the trained agents tend to omit thoughts and observations primarily in intermediate turns (2‑4), confirming the initial hypothesis that omission opportunities are turn‑dependent.

In summary, Agent‑Omit provides a unified framework that leverages quantitative analysis, synthetic omission data, and a novel dual‑sampling RL algorithm to endow LLM agents with adaptive context‑management capabilities. By learning when and how to omit redundant thoughts and observations, it substantially reduces inference cost without sacrificing task performance, opening a practical path toward scalable, cost‑effective autonomous agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment