LILaC: Late Interacting in Layered Component Graph for Open-domain Multimodal Multihop Retrieval

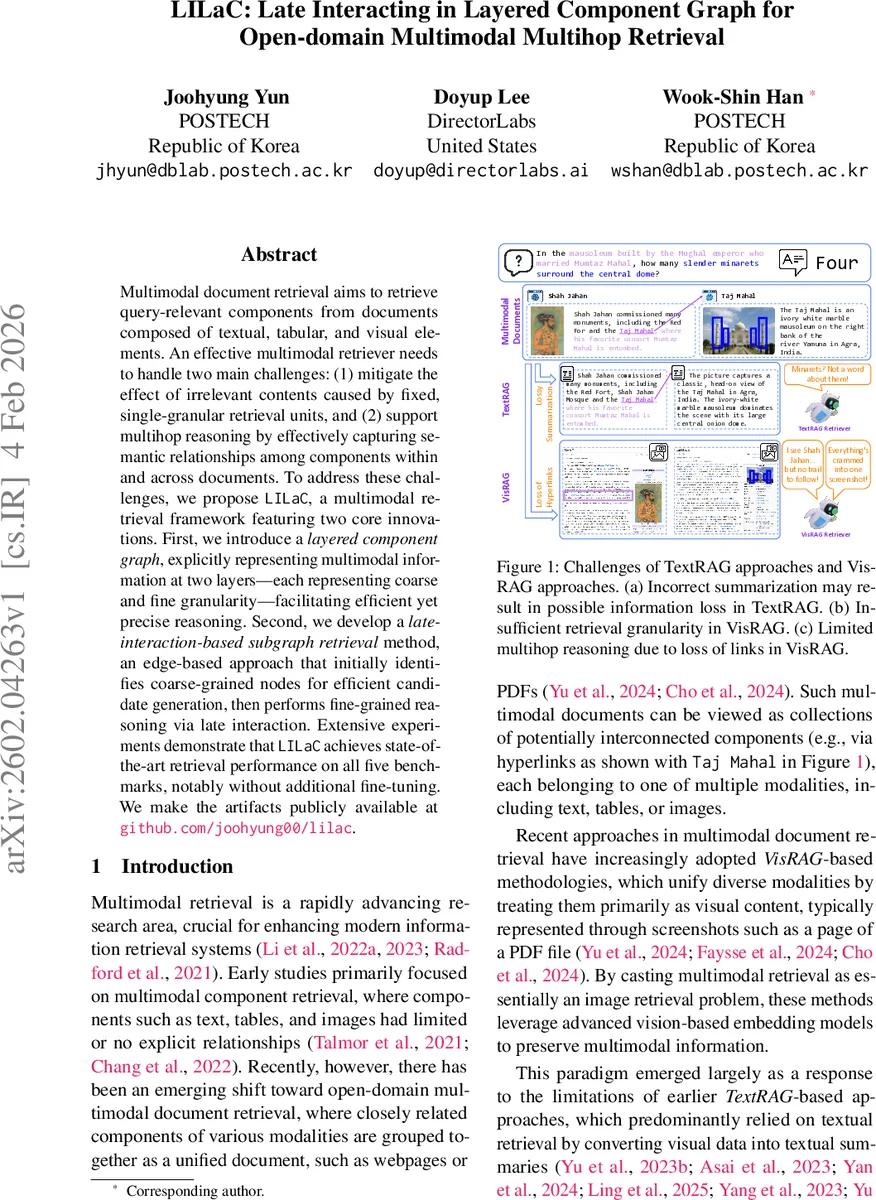

Multimodal document retrieval aims to retrieve query-relevant components from documents composed of textual, tabular, and visual elements. An effective multimodal retriever needs to handle two main challenges: (1) mitigate the effect of irrelevant contents caused by fixed, single-granular retrieval units, and (2) support multihop reasoning by effectively capturing semantic relationships among components within and across documents. To address these challenges, we propose LILaC, a multimodal retrieval framework featuring two core innovations. First, we introduce a layered component graph, explicitly representing multimodal information at two layers - each representing coarse and fine granularity - facilitating efficient yet precise reasoning. Second, we develop a late-interaction-based subgraph retrieval method, an edge-based approach that initially identifies coarse-grained nodes for efficient candidate generation, then performs fine-grained reasoning via late interaction. Extensive experiments demonstrate that LILaC achieves state-of-the-art retrieval performance on all five benchmarks, notably without additional fine-tuning. We make the artifacts publicly available at github.com/joohyung00/lilac.

💡 Research Summary

LILaC (Late Interacting in Layered Component Graph) tackles two fundamental challenges in open‑domain multimodal document retrieval: (1) the presence of irrelevant content when using a fixed, single‑granular retrieval unit, and (2) the lack of multihop reasoning across heterogeneous components. The core idea is to represent the entire corpus as a two‑layer graph. The coarse‑grained layer contains high‑level nodes—paragraphs, whole tables, and full images—providing a compact view for efficient candidate generation. The fine‑grained layer decomposes each coarse node into its constituent parts: sentences, table‑row pairs (header + row), and detected visual objects. Edges between coarse nodes capture semantic or hyperlink relationships, while containment edges link each coarse node to its fine subcomponents, explicitly encoding hierarchy.

At query time, LILaC first decomposes the natural‑language query into several sub‑queries and classifies each sub‑query’s modality (text, table, image). This classification narrows the search space to relevant node types. An initial set of coarse‑grained candidates is retrieved using dense similarity between sub‑queries and coarse node embeddings. From these seeds, a beam‑search traversal expands the subgraph by following edges. Crucially, edge scores are not pre‑computed; instead, they are obtained on‑the‑fly through a late‑interaction mechanism between the sub‑query embedding and the fine‑grained subcomponents attached to the candidate edge. This late interaction (e.g., dot‑product or cross‑attention) allows the model to exploit detailed visual or textual cues without incurring the cost of embedding every possible edge beforehand.

The method thus achieves two complementary benefits. The coarse layer filters out large swaths of irrelevant material, mitigating the “noise” problem inherent in full‑page screenshot approaches (VisRAG). The fine layer, accessed via late interaction, provides precise matching at the sentence, row, or object level, enabling accurate multihop reasoning across intra‑ and inter‑document links. Experiments on five public multimodal retrieval benchmarks (including MMQA, PDF‑Retrieval, and VQA‑Doc) show that LILaC outperforms state‑of‑the‑art baselines such as TextRAG, VisRAG, and ColPali. Notably, it reaches superior performance without any task‑specific fine‑tuning, relying solely on pretrained multimodal language‑vision models (e.g., MMEmbed, UniME, mmE5). Ablation studies confirm that both the layered graph structure and the late‑interaction scoring are essential; removing either component leads to a marked drop in recall and MAP.

While effective, LILaC requires an upfront preprocessing pipeline for text parsing, table extraction, and object detection to build the graph, which adds computational overhead. Moreover, the current design assumes a static document collection; handling dynamic updates or streaming documents would need additional mechanisms for incremental graph maintenance and efficient beam search in a distributed setting.

In summary, LILaC introduces a novel paradigm that jointly leverages hierarchical granularity and explicit structural relationships for multimodal document retrieval. By combining coarse‑to‑fine candidate generation with on‑demand late interaction, it mitigates irrelevant content, supports multihop reasoning, and achieves state‑of‑the‑art results with minimal training effort, offering a promising foundation for future open‑domain multimodal search systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment