From Ambiguity to Action: A POMDP Perspective on Partial Multi-Label Ambiguity and Its Horizon-One Resolution

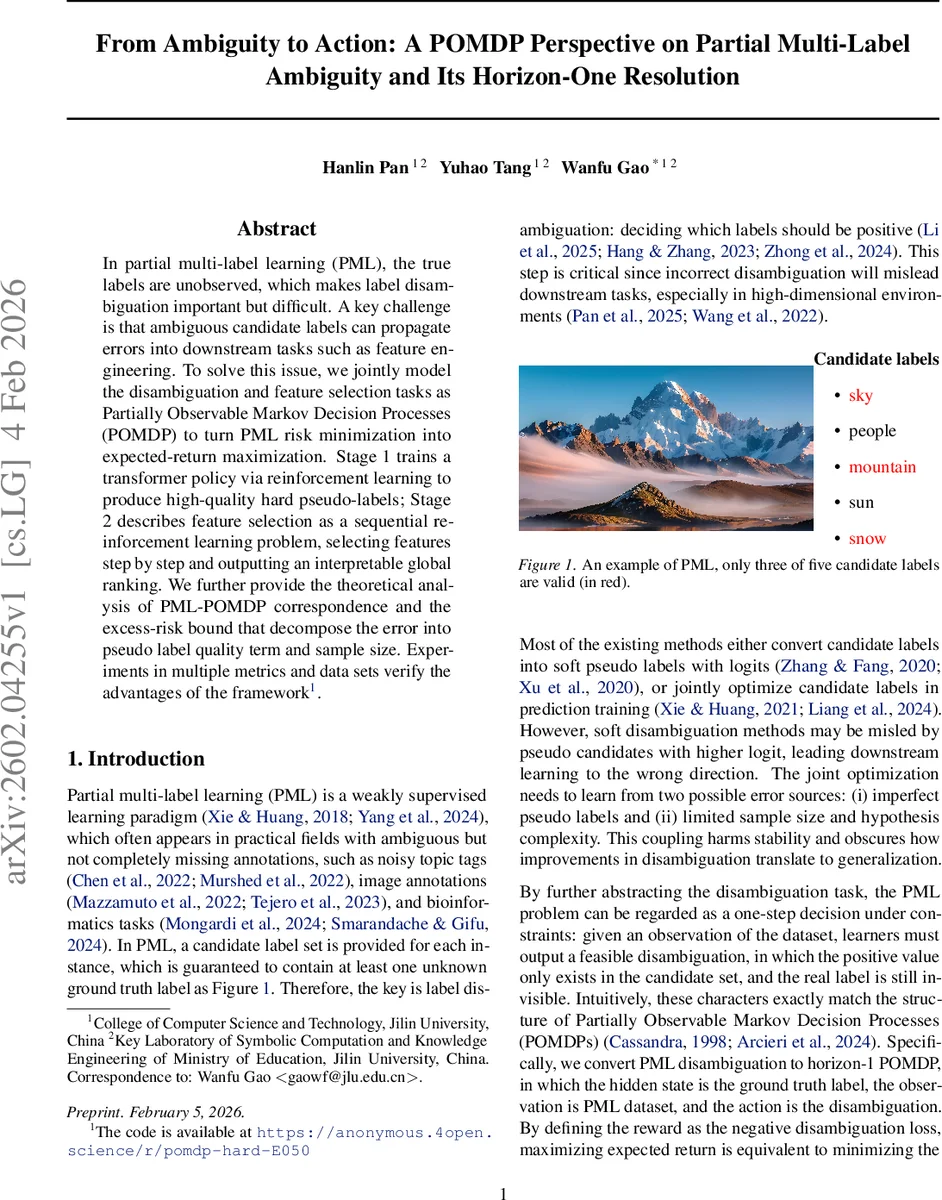

In partial multi-label learning (PML), the true labels are unobserved, which makes label disambiguation important but difficult. A key challenge is that ambiguous candidate labels can propagate errors into downstream tasks such as feature engineering. To solve this issue, we jointly model the disambiguation and feature selection tasks as Partially Observable Markov Decision Processes (POMDP) to turn PML risk minimization into expected-return maximization. Stage 1 trains a transformer policy via reinforcement learning to produce high-quality hard pseudo-labels; Stage 2 describes feature selection as a sequential reinforcement learning problem, selecting features step by step and outputting an interpretable global ranking. We further provide the theoretical analysis of PML-POMDP correspondence and the excess-risk bound that decompose the error into pseudo label quality term and sample size. Experiments in multiple metrics and data sets verify the advantages of the framework.

💡 Research Summary

The paper tackles the challenging problem of partial multi‑label learning (PML), where each training instance is accompanied by a candidate label set that is guaranteed to contain at least one true label, but the exact ground‑truth vector is hidden. Existing approaches either produce soft pseudo‑labels that can be misled by high‑scoring false candidates, or impose structural priors that are fragile to noise and often rely on iterative heuristics. Both families suffer from error propagation to downstream tasks such as feature engineering.

To address these issues, the authors reformulate PML as a horizon‑1 Partially Observable Markov Decision Process (POMDP). In this formulation the hidden state is the true label vector, the observation consists of the input features together with the candidate label set, and the action is a hard pseudo‑label vector. The reward is defined as the negative of a disambiguation surrogate loss ℓ_disc, which combines a multi‑label logistic loss with a graph‑Laplacian regularizer enforcing candidate‑set constraints. Maximizing the expected return of this one‑step POMDP is shown to be mathematically equivalent to minimizing the PML risk (Theorem 5.2).

Stage 1 – Hard Disambiguation.

A transformer encoder processes the observation (X, C) and feeds the resulting representation into two heads: a policy head and a discriminative head. The policy head predicts a Bernoulli probability for each candidate label; sampling these Bernoulli variables yields a hard pseudo‑label Z. The discriminative head outputs logits U that are compared against Z using ℓ_disc. The discriminative head and encoder are trained by directly minimizing ℓ_disc, while the policy head is updated via REINFORCE using the reward r = −ℓ_disc. The two heads are detached during back‑propagation so that each component receives a clean gradient signal. Under standard smoothness assumptions the policy converges to a first‑order stationary point of the surrogate objective (Theorem 5.3), guaranteeing a locally optimal hard labeling strategy.

Stage 2 – RL‑Based Sequential Feature Selection.

The hard pseudo‑labels from Stage 1 become fixed supervision for a second reinforcement‑learning problem. Given a budget k_fs, the agent starts with an empty feature mask and iteratively selects one feature at a time. At step t the state consists of the partially revealed feature vector (masked by m_t) together with the pseudo‑labels; the agent observes an embedding h_t produced by the same transformer encoder used in Stage 1. An action selects a new feature index, which updates the mask. After T = min(k_fs, d) steps the final mask m_T defines a feature subset. The discriminative head evaluates this subset by computing a binary‑cross‑entropy loss against the pseudo‑labels; the negative of this loss is the terminal reward. The policy π_ψ is trained with a standard policy‑gradient estimator (with a scalar baseline to reduce variance). The overall loss for Stage 2 combines the supervised BCE loss and the policy‑gradient loss, weighted by λ_fs. After training, feature importance scores are obtained by averaging the selection probabilities over the training set, yielding a global ranking that can be used to pick the top‑K features for any downstream classifier (e.g., SVM, ML‑kNN).

Theoretical Contributions.

- Equivalence Proof (Theorem 5.2). The authors rigorously prove that the expected return of the horizon‑1 POMDP equals the negative of the PML disambiguation risk, establishing a solid bridge between reinforcement learning and risk minimization.

- Convergence Guarantee (Theorem 5.3). Under mild regularity conditions, the REINFORCE updates for the policy head converge to a stationary point of the surrogate loss, ensuring that Stage 1 yields a stable hard labeling rule.

- Excess‑Risk Decomposition (Theorem 5.5). The final prediction error is decomposed into two additive terms: one controlled solely by the quality of the pseudo‑labels (Stage 1) and another that follows classic statistical learning bounds, depending on sample size, hypothesis complexity, and the sparsity induced by the feature budget. This decomposition clarifies when improvements in disambiguation translate into better downstream generalization.

Empirical Evaluation.

The framework is tested on a diverse collection of multi‑label datasets, including image (e.g., Pascal VOC), text (e.g., Reuters), and bio‑informatics (gene‑expression) benchmarks. Baselines comprise soft‑labeling methods, graph‑regularized approaches, and recent structure‑based PML algorithms. Evaluation metrics cover Hamming loss, ranking loss, macro‑F1, and micro‑F1. Across all datasets, the proposed two‑stage method consistently outperforms baselines, achieving reductions of 3–7 % in loss metrics and gains of 4–9 % in F1 scores. Ablation studies demonstrate the necessity of (i) the policy head for generating high‑quality hard labels, (ii) the graph regularizer for respecting candidate constraints, and (iii) Stage 2 for extracting label‑aware feature subsets.

Conclusion and Outlook.

By casting PML as a horizon‑1 POMDP, the authors provide a unified reinforcement‑learning perspective that simultaneously addresses label ambiguity and feature selection. Theoretical analysis guarantees that maximizing expected return aligns with minimizing PML risk, that the policy converges, and that the overall excess risk cleanly separates label‑quality and sample‑complexity effects. Empirical results validate the practical benefits of hard pseudo‑labels and label‑aware feature rankings. Future directions include extending the horizon to multi‑step decision processes, applying the framework to non‑vector data (e.g., sequences, graphs), and integrating active learning to query the most informative candidate labels.

Comments & Academic Discussion

Loading comments...

Leave a Comment