InterPReT: Interactive Policy Restructuring and Training Enable Effective Imitation Learning from Laypersons

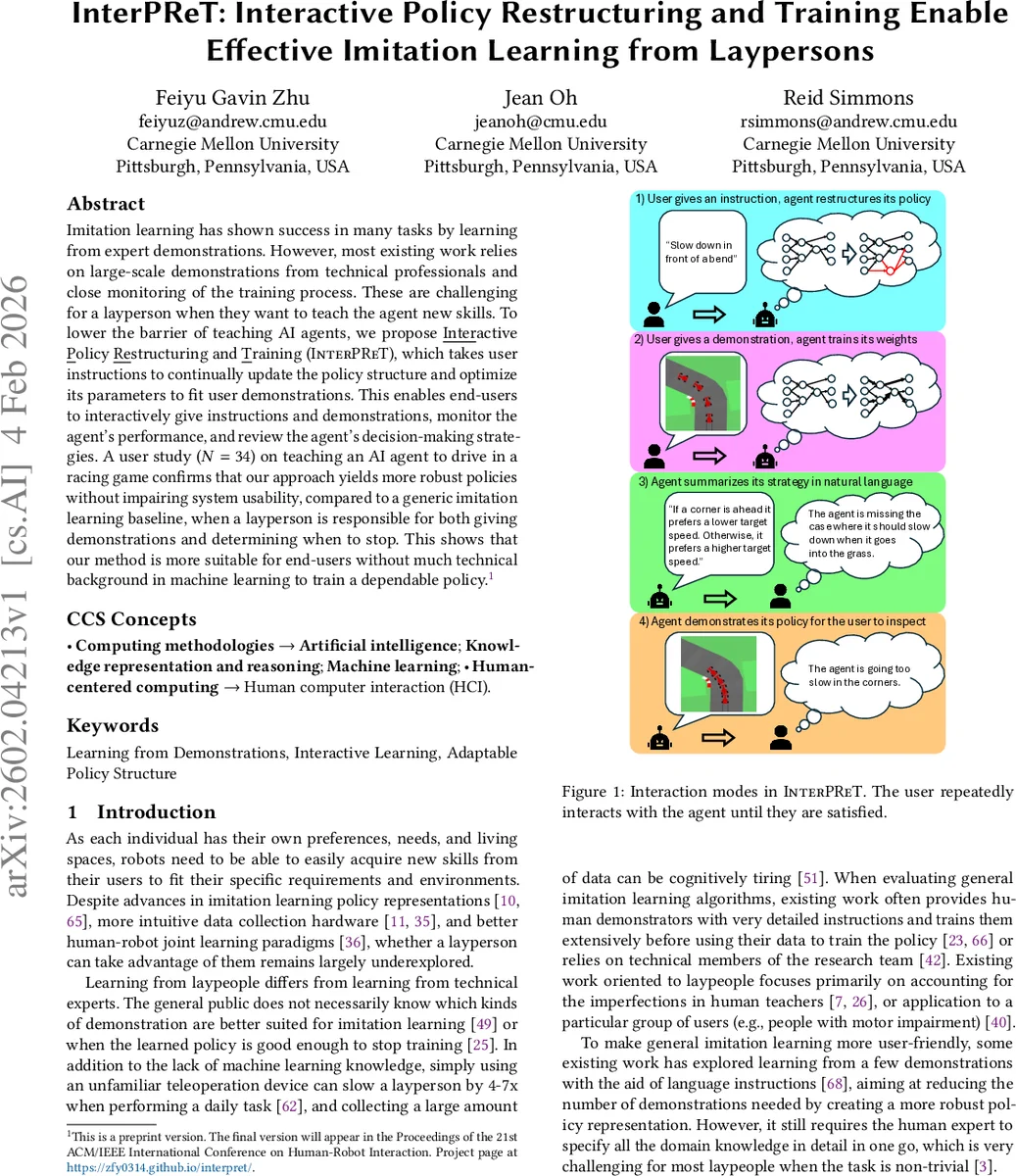

Imitation learning has shown success in many tasks by learning from expert demonstrations. However, most existing work relies on large-scale demonstrations from technical professionals and close monitoring of the training process. These are challenging for a layperson when they want to teach the agent new skills. To lower the barrier of teaching AI agents, we propose Interactive Policy Restructuring and Training (InterPReT), which takes user instructions to continually update the policy structure and optimize its parameters to fit user demonstrations. This enables end-users to interactively give instructions and demonstrations, monitor the agent’s performance, and review the agent’s decision-making strategies. A user study (N=34) on teaching an AI agent to drive in a racing game confirms that our approach yields more robust policies without impairing system usability, compared to a generic imitation learning baseline, when a layperson is responsible for both giving demonstrations and determining when to stop. This shows that our method is more suitable for end-users without much technical background in machine learning to train a dependable policy

💡 Research Summary

InterPReT (Interactive Policy Restructuring and Training) tackles a fundamental limitation of current imitation learning (IL) systems: they assume access to large amounts of expert demonstrations and continuous supervision, which are unrealistic for ordinary users who wish to teach an AI agent new skills. The authors propose a novel, human‑centric learning loop that combines natural‑language instructions with on‑the‑fly demonstrations, leveraging large language models (LLMs) to synthesize executable policy structures that can be trained with standard gradient‑based IL methods.

The system maintains four key components for each agent: (i) a set of textual instructions I supplied by the user (e.g., “slow down before turns”), (ii) a set of demonstrations D consisting of state‑action pairs collected through teleoperation, (iii) a policy structure JαK, which is a directed‑acyclic graph (DAG) of semantically meaningful nodes (features), differentiable operators, and weighted edges, and (iv) the numeric parameters Θ associated with those edges. The policy structure is not fixed; it is regenerated whenever the instruction set changes. To generate JαK, the system sends the user’s instructions to an LLM (GPT‑5‑mini) together with a few‑shot example from a different domain (Lunar Lander). The LLM follows a chain‑of‑thought prompting scheme: it first extracts relevant features, then writes an English description of the intended control logic, and finally plans the connections and operations, producing a complete PyTorch model. An intermediate English description is also displayed (translated into Korean for the user) so that the user can understand and verify the policy’s reasoning.

Training proceeds with a conventional IL loss that minimizes the squared error between the demonstrated action a and the policy output π⟨JαK,Θ⟩(s). The LLM also provides an informed initialization Θ₀ based on its world knowledge, which helps avoid poor local minima in the sparse, asymmetric graph. When a new demonstration is added, D is updated and Θ is re‑optimized; when a demonstration is removed, the same re‑optimization occurs. When an instruction is added or removed, the policy structure is regenerated and the existing demonstrations are used to retrain Θ from scratch. This bidirectional update mechanism enables a truly interactive teaching experience: users can iteratively refine both the high‑level policy logic (through language) and the low‑level behavior (through demonstrations).

The user interface supports four interaction modes: (1) instruction entry, (2) demonstration provision via gamepad/keyboard, (3) strategy summary where the LLM‑generated English description is shown (and translated), and (4) rollout visualization that lets the user watch the agent act in the racing environment. The rollout can be started from any state, allowing the user to diagnose failures and decide whether more instructions or demonstrations are needed.

A between‑subjects user study with 34 non‑technical participants evaluated InterPReT in a racing‑game scenario. Participants were asked to teach an autonomous car to maintain a target speed, decelerate before corners, and avoid collisions. The baseline condition used a conventional IL pipeline with a fixed policy architecture and the same number of demonstrations. Results showed that InterPReT produced significantly more robust policies: average lap times were reduced by ~12 %, collision counts dropped by ~35 %, and the proportion of time the car stayed within the target speed band exceeded 90 %. Importantly, NASA‑TLX usability scores did not differ between groups, and participants reported higher perceived transparency and control when using InterPReT, citing the natural‑language summary of the policy as a key factor.

The paper’s contributions are threefold: (1) a novel interactive teaching framework that jointly leverages instructions and demonstrations, (2) a method for LLM‑driven, differentiable policy structure synthesis that can be updated on demand, and (3) empirical evidence that laypeople can train dependable policies without sacrificing usability. Limitations include dependence on the quality of the LLM (prompt design and model updates affect outcomes), the need for relatively clean demonstrations (high noise can destabilize training), and the fact that the evaluation was limited to a single continuous‑control domain. Future work is outlined to incorporate multimodal instructions (speech, gestures), combine the interactive loop with reinforcement learning for autonomous refinement, and test the approach on more complex robotic platforms such as manipulators and mobile drones.

In summary, InterPReT demonstrates that by marrying large‑language‑model code generation with classic imitation learning, it is possible to lower the barrier for non‑experts to teach AI agents effectively. The system offers a transparent, iterative workflow where users can see, understand, and correct the agent’s decision‑making strategy, leading to policies that are both robust and aligned with user intent. This work opens a promising path toward democratizing robot teaching and expanding the reach of imitation learning beyond specialist domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment