VTok: A Unified Video Tokenizer with Decoupled Spatial-Temporal Latents

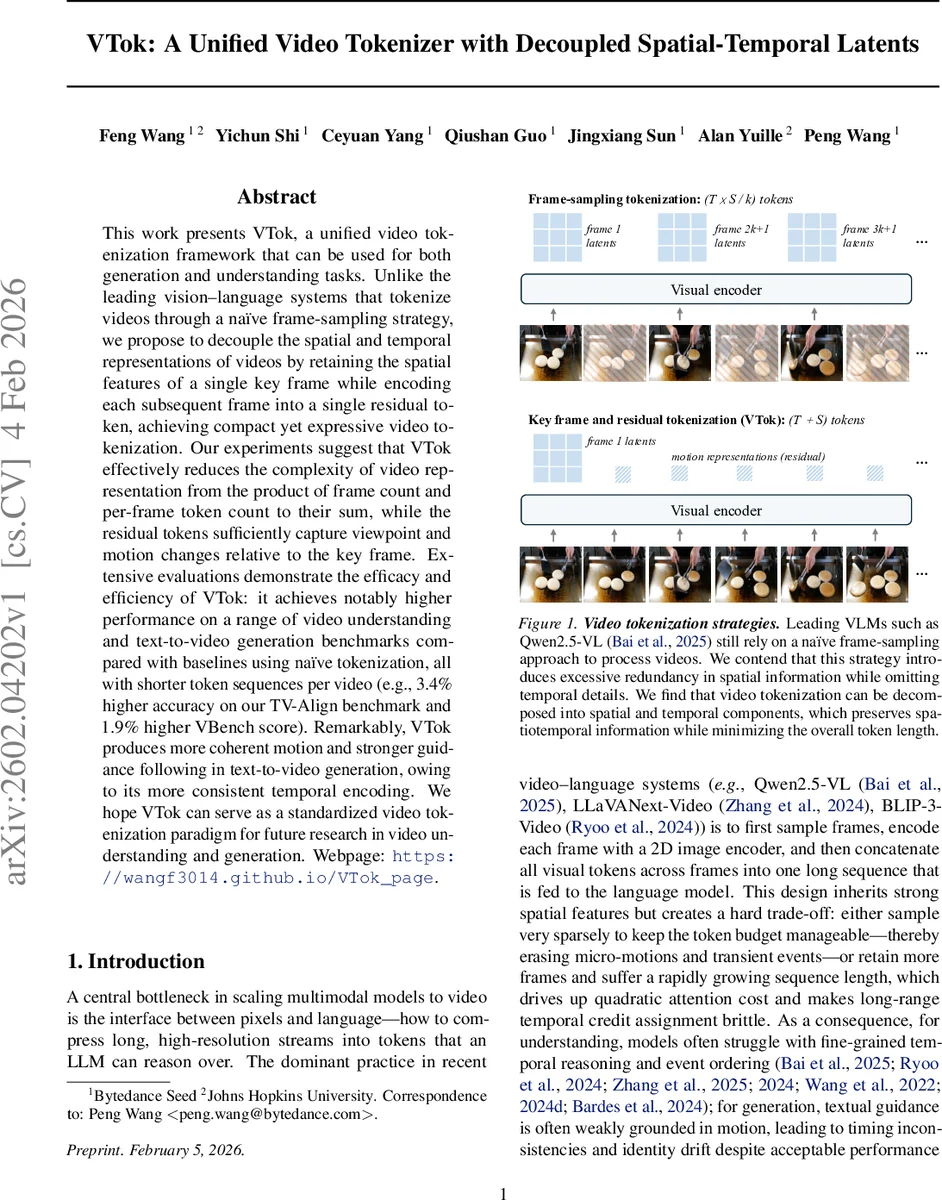

This work presents VTok, a unified video tokenization framework that can be used for both generation and understanding tasks. Unlike the leading vision-language systems that tokenize videos through a naive frame-sampling strategy, we propose to decouple the spatial and temporal representations of videos by retaining the spatial features of a single key frame while encoding each subsequent frame into a single residual token, achieving compact yet expressive video tokenization. Our experiments suggest that VTok effectively reduces the complexity of video representation from the product of frame count and per-frame token count to their sum, while the residual tokens sufficiently capture viewpoint and motion changes relative to the key frame. Extensive evaluations demonstrate the efficacy and efficiency of VTok: it achieves notably higher performance on a range of video understanding and text-to-video generation benchmarks compared with baselines using naive tokenization, all with shorter token sequences per video (e.g., 3.4% higher accuracy on our TV-Align benchmark and 1.9% higher VBench score). Remarkably, VTok produces more coherent motion and stronger guidance following in text-to-video generation, owing to its more consistent temporal encoding. We hope VTok can serve as a standardized video tokenization paradigm for future research in video understanding and generation.

💡 Research Summary

The paper introduces VTok, a unified video tokenization framework designed to serve both video understanding and text‑to‑video generation tasks. Traditional video‑language models rely on a naïve frame‑sampling strategy: they sample a subset of frames, encode each frame with a 2D image encoder, and concatenate all resulting visual tokens into a long sequence. This approach suffers from two major drawbacks. First, spatial information is heavily redundant because consecutive frames often share almost identical backgrounds and objects, leading to an explosion of token count proportional to the product of frame count (T) and per‑frame token count (S). Second, the temporal granularity is limited; fine‑grained motion cues are either lost (when sampling sparsely) or cause quadratic attention costs (when sampling densely).

VTok addresses these issues by explicitly decoupling spatial and temporal information. The first frame of a video clip is treated as a key frame. A conventional image tokenizer (E_key) extracts S spatial tokens V^(s) from this key frame, preserving full spatial detail. For every subsequent frame x_t (t > 1), VTok computes a residual motion token v_t^(m) that captures the difference between the current frame and the key frame. Concretely, a shared feature extractor F produces embeddings for both frames; the difference ΔF = F(x_t) − F(x_1) is projected by a lightweight motion encoder g_ϕ into a single token. Consequently, a video of T frames is represented by S + (T − 1) tokens, reducing the token budget from O(T·S) to O(T + S) while still encoding viewpoint changes, object motion, and subtle temporal dynamics.

The tokenization scheme is integrated into a unified multimodal large language model (MLLM) that shares the same autoregressive backbone for both understanding and generation. In the understanding branch, the visual token sequence (key‑frame tokens + residual tokens) is projected into the same embedding space as language tokens, concatenated with a textual prompt, and fed to the MLLM, which predicts textual outputs (captions, answers, explanations) via standard language‑modeling loss. In the generation branch, the MLLM receives only a textual prompt, autoregressively samples visual tokens in the key‑frame + residual format, and passes them to a pretrained diffusion transformer decoder (D_vid) that reconstructs the video frames. The overall training objective combines (i) the language‑modeling loss for understanding, (ii) a visual‑token language‑modeling loss for generation, and (iii) the diffusion decoder reconstruction loss, weighted by hyper‑parameters λ_visLM and λ_dec.

Extensive experiments evaluate VTok on a suite of benchmarks. On the newly proposed TV‑Align benchmark, which tests alignment between generated videos and high‑level textual instructions (object count, motion direction, relative size), VTok‑augmented models achieve a 3.4 % absolute accuracy gain over strong baselines that use naïve frame‑sampling tokenizers. On VBench, a standard video generation quality metric, VTok improves total and semantic scores by 1.92 and 4.33 points respectively, reflecting more coherent motion and stronger adherence to textual guidance. For video understanding, VTok‑enhanced models fine‑tuned on LLaVA‑Next achieve an average 2.3 % improvement across seven benchmarks, including Video‑MMMU. Ablation studies show that (a) using the first frame as the key frame yields the most stable performance, (b) residual token dimensionality of 512 balances expressiveness and efficiency, and (c) a simple difference‑based motion encoder already captures most temporal information, though more sophisticated motion encoders can provide marginal gains.

The paper highlights three primary advantages of VTok: (1) token efficiency—linear growth in token count dramatically reduces memory and quadratic attention costs; (2) temporal fidelity—residual tokens directly encode frame‑to‑key‑frame changes, preserving fine‑grained motion; (3) architectural simplicity—a single tokenizer serves both understanding and generation, enabling a truly unified multimodal system. Limitations are acknowledged: videos with rapid camera pans, abrupt scene cuts, or highly dynamic backgrounds may not be fully captured by a single residual token per frame, suggesting future work on multi‑key‑frame or hierarchical residual schemes.

In summary, VTok presents a principled redesign of video tokenization that decouples spatial and temporal latents, yielding compact yet expressive representations. Integrated into a unified MLLM, it delivers consistent performance gains across both video understanding and text‑to‑video generation, establishing a promising new paradigm for efficient, semantically aligned video‑language modeling.

Comments & Academic Discussion

Loading comments...

Leave a Comment