Continuous Degradation Modeling via Latent Flow Matching for Real-World Super-Resolution

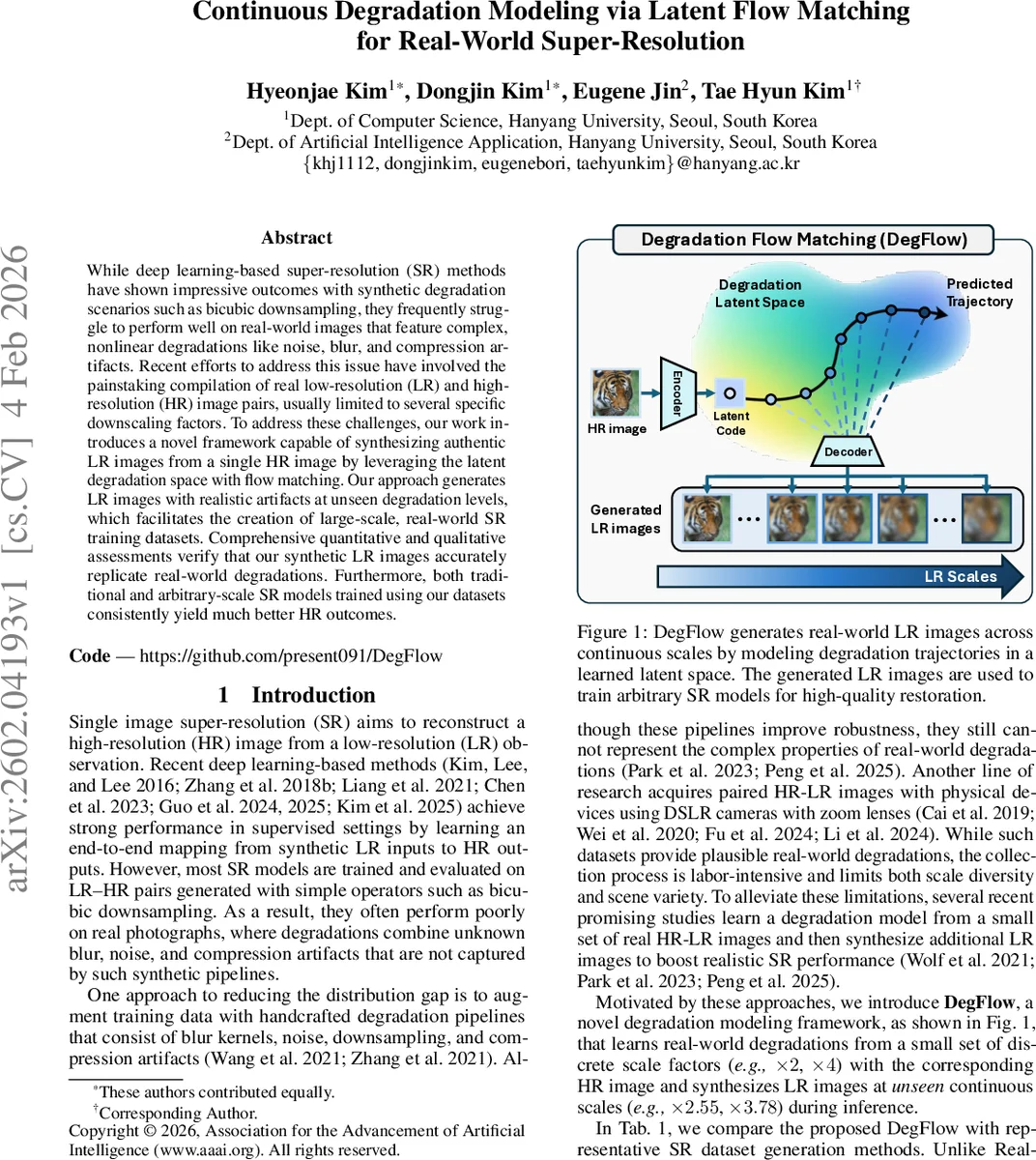

While deep learning-based super-resolution (SR) methods have shown impressive outcomes with synthetic degradation scenarios such as bicubic downsampling, they frequently struggle to perform well on real-world images that feature complex, nonlinear degradations like noise, blur, and compression artifacts. Recent efforts to address this issue have involved the painstaking compilation of real low-resolution (LR) and high-resolution (HR) image pairs, usually limited to several specific downscaling factors. To address these challenges, our work introduces a novel framework capable of synthesizing authentic LR images from a single HR image by leveraging the latent degradation space with flow matching. Our approach generates LR images with realistic artifacts at unseen degradation levels, which facilitates the creation of large-scale, real-world SR training datasets. Comprehensive quantitative and qualitative assessments verify that our synthetic LR images accurately replicate real-world degradations. Furthermore, both traditional and arbitrary-scale SR models trained using our datasets consistently yield much better HR outcomes.

💡 Research Summary

The paper introduces DegFlow, a novel framework for modeling real‑world image degradations in a continuous manner and generating realistic low‑resolution (LR) images from a single high‑resolution (HR) input. Traditional super‑resolution (SR) methods are typically trained on synthetically degraded data (e.g., bicubic downsampling) and therefore perform poorly on real photographs that contain a mixture of blur, noise, and compression artifacts. Collecting paired HR‑LR images with actual cameras alleviates this gap but is labor‑intensive and limited in scale and diversity.

DegFlow tackles these issues with a two‑stage pipeline. Stage 1 is a Residual Autoencoder (RAE) that encodes both HR and LR images into a compact latent space while preserving fine details through multi‑scale skip connections from the HR encoder to the decoder. The RAE is trained with an L2 reconstruction loss on both HR and randomly sampled LR scales, ensuring that the latent representation captures degradation cues across scales.
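The Stage 1 objective described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `autoencoder` callable, its `(image, hr)` signature (the second argument stands in for the HR skip connections), the `lr_by_scale` dictionary, and the equal weighting of the two terms are all assumptions made for the example.

```python
import random


def mse(a, b):
    """Mean squared error between two equally sized flat pixel lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def rae_reconstruction_loss(autoencoder, hr, lr_by_scale, rng=random):
    """L2 reconstruction loss on the HR image plus one randomly sampled LR scale.

    autoencoder: hypothetical callable (image, hr) -> reconstruction.
    lr_by_scale: dict mapping a scale label to the LR image at that scale.
    Returns the summed loss (equal weights assumed) and the sampled scale.
    """
    scale = rng.choice(sorted(lr_by_scale))
    lr = lr_by_scale[scale]
    loss_hr = mse(autoencoder(hr, hr), hr)
    loss_lr = mse(autoencoder(lr, hr), lr)
    return loss_hr + loss_lr, scale
```

Sampling a different LR scale at each step is what exposes the latent space to degradation cues across scales; a real implementation would operate on image tensors rather than flat lists.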

Stage 2 is Latent Flow Matching (LFM), which learns a continuous degradation trajectory in the latent space using conditional flow matching. Instead of simple linear interpolation, DegFlow fits a natural cubic spline through the latent pairs (z_HR, z_LR) and trains a neural velocity field to match the spline’s first derivative. This yields a smooth ODE‑based flow that can be evaluated at any time t∈
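To make the spline-based velocity target concrete, here is a minimal pure-Python sketch under stated assumptions. It fits a natural cubic spline through latent vectors placed at knot times and returns the spline's first derivative, which would serve as the regression target for the velocity network. The knot times, the presence of an intermediate degradation level, and all function names are hypothetical; with only two knots a natural spline reduces to a straight line.

```python
from bisect import bisect_right


def natural_spline_second_derivs(ts, ys):
    """Second derivatives M_i of the natural cubic spline (M_0 = M_n = 0)."""
    n = len(ts) - 1
    h = [ts[i + 1] - ts[i] for i in range(n)]
    M = [0.0] * (n + 1)
    if n < 2:
        return M  # two knots: the spline is a straight line
    # Tridiagonal system for the interior knots 1..n-1.
    a = [h[i - 1] for i in range(1, n)]
    b = [2.0 * (h[i - 1] + h[i]) for i in range(1, n)]
    c = [h[i] for i in range(1, n)]
    d = [6.0 * ((ys[i + 1] - ys[i]) / h[i] - (ys[i] - ys[i - 1]) / h[i - 1])
         for i in range(1, n)]
    # Thomas algorithm: forward elimination, then back substitution.
    for k in range(1, n - 1):
        w = a[k] / b[k - 1]
        b[k] -= w * c[k - 1]
        d[k] -= w * d[k - 1]
    x = [0.0] * (n - 1)
    x[-1] = d[-1] / b[-1]
    for k in range(n - 3, -1, -1):
        x[k] = (d[k] - c[k] * x[k + 1]) / b[k]
    M[1:n] = x
    return M


def spline_point_and_velocity(ts, ys, M, t):
    """Evaluate the spline S(t) and its first derivative S'(t)."""
    i = min(max(bisect_right(ts, t) - 1, 0), len(ts) - 2)
    h = ts[i + 1] - ts[i]
    A, B = ts[i + 1] - t, t - ts[i]
    ca = ys[i] / h - M[i] * h / 6.0
    cb = ys[i + 1] / h - M[i + 1] * h / 6.0
    pos = M[i] * A**3 / (6.0 * h) + M[i + 1] * B**3 / (6.0 * h) + ca * A + cb * B
    vel = -M[i] * A**2 / (2.0 * h) + M[i + 1] * B**2 / (2.0 * h) - ca + cb
    return pos, vel


def spline_target(ts, latents, t):
    """Per-dimension spline through latent vectors; returns (z_t, dz/dt)."""
    z, v = [], []
    for j in range(len(latents[0])):
        ys = [lat[j] for lat in latents]
        M = natural_spline_second_derivs(ts, ys)
        p, u = spline_point_and_velocity(ts, ys, M, t)
        z.append(p)
        v.append(u)
    return z, v


def flow_matching_loss(pred_velocity, target_velocity):
    """Mean squared error between predicted and spline velocities."""
    return sum((p - q) ** 2 for p, q in zip(pred_velocity, target_velocity)) / len(target_velocity)
```

In training, one would sample `t`, call `spline_target` to get the point on the trajectory and its velocity, feed the point and `t` to the velocity network, and minimize `flow_matching_loss` against the returned velocity. Integrating the learned field with an ODE solver then moves a latent along the degradation trajectory.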
