Conditional Flow Matching for Visually-Guided Acoustic Highlighting

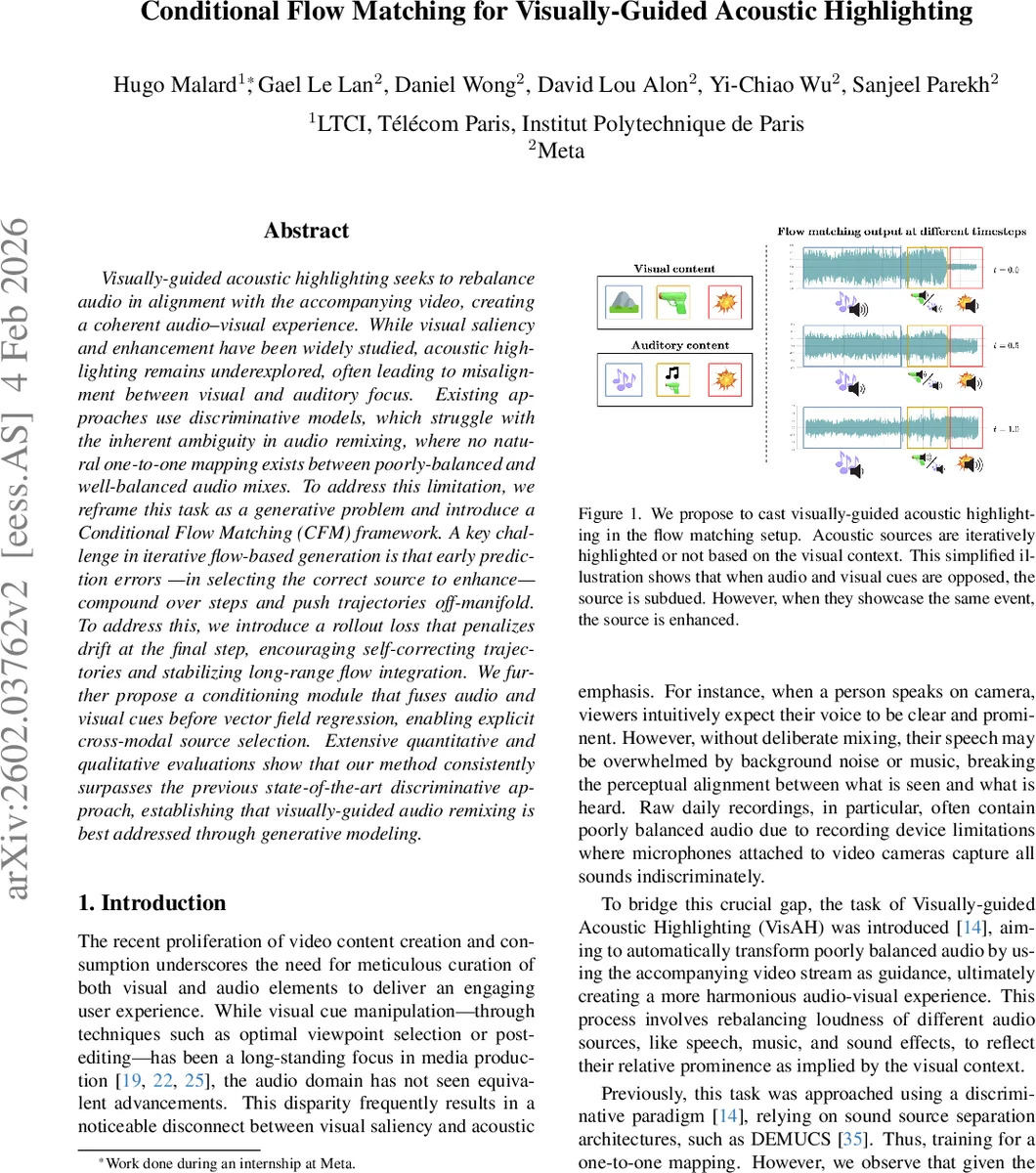

Visually-guided acoustic highlighting seeks to rebalance audio in alignment with the accompanying video, creating a coherent audio-visual experience. While visual saliency and enhancement have been widely studied, acoustic highlighting remains underexplored, often leading to misalignment between visual and auditory focus. Existing approaches use discriminative models, which struggle with the inherent ambiguity in audio remixing, where no natural one-to-one mapping exists between poorly-balanced and well-balanced audio mixes. To address this limitation, we reframe this task as a generative problem and introduce a Conditional Flow Matching (CFM) framework. A key challenge in iterative flow-based generation is that early prediction errors – in selecting the correct source to enhance – compound over steps and push trajectories off-manifold. To address this, we introduce a rollout loss that penalizes drift at the final step, encouraging self-correcting trajectories and stabilizing long-range flow integration. We further propose a conditioning module that fuses audio and visual cues before vector field regression, enabling explicit cross-modal source selection. Extensive quantitative and qualitative evaluations show that our method consistently surpasses the previous state-of-the-art discriminative approach, establishing that visually-guided audio remixing is best addressed through generative modeling.

💡 Research Summary

The paper tackles Visually‑Guided Acoustic Highlighting (VisAH), the task of rebalancing audio tracks so that the loudness of speech, music, and sound‑effects aligns with visual saliency in a video. Prior work approached VisAH with discriminative models (e.g., DEMUCS‑based source‑separation networks) that learn a deterministic one‑to‑one mapping from a poorly‑mixed audio input to a well‑balanced target. This formulation ignores the inherent many‑to‑many nature of the problem: a single video can correspond to many plausible balanced mixes, and a single balanced mix can be generated from many degraded versions. Consequently, discriminative models struggle to capture the underlying distributional shift and often mis‑identify which audio source should be emphasized or suppressed, especially when visual and auditory cues conflict.

To overcome these limitations, the authors recast VisAH as a generative problem using Conditional Flow Matching (CFM). In CFM, a neural network vθ(x_t, t, c) learns a time‑dependent velocity field that continuously transports samples from a base distribution (standard Gaussian) to the target distribution (well‑balanced audio), conditioned on visual context c. The network approximates the right‑hand side of the ODE ẋ_t = (x₁ – x_t)/(1 – t), and training minimizes the squared error between this target velocity and the network output, averaged over random timesteps t ∈
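As a rough illustration of the objective described above, the following NumPy sketch estimates the CFM regression loss for one batch and integrates a velocity field with fixed-step Euler. It assumes the linear path x_t = (1 − t)·x₀ + t·x₁, for which (x₁ − x_t)/(1 − t) equals x₁ − x₀, and it assumes timesteps drawn uniformly from [0, 1). The names `cfm_loss`, `euler_sample`, and the callable `v_theta` are hypothetical and not taken from the paper; the paper's architecture, conditioning module, and rollout loss are not reproduced here.

```python
import numpy as np


def cfm_loss(v_theta, x1, c, rng):
    """Monte Carlo estimate of the CFM regression loss for one batch.

    v_theta: callable (x_t, t, c) -> predicted velocity, same shape as x_t.
    x1:      target samples, shape (batch, dim).
    c:       conditioning array passed through to v_theta unchanged.
    """
    # Base sample from a standard Gaussian, as in the summary.
    x0 = rng.standard_normal(x1.shape)
    # ASSUMPTION: one timestep per example, drawn uniformly from [0, 1).
    t = rng.uniform(0.0, 1.0, size=(x1.shape[0], 1))
    # ASSUMPTION: straight-line path between base and target samples.
    x_t = (1.0 - t) * x0 + t * x1
    # On this path, (x1 - x_t) / (1 - t) simplifies to x1 - x0.
    target = x1 - x0
    pred = v_theta(x_t, t, c)
    # Squared error summed over features, averaged over the batch.
    return float(np.mean(np.sum((pred - target) ** 2, axis=1)))


def euler_sample(v_theta, x0, c, n_steps=10):
    """Integrate dx/dt = v_theta(x, t, c) from t = 0 to t = 1 with Euler steps."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        t = np.full((x.shape[0], 1), k * dt)
        x = x + dt * v_theta(x, t, c)
    return x
```

With an oracle field such as `lambda x_t, t, c: (c - x_t) / (1.0 - t)` (a toy case where `c` is the target itself), the loss is numerically zero and Euler integration lands on the target, which is a convenient sanity check before plugging in a learned network.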
