A Unified Candidate Set with Scene-Adaptive Refinement via Diffusion for End-to-End Autonomous Driving

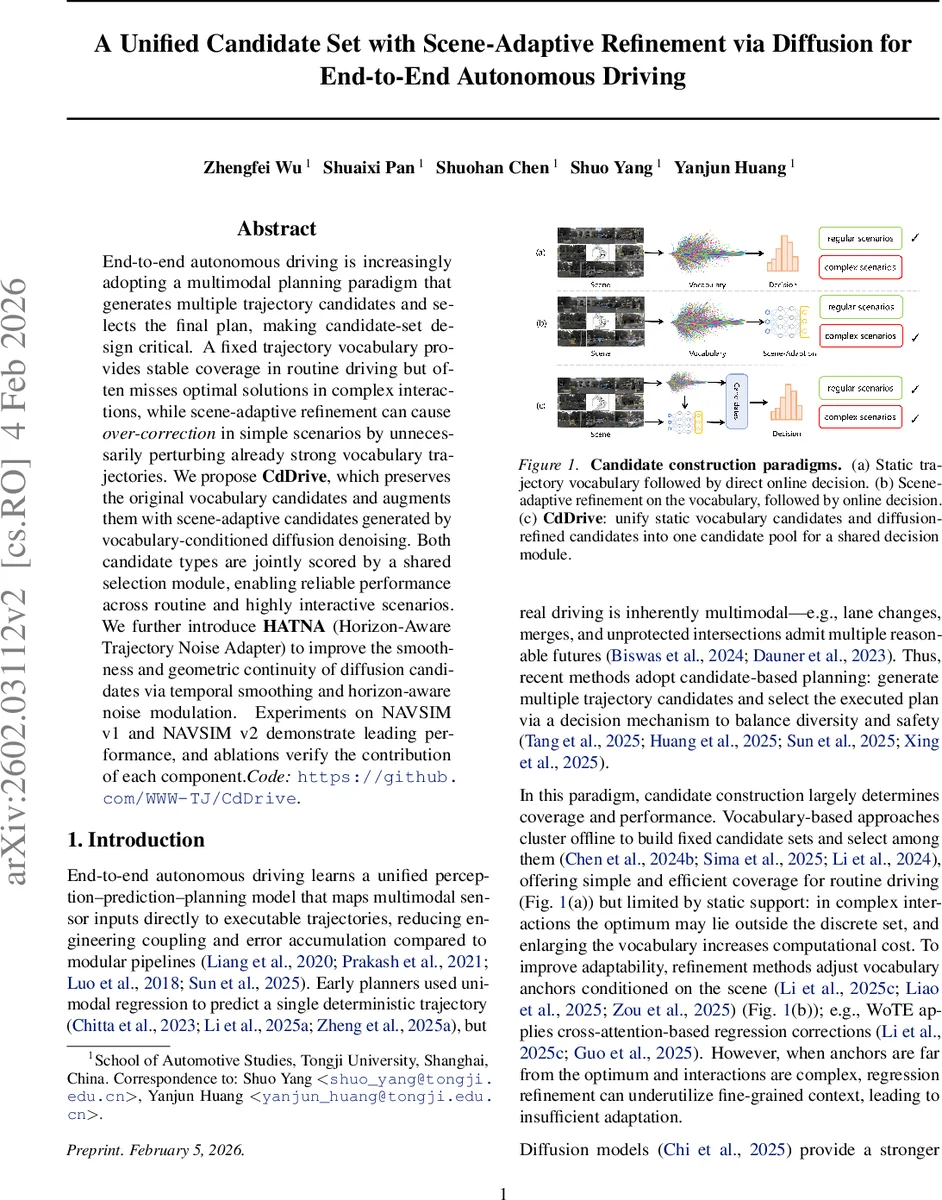

End-to-end autonomous driving is increasingly adopting a multimodal planning paradigm that generates multiple trajectory candidates and selects the final plan, making candidate-set design critical. A fixed trajectory vocabulary provides stable coverage in routine driving but often misses optimal solutions in complex interactions, while scene-adaptive refinement can cause over-correction in simple scenarios by unnecessarily perturbing already strong vocabulary trajectories. We propose CdDrive, which preserves the original vocabulary candidates and augments them with scene-adaptive candidates generated by vocabulary-conditioned diffusion denoising. Both candidate types are jointly scored by a shared selection module, enabling reliable performance across routine and highly interactive scenarios. We further introduce HATNA (Horizon-Aware Trajectory Noise Adapter) to improve the smoothness and geometric continuity of diffusion candidates via temporal smoothing and horizon-aware noise modulation. Experiments on NAVSIM v1 and NAVSIM v2 demonstrate leading performance, and ablations verify the contribution of each component. Code: https://github.com/WWW-TJ/CdDrive.

💡 Research Summary

The paper addresses a central challenge in end‑to‑end autonomous driving: how to construct a set of trajectory candidates that is both reliable for routine driving and flexible enough to handle complex interactions. Existing approaches fall into two camps. Fixed‑vocabulary methods (e.g., VADv2, Hydra‑MDP) pre‑compute a discrete set of trajectories by clustering expert demonstrations. This provides stable coverage and low computational cost, but the optimal trajectory may lie outside the vocabulary in highly interactive scenarios, and enlarging the vocabulary quickly becomes computationally prohibitive. Scene‑adaptive refinement methods (e.g., WOTE, iPad, DiffusionDrive) take the vocabulary anchors as a starting point and apply regression‑based or diffusion‑based adjustments conditioned on the current scene. While these methods improve performance in challenging scenes, they often over‑correct in simple situations, unnecessarily perturbing already good candidates and degrading overall quality.

CdDrive proposes to unify the strengths of both paradigms. It keeps the original vocabulary candidates untouched, ensuring robust coverage for everyday driving, and augments them with additional candidates generated by a conditional diffusion process that refines each anchor according to the current BEV perception. The diffusion model follows a truncated denoising schedule: instead of starting from pure Gaussian noise, it injects noise at an intermediate timestep t_tr, which reduces the number of denoising steps and computational load while still allowing substantial exploration. During reverse diffusion, the network predicts an anchor‑refinement Δp_θ for each noisy anchor, and the refined trajectory estimate is obtained via a DDIM‑style update.
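The truncated schedule described above can be illustrated with a small, standard-library-only sketch. It is not the authors' implementation: the cosine schedule, the value `t_tr=50`, the two-step sampler, the rule "clean estimate = noisy trajectory + predicted offset", and the `toy_delta` stand-in for the denoising network are all assumptions made for illustration.

```python
import math
import random

NUM_STEPS = 1000  # assumed length of the full diffusion schedule


def alpha_bar(t):
    """Cumulative signal level at step t (assumed cosine schedule)."""
    return math.cos(0.5 * math.pi * t / NUM_STEPS) ** 2


def noise_anchor(anchor, t_tr, rng):
    """Forward-diffuse a vocabulary anchor to the truncated step t_tr."""
    a = alpha_bar(t_tr)
    keep, spread = math.sqrt(a), math.sqrt(1.0 - a)
    return [(keep * x + spread * rng.gauss(0.0, 1.0),
             keep * y + spread * rng.gauss(0.0, 1.0)) for x, y in anchor]


def ddim_step(x_t, x0_hat, t, t_prev):
    """Deterministic DDIM update (eta = 0) from step t > 0 to t_prev < t."""
    a_t, a_prev = alpha_bar(t), alpha_bar(t_prev)
    out = []
    for p_t, p_0 in zip(x_t, x0_hat):
        point = []
        for c_t, c_0 in zip(p_t, p_0):
            # Noise implied by the current sample and the clean estimate.
            eps = (c_t - math.sqrt(a_t) * c_0) / math.sqrt(1.0 - a_t)
            point.append(math.sqrt(a_prev) * c_0 + math.sqrt(1.0 - a_prev) * eps)
        out.append(tuple(point))
    return out


def refine(anchor, predict_delta, t_tr=50, steps=2, seed=0):
    """Noise the anchor at t_tr, then denoise it in a few DDIM steps."""
    rng = random.Random(seed)
    x_t = noise_anchor(anchor, t_tr, rng)
    schedule = [round(t_tr * (steps - k) / steps) for k in range(steps + 1)]
    for t, t_prev in zip(schedule[:-1], schedule[1:]):
        delta = predict_delta(x_t, t)  # hypothetical stand-in for the network
        x0_hat = [(x + dx, y + dy) for (x, y), (dx, dy) in zip(x_t, delta)]
        x_t = ddim_step(x_t, x0_hat, t, t_prev)
    return x_t


# Toy usage: a straight anchor and a made-up "scene-adapted" target path.
anchor = [(float(i), 0.0) for i in range(1, 9)]
target = [(x, 0.1 * x) for x, _ in anchor]


def toy_delta(x_t, t):
    """Pretend network: offset that moves each noisy point onto the target."""
    return [(tx - x, ty - y) for (x, y), (tx, ty) in zip(x_t, target)]


refined = refine(anchor, toy_delta)
```

Because sampling starts at `t_tr` rather than at the end of the schedule, only a few reverse steps are needed, and the injected noise stays small enough that the refined candidate remains close to its anchor.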

A key technical contribution is the Horizon‑Aware Trajectory Noise Adapter (HATNA). Standard diffusion adds i.i.d. noise of equal magnitude to every trajectory timestep, which creates “zig‑zag” artifacts because near‑horizon points have a finer spatial scale than far‑horizon points. HATNA modifies the injected noise in two ways: (1) a temporal low‑pass filter smooths high‑frequency components, and (2) a horizon‑aware scaling vector s applies smaller noise amplitudes to early timesteps and larger amplitudes to later ones. This adaptation is applied only to the noise term, leaving the diffusion schedule and denoising network unchanged, yet it improves the smoothness and geometric continuity of diffusion‑generated trajectories, making them comparable to the clean vocabulary candidates.
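The two noise modifications can be sketched as follows. This is only an illustration of the idea: the moving-average filter, the window size, and the linear ramp from `s_min` to `s_max` are assumed choices, and the summary does not specify the filter or the form of the scaling vector s that the paper actually uses.

```python
import random


def smooth_over_time(values, window=3):
    """Moving-average low-pass filter with edge replication (odd window)."""
    half = window // 2
    padded = [values[0]] * half + list(values) + [values[-1]] * half
    return [sum(padded[i:i + window]) / window for i in range(len(values))]


def horizon_scales(num_points, s_min=0.2, s_max=1.0):
    """Assumed linear ramp: small amplitude near the ego vehicle, larger far ahead."""
    if num_points == 1:
        return [s_max]
    step = (s_max - s_min) / (num_points - 1)
    return [s_min + step * i for i in range(num_points)]


def adapted_noise(num_points, rng, window=3):
    """Sample i.i.d. noise per waypoint, smooth it over time, then rescale it."""
    scales = horizon_scales(num_points)
    channels = []
    for _ in range(2):  # x and y are handled independently
        raw = [rng.gauss(0.0, 1.0) for _ in range(num_points)]
        smooth = smooth_over_time(raw, window)
        channels.append([s * v for s, v in zip(scales, smooth)])
    return list(zip(channels[0], channels[1]))


def roughness(values):
    """Sum of squared first differences; lower means smoother."""
    return sum((b - a) ** 2 for a, b in zip(values, values[1:]))


noise = adapted_noise(8, random.Random(0))
```

Only the noise sample changes in this sketch. A sampler would use `adapted_noise` in place of plain Gaussian noise when perturbing an anchor, with the schedule and the denoiser left as they are.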

All candidates—both original vocabulary and diffusion‑refined—are fed into a shared trajectory decision module. This module, built on multi‑head attention, scores each trajectory on safety, efficiency, collision avoidance, time‑to‑collision, comfort, and a composite metric (PDMS). Because the same scorer evaluates both types, the system can automatically select a vocabulary trajectory when it is already optimal, or switch to a diffusion‑refined one when the scene demands more nuanced adaptation.
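A toy version of this joint selection step is sketched below. The learned attention-based scorer is replaced by a hand-written `toy_score` (a hypothetical stand-in that rewards reaching a goal point and penalizes lateral wobble), and the trajectories and goals are made up. The sketch only shows how one scorer applied to the merged set lets the planner fall back on a vocabulary trajectory or pick a refined one.

```python
def build_candidate_set(vocabulary, refined):
    """Keep vocabulary trajectories untouched and append refined ones."""
    return ([{"source": "vocabulary", "traj": t} for t in vocabulary]
            + [{"source": "diffusion", "traj": t} for t in refined])


def toy_score(traj, goal):
    """Hypothetical scorer: closer endpoint and less lateral wobble is better."""
    end_x, end_y = traj[-1]
    goal_error = ((end_x - goal[0]) ** 2 + (end_y - goal[1]) ** 2) ** 0.5
    wobble = sum(abs(c[1] - 2 * b[1] + a[1])
                 for a, b, c in zip(traj, traj[1:], traj[2:]))
    return -goal_error - 0.5 * wobble


def select_plan(candidates, goal):
    """Score every candidate with the same function and return the best one."""
    return max(candidates, key=lambda c: toy_score(c["traj"], goal))


vocabulary = [
    [(1.0, 0.0), (2.0, 0.0), (3.0, 0.0)],   # go straight
    [(1.0, 0.0), (2.0, 0.5), (3.0, 1.5)],   # coarse left shift
]
refined = [
    [(1.0, 0.05), (2.0, -0.05), (3.0, 0.1)],  # perturbed straight
    [(1.0, 0.1), (2.0, 0.6), (3.0, 1.2)],     # left shift adapted to the scene
]
candidates = build_candidate_set(vocabulary, refined)

routine = select_plan(candidates, goal=(3.0, 0.0))
interactive = select_plan(candidates, goal=(3.0, 1.2))
```

In this toy setup the straight vocabulary trajectory wins when the goal lies straight ahead, so the perturbed copy cannot degrade the plan, while the refined left shift wins when the goal falls between vocabulary entries.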

Experiments on the NAVSIM v1 and NAVSIM v2 benchmarks (using a ResNet‑34 perception backbone) show that CdDrive outperforms a wide range of state‑of‑the‑art planners, including VADv2, UniAD, Hydra‑MDP, DiffusionDrive, WOTE, and others. It achieves the highest scores across all reported metrics (NC, DA, EP, TTC, Comfort, PDMS), with notable gains in complex interaction scenarios. Ablation studies confirm: (i) the combination of vocabulary and diffusion candidates yields the best overall performance; (ii) HATNA significantly reduces far‑horizon kinks and improves selection stability; (iii) the truncated diffusion schedule maintains efficiency while preserving the benefits of diffusion‑based refinement.

In summary, CdDrive introduces a unified candidate set that leverages the stability of a fixed trajectory vocabulary and the adaptability of scene‑conditioned diffusion, while HATNA ensures that diffusion‑generated trajectories remain smooth and comfortable. This design enables an end‑to‑end multimodal planner to deliver consistent, high‑quality decisions across both routine and highly interactive driving situations, offering a practical path toward real‑time deployment in autonomous vehicles.
