AERO: Autonomous Evolutionary Reasoning Optimization via Endogenous Dual-Loop Feedback

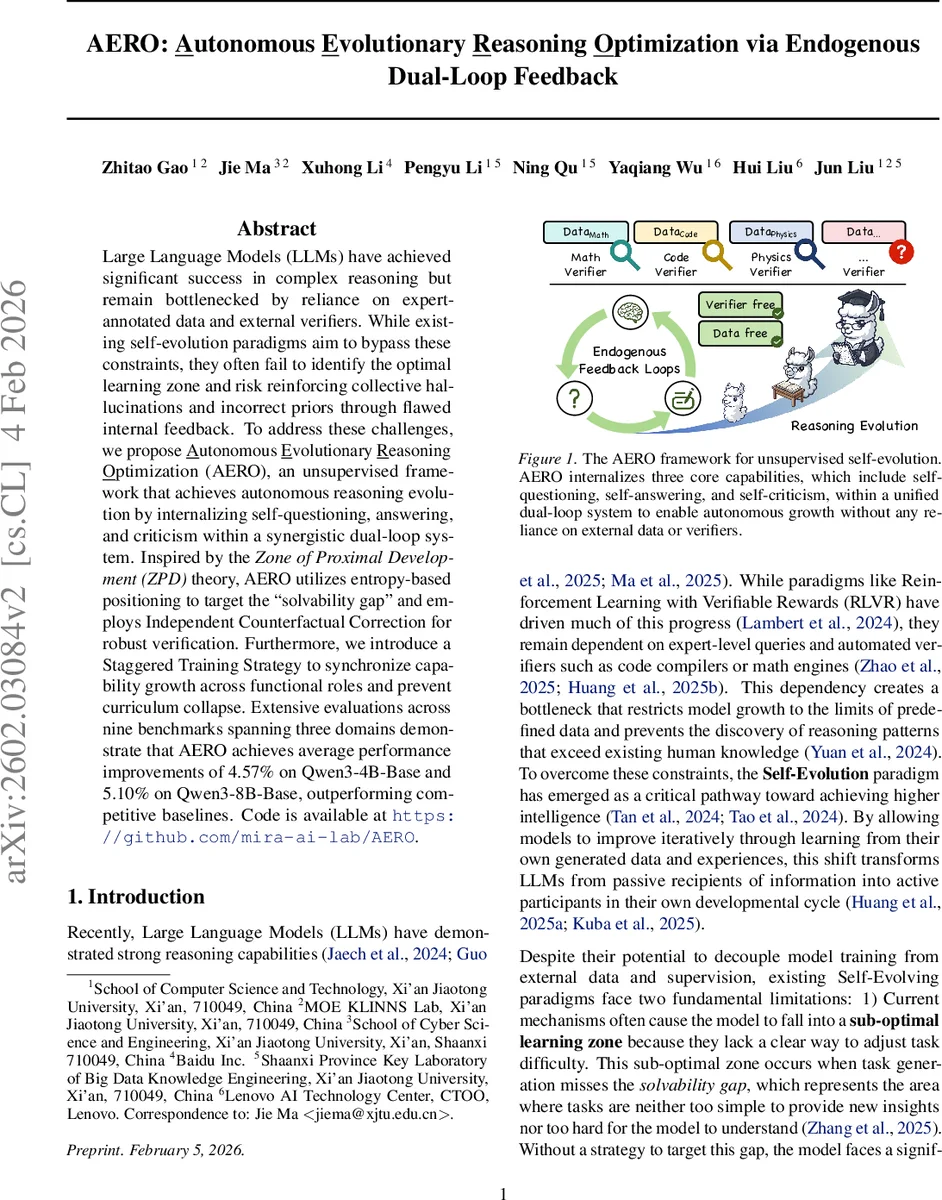

Large Language Models (LLMs) have achieved significant success in complex reasoning but remain bottlenecked by reliance on expert-annotated data and external verifiers. While existing self-evolution paradigms aim to bypass these constraints, they often fail to identify the optimal learning zone and risk reinforcing collective hallucinations and incorrect priors through flawed internal feedback. To address these challenges, we propose \underline{A}utonomous \underline{E}volutionary \underline{R}easoning \underline{O}ptimization (AERO), an unsupervised framework that achieves autonomous reasoning evolution by internalizing self-questioning, answering, and criticism within a synergistic dual-loop system. Inspired by the \textit{Zone of Proximal Development (ZPD)} theory, AERO utilizes entropy-based positioning to target the ``solvability gap’’ and employs Independent Counterfactual Correction for robust verification. Furthermore, we introduce a Staggered Training Strategy to synchronize capability growth across functional roles and prevent curriculum collapse. Extensive evaluations across nine benchmarks spanning three domains demonstrate that AERO achieves average performance improvements of 4.57% on Qwen3-4B-Base and 5.10% on Qwen3-8B-Base, outperforming competitive baselines. Code is available at https://github.com/mira-ai-lab/AERO.

💡 Research Summary

**

The paper introduces AERO (Autonomous Evolutionary Reasoning Optimization), a novel unsupervised framework that enables large language models (LLMs) to improve their reasoning abilities without any external data, labels, or verification tools. Existing self‑evolution approaches suffer from two critical shortcomings: (1) they lack a principled way to keep generated tasks within an optimal difficulty range, causing the model either to stagnate on overly easy problems or to be overwhelmed by tasks that produce noisy feedback; (2) they rely on internal signals such as majority voting or decoding confidence, which can reinforce collective hallucinations when the model holds incorrect beliefs. AERO addresses both issues through a synergistic dual‑loop architecture, an entropy‑based positioning mechanism inspired by Vygotsky’s Zone of Proximal Development (ZPD), an Independent Counterfactual Correction (ICC) verification scheme, and a Staggered Training Strategy that synchronizes the growth of three functional roles.

Dual‑Loop Architecture

A single LLM, denoted πθ, alternates among three roles: Self‑Questioning (Generator), Self‑Answering (Solver), and Self‑Criticism (Refiner). The inner loop acts as a sandbox where the Generator creates a batch of tasks Q_t, the Solver produces n independent reasoning trajectories for each task, and the Refiner evaluates and possibly revises these trajectories. The outer loop aggregates the verified experiences into three role‑specific preference datasets (D^g, D^s, D^r) and updates the model parameters via preference‑based policy optimization. This loop‑within‑loop design allows the model to act as its own data factory and trainer.

Entropy‑Based ZPD Positioning

For each generated task q_i, the Solver’s n answers are clustered, and the normalized Shannon entropy (\bar H(q_i)) of the cluster distribution is computed. (\bar H) ranges from 0 (complete consensus) to 1 (maximum divergence). Two thresholds τ_low and τ_high partition tasks into: (i) Zone of Mastery ( (\bar H < τ_{low}) ) – already mastered, low learning signal; (ii) Zone of Proximal Development ( τ_low ≤ (\bar H ≤ τ_{high}) ) – optimal learning zone where uncertainty is moderate; (iii) Zone of Chaos ( (\bar H > τ_{high}) ) – too difficult, yielding random guesses. AERO filters out tasks outside the ZPD, ensuring that only those providing the strongest learning gradients are used for further training.

Independent Counterfactual Correction (ICC)

To obtain a reliable truth proxy without external labels, ICC selects the two most frequent answer clusters c_{i,1} and c_{i,2}. The Refiner is prompted to re‑solve the task under the counterfactual assumption that the selected answer is wrong. This forces the model to generate an independent reasoning path (\tilde y_{i,j}) for each cluster. If the final answers of the two counterfactual paths converge (i.e., res((\tilde y_{i,1})) = res((\tilde y_{i,2}))), the converged answer is accepted as a verified truth proxy (\tilde y_i). Tasks that fail to achieve convergence are discarded. This mechanism breaks confirmation bias and prevents the model from reinforcing hallucinations through majority‑vote or confidence‑based rewards.

Tri‑Role Preference Synthesis

Verified experiences are transformed into binary preference pairs for each role. For the Generator, all ZPD‑filtered tasks are kept to teach the model where its frontier lies. For the Solver, each trajectory is labeled positive if its final answer matches the ICC truth proxy; otherwise it is negative. For the Refiner, only counterfactual correction paths that successfully overturn an initially wrong answer and reach the truth proxy are kept as positive samples. This separation yields three clean, role‑specific datasets that can be optimized independently.

Staggered Training Strategy

A key obstacle in self‑evolution is curriculum collapse caused by asynchronous learning speeds among roles. If the Generator creates new tasks based on the previous model π_{t‑1}, the updated model π_t may already master those tasks, leading to vanishing gradients. The Staggered Training Strategy introduces a temporal offset: the Generator always uses the current model, while the Solver and Refiner continue to train on data from the previous round. Formally, the total training set at round t is (D^{total}t = { D^g_t, D^s{t‑1}, D^r_{t‑1} }) for t > 1. This offset keeps the curriculum challenging for the newest questioning capability while allowing the solving and refining capabilities to consolidate knowledge, thereby stabilizing the evolutionary dynamics.

Experimental Evaluation

The authors evaluate AERO on nine benchmarks covering general reasoning, mathematical reasoning, and physical reasoning. Two base models, Qwen‑3‑4B‑Base and Qwen‑3‑8B‑Base, serve as backbones. Results show average accuracy improvements of 4.57 % and 5.10 % respectively over strong baselines that include RL with verifiable rewards, self‑play without ZPD filtering, and self‑play with majority‑vote verification. Ablation studies confirm that removing ZPD filtering, ICC, or the staggered schedule each leads to substantial performance degradation, validating the necessity of each component. Moreover, training curves demonstrate that AERO maintains a steady upward trajectory, whereas synchronous self‑play quickly plateaus or declines due to curriculum collapse.

Contributions and Impact

- Introduces the first fully endogenous dual‑loop framework that simultaneously evolves self‑questioning, self‑answering, and self‑criticism within a single LLM, eliminating the need for external supervision.

- Proposes entropy‑driven ZPD positioning to automatically locate the optimal learning zone and Independent Counterfactual Correction to provide a high‑fidelity internal verification signal.

- Develops a simple yet effective Staggered Training Strategy that aligns the growth of multiple capabilities and prevents curriculum collapse.

- Provides extensive empirical evidence across diverse reasoning domains, demonstrating robust, scalable performance gains and evolutionary stability.

- Releases code and data publicly, enabling reproducibility and future extensions to larger models or specialized domains.

In summary, AERO represents a significant step toward truly autonomous LLM reasoning evolution, combining cognitive‑theoretic insights with rigorous algorithmic design to overcome the long‑standing bottlenecks of data dependence and unreliable self‑feedback.

Comments & Academic Discussion

Loading comments...

Leave a Comment