De-Linearizing Agent Traces: Bayesian Inference of Latent Partial Orders for Efficient Execution

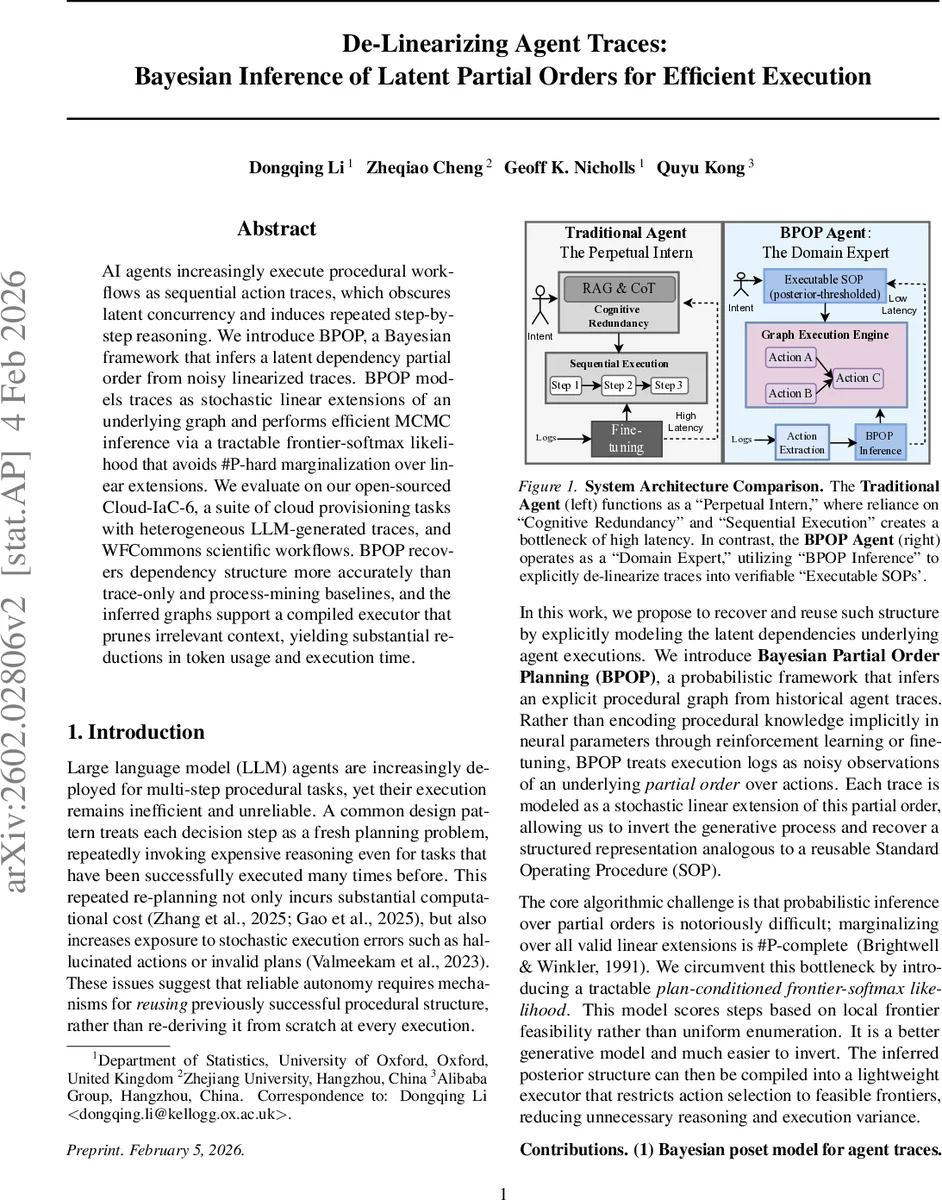

AI agents increasingly execute procedural workflows as sequential action traces, which obscures latent concurrency and induces repeated step-by-step reasoning. We introduce BPOP, a Bayesian framework that infers a latent dependency partial order from noisy linearized traces. BPOP models traces as stochastic linear extensions of an underlying graph and performs efficient MCMC inference via a tractable frontier-softmax likelihood that avoids #P-hard marginalization over linear extensions. We evaluate on our open-sourced Cloud-IaC-6, a suite of cloud provisioning tasks with heterogeneous LLM-generated traces, and WFCommons scientific workflows. BPOP recovers dependency structure more accurately than trace-only and process-mining baselines, and the inferred graphs support a compiled executor that prunes irrelevant context, yielding substantial reductions in token usage and execution time.

💡 Research Summary

The paper introduces BPOP (Bayesian Partial Order Planning), a probabilistic framework that infers a latent dependency partial order (a DAG or SOP) from noisy, sequential execution traces produced by large language model (LLM) agents. Traditional agents treat each step as a fresh planning problem, which leads to repeated costly reasoning and exposure to hallucinations. BPOP instead treats each trace as a stochastic linear extension of an underlying strict partial order, thereby separating true precedence constraints from incidental serialization.

The model represents each atomic action with a K‑dimensional latent vector drawn from a Gaussian prior. A partial order is induced by component‑wise dominance: action i precedes action j if the embedding of i is larger than that of j in every dimension. This construction can represent any finite partial order when K is sufficiently large. Given a latent order h, the likelihood of a trace is defined via a frontier‑softmax (stage‑wise Plackett–Luce) model. At each time step the feasible set (frontier) consists of the not-yet-executed actions whose predecessors have all been executed; the agent selects an action from this set with probability proportional to exp(β Q), where Q is a simple utility based on the number of descendants, β controls rationality, and a small ε adds uniform “trembling‑hand” noise to keep the likelihood strictly positive. Because the likelihood decomposes into per‑step choices, evaluating it takes polynomial time, O(|E|+T·|A|), and avoids the #P‑hard marginalization over linear extensions.
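The two ingredients described here can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the toy embeddings, and the way ε is mixed in (uniform over all not-yet-executed actions) are assumptions, and the paper's exact utility and noise model may differ.

```python
import math
from itertools import product


def dominance_order(Z):
    """Return the set of pairs (i, j) meaning "action i precedes action j".

    Z maps each action to a tuple of K floats. Following the summary,
    i precedes j when i's embedding is strictly larger in every dimension.
    The resulting relation is transitive by construction.
    """
    return {
        (i, j)
        for i, j in product(Z, repeat=2)
        if i != j and all(a > b for a, b in zip(Z[i], Z[j]))
    }


def frontier_softmax_loglik(trace, order, beta=1.0, eps=0.05):
    """Log-likelihood of one trace (each action appearing exactly once).

    Assumption: with probability (1 - eps) the agent picks from the frontier
    with weight exp(beta * Q), where Q is the number of descendants; with
    probability eps it picks uniformly among all remaining actions.
    Use eps > 0 if the trace may violate the order, otherwise log(0) can occur.
    """
    actions = set(trace)
    preds = {a: {i for (i, j) in order if j == a} for a in actions}
    n_desc = {a: sum(1 for (i, _) in order if i == a) for a in actions}

    done, loglik = set(), 0.0
    for step in trace:
        remaining = actions - done
        # Frontier: remaining actions whose predecessors are all executed.
        frontier = {a for a in remaining if preds[a] <= done}
        weights = {a: math.exp(beta * n_desc[a]) for a in frontier}
        p_soft = weights.get(step, 0.0) / sum(weights.values())
        p = (1.0 - eps) * p_soft + eps / len(remaining)
        loglik += math.log(p)
        done.add(step)
    return loglik


# Toy example with K = 2: "a" dominates both "b" and "c",
# while "b" and "c" are incomparable.
Z = {"a": (3.0, 3.0), "b": (2.0, 1.0), "c": (1.0, 2.0)}
order = dominance_order(Z)
consistent = frontier_softmax_loglik(["a", "b", "c"], order)
violating = frontier_softmax_loglik(["b", "a", "c"], order)
```

In this toy setup the trace that respects the order scores higher than the one that runs "b" before "a", and the violating trace still receives a finite log-likelihood because of the ε term.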

Inference proceeds with a Metropolis‑within‑Gibbs sampler that jointly updates the embeddings, the correlation parameter of the Gaussian prior, the temperature β, and optionally the latent dimension K via reversible‑jump moves. From the posterior samples the marginal edge probabilities π̂_ij are computed. Two point‑estimation strategies are explored: (A) a marginal‑threshold estimator that includes an edge if π̂_ij exceeds a user‑specified α, allowing a precision‑recall trade‑off; (B) a marginal‑mode estimator that selects the most probable relation (i ≻ j, j ≻ i, or incomparable) for each pair, which is Bayes‑optimal under 0‑1 loss.
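To make the two point estimators concrete, here is a small Python sketch that computes marginal edge probabilities from a list of sampled orders and then applies each rule. The function names and the toy samples are hypothetical, and each sample is assumed to be a transitively closed set of (i, j) pairs, so (i, j) and (j, i) never appear in the same sample.

```python
from collections import Counter
from itertools import combinations


def edge_marginals(samples, actions):
    """Estimate the marginal probability of each ordered pair from samples."""
    n = len(samples)
    counts = Counter(pair for order in samples for pair in order)
    return {
        (i, j): counts[(i, j)] / n
        for i in actions
        for j in actions
        if i != j
    }


def threshold_estimate(marginals, alpha):
    """Estimator (A): keep every edge whose marginal exceeds alpha."""
    return {pair for pair, p in marginals.items() if p > alpha}


def mode_estimate(marginals, actions):
    """Estimator (B): per pair, pick the most probable of the three relations."""
    chosen = set()
    for i, j in combinations(actions, 2):
        p_ij, p_ji = marginals[(i, j)], marginals[(j, i)]
        options = {(i, j): p_ij, (j, i): p_ji, None: 1.0 - p_ij - p_ji}
        best = max(options, key=options.get)  # None means "incomparable"
        if best is not None:
            chosen.add(best)
    return chosen


# Four hypothetical posterior samples over three actions.
actions = ["a", "b", "c"]
samples = [
    {("a", "b")},
    {("a", "b")},
    {("a", "b"), ("a", "c")},
    set(),
]
marginals = edge_marginals(samples, actions)
strict = threshold_estimate(marginals, alpha=0.5)
loose = threshold_estimate(marginals, alpha=0.2)
mode = mode_estimate(marginals, actions)
```

Lowering α admits more edges (higher recall, lower precision). Neither rule in this sketch guarantees that the selected edges form a transitive relation, so a real pipeline may need an extra closure or consistency step.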

The authors evaluate BPOP on two benchmark suites. Cloud‑IaC‑6 comprises six cloud‑provisioning tasks with heterogeneous traces generated by multiple LLMs (e.g., GPT‑4, Claude‑2). WFCommons contains scientific workflows such as DNA sequencing pipelines. Baselines include classic process‑mining algorithms (Heuristics Miner, Inductive Miner) and simple rank‑aggregation methods. BPOP consistently outperforms baselines in edge‑wise precision, recall, and F1 (often >0.9 versus <0.8 for baselines). The paper also introduces the IP‑Cov metric (fraction of ground‑truth incomparable pairs observed in both directions) to quantify trace diversity; high IP‑Cov correlates with near‑perfect recovery.
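The IP‑Cov definition given in parentheses can be computed directly from a set of traces and a ground-truth order. The Python sketch below follows that one-line definition; the function name, the input layout, and the convention of returning 1.0 when there are no incomparable pairs are assumptions, and the paper's exact formulation may differ.

```python
from itertools import combinations


def ip_cov(traces, true_order, actions):
    """Fraction of ground-truth incomparable pairs seen in both directions.

    true_order is assumed to be a transitively closed set of (i, j) pairs
    meaning "i precedes j"; each trace is a list of actions.
    """
    incomparable = [
        (i, j)
        for i, j in combinations(actions, 2)
        if (i, j) not in true_order and (j, i) not in true_order
    ]
    if not incomparable:
        return 1.0  # assumed convention: nothing to cover

    seen = set()  # ordered pairs (x, y) where x appeared before y in some trace
    for trace in traces:
        pos = {a: t for t, a in enumerate(trace)}
        for i, j in incomparable:
            if i in pos and j in pos:
                seen.add((i, j) if pos[i] < pos[j] else (j, i))

    covered = sum((i, j) in seen and (j, i) in seen for i, j in incomparable)
    return covered / len(incomparable)


# Toy ground truth: "a" precedes everything; b, c, d are mutually incomparable.
actions = ["a", "b", "c", "d"]
true_order = {("a", "b"), ("a", "c"), ("a", "d")}
two_traces = [["a", "b", "c", "d"], ["a", "c", "b", "d"]]
three_traces = two_traces + [["a", "d", "c", "b"]]
low = ip_cov(two_traces, true_order, actions)
high = ip_cov(three_traces, true_order, actions)
```

With the first two traces only the pair (b, c) is observed in both directions, so coverage is one third; adding the third trace covers all three incomparable pairs.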

Beyond structure recovery, the inferred partial order is compiled into a Graph Execution Engine (GEE). The GEE runs a frontier‑based scheduler that executes all currently feasible actions in parallel, eliminating the need for LLM calls at each step. A tri‑modal execution framework is built: (1) Expert mode runs the compiled SOP deterministically; (2) Hybrid mode falls back to an LLM planner if the GEE encounters an unexpected error; (3) Explore mode uses the LLM to generate new traces for data collection. Experiments show that using the compiled SOP reduces token consumption by roughly 45 % and overall execution time by more than 30 % compared with a naïve step‑by‑step LLM planner.
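A frontier-based scheduler of the kind described can be sketched as follows. This is a simplified, wave-by-wave illustration in Python and not the GEE itself: the function name, the thread pool, the barrier between waves, and the cloud-style action names are all assumptions. A production scheduler would more likely release each action as soon as its own predecessors finish.

```python
from concurrent.futures import ThreadPoolExecutor


def run_frontier_schedule(actions, order, run_action, max_workers=4):
    """Execute actions in parallel waves that respect the partial order.

    order is a set of (i, j) pairs meaning "i must finish before j starts".
    run_action is a caller-supplied callable taking one action name.
    Returns the list of waves that were executed, in order.
    """
    actions = set(actions)
    preds = {a: {i for (i, j) in order if j == a} for a in actions}
    done, waves = set(), []

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while len(done) < len(actions):
            # Frontier: unexecuted actions whose predecessors are all done.
            frontier = sorted(
                a for a in actions if a not in done and preds[a] <= done
            )
            if not frontier:
                raise ValueError("no feasible action: cyclic or unmet dependency")
            # Consuming the map blocks until every action in the wave finishes.
            list(pool.map(run_action, frontier))
            done.update(frontier)
            waves.append(frontier)
    return waves


# Hypothetical provisioning steps: the subnet and security group both need
# the VPC, and the VM needs both of them.
order = {("vpc", "subnet"), ("vpc", "sg"), ("subnet", "vm"), ("sg", "vm")}
log = []
waves = run_frontier_schedule(["vpc", "subnet", "sg", "vm"], order, log.append)
```

Here the subnet and security group land in the same wave and can run concurrently, which is the concurrency a purely sequential trace would hide. No LLM call appears anywhere in the loop, matching the Expert mode described above; a Hybrid mode would wrap `run_action` with a fallback on failure.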

The contribution of the work is threefold: (1) a Bayesian latent‑poset model that can recover true procedural dependencies from noisy linear logs; (2) a tractable frontier‑softmax likelihood that sidesteps the #P‑hard counting problem inherent in linear‑extension models; (3) a practical pipeline that compiles the posterior graph into a reusable executor, delivering measurable efficiency gains and robustness. Limitations include the current inability to model state‑conditional dependencies (where precedence may depend on runtime data) and the lack of online updating for streaming traces. Future directions suggested are extending the model to conditional partial orders, variational inference for faster online learning, and scaling to multi‑agent collaborative workflows.
