Why Steering Works: Toward a Unified View of Language Model Parameter Dynamics

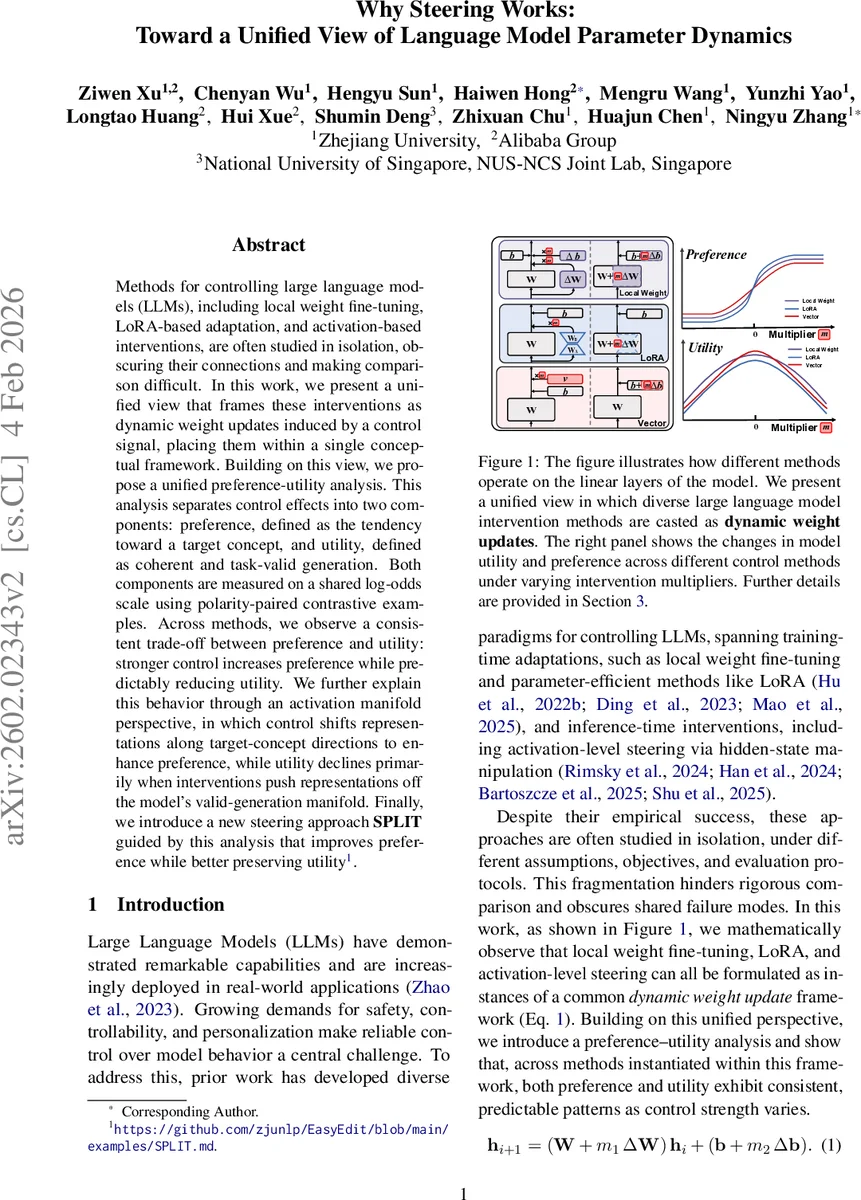

Methods for controlling large language models (LLMs), including local weight fine-tuning, LoRA-based adaptation, and activation-based interventions, are often studied in isolation, obscuring their connections and making comparison difficult. In this work, we present a unified view that frames these interventions as dynamic weight updates induced by a control signal, placing them within a single conceptual framework. Building on this view, we propose a unified preference-utility analysis that separates control effects into preference, defined as the tendency toward a target concept, and utility, defined as coherent and task-valid generation, and measures both on a shared log-odds scale using polarity-paired contrastive examples. Across methods, we observe a consistent trade-off between preference and utility: stronger control increases preference while predictably reducing utility. We further explain this behavior through an activation manifold perspective, in which control shifts representations along target-concept directions to enhance preference, while utility declines primarily when interventions push representations off the model’s valid-generation manifold. Finally, we introduce a new steering approach SPLIT guided by this analysis that improves preference while better preserving utility. Code is available at https://github.com/zjunlp/EasyEdit/blob/main/examples/SPLIT.md.

💡 Research Summary

The paper addresses the fragmented landscape of large language model (LLM) control techniques—local weight fine‑tuning, Low‑Rank Adaptation (LoRA), and activation‑level steering—by unifying them under a single mathematical framework and introducing a common evaluation metric. The authors first show that all three methods can be expressed as a dynamic weight update applied during inference:

h_{i+1} = (W + m₁ΔW) h_i + (b + m₂Δb)

where ΔW and Δb represent the method‑specific changes (full‑matrix update for local fine‑tuning, low‑rank BA for LoRA, bias shift for steering) and m₁, m₂ are scalar control strengths. This formulation makes it possible to continuously vary the intervention magnitude and to compare disparate methods on equal footing.

To assess the effect of interventions, the authors define two complementary quantities on a log‑odds scale: preference (the model’s internal inclination toward a target concept) and utility (task‑valid, coherent generation). For each query q they construct a polarity pair of completions A⁺ (concept‑positive) and A⁻ (concept‑negative). Using the cross‑entropy losses L⁺ = −log P(A⁺|q) and L⁻ = −log P(A⁻|q), they derive

PrefOdds(q) = L⁻ − L⁺

which isolates the concept bias, and

UtilOdds(q) = log (e^{−L⁺}+e^{−L⁻}) − log (1 − e^{−L⁺} − e^{−L⁻})

which captures the total probability mass assigned to the pair and therefore reflects task competence. Because both are additive log‑odds, they can be plotted against the control factor m to reveal systematic dynamics.

Experiments are conducted on two open‑source models (Gemma‑2‑9B‑IT and Qwen‑2.5‑7B‑Instruct) at intermediate layers, using three intervention types (local weight, LoRA, vector steering) and two training objectives (standard supervised fine‑tuning and the newly introduced RePS objective). Evaluation tasks include a psychopathy personality classification and open‑ended generation on PowerSeeking and the top‑10 concept subsets of AxBench.

Across all settings, the authors observe a remarkably consistent pattern: Preference log‑odds follow a three‑stage curve as |m| increases—initial linear growth, a transitional region, and eventual saturation—while Utility log‑odds peak near m ≈ 0 and then gradually decline, eventually stabilizing at a lower plateau. This indicates a universal trade‑off: stronger control amplifies the desired concept but simultaneously erodes the model’s ability to produce coherent, on‑topic text.

To explain this phenomenon, the paper proposes an activation‑manifold hypothesis. It assumes that intermediate activations lie on a high‑density low‑dimensional manifold learned during pre‑training. Small steering vectors translate representations along a fixed “preference direction” while staying on the manifold, thereby increasing preference without harming validity. Larger translations push the state off the manifold, causing a decay in “validity” that primarily drives utility loss. The authors formalize this as a combination of projection gain (increase in the preference component) and validity decay (exponential drop in utility), fit the resulting equations to the empirical PrefOdds‑m and UtilOdds‑m curves, and report R² > 0.95, confirming the model’s explanatory power.

Guided by this analysis, the authors introduce SPLIT (Steering with Preference‑Utility Trade‑off). SPLIT learns a steering vector that maximizes PrefOdds while adding a regularization term penalizing the predicted utility decay. In practice, SPLIT achieves comparable or higher preference levels than LoRA or naive vector steering but with 15‑20 % less utility degradation, especially on complex, ethically sensitive concepts.

The contributions are threefold: (1) a unified dynamic‑weight view that mathematically subsumes major LLM control methods; (2) a preference‑utility log‑odds framework that enables quantitative, method‑agnostic comparison; (3) a mechanistic activation‑manifold explanation of the observed trade‑off and a new steering algorithm (SPLIT) that leverages this insight to improve control quality. The work opens avenues for systematic analysis of future control techniques, deeper modeling of the activation manifold, and broader application to multilingual or multimodal models.

Comments & Academic Discussion

Loading comments...

Leave a Comment