Concise Geometric Description as a Bridge: Unleashing the Potential of LLM for Plane Geometry Problem Solving

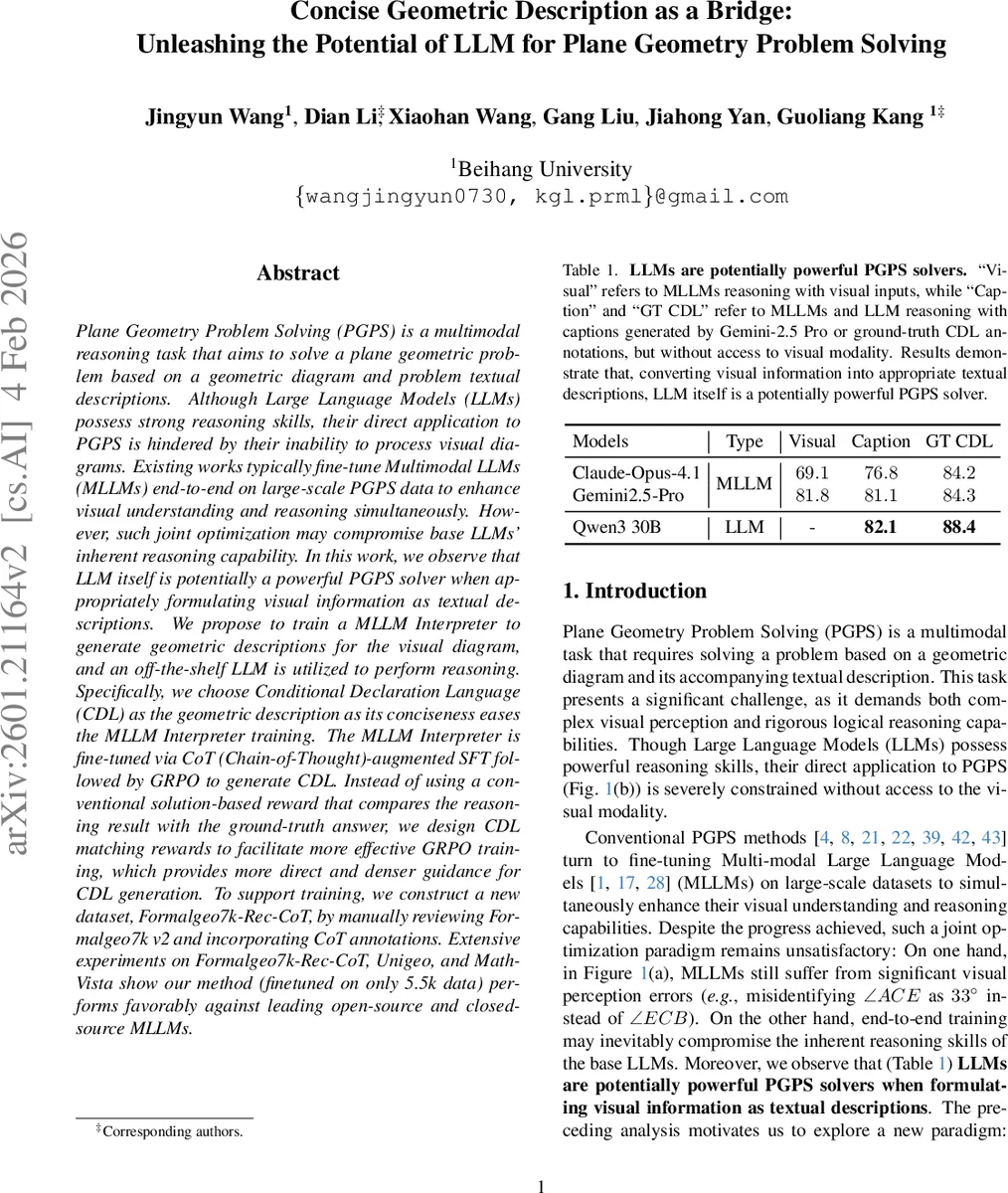

Plane Geometry Problem Solving (PGPS) is a multimodal reasoning task that aims to solve a plane geometric problem based on a geometric diagram and problem textual descriptions. Although Large Language Models (LLMs) possess strong reasoning skills, their direct application to PGPS is hindered by their inability to process visual diagrams. Existing works typically fine-tune Multimodal LLMs (MLLMs) end-to-end on large-scale PGPS data to enhance visual understanding and reasoning simultaneously. However, such joint optimization may compromise base LLMs’ inherent reasoning capability. In this work, we observe that LLM itself is potentially a powerful PGPS solver when appropriately formulating visual information as textual descriptions. We propose to train a MLLM Interpreter to generate geometric descriptions for the visual diagram, and an off-the-shelf LLM is utilized to perform reasoning. Specifically, we choose Conditional Declaration Language (CDL) as the geometric description as its conciseness eases the MLLM Interpreter training. The MLLM Interpreter is fine-tuned via CoT (Chain-of-Thought)-augmented SFT followed by GRPO to generate CDL. Instead of using a conventional solution-based reward that compares the reasoning result with the ground-truth answer, we design CDL matching rewards to facilitate more effective GRPO training, which provides more direct and denser guidance for CDL generation. To support training, we construct a new dataset, Formalgeo7k-Rec-CoT, by manually reviewing Formalgeo7k v2 and incorporating CoT annotations. Extensive experiments on Formalgeo7k-Rec-CoT, Unigeo, and MathVista show our method (finetuned on only 5.5k data) performs favorably against leading open-source and closed-source MLLMs.

💡 Research Summary

Plane Geometry Problem Solving (PGPS) requires a model to understand a geometric diagram and a textual problem statement, then produce a correct answer. Existing approaches typically fine‑tune multimodal large language models (MLLMs) end‑to‑end on large PGPS datasets. While this improves visual perception, it also entangles visual learning with the base LLM’s reasoning ability, often degrading the latter and still suffering from visual errors (e.g., mis‑identifying angles).

The authors observe that a pure LLM can be a powerful PGPS solver if the visual information is first transformed into an appropriate textual representation. They therefore propose a two‑stage paradigm: (1) train a MLLM Interpreter that converts a diagram into a concise, structured description called Conditional Declaration Language (CDL), and (2) feed the generated CDL to an off‑the‑shelf LLM (e.g., Claude‑Opus‑4.1, GPT‑4) which performs the reasoning entirely in text.

Why CDL?

CDL consists of three families of statements:

- ConsCDL – construction primitives such as

Shape,Collinear,Cocircular. - ImgCDL – geometric relations derived from the image (e.g.,

Equal(MeasureOfAngle(EAC),95)). - TextCDL – algebraic or textual constraints from the problem statement.

Compared with free‑form natural‑language captions, CDL is highly compact and formally defined, drastically shrinking the output search space and making it easier for the interpreter to learn.

Training the Interpreter

The authors build a high‑quality dataset named Formalgeo7k‑Rec‑CoT. Starting from FormalGeo7k v2, they manually correct diagram‑question mismatches and erroneous CDL annotations (four annotators, double‑review). They then generate Chain‑of‑Thought (CoT) sequences automatically from the CDL using a Python parser; each training example follows the format: <think> …CoT… </think> <cdl> …CDL… </cdl>.

Training proceeds in two stages:

-

CoT‑augmented Supervised Fine‑Tuning (SFT) – The interpreter (initialized from open‑source vision‑language models such as Qwen 2.5‑VL or Qwen 3‑VL) is fine‑tuned to predict both the CoT and the final CDL given the diagram. This stage equips the model with step‑by‑step reasoning but alone yields sub‑optimal CDL quality.

-

Group Relative Policy Optimization (GRPO) with CDL Matching Rewards – In the RL stage, the model samples a set of candidate (CoT, CDL) pairs, evaluates each with a CDL matching reward that measures token‑level recall and precision for ConsCDL, ImgCDL, and TextCDL separately, plus a format reward. Unlike conventional solution‑based rewards that compare the final numeric answer, these dense rewards give immediate feedback on each piece of the description, encouraging completeness while penalizing duplication or incorrect statements. GRPO updates the policy using advantage‑normalized scores and a KL‑penalty to keep the new policy close to the reference.

LLM Solver

After the interpreter is trained, it generates CDL for any new diagram. The CDL is fed directly to a standard LLM, which solves the problem using its internal chain‑of‑thought reasoning. No visual module is needed at inference time, and the LLM’s original reasoning capability remains untouched.

Experiments

The method is evaluated on three benchmarks: Formalgeo7k‑Rec‑CoT, UniGeo, and the geometry subset of MathVista. Training uses only 5.5 k annotated examples. Results show:

- The proposed approach outperforms all open‑source MLLMs (including Gemini‑2.5‑Pro, Qwen‑3‑30B) and achieves performance comparable to leading closed‑source models.

- When only captions generated by Gemini‑2.5‑Pro are provided (no CDL), LLMs still reach ~80 % accuracy, confirming that LLMs can solve PGPS once visual information is expressed textually.

- Error analysis reveals a dramatic reduction in visual perception mistakes, while logical reasoning errors remain at the baseline LLM level.

Contributions

- Demonstrates that a vanilla LLM becomes a strong PGPS solver when supplied with concise geometric descriptions.

- Introduces CDL as a compact, formal bridge and designs a two‑stage training pipeline (CoT‑augmented SFT + GRPO with matching rewards) that efficiently learns the interpreter with limited data.

- Provides a cleaned, CoT‑annotated dataset (Formalgeo7k‑Rec‑CoT) for the community.

- Shows that only 5.5 k training samples suffice to rival much larger multimodal models.

Limitations & Future Work

The current CDL is tailored to planar Euclidean geometry; extending to 3‑D geometry, non‑Euclidean spaces, or other scientific domains will require new declarative languages. The interpreter may still produce incomplete CDL; integrating a verification module or iterative refinement could further improve robustness. Finally, scaling the dataset and testing under noisy or partially occluded diagrams are promising directions.

Overall, the paper proposes a clean separation of visual perception and textual reasoning, leverages a structured description language, and introduces dense reward‑driven RL to train a lightweight interpreter, thereby unlocking the full potential of existing LLMs for plane geometry problem solving.

Comments & Academic Discussion

Loading comments...

Leave a Comment