DEEPMED: Building a Medical DeepResearch Agent via Multi-hop Med-Search Data and Turn-Controlled Agentic Training & Inference

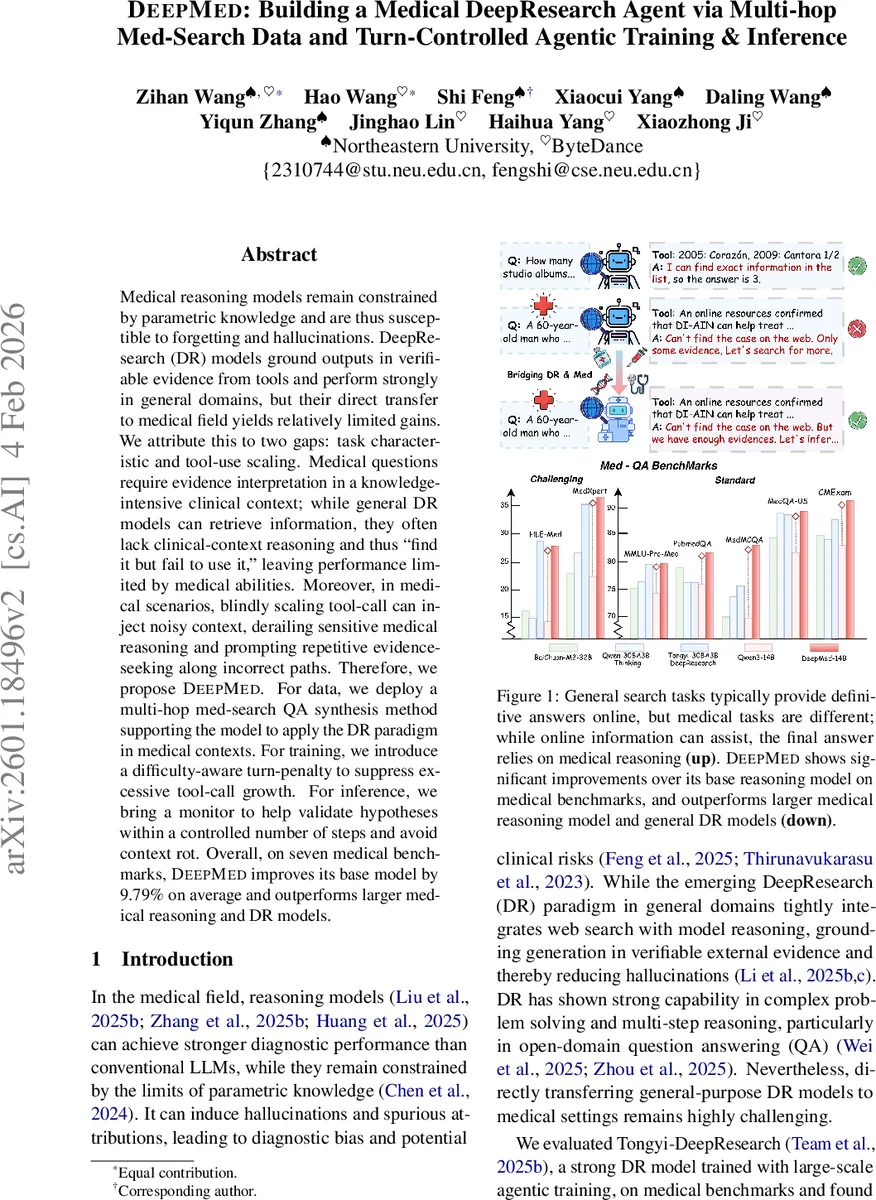

Medical reasoning models remain constrained by parametric knowledge and are thus susceptible to forgetting and hallucinations. DeepResearch (DR) models ground outputs in verifiable evidence from tools and perform strongly in general domains, but their direct transfer to medical field yields relatively limited gains. We attribute this to two gaps: task characteristic and tool-use scaling. Medical questions require evidence interpretation in a knowledge-intensive clinical context; while general DR models can retrieve information, they often lack clinical-context reasoning and thus “find it but fail to use it,” leaving performance limited by medical abilities. Moreover, in medical scenarios, blindly scaling tool-call can inject noisy context, derailing sensitive medical reasoning and prompting repetitive evidence-seeking along incorrect paths. Therefore, we propose DeepMed. For data, we deploy a multi-hop med-search QA synthesis method supporting the model to apply the DR paradigm in medical contexts. For training, we introduce a difficulty-aware turn-penalty to suppress excessive tool-call growth. For inference, we bring a monitor to help validate hypotheses within a controlled number of steps and avoid context rot. Overall, on seven medical benchmarks, DeepMed improves its base model by 9.79% on average and outperforms larger medical reasoning and DR models.

💡 Research Summary

DeepMed is a medical‑focused DeepResearch (DR) agent that bridges the gap between general‑purpose DR models and the demanding requirements of clinical reasoning. The authors first identify two fundamental mismatches that hinder the direct transfer of DR to medicine: (1) task characteristic mismatch – medical questions require not only factual retrieval but also interpretation of clinical implications, weighing conflicting evidence, and applying medical priors; general DR models tend to “find it but fail to use it.” (2) tool‑scaling profit mismatch – while increasing the number of tool calls improves performance on open‑domain QA, in medicine excessive searching injects noisy context, accelerates “context rot,” and can trigger an “over‑evidence” loop where the model repeatedly validates already‑known information, wasting steps and degrading accuracy.

To address these issues, DeepMed introduces three core components. First, a multi‑hop medical search QA synthesis pipeline builds web‑derived entity chains (e.g., disease → symptom → treatment) and then obscures entity names, forcing the model to reconstruct the chain via tool calls. Chain length and obfuscation level are varied to control difficulty, and strong LLMs (GPT‑5, Gemini‑2.5‑pro) filter for logical consistency and factual correctness. Second, a difficulty‑aware turn‑penalty is incorporated into the Agentic Reinforcement Learning (ARL) stage. The reward function penalizes overly long successful rollouts (λ·r_turn), encouraging minimal tool usage on easy samples while allowing moderate expansion on hard samples. Third, an over‑evidence monitor is deployed at inference time: when the model begins to repeat the same hypothesis validation beyond a predefined turn budget, the monitor halts further tool calls and forces answer generation, effectively breaking the redundant verification loop.

Training proceeds in two stages. In the Agentic Supervised Fine‑Tuning (ASFT) stage, the model learns to interleave reasoning (

Evaluation on seven medical benchmarks—including standard USMLE‑style tests and harder diagnostic QA sets—shows that DeepMed improves over its base model (Qwen‑3‑14B) by an average of 9.79 % absolute accuracy, with a 13.92 % gain on the two most challenging datasets. It outperforms larger medical reasoning models (e.g., HuatuoGPT‑o1, AlphaMed) and general DR agents (e.g., Tongyi‑DeepResearch) despite having fewer parameters. Ablation studies confirm that the multi‑hop data, turn‑penalty, and over‑evidence monitor each contribute significantly to reducing hallucinations and preventing context rot.

In summary, DeepMed demonstrates that a carefully engineered combination of multi‑hop medical search data, difficulty‑aware tool‑use regulation, and runtime monitoring can adapt the DeepResearch paradigm to the high‑stakes domain of medicine. This work not only advances the state of the art in medical AI but also provides a blueprint for extending tool‑augmented LLMs to other safety‑critical fields such as law, finance, and policy.

Comments & Academic Discussion

Loading comments...

Leave a Comment